
Welcome to Part 2 of our blog series on the microservices-based architecture behind the powerful, unbeatable performance of Netflix.

In the first part, we discussed the system architecture of Netflix and decoded how it works, the reasons behind the unprecedented scalability of Netflix, its playback architecture, the Netflix backend architecture, and more.

Now we move on to Part 2, where we take a closer look at the components introduced in Part 1. We will analyze how Netflix delivers world-class availability and scalability to millions of users across the world, and how these components satisfy the demanding design goals of the Netflix platform for stunning results and a delightful user experience.

There is a reason why Netflix’s 167 million users spend more than 165 million hours on the platform every day, across 4,000 movies and 47,000 episodes of web series.

And there is a reason why Netflix delivers stunning performance day after day, without fail.

Let’s dive straight into the components of Netflix’s microservices architecture, and decode its power and capabilities for a clear understanding.

Secret Behind Amazing Performance of Client

The team behind Netflix has invested heavily in making its client-side application powerful, seamless, and scalable. Netflix runs smoothly on smartphones, computers, laptops, and tablets, and the reason behind this is a Software Development Kit (SDK) called the Netflix Ready Device Platform (NRDP).

In fact, on some smart TVs that don’t have a dedicated Netflix client, Netflix controls performance and output through this SDK.

NRDP has to be downloaded and installed in a device’s environment before Netflix can run there, and this happens automatically in the background.

Hence, we can safely say that the Netflix Ready Device Platform (NRDP) is the secret behind Netflix’s amazing device capability and client-side performance.

Netflix Client Structural Component: How Does It Work?

Here is the illustration of the Netflix Client Structural Component:

This is how it works:

- As mentioned in Part 1, there are two types of connection requests to the server: Playback and Discovery. The Client apps keep these two types of requests separate, so that neither interrupts the other.

- For Playback requests, the Client uses the NTBA protocol, which provides stronger security and data integrity across the OCA server layers and removes the latency caused by SSL/TLS handshakes on new connections.

- During Playback, the Client app automatically switches to a different OCA server or lowers the video quality when the Internet connection slows down, so that the end user keeps enjoying a seamless viewing experience.

- Hence, the secret behind the uninterrupted viewing experience for the Netflix user is the Netflix Platform SDK installed in the Client app. This SDK continuously monitors network connections and Internet speed, and switches to another OCA server when the current one has issues or is overloaded.
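To make the fallback behaviour above more concrete, here is a minimal Python sketch of how a client could pick an OCA server and a video quality for the next chunk of video. It is not Netflix’s SDK code: the `OcaServer` type, the bitrate ladder, and the 0.8 safety margin are all assumptions for illustration.

```python
from __future__ import annotations

from dataclasses import dataclass

# Hypothetical bitrate ladder in kbps; Netflix's real ladders are not given here.
BITRATE_LADDER_KBPS = [235, 750, 1750, 3000, 5800]


@dataclass
class OcaServer:
    name: str
    healthy: bool
    throughput_kbps: float  # recently measured download speed from this server


def pick_server(servers: list[OcaServer]) -> OcaServer | None:
    """Prefer the healthy server with the highest measured throughput."""
    healthy = [s for s in servers if s.healthy]
    return max(healthy, key=lambda s: s.throughput_kbps, default=None)


def pick_bitrate(throughput_kbps: float, safety: float = 0.8) -> int:
    """Highest ladder rung that fits within a safety margin of the throughput."""
    budget = throughput_kbps * safety
    fitting = [b for b in BITRATE_LADDER_KBPS if b <= budget]
    # On a very slow connection, fall back to the lowest rung instead of stalling.
    return fitting[-1] if fitting else BITRATE_LADDER_KBPS[0]


def plan_next_segment(servers: list[OcaServer]) -> tuple[str, int]:
    """Choose the server and bitrate for the next chunk of video."""
    server = pick_server(servers)
    if server is None:
        raise RuntimeError("no healthy OCA server available")
    return server.name, pick_bitrate(server.throughput_kbps)
```

For instance, given an unhealthy server measuring 9000 kbps and a healthy one measuring 4000 kbps, `plan_next_segment` skips the unhealthy server and returns 3000 kbps, the highest rung below 80% of 4000 kbps. The margin leaves headroom for throughput dips, trading a little quality for fewer stalls.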

Decoding the Netflix Backend Architecture

API Gateway Services

To resolve all requests from the Clients, the API Gateway Service component works with AWS Load Balancers, which distribute the incoming traffic for optimal performance.

When service requests and the number of end users spike suddenly, the API Gateway Service component can be scaled out across multiple AWS EC2 instances.
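As a rough illustration of scaling out, the sketch below assumes a simple proportional rule (similar in spirit to AWS target-tracking scaling, but not its exact algorithm) and a round-robin spread of requests as a simplified stand-in for the load balancer. The function names, the requests-per-instance target, and the fleet limits are hypothetical.

```python
import math
from itertools import cycle


def desired_instances(current: int, requests_per_instance: float,
                      target_per_instance: float,
                      min_size: int = 2, max_size: int = 100) -> int:
    """Resize the fleet so each instance serves about target_per_instance req/s."""
    if target_per_instance <= 0:
        raise ValueError("target_per_instance must be positive")
    total_load = current * requests_per_instance
    needed = math.ceil(total_load / target_per_instance)
    # Clamp to the fleet limits so scaling never goes to zero or runs away.
    return max(min_size, min(max_size, needed))


def spread(requests: list, instances: list) -> list:
    """Round-robin assignment of requests to gateway instances."""
    return list(zip(requests, cycle(instances)))
```

With 4 instances each handling 1500 requests per second and a target of 1000, `desired_instances(4, 1500, 1000)` returns 6, and the load balancer would then spread traffic over the larger fleet.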

Here is a visual representation of Zuul, an open-source API Gateway created by Netflix engineers to handle Client requests at run time:

- Inbound Filters are deployed to authenticate, route, and decorate incoming requests.

- Endpoint Filters either return a static response or forward the request to the Origin (the Application API). Outbound Filters then process the response on its way back, for example to add metrics or custom headers.

- Zuul, developed by Netflix, can also swiftly route traffic for various purposes, such as onboarding new Application APIs, running load tests, and more.
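The filter flow described above can be sketched as a small pipeline in Python. This is a toy model of the idea (inbound filters, an endpoint, outbound filters), not Zuul’s actual Java API; the `Request`/`Response` types, the filter names, and the `/static/` path convention are assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Request:
    path: str
    headers: dict = field(default_factory=dict)
    context: dict = field(default_factory=dict)


@dataclass
class Response:
    status: int
    body: str
    headers: dict = field(default_factory=dict)


# Inbound filters may short-circuit by returning a Response; None means "continue".
InboundFilter = Callable[[Request], Optional[Response]]
OutboundFilter = Callable[[Request, Response], Response]


def authenticate(req: Request) -> Optional[Response]:
    if "Authorization" not in req.headers:
        return Response(401, "unauthorized")
    return None


def decorate(req: Request) -> Optional[Response]:
    req.context["device"] = req.headers.get("X-Device", "unknown")
    return None


def endpoint(req: Request) -> Response:
    if req.path.startswith("/static/"):
        return Response(200, "static:" + req.path)  # answered by the gateway itself
    # Stand-in for proxying the request to the origin (the Application API).
    return Response(200, f"origin handled {req.path} for {req.context['device']}")


def add_server_header(req: Request, resp: Response) -> Response:
    resp.headers["X-Served-By"] = "gateway-sketch"
    return resp


def handle(req: Request, inbound: list[InboundFilter],
           endpoint_fn: Callable[[Request], Response],
           outbound: list[OutboundFilter]) -> Response:
    resp = None
    for f in inbound:
        resp = f(req)
        if resp is not None:
            break  # an inbound filter answered early, e.g. a failed auth check
    if resp is None:
        resp = endpoint_fn(req)
    for f in outbound:
        resp = f(req, resp)
    return resp
```

In this sketch, outbound filters run on every response, including ones produced early by a failed authentication check.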

Understanding Application API

Netflix engineers have spent a lot of time and resources developing a robust Application API, which acts as an orchestration layer for the Netflix microservices. This API prioritizes service requests by relevance and importance, calls the microservices available to serve them, and assembles an appropriate response in a split second.

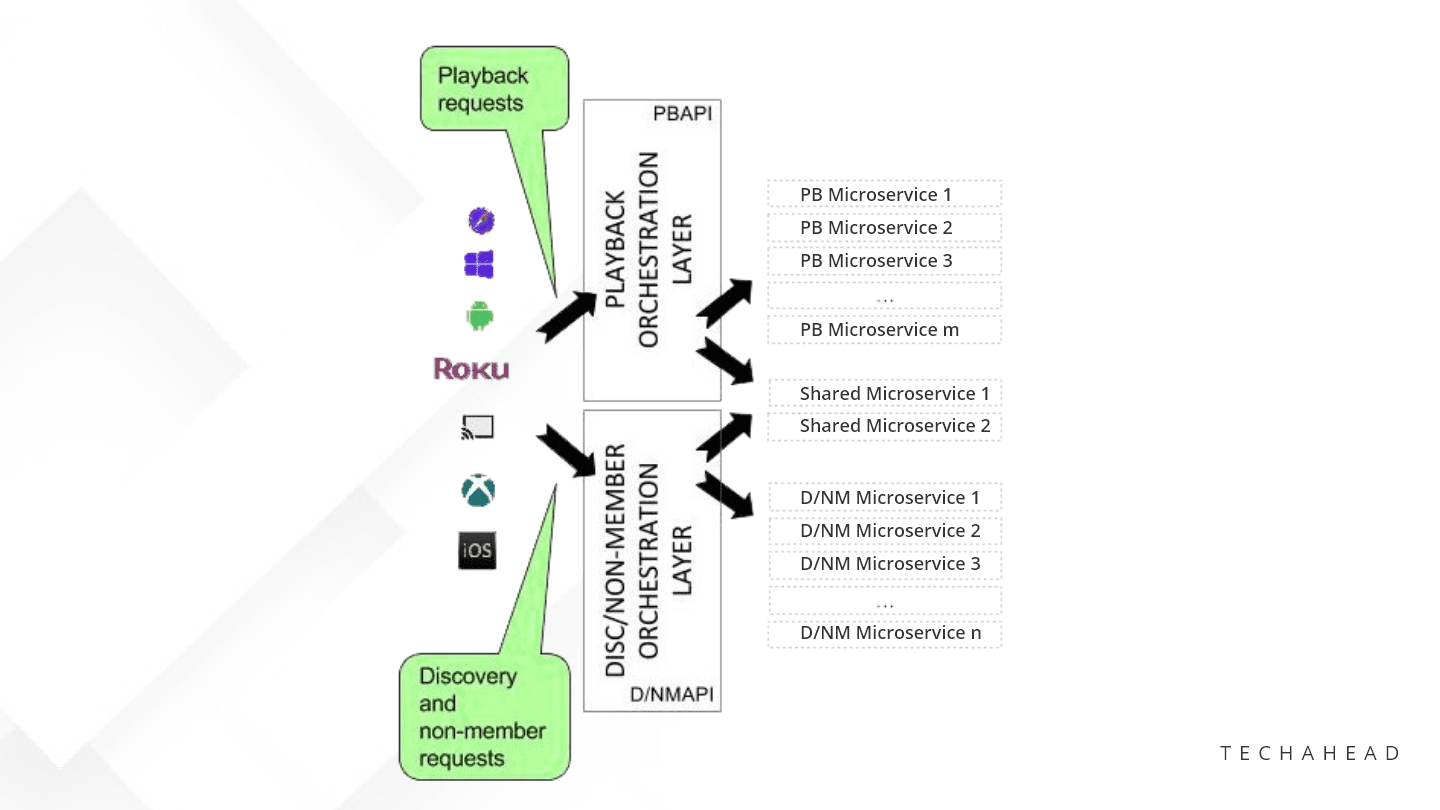
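An orchestration layer like this typically calls several independent microservices at once and stitches the results into one response. The Python sketch below shows that fan-out pattern with `asyncio.gather`; the three services, their payloads, and the 0.5-second timeout are hypothetical.

```python
import asyncio


# Hypothetical downstream microservices; the names and payloads are illustrative.
async def user_profile(user_id: str) -> dict:
    await asyncio.sleep(0.01)
    return {"user": user_id, "plan": "standard"}


async def recommendations(user_id: str) -> list:
    await asyncio.sleep(0.02)
    return ["title-1", "title-2"]


async def continue_watching(user_id: str) -> list:
    await asyncio.sleep(0.01)
    raise ConnectionError("service overloaded")  # simulate a failing dependency


async def home_page(user_id: str, timeout: float = 0.5) -> dict:
    """Fan out to independent services concurrently, then assemble one response."""
    calls = {
        "profile": user_profile(user_id),
        "rows": recommendations(user_id),
        "continue_watching": continue_watching(user_id),
    }
    results = await asyncio.gather(
        *(asyncio.wait_for(c, timeout) for c in calls.values()),
        return_exceptions=True,  # failures come back as values instead of raising
    )
    # Degrade gracefully: a failed or slow section is left empty.
    return {
        key: None if isinstance(result, BaseException) else result
        for key, result in zip(calls, results)
    }
```

Because `return_exceptions=True` is set, the failing `continue_watching` call yields `None` for its section while the rest of the page is still returned, and the total latency is roughly that of the slowest call rather than the sum of all three.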

This is how Playback and Discovery Requests are separated by Application API:

As of now, the Application APIs are defined under three categories:

Signup API: Only for non-members, prompting them to sign up, choose a free trial, make payments, etc.

Discovery API: Used for searching, recommending new content, and other browsing requests.

Play API: Used for streaming videos and for license requests that authorize viewing.
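A simplified way to picture how requests end up at one of these three APIs is a small dispatcher. The `Api` enum and the path prefixes below are invented for illustration; the article does not describe Netflix’s actual routes.

```python
from enum import Enum


class Api(Enum):
    SIGNUP = "signup"
    DISCOVERY = "discovery"
    PLAY = "play"


# Hypothetical path prefixes, not Netflix's real endpoints.
PLAY_PREFIXES = ("/play", "/license")
DISCOVERY_PREFIXES = ("/search", "/browse", "/recommendations")


def route(path: str, is_member: bool) -> Api:
    """Pick the Application API category for a request."""
    if not is_member:
        return Api.SIGNUP  # non-members only reach the signup flow
    if path.startswith(PLAY_PREFIXES):  # str.startswith accepts a tuple
        return Api.PLAY
    if path.startswith(DISCOVERY_PREFIXES):
        return Api.DISCOVERY
    raise ValueError(f"no API handles {path!r}")
```

Keeping Playback and Discovery traffic on separate handlers means a surge in browsing cannot starve the requests that keep videos playing.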

Understanding Microservices Components

The main reason why Netflix consistently delivers powerful performance is its microservices-based architecture, which tolerates failures and optimizes performance.

Microservices are a suite of small services, each running in its own process, in parallel with the others, and communicating through lightweight protocols.

This is how microservices-based architecture is implemented by Netflix:

- These microservices can operate on their own or call other microservices via REST or gRPC.

- Every microservice has its own data store and a small in-memory cache of recent activity. Through EVCache, a distributed caching layer built on memcached, Netflix caches frequently accessed data so that microservices can act on it quickly.
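The caching described above follows a cache-aside pattern. In the sketch below, a plain dict stands in for a shared distributed cache such as EVCache (the real EVCache client API is not used), and `ViewingHistoryService` is a hypothetical microservice that checks its local cache, then the shared cache, and only then its own data store.

```python
import time


class LocalTTLCache:
    """Small per-instance cache whose entries expire after ttl_seconds."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._data = {}

    def get(self, key):
        item = self._data.get(key)
        if item is None:
            return None
        value, expires_at = item
        if self.clock() >= expires_at:
            del self._data[key]  # expired: drop it and report a miss
            return None
        return value

    def set(self, key, value):
        self._data[key] = (value, self.clock() + self.ttl)


class ViewingHistoryService:
    """Hypothetical microservice: local cache, then shared cache, then its own store."""

    def __init__(self, store: dict, shared_cache: dict):
        self.store = store  # this service's own data store (a dict in this sketch)
        self.shared = shared_cache  # stand-in for a distributed cache such as EVCache
        self.local = LocalTTLCache(ttl_seconds=5)
        self.store_reads = 0

    def history(self, user_id: str):
        value = self.local.get(user_id)
        if value is not None:
            return value
        value = self.shared.get(user_id)
        if value is None:
            self.store_reads += 1
            value = self.store[user_id]
            self.shared[user_id] = value  # populate the shared cache (cache-aside)
        self.local.set(user_id, value)
        return value
```

A second service instance that shares the same cache gets a hit without touching the data store, which is the main benefit of a shared caching layer.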

Understanding Data Stores

When Netflix launched, it ran its own databases and servers, which was not a feasible solution in the long term. This is why Netflix migrated to Amazon Web Services (AWS), and during the migration it made heavy use of data stores, both SQL and NoSQL, for different purposes.

Here are the different data stores Netflix has used over the years:

- MySQL is used for managing movie and web series titles, as well as transactions and billing-related activities.

- Hadoop is used for big data processing.

- Elasticsearch is deployed for searching titles.

- Cassandra, a high-performance, distributed, wide-column NoSQL data store, handles large volumes of data requests with no single point of failure.
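Cassandra avoids a single point of failure by placing copies of each row on several nodes around a token ring. The Python sketch below imitates that idea with consistent hashing and a replication factor of 3, similar to Cassandra’s SimpleStrategy. It uses MD5 as a stand-in hash (Cassandra’s default partitioner uses Murmur3), and the `TokenRing` class and virtual-node count are assumptions for illustration.

```python
import bisect
import hashlib


def token(key: str) -> int:
    # MD5 as a stand-in hash; Cassandra's default partitioner uses Murmur3.
    return int.from_bytes(hashlib.md5(key.encode()).digest()[:8], "big")


class TokenRing:
    def __init__(self, nodes: list, vnodes: int = 8):
        # Each node owns several points ("virtual nodes") on the ring.
        self._ring = sorted((token(f"{n}#{i}"), n) for n in nodes for i in range(vnodes))
        self._tokens = [t for t, _ in self._ring]
        self.nodes = set(nodes)

    def replicas(self, key: str, rf: int = 3) -> list:
        """Walk clockwise from the key's token, collecting rf distinct nodes."""
        if rf > len(self.nodes):
            raise ValueError("replication factor exceeds node count")
        i = bisect.bisect_right(self._tokens, token(key)) % len(self._ring)
        chosen = []
        while len(chosen) < rf:
            node = self._ring[i][1]
            if node not in chosen:
                chosen.append(node)
            i = (i + 1) % len(self._ring)  # wrap around the ring
        return chosen

    def read_nodes(self, key: str, down: set, rf: int = 3) -> list:
        """Replicas that can still serve the key while some nodes are down."""
        return [n for n in self.replicas(key, rf) if n not in down]
```

If one replica’s node is down, the other two can still serve the key, so losing a single machine does not make any data unavailable.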

Understanding Stream Processing Pipeline

The backbone of Netflix’s business analytics and personalized recommendations is the Stream Processing Data Pipeline, as visualized here:

This Stream Processing Pipeline handles critical work for the microservices: it produces, collects, processes, and aggregates microservice events and moves them to other data processors in real time.

Some interesting highlights:

- This stream processing pipeline handles trillions of events and petabytes of data every single day.

- It allows the platform to scale quickly when the number of active users spikes suddenly.

- The Router module routes events to different applications or data sinks, while Kafka routes messages and buffers them for downstream systems.

- With Stream Processing as a Service (SPaaS), data engineers can build their own stream processing applications, while the platform takes care of scalability and performance.
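The routing and buffering roles described above can be pictured with a tiny in-memory router. This is not Netflix’s Router module or the Kafka client API; the `Router` class, the rule predicates, the sink names, and the batch size are assumptions, and a `deque` per sink stands in for Kafka’s buffering.

```python
from collections import defaultdict, deque


class Router:
    """Routes each event to every sink whose rule matches, buffering per sink."""

    def __init__(self, batch_size: int = 3):
        self.rules = []  # list of (predicate, sink_name)
        self.buffers = defaultdict(deque)  # per-sink buffer, standing in for Kafka
        self.delivered = defaultdict(list)  # batches written to each sink
        self.batch_size = batch_size

    def add_rule(self, predicate, sink: str) -> None:
        self.rules.append((predicate, sink))

    def publish(self, event: dict) -> None:
        for predicate, sink in self.rules:
            if predicate(event):
                self.buffers[sink].append(event)
                if len(self.buffers[sink]) >= self.batch_size:
                    self.flush(sink)

    def flush(self, sink: str) -> None:
        buffer = self.buffers[sink]
        if buffer:
            self.delivered[sink].append(list(buffer))  # one batched write
            buffer.clear()
```

Buffering lets a slow sink receive events in batches at its own pace, while one event can still fan out to several sinks, such as a data warehouse and a real-time consumer.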

Global Content Delivery Via Open Connect

No look at the components of Netflix’s microservices-based architecture is complete without Open Connect.

To store and deliver content to millions of users, Netflix built and operates Open Connect, its own global Content Delivery Network (CDN) with some stunning features.

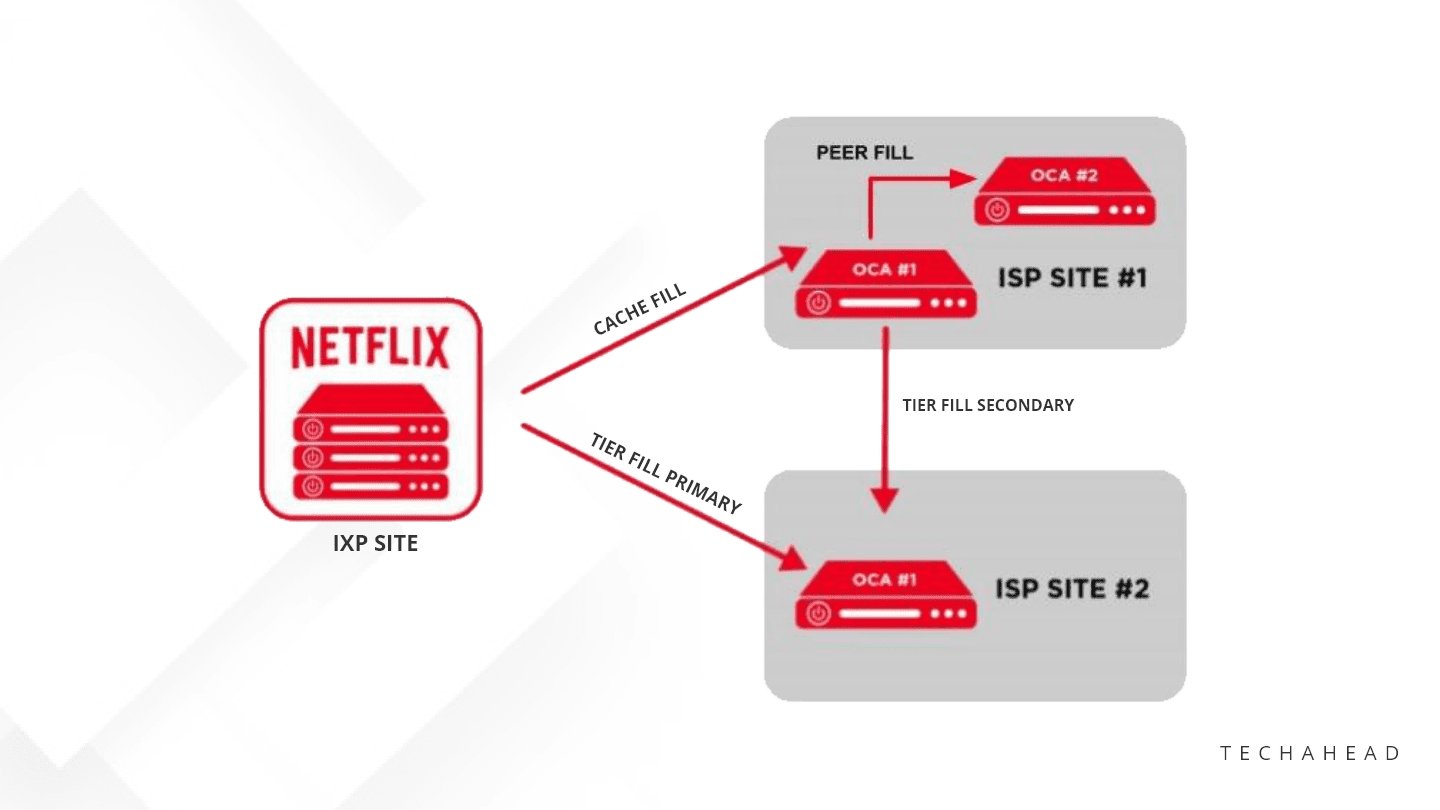

In fact, Open Connect is so important for performance that Netflix has partnered with leading Internet Service Providers (ISPs) and Internet Exchange Points (IXs or IXPs) to deploy Open Connect Appliances (OCAs) inside their networks.

This is how the deployment of OCAs works:

A few highlights of Open Connect:

- Open Connect Appliances (OCAs) are servers optimized for storing large video files and delivering them from ISP and IXP sites directly to subscribers’ devices (smart TVs, phones, laptops, tablets).

- These servers learn network health metrics from ISP and IXP data and use them to optimize performance.

- The OCAs also report which videos they store to the Open Connect Control Plane services on AWS.

- The Control Plane services use this data to serve each request from the fastest, most active OCAs that hold the file, ensuring the content is available instantly.

- When a new video file has been transcoded successfully and stored in Amazon S3, the control plane services transfer it to the OCA servers at IXP sites.

- These OCAs then use cache fill to transfer the files to OCA servers at ISP sites within their sub-network.

- When needed, OCA servers use peer fill to transfer video files to other OCA servers.
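The fill patterns above boil down to a choice of where a server should copy a missing file from. The sketch below assumes a simple preference order (a peer at the same site, then an upstream IXP server, then the origin copy in Amazon S3); the `Oca` type, the tier labels, and the returned strings are hypothetical and do not reflect Netflix’s actual fill logic.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Oca:
    name: str
    site: str
    tier: str  # "ixp" or "isp" in this sketch
    files: set = field(default_factory=set)


def fill_source(target: Oca, file_id: str, fleet: list[Oca]) -> str:
    """Pick where `target` should copy a missing file from (assumed order)."""
    if file_id in target.files:
        return "already-present"
    # Peer fill: another OCA at the same site already holds the file.
    for oca in fleet:
        if oca is not target and oca.site == target.site and file_id in oca.files:
            return f"peer:{oca.name}"
    # Cache fill: an ISP-site OCA pulls from an upstream IXP OCA.
    if target.tier == "isp":
        for oca in fleet:
            if oca.tier == "ixp" and file_id in oca.files:
                return f"upstream:{oca.name}"
    # Otherwise fall back to the origin copy in Amazon S3.
    return "origin:s3"
```

Preferring nearby copies keeps most fill traffic inside the ISP or IXP network and reserves trips to the origin for files no nearby server holds yet.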

Here is a visual representation of Fill Patterns by OCAs:

This concludes Part 2 of our blog series for understanding the microservices-based architecture of Netflix.

In the third and concluding part, we will look at the system as a whole and see how it works with respect to its design and architecture.

If you are looking for expert programmers and system architects who can design and develop similar platforms by deploying microservices-based architecture, then we at TechAhead can provide you with world-class solutions.

Schedule a no-obligation consultation with our Mobile App Engineers, and find out how our passionate and talented streaming app developers can launch your next disruptive mobile-based business and help you dominate your niche.