Most senior executives know their organizations are running AI in production. What they don’t know is whether those systems will survive the next 18 months of regulatory enforcement.

You’re navigating multiple frameworks simultaneously: EU AI Act, GDPR, HIPAA, the U.S. AI Executive Order, and NIST AI RMF. These don’t exist in isolation. When your AI touches multiple domains, the obligations stack, and the compliance burden compounds.

Key Takeaways

- The Math Doesn’t Work: If you start today, you have 16 months. Your compliance program needs 18-24. This explains the retrofit problem.

- Shadow AI Is Your Biggest Gap: 73% of compliance gaps surface in discovery, not implementation. Most organizations don’t know what AI they’re running.

- Retrofitting Costs 4-5x More: Pre-development compliance workshops cost weeks. Post-deployment remediation averages $2-4M per high-risk system. Build it right first.

- One Framework Can Satisfy All: You don’t need separate programs for EU AI Act, NIST, and ISO 42001. Learn the crosswalk strategy.

- Procurement Speed Matters More Than Penalties: Certified organizations close deals 40% faster. The business case isn’t avoiding fines; it’s winning contracts competitors can’t.

Here’s the exposure gap: according to Compliance Week’s 2026 survey, 83% of organizations are already using AI tools, but only 25% have implemented strong governance frameworks. That gap represents massive regulatory risk.

Most people get AI compliance wrong. It’s not a blocker; it’s strategic infrastructure that lets you move faster. Companies with mature governance tend to get approvals quickly, while those treating it as an add-on struggle through every procurement cycle and every security questionnaire.

As Nithya Das, General Manager of Governance at Diligent, puts it:

2026 will mark a turning point, with boards and executive teams institutionalizing AI governance as a core competency.

This blog isn’t another compliance think piece. It’s a working guide for business leaders who need AI programs audit-ready before August 2, 2026, when full EU AI Act enforcement hits high-risk systems. That deadline matters because most compliance programs need 12-18 months to build properly.

Must Read: Organizational Readiness for Enterprise AI Adoption

Decoding Enterprise AI Compliance in 2026

Enterprise AI compliance in 2026 represents the combination of regulatory, security, privacy, and ethical controls that govern AI systems across their full AI lifecycle, from initial ideation and data sourcing to model training, deployment, continuous monitoring, and eventual decommissioning. This includes monitoring and controlling AI system behavior throughout the lifecycle to ensure compliance, safety, and risk management.

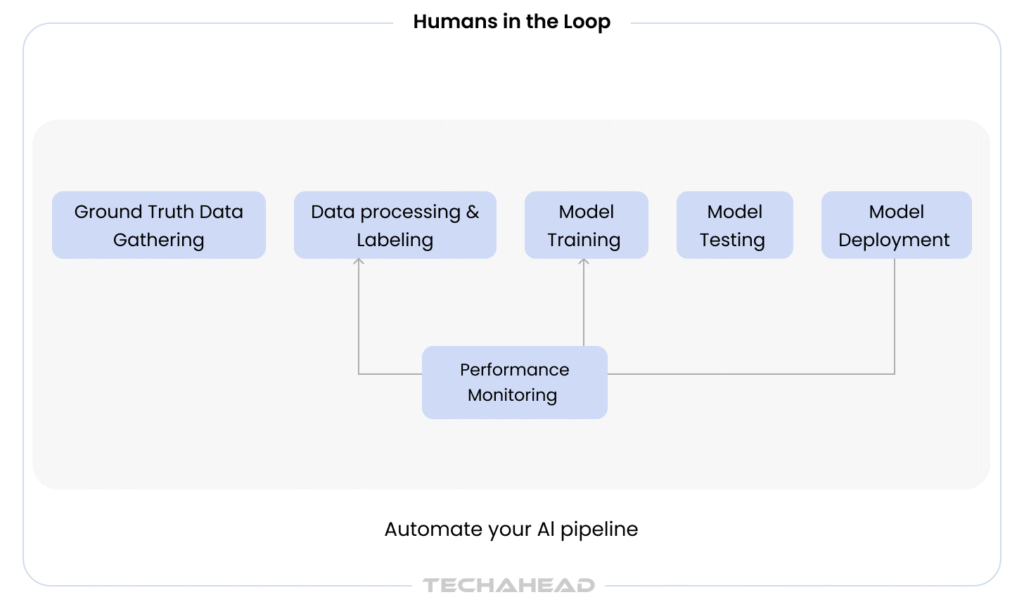

This goes far beyond generic IT compliance. Organizations must address AI models, data provenance, training pipelines, LLM prompts, human-in-the-loop workflows, and downstream integrations that could propagate risks across enterprise ecosystems. AI compliance ensures that AI systems operate within legal, ethical, and regulatory boundaries to prevent misuse, bias, or unintended harm, encompassing structured governance, continuous risk assessment, and strong security measures.

Bonus Read: Responsible & Ethical AI

Specific governance frameworks and laws include:

- EU AI Act and AI regulations across member states

- General Data Protection Regulation (GDPR), CCPA/CPRA, and data protection mandates (GDPR plays a central role in data privacy and is a cornerstone of AI compliance frameworks.)

- HIPAA, GLBA, PCI DSS for sector-specific requirements

- SOC 2, ISO/IEC 27001, and ISO/IEC 42001 for certifiable standards

- NIST AI Risk Management Framework for voluntary best practices

- Sectoral guidance from EBA, FCA, SEC, and FTC

Enterprise AI compliance spans four domains:

- Legal and regulatory requirements (permits, registrations, conformity assessments)

- Security and resilience (model security, access controls, incident response)

- Privacy and data protection (consent, minimization, data subject rights)

- Ethics and fundamental rights (fairness, explainability, human oversight mechanisms)

For mid-market to Fortune 500 organizations, AI compliance must align with existing GRC structures rather than becoming isolated. Enterprises ensure compliance with AI regulations through a multi-layered approach that integrates governance frameworks, technical controls, and continuous monitoring.

TechAhead’s ISO 42001:2023 certification demonstrates our AI governance maturity across 2,500+ platform launches. We embed compliance into clients’ existing GRC structures through our SOC 2 Type II and ISO 27001:2022 frameworks. Our 240+ engineers architect systems that map sector-specific obligations (HIPAA for healthcare, SR 11-7 for finance, EU AI Act for global deployments) to unified governance controls rather than fragmented compliance programs.

Why Enterprise AI Compliance Matters for Large Organizations

The EU AI Act employs a tiered penalty structure where non-compliance with prohibited AI practices can result in fines up to €35 million or 7% of global revenue, while violations of high-risk obligations can incur fines up to €15 million or 3% of global revenue. GDPR penalties cap at €20 million or 4% of global turnover. Enforcement focus is shifting from guidance to aggressive penalties in 2025-2026. In December 2025, a bipartisan coalition of 42 U.S. state attorneys general sent a letter to major AI companies demanding immediate safety safeguards for AI chatbots following reports of harmful interactions, with companies being required to confirm commitments by January 16, 2026.

AI compliance affects the legal, ethical, and operational aspects of enterprise AI deployment, so organizations need to prioritize it to avoid penalties and reputational harm. Failure to comply with AI regulations can lead to serious legal, financial, and reputational consequences, including regulatory fines, operational disruptions, and loss of market access.

Recommended: Enterprise AI Development Cost

Specific risk categories include:

- Financial penalties reaching 7% of global revenue

- Forced shutdown of non-compliant AI systems

- Injunctions blocking product launches

- Personal liability for executives in EU jurisdictions

Reputational damage manifests in eroded customer trust when AI is shown to be biased, opaque, or unsafe, particularly in credit scoring, hiring, or medical triage applications. The business operations impact extends beyond fines to competitive positioning.

Business enablement benefits for compliant enterprises include:

- Smoother procurement and security reviews via pre-vetted AI system inventory

- Faster board approval for AI investments

- Easier customer security questionnaires and RFPs

- Improved ability to enter restricted markets (EU financial services, U.S. healthcare)

The business case extends beyond risk mitigation. According to Deloitte’s 2026 State of AI in the Enterprise report, 66% of organizations report productivity and efficiency gains from AI adoption.

However, these benefits require governance infrastructure that allows AI to scale safely.

Investors and auditors increasingly expect evidence of structured enterprise AI governance in annual reports and audit cycles. By 2025-2026, demonstrating compliance will be table stakes for organizations deploying AI systems at scale.

Regulatory and Framework Landscape for Enterprise AI Compliance

The regulatory landscape demands strategic navigation. Enterprises operating globally face converging compliance obligations from the EU AI Act, U.S. federal frameworks like NIST AI RMF, and emerging state-level AI laws. The challenge isn’t just managing multiple regulations; it’s that these frameworks interact and compound. Identifying and mapping specific regulatory obligations for each AI deployment is essential to ensure comprehensive compliance coverage.

Table: EU AI Act Risk Tiers & Penalties

| Risk Tier | Examples | Requirements | Penalties |
| --- | --- | --- | --- |
| Unacceptable | Social scoring, manipulative AI | Banned outright | €35M or 7% revenue |
| High-risk | HR, credit scoring, biometrics | Conformity assessments, FRIAs, human oversight | €15M or 3% revenue |
| Limited-risk | Chatbots | Transparency disclosures | Lower penalties |
| Minimal | General-purpose AI | Basic documentation | Minimal |
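As a rough illustration of how these ceilings scale with company size, the sketch below computes the maximum exposure for the two upper tiers. It assumes the applicable cap is the higher of the fixed amount and the revenue share, which is how the Act is generally read for larger undertakings (smaller companies may be treated differently). The names `TIERS` and `penalty_ceiling` are illustrative, not part of any official tooling.

```python
# Penalty ceilings from the table: (fixed cap in EUR, share of global revenue in percent).
TIERS = {
    "unacceptable": (35_000_000, 7),
    "high_risk": (15_000_000, 3),
}


def penalty_ceiling(global_revenue_eur: int, tier: str) -> float:
    """Return the maximum fine for a tier, assuming the higher of the two caps applies."""
    fixed_cap, share_pct = TIERS[tier]
    return max(fixed_cap, global_revenue_eur * share_pct / 100)


# A company with EUR 2 billion in global revenue:
print(penalty_ceiling(2_000_000_000, "unacceptable"))  # 140000000.0
print(penalty_ceiling(2_000_000_000, "high_risk"))     # 60000000.0
```

For a smaller company the fixed amount dominates: at EUR 100 million in revenue, 3% is only EUR 3 million, so the high-risk ceiling stays at EUR 15 million.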

An AI system processing EU customer data while deployed from U.S. infrastructure faces both GDPR and EU AI Act requirements alongside potential state law obligations. Multinational enterprises should design AI compliance programs around the highest regulatory standard they face, ensuring one governance framework satisfies multiple jurisdictions rather than fragmenting compliance across regions.

EU AI Act

The EU AI Act classifies AI systems into four risk tiers based on potential harm:

- Unacceptable risk: Banned outright (social scoring, manipulative AI) with penalties up to €35 million or 7% of global turnover

- High-risk AI: Requires conformity assessments and Fundamental Rights Impact Assessments (FRIAs) by August 2, 2026

- Limited-risk: Transparency disclosures required (e.g., chatbot identification)

- Minimal risk: Most general-purpose AI with basic documentation requirements

High-risk systems face strict operational obligations including risk assessments, logging for traceability, and mandatory human oversight. Annex III defines high-risk categories covering HR recruitment, creditworthiness assessment, critical infrastructure management, and biometric identification.

Related Read: Why Does Enterprise AI Need Audit Trails

For enterprise “deployers” using third-party AI, compliance obligations include:

- Conducting risk assessments before deployment

- Ensuring continuous oversight during operations

- Maintaining audit logs for 6-24 months

- Notifying workers when emotion recognition AI is used in workplaces

- Conducting FRIAs that integrate with existing privacy impact assessments

TechAhead has navigated these requirements across multiple enterprise deployments. When we engineered the digital transformation for AXA, one of the world’s largest insurance firms, we embedded compliance-by-design principles throughout the AI-powered claims processing and risk assessment systems. This meant building audit trails into model decision workflows, implementing explainability layers for automated underwriting, and creating comprehensive documentation frameworks that satisfied both SOC 2 Type II and emerging EU AI Act requirements.

GDPR and Data Protection Laws

Organizations must adhere to overarching data privacy laws like General Data Protection Regulation (GDPR) and CCPA, as well as industry-specific regulations. GDPR layers data minimization, consent, and DPIAs for high-risk processing, intersecting with AI via “right to explanation” debates under Article 22 for automated decisions. GDPR is a foundational element in AI compliance frameworks, setting requirements for data privacy and AI system design.

Must Read: CCPA Vs. GDPR (What’s the Difference)

U.S. AI Executive Order and NIST AI RMF

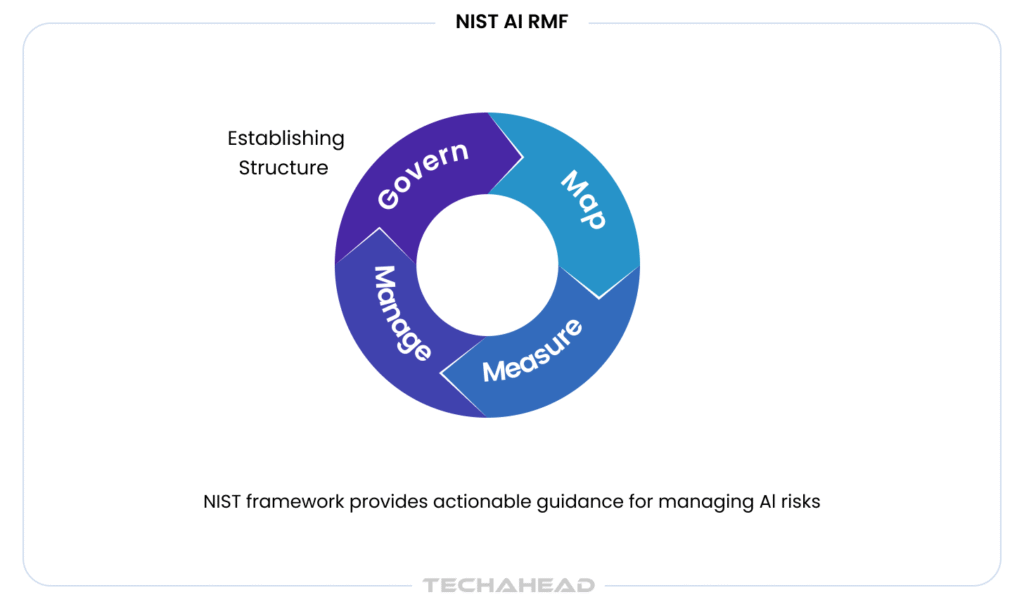

The NIST AI Risk Management Framework provides guidelines for managing risks associated with AI systems, focusing on enhancing trust and reliability in AI deployments. It structures governance around four functions: GOVERN (policy, culture, accountability), MAP (contextualize risks), MEASURE (performance and fairness metrics), and MANAGE (prioritize and respond). While voluntary, auditors and insurers increasingly reference it as best practices.

The NIST AI RMF has become the de facto standard for U.S. enterprises, providing structured methodology for managing AI risk across four core functions. Here’s the practical implementation path:

Start With the Govern Function

Establish cross-functional AI governance committees that integrate legal, technical, and ethical expertise. Define clear ownership through AI RACI charts specifying who is Responsible, Accountable, Consulted, and Informed for every AI initiative. Create formal AI use policies that specify permitted and prohibited applications.

Map Your AI Landscape

Develop a comprehensive AI asset inventory cataloging every model, API, and AI-enabled tool in production or development. Include shadow AI that engineering teams adopted without formal approval. For each system, document: business purpose and use case, data sources and processing flows, model type and deployment architecture, risk classification under applicable frameworks, and responsible parties and review cadences.
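One way to make the inventory enforceable is to treat each system as a structured record and flag whatever is still undocumented. The sketch below is a minimal illustration under that assumption; the class `AISystemRecord`, its field names, and the helper `missing_fields` are hypothetical and would need to be adapted to your own GRC tooling.

```python
from dataclasses import dataclass, field, fields


@dataclass
class AISystemRecord:
    """One inventory entry; the fields mirror the documentation items listed above."""
    name: str
    business_purpose: str = ""
    data_sources: list[str] = field(default_factory=list)
    model_type: str = ""
    deployment_architecture: str = ""
    risk_classification: str = ""
    owner: str = ""
    review_cadence_days: int = 0
    formally_approved: bool = False  # False marks potential shadow AI


def missing_fields(record: AISystemRecord) -> list[str]:
    """List documentation fields that are still empty for this system."""
    return [
        f.name
        for f in fields(record)
        if f.name != "formally_approved" and not getattr(record, f.name)
    ]


# A tool discovered during a shadow AI sweep, with almost nothing documented yet.
shadow_tool = AISystemRecord(name="resume-screener", business_purpose="rank applicants")
print(missing_fields(shadow_tool))
print(shadow_tool.formally_approved)  # False
```

Running a check like this across the whole inventory gives a simple completeness metric to report to the governance committee.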

Implement Measurement and Monitoring

Define key risk indicators (KRIs) and key performance indicators (KPIs) specific to AI systems. These go beyond traditional software metrics to include model accuracy and drift detection, fairness metrics across protected classes, data provenance and lineage tracking, explainability scores for high-stakes decisions, and incident response effectiveness.

Establish Manage Protocols

Create procedures for continuous model validation, bias testing, security assessments, and compliance verification. Implement human-in-the-loop checkpoints for critical decisions. Build incident response playbooks specific to AI failures.

The frameworks that matter in 2026 aren’t the ones with the most theoretical sophistication. They’re the ones that produce audit-ready evidence when regulators, customers, or your board ask for it. NIST AI RMF provides that operational backbone that turns governance from aspiration into executable process.

ISO/IEC 42001

ISO/IEC 42001 is an international standard designed to ensure AI systems are safe, reliable, and ethical, providing a structured governance framework for AI compliance. Published in December 2023, it offers a certifiable AI Management System comparable to ISO 27001 but focused on AI governance and AI risk. Fortune 500 enterprises are pursuing certification by 2026 for audit credibility.

Sectoral Overlays

HIPAA applies PHI de-identification requirements in AI triage. PCI DSS governs payment AI. EBA and ECB guidelines address AI bias testing in banking. FDA expectations cover AI/ML software as medical devices. These layer on top of general AI regulations.

Bonus: Points to Consider Before Integrating Payment Gateway

The regulatory velocity is accelerating. Financial services alone saw multiple AI-related regulatory updates in just one year, nearly doubling previous volumes. Organizations must monitor evolving requirements across multiple jurisdictions and sectors simultaneously.

Enterprise AI Compliance Across the AI System Lifecycle

AI compliance is not a one-off checklist. It spans every phase of the AI development lifecycle, with obligations attached to model development, training, deployment, and monitoring to ensure adherence to legal and ethical standards. Organizations should inventory their AI systems, map each one to its potential risks, and classify it by risk level.

Table: Compliance Actions by Lifecycle Stage

| Lifecycle Stage | Key Compliance Actions |
| --- | --- |
| Discovery & Ideation | Risk classification, initial model cards |
| Data Collection | Track provenance, DPIAs, bias screening |
| Model Development | Bias testing (80% threshold), explainability metrics |
| Deployment | Security reviews, HITL implementation, conformity declarations |
| Operations | Monitor drift, log incidents, 72-hour GDPR notifications |
| Retirement | Preserve logs (10 years high-risk), document decommissioning |

Discovery and Ideation

Conduct preliminary risk classification mapping to EU AI Act tiers and sectoral rules. Document use case intent via initial model cards. This is where you identify whether a use case falls into high-risk AI categories before investing in development.

Data Collection and Preparation

Ensuring strict data quality and obtaining explicit consent are critical components of data governance and privacy. Track data provenance including sources, licenses, and consents. Perform DPIAs under GDPR for sensitive data processing. Datasets must be rigorously screened to identify and minimize algorithmic biases to avoid discriminatory outcomes. Organizations must ensure that personally identifiable information is anonymized and processed only with explicit user consent when used to train or fine-tune models.

Model Development and Evaluation

Incorporate bias testing that flags disparate impact ratios below 80% (the four-fifths rule), robustness checks against adversarial prompts, and explainability metrics. High standards for training data are necessary to ensure it is accurate, representative, and free from unlawful bias. Produce data sheets documenting performance by demographic groups to satisfy audit requirements and regulatory expectations.
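The 80% threshold can be checked with a few lines of code. The sketch below computes the disparate impact ratio as the favorable-outcome rate of a protected group divided by that of a reference group, and flags the model when the ratio falls below 0.8. The function names and the sample data are illustrative assumptions, and a real audit would also look at sample sizes and statistical significance.

```python
def selection_rate(outcomes: list[int]) -> float:
    """Share of favorable outcomes (1 = favorable, 0 = unfavorable)."""
    return sum(outcomes) / len(outcomes)


def disparate_impact_ratio(protected: list[int], reference: list[int]) -> float:
    """Protected-group selection rate divided by reference-group selection rate."""
    reference_rate = selection_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return selection_rate(protected) / reference_rate


def passes_four_fifths(protected: list[int], reference: list[int], threshold: float = 0.8) -> bool:
    """True when the ratio meets or exceeds the 80% threshold."""
    return disparate_impact_ratio(protected, reference) >= threshold


# Hypothetical approval outcomes: 30% approved vs. 50% approved.
protected_group = [1] * 30 + [0] * 70
reference_group = [1] * 50 + [0] * 50

ratio = disparate_impact_ratio(protected_group, reference_group)
print(round(ratio, 2))                                       # 0.6
print(passes_four_fifths(protected_group, reference_group))  # False
```

A ratio of 0.6 sits well below the threshold, so this hypothetical model would need remediation before moving to deployment.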

Deployment and Integration

Require security reviews including IAM for models. Implement human-in-the-loop for high-risk outputs. Generate conformity declarations under EU AI Act for high-risk AI systems. Embedding requirements into the development lifecycle ensures compliance is not a reactive afterthought.

Operations and Monitoring

Continuous monitoring of AI systems is essential to ensure compliance and security, as it helps detect model drift and unauthorized access, ensuring that AI systems operate as intended over time. Tracking AI system behavior is critical to ensure ongoing compliance, safety, and risk management throughout the operational phase. Track drift with statistical tests, monitor hallucinations via logging, and meet GDPR’s 72-hour breach notification window when an incident involves personal data. Static, point-in-time compliance is insufficient; AI models can change behavior over time.
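As one example of a statistical drift test, the sketch below compares a baseline sample of model scores against a recent sample using the two-sample Kolmogorov-Smirnov statistic. The choice of this test, the 0.05 significance level, and the function names are assumptions made for illustration; production monitoring usually relies on an established statistics library and several complementary metrics.

```python
import math
from bisect import bisect_right


def ks_statistic(baseline: list[float], current: list[float]) -> float:
    """Largest gap between the two empirical cumulative distribution functions."""
    a, b = sorted(baseline), sorted(current)
    return max(
        abs(bisect_right(a, x) / len(a) - bisect_right(b, x) / len(b))
        for x in a + b
    )


def drift_detected(baseline: list[float], current: list[float], alpha: float = 0.05) -> bool:
    """Compare the statistic with the standard large-sample critical value."""
    n, m = len(baseline), len(current)
    critical = math.sqrt(-math.log(alpha / 2) / 2) * math.sqrt((n + m) / (n * m))
    return ks_statistic(baseline, current) > critical


# Hypothetical score distributions: the recent window has shifted upward.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 100 for i in range(100)]

print(drift_detected(baseline_scores, baseline_scores))  # False
print(drift_detected(baseline_scores, recent_scores))    # True
```

A positive result should open an incident or trigger a retraining review rather than silently retrain the model, so the decision lands in the audit trail.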

Retirement and Archiving

Preserve logs for 10-year retention in high-risk cases. Document decommissioning decisions and data flows during system sunset.

Organizations deploying AI systems must maintain comprehensive inventories documenting vendors, use cases, risk classifications, and data flows to comply with the EU AI Act. This centralized inventory becomes the backbone for audit trails and regulatory compliance.

Core Components of an Enterprise AI Compliance Framework

This section describes the operating model layer: the structures, processes, and artifacts an enterprise needs to manage AI compliance consistently across hundreds of use cases. Compliance frameworks must adapt to the evolving landscape of AI technologies, ensuring that governance, safety, ethics, and security are maintained as new AI capabilities emerge.

Table: AI Governance Framework Components

| Component | Description |
| --- | --- |
| Governance Structure | AI Council with CIO/CTO, compliance lead, model risk managers, DPO |
| Policies & Standards | Risk definitions aligned to EU Annex III + sector requirements |
| Risk Classification | Tiered methodology: minimal (self-attest) vs high-risk (FRIAs, audits) |
| Documentation | Model cards, data sheets, audit trails from source to deployment |
| Monitoring & IR | Automated tracking + AI-specific incident playbooks |
| Training | 90% completion target for AI ethics and regulatory training |

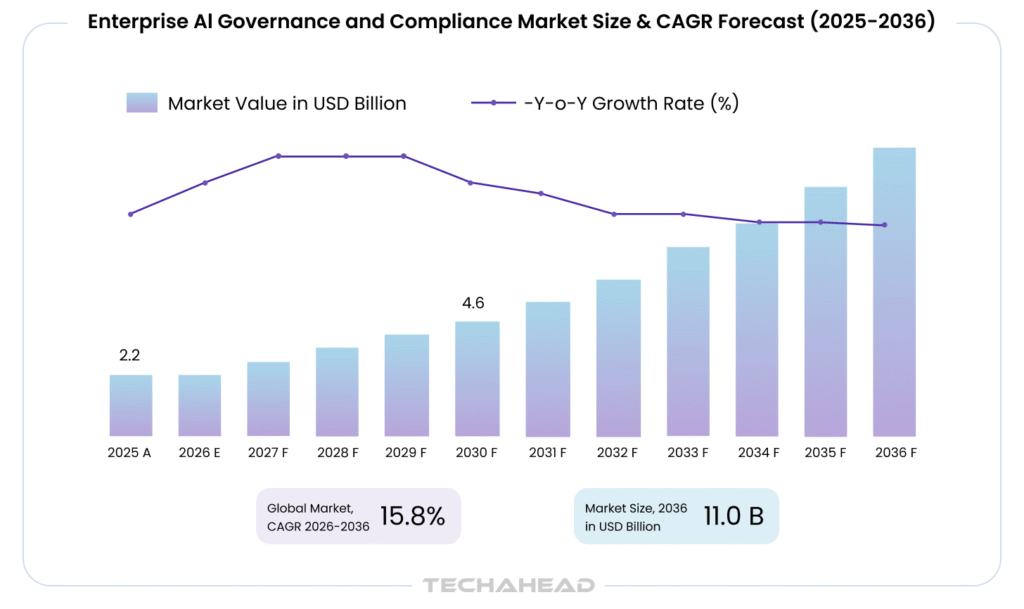

The enterprise AI governance and compliance market reflects this urgency. Valued at $2.20 billion in 2025, the market is projected to reach $11.05 billion by 2036, growing at a 15.8% CAGR as organizations shift from voluntary ethics guidelines to mandatory regulatory compliance obligations.

AI Governance Structure and Roles

A centralized governance structure is critical for responsible scaling. Creating a dedicated committee of legal, technology, and ethics experts is necessary to oversee the entire AI lifecycle. This AI Governance Council should include CIO/CTO sponsorship, a compliance lead, model risk managers, and a data protection officer.

AI Policies and Standards

Define policies aligning internal “high-risk” definitions to EU Annex III categories plus sector requirements. Organizations typically follow a risk-based model, identifying high-risk systems that require the most stringent oversight.

Risk Classification Methodology

Use tiered methodologies: minimal risk systems self-attest, while high-risk requires FRIAs and external audits. Maintaining a central registry of all AI systems, data sources, and vendors enables classification by regulatory risk.
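A tiered methodology can start as a simple lookup from use case to tier to required controls. The sketch below is a deliberately simplified illustration: the use-case labels, the `CONTROLS` mapping, and the fallback to manual review are assumptions, and any real classification needs legal review against the actual Annex III text.

```python
# Illustrative use-case labels drawn from the categories discussed in this guide.
PROHIBITED = {"social_scoring", "manipulative_ai"}
HIGH_RISK = {"hr_recruitment", "creditworthiness", "critical_infrastructure", "biometric_identification"}
LIMITED = {"chatbot"}
MINIMAL = {"internal_productivity_tool"}

# Controls required per tier, following the tiered methodology described above.
CONTROLS = {
    "unacceptable": ["do_not_deploy"],
    "high": ["fria", "external_audit", "human_oversight"],
    "limited": ["transparency_disclosure"],
    "minimal": ["self_attestation"],
    "needs_review": ["manual_classification"],
}


def classify(use_case: str) -> str:
    """Map a use-case label to a risk tier; unknown labels go to manual review."""
    if use_case in PROHIBITED:
        return "unacceptable"
    if use_case in HIGH_RISK:
        return "high"
    if use_case in LIMITED:
        return "limited"
    if use_case in MINIMAL:
        return "minimal"
    return "needs_review"


def required_controls(use_case: str) -> list[str]:
    """Look up the controls for whatever tier the use case falls into."""
    return CONTROLS[classify(use_case)]


print(required_controls("creditworthiness"))  # ['fria', 'external_audit', 'human_oversight']
print(classify("voice_cloning_demo"))         # needs_review
```

Routing unknown use cases to manual review, instead of defaulting them to minimal risk, keeps unregistered systems from slipping through with a self-attestation.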

Documentation Standards

Require model cards documenting purpose, metrics, limitations, and ethical risks. Data sheets should cover provenance and biases. Documenting model logic and inputs is essential for providing transparency and explainability of automated decisions. Organizations must maintain comprehensive audit trails tracking data lineage from source to deployment. Keeping detailed records of updates and validation results ensures audit readiness for regulatory inquiries.

Monitoring and Incident Response

Automated monitoring can be used to track model performance, accuracy, and fairness, triggering alerts if a system deviates from its intended behavior. Fuse AI-specific playbooks with existing IR processes.

Training and Awareness

Target 90% completion rates for AI ethics and regulatory compliance training across relevant teams.

Establishing a compliance council including cross-functional team members can help avoid silos in compliance efforts. This framework should integrate with existing risk registers, control libraries, policy hierarchies, and board-level reporting.

AI Risk Management: From Traditional Risk to AI-Specific Threats

AI risk management includes the identification of risks associated with AI use cases, classification of AI systems by impact and risk level, and continuous monitoring for emerging risks. AI introduces unique risks that extend beyond traditional IT risk, necessitating the integration of AI-specific risk management into broader enterprise frameworks like ISO 31000 and COSO.

Table: Traditional vs AI-Specific Risks

| Risk Type | Description |
| --- | --- |
| Traditional IT Risk | Data breach |
| Traditional IT Risk | Unauthorized access |
| Traditional IT Risk | System downtime |
| Traditional IT Risk | Configuration error |
| Traditional IT Risk | Malware |
| Traditional IT Risk | User error |
| AI-Specific Risk | Model inversion extracting PII from training data |
| AI-Specific Risk | Prompt injection bypassing safeguards |
| AI-Specific Risk | Model hallucination (20-30% error rates in early LLMs) |
| AI-Specific Risk | Data poisoning altering model behavior |
| AI-Specific Risk | Adversarial attacks manipulating outputs |
| AI-Specific Risk | Over-reliance on automated decisions without human oversight |

According to 2026 compliance research, 64% of organizations cite data privacy and security risks as their top concern when adopting AI. This anxiety is warranted given the unique attack surfaces AI systems introduce. Large enterprises typically deploy 8-10 governance and compliance tools per AI system by 2026, reflecting the complexity of managing AI-specific threats.

Map AI risk activities to NIST AI RMF’s four functions:

- GOVERN establishes ethics policies

- MAP contextualizes threats

- MEASURE tracks performance and fairness

- MANAGE prioritizes responses

Concrete AI-specific risk types include:

- Model hallucination in GenAI (20-30% error rates in early LLMs)

- Adversarial attacks and prompt injection

- Data poisoning altering training data

- Model inversion extracting PII

- Bias and disparate impact (e.g., 2x denial rates in credit AI)

- Over-reliance on automated decisions

- Supply chain risks from third-party models and APIs

Regular risk assessments are essential for identifying vulnerabilities in AI systems and ensuring regulatory compliance, which helps organizations proactively manage AI risks. Risk assessment depth should differ by use case: internal productivity tools need basic logging, customer-facing recommendations require fairness audits, and credit or clinical systems demand human oversight and full explainability.

TechAhead integrates AI incident response into clients’ existing IR workflows with automated regulatory reporting pipelines. Our SOC 2 Type II-certified monitoring infrastructure tracks both EU AI Act’s 15-day serious-incident requirements and GDPR’s 72-hour breach notification deadlines through unified alerting systems. We implement continuous control optimization through quarterly red-teaming exercises and monthly drift detection reviews, ensuring AI systems adapt to evolving threats while maintaining audit-ready compliance evidence across all regulated deployments.
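To show how two notification clocks can be tracked side by side, the sketch below derives both deadlines from the moment an organization becomes aware of an incident. It is a simplification: the `DEADLINES` mapping, the function names, and the assumption that both clocks start at the same instant are illustrative, and the actual trigger conditions depend on the incident type and legal advice.

```python
from datetime import datetime, timedelta, timezone

# Notification windows mentioned above, measured from the moment of awareness.
DEADLINES = {
    "gdpr_breach": timedelta(hours=72),
    "eu_ai_act_serious_incident": timedelta(days=15),
}


def notification_deadlines(became_aware_at: datetime) -> dict[str, datetime]:
    """Compute the latest notification time for each regime."""
    return {name: became_aware_at + window for name, window in DEADLINES.items()}


def overdue(became_aware_at: datetime, now: datetime) -> list[str]:
    """Return the regimes whose notification window has already closed."""
    deadlines = notification_deadlines(became_aware_at)
    return sorted(name for name, due in deadlines.items() if now > due)


aware = datetime(2026, 3, 1, 9, 0, tzinfo=timezone.utc)
print(notification_deadlines(aware)["gdpr_breach"])                   # 2026-03-04 09:00:00+00:00
print(overdue(aware, datetime(2026, 3, 5, tzinfo=timezone.utc)))      # ['gdpr_breach']
```

Feeding both deadlines into a single alerting queue lets the incident team see the shortest clock first.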

Human-in-the-Loop, Explainability, and Auditable Decisions

Regulators increasingly expect human oversight for high-risk AI, especially where decisions affect rights, employment, access to services, or health. Human oversight is mandated for high-risk applications to allow qualified professionals to review or override automated processes.

Automation without accountability creates unmanageable risk. Human-in-the-loop (HITL) design principles embed human judgment and oversight into AI workflows at critical decision points. This isn’t about slowing innovation. It’s about ensuring AI augments human decision-making rather than replacing judgment with opacity.

Human-in-the-Loop Patterns

Different patterns suit different enterprise workflows:

- Pre-decision review: All outputs reviewed before action (credit decisions, medical diagnoses)

- Post-decision sampling: 10% of outputs audited for monitoring

- Override capabilities: Human can reverse automated decisions at any point

AI-generated decisions should be subject to human review, validation, and override before finalization in high-risk scenarios.
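These patterns can be combined in a small routing function: high-risk decisions always wait for a human, while lower-risk ones are sampled for later audit. The sketch below assumes a 10% sampling rate and uses a hash of the decision ID so the same decision is always sampled or skipped consistently; the function names and the routing labels are hypothetical.

```python
import hashlib


def sampled_for_audit(decision_id: str, rate: float = 0.10) -> bool:
    """Deterministically select roughly `rate` of decisions based on a hash of the ID."""
    digest = hashlib.sha256(decision_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64  # uniform value in [0, 1)
    return bucket < rate


def route(decision_id: str, high_risk: bool) -> str:
    """Choose the oversight pattern for a single AI decision."""
    if high_risk:
        return "pre_decision_review"  # a human must approve before any action
    if sampled_for_audit(decision_id):
        return "post_decision_audit"  # acted on now, reviewed later
    return "automatic"


print(route("loan-2026-000123", high_risk=True))  # pre_decision_review
sampled = sum(sampled_for_audit(f"ticket-{i}") for i in range(10_000))
print(sampled)  # close to 1,000 out of 10,000
```

Hash-based sampling makes the audit selection reproducible, which is useful when an auditor asks why a particular decision was or was not reviewed.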

Explainability Requirements

Being able to explain in non-technical language why a decision was made, what key factors drove it, and how errors can be corrected addresses GDPR “right to explanation” debates and EU AI Act transparency expectations. Ensure AI systems provide clear reasoning paths for review.

Explainability has shifted from nice-to-have to mandatory. For high-stakes decisions affecting employment, credit, healthcare, or legal outcomes, regulators expect organizations to explain how AI systems reached conclusions. This doesn’t mean revealing proprietary algorithms. It means providing meaningful explanations to affected individuals and maintaining audit trails demonstrating decision rationale.

Explainability requirements vary by use case:

- Global explanations: Overall model behavior and feature importance

- Local explanations: Why this specific decision for this individual

- Counterfactual explanations: What would need to change for a different outcome

Related Read: Top Trends Toward Explainable Predictive Models

Logging and Auditability

Audit logs are critical for tracking data access, model changes, and decisions made by AI systems, providing a transparent record that supports compliance and accountability. Record which human approved or overrode AI outputs, what data was shown to them, and how decisions were ultimately made.
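A minimal sketch of such a record is shown below. It captures who reviewed the output, what they saw, and the final decision, and it chains each entry to the previous one with a hash so later edits become detectable. The hash chaining is an added assumption for illustration, as are the field names and the helpers `append_entry` and `verify_chain`; a production system would typically write to append-only storage as well.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder hash for the first entry


def _digest(body: dict) -> str:
    """Stable SHA-256 over the entry contents."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(log: list, *, decision_id: str, reviewer: str, action: str,
                 ai_output: str, final_decision: str, data_shown: list) -> dict:
    """Record a human review and link it to the previous entry."""
    entry = {
        "decision_id": decision_id,
        "reviewer": reviewer,
        "action": action,  # e.g. "approved" or "overridden"
        "ai_output": ai_output,
        "final_decision": final_decision,
        "data_shown": data_shown,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry["hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify_chain(log: list) -> bool:
    """Check that no entry was altered or removed after it was written."""
    prev = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev or _digest(body) != entry["hash"]:
            return False
        prev = entry["hash"]
    return True


audit_log = []
append_entry(audit_log, decision_id="loan-42", reviewer="j.doe", action="overridden",
             ai_output="deny", final_decision="approve", data_shown=["income", "credit_history"])
print(verify_chain(audit_log))  # True
```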

Robust human oversight is a major control for mitigating bias, preventing inappropriate automation, and supporting defensible positions in litigation.

Security, Privacy, and SOC 2 for AI Systems

Traditional InfoSec and data privacy controls remain necessary but are not sufficient for AI security. Models, prompts, and embeddings introduce new attack surfaces that require AI-specific security controls.

Table: SOC 2 Principles Adapted for AI

| SOC 2 Principle | AI-Specific Interpretation |
| --- | --- |
| Security | Model protection: adversarial testing, prompt injection defense, model theft prevention |
| Availability | Inference latency monitoring, fallback mechanisms, disaster recovery for models |
| Processing Integrity | Model validation, drift detection, input quality verification, output validation |
| Confidentiality | Training data encryption, model parameter protection, secure storage |
| Privacy | PIAs for AI use cases, consent management, data minimization, individual rights support |

AI-Specific Security Threats

- Prompt injection bypassing safeguards

- Model theft via query inference

- Data leakage through LLM responses

- Training data poisoning

- Exploitation of system integrations and plugins

Recommended: Prompt Injection Risks in Agentic AI Systems

SOC 2 Evolution for AI Systems

SOC 2 Type II certification has become the baseline enterprise procurement requirement. AI systems add complexity to traditional SOC 2 control frameworks. Auditors now expect AI-specific controls addressing unique risks beyond conventional software.

SOC 2 Type II audits now address AI systems explicitly with specific control areas:

- Change management for models and versions

- Logging and monitoring of AI interactions over 12+ months

- Strict access controls to training data and models

- Vendor management for AI APIs and third-party models

The five SOC 2 principles apply with AI-specific interpretations:

Security: AI models require protection against adversarial attacks, data poisoning, model theft, and prompt injection. Controls include model access restrictions, input validation and sanitization, adversarial testing and red teaming, and model versioning with integrity verification.

Availability: AI system uptime and performance require monitoring model inference latency, fallback mechanisms for model failures, capacity planning for inference demand, and disaster recovery for model deployments.

Processing integrity: AI outputs must be accurate and complete, demanding model validation testing and performance metrics, drift detection and retraining triggers, input data quality verification, and output validation before business use.

Confidentiality: AI training data and model parameters constitute confidential information requiring encryption, access controls, secure model storage, and training data protection.

Privacy: AI processing of personal information requires privacy impact assessments, consent management for AI use cases, data minimization in model training, and individual rights support (access, deletion, portability).

Privacy Integration

Protect sensitive data by preventing PHI, PCI, or other regulated data from entering non-compliant external LLMs. Use data classification, tokenization, and on-prem or VPC-hosted models to maintain data security within HIPAA, GDPR, and industry rules.
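As a simple illustration of tokenization, the sketch below replaces regulated identifiers with placeholder tokens before a prompt leaves the trusted boundary and keeps the originals in a local vault. The two regular expressions (a U.S. Social Security number format and a 16-digit card number) and the function names are illustrative assumptions; they are nowhere near a complete PHI or PCI detector, which would normally come from a dedicated data classification service.

```python
import re

# Illustrative patterns only; real detection needs far broader coverage.
PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b\d{4}[ -]?\d{4}[ -]?\d{4}[ -]?\d{4}\b"),
}


def tokenize(text: str) -> tuple[str, dict[str, str]]:
    """Replace sensitive values with tokens and return the masked text plus a vault."""
    vault: dict[str, str] = {}

    def make_replacer(label: str):
        def replace(match: re.Match) -> str:
            token = f"[{label}_{len(vault) + 1}]"
            vault[token] = match.group(0)  # original value never leaves this process
            return token
        return replace

    for label, pattern in PATTERNS.items():
        text = pattern.sub(make_replacer(label), text)
    return text, vault


def restore(text: str, vault: dict[str, str]) -> str:
    """Put the original values back into a response received from the model."""
    for token, value in vault.items():
        text = text.replace(token, value)
    return text


prompt = "Patient SSN 123-45-6789 paid with card 4111 1111 1111 1111."
masked, vault = tokenize(prompt)
print(masked)  # Patient SSN [SSN_1] paid with card [CARD_2].
```

Only the masked text would be sent to an external model; the vault stays inside the compliant environment and is used to restore values in the response if needed.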

Organizations must have strict role-based access control to restrict access to sensitive AI models and data. Demonstrate to auditors that guardrails, red-teaming, and access controls function with log evidence over 6-12 month periods.

SOC 2 for AI systems represents an opportunity, not just an obligation. Organizations achieving certification demonstrate to enterprise customers that their AI operations meet rigorous security, availability, and privacy standards. This becomes a procurement differentiator in competitive markets.

Sector-Specific AI Compliance Considerations

While foundational AI compliance patterns are cross-industry, implementation differs significantly based on industry-specific regulations and use cases.

Table: Compliance by Sector

| Sector | Key Regulations | AI-Specific Requirements |
| --- | --- | --- |
| Healthcare | HIPAA, FDA | PHI de-identification (Safe Harbor 18), 95% explainability, clinical validation |
| Financial Services | Fair Lending, SR 11-7 | Bias testing, <5% backtesting error, disparate impact analysis |
| Government | Procurement rules | FRIAs, citizen rights protection, accountability frameworks |
| SaaS Vendors | Customer contracts | SOC 2 certification, EU AI Act for features, data protection standards |

Healthcare (HIPAA, FDA)

Healthcare AI presents the most mature sector-specific compliance framework, offering lessons applicable across regulated industries. HIPAA requirements for AI systems demonstrate how existing privacy and security regulations extend to new technologies.

Healthcare AI solutions must address PHI de-identification using the HIPAA Safe Harbor method, which requires removing 18 categories of identifiers. Clinical triage systems require human oversight with 95% explainability in diagnostics. FDA expectations for AI/ML software as a medical device add lifecycle documentation requirements. Responsible AI practices in healthcare prioritize patient safety above efficiency gains.


Protected Health Information (PHI) requires comprehensive controls. AI systems processing PHI must implement:

- End-to-end encryption for data in transit and at rest

- Access controls limiting PHI exposure to minimum necessary

- Audit logging of all PHI access and processing

- Business associate agreements with AI vendors

- Risk assessments addressing AI-specific threats

De-identification isn’t simple with AI. Traditional de-identification techniques may prove insufficient for AI applications. Models can re-identify individuals through pattern recognition or infer sensitive attributes from seemingly innocuous data. Healthcare organizations must implement differential privacy, synthetic data generation, or federated learning approaches that maintain model utility while preventing PHI exposure.
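To make one of these approaches concrete, here is a minimal sketch of differential privacy applied to a single count query using the Laplace mechanism. The `private_count` helper and the epsilon value are illustrative assumptions. Production systems should use an audited differential privacy library, since naive floating-point noise generation has known weaknesses.

```python
import random

def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return scale * (rng.expovariate(1.0) - rng.expovariate(1.0))

def private_count(true_count: int, epsilon: float, rng: random.Random) -> float:
    """Release a count with epsilon-differential privacy.

    Assumes each patient changes the count by at most 1 (sensitivity 1),
    so the noise scale is 1 / epsilon.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(42)  # seeded only to make the sketch reproducible
noisy = private_count(true_count=128, epsilon=0.5, rng=rng)
```

A smaller epsilon adds more noise and gives stronger privacy at the cost of accuracy, which is the utility trade-off the paragraph above describes.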

Clinical decision support requires special handling. AI systems influencing clinical decisions face heightened scrutiny. FDA oversight extends to AI-enabled medical devices. Clinical validation demands peer-reviewed evidence of safety and efficacy. Liability frameworks hold healthcare providers accountable for AI-assisted decisions.


Financial Services (Fair Lending, SR 11-7)

Financial AI faces fair lending laws, anti-discrimination requirements, and model risk management under SR 11-7. Backtesting must achieve error rates below 5%. Credit scoring and fraud detection AI require extensive bias testing and disparate impact analysis. Responsible AI development in finance emphasizes documented decision rationale.
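A disparate impact check can start as simply as comparing approval rates between groups. The sketch below is one common way to do it, not a regulatory prescription. The 0.8 threshold is an assumption borrowed from the "four-fifths" rule of thumb used in U.S. employment contexts, and the sample outcomes are made up for illustration.

```python
def selection_rate(outcomes: list[int]) -> float:
    """Share of favorable outcomes (1 = approved, 0 = denied)."""
    if not outcomes:
        raise ValueError("outcomes must not be empty")
    return sum(outcomes) / len(outcomes)

def disparate_impact_ratio(protected: list[int], reference: list[int]) -> float:
    """Protected-group approval rate divided by reference-group approval rate."""
    reference_rate = selection_rate(reference)
    if reference_rate == 0:
        raise ValueError("reference group has no favorable outcomes")
    return selection_rate(protected) / reference_rate

# Made-up example data: 3 of 10 approved vs. 6 of 10 approved.
protected_group = [1, 0, 0, 1, 0, 0, 0, 1, 0, 0]
reference_group = [1, 1, 0, 1, 0, 1, 1, 0, 1, 0]

ratio = disparate_impact_ratio(protected_group, reference_group)
flagged = ratio < 0.8  # assumed threshold; confirm the right test with counsel
```

A flagged ratio is a trigger for deeper analysis and documentation of the decision rationale, not proof of discrimination on its own.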


Government Contractors and Public Sector

Public sector AI requires Fundamental Rights Impact Assessments and procurement rule compliance. Sustainable AI development for government use cases emphasizes accountability and citizen rights protection.

SaaS Vendors

SaaS providers face customer data protection requirements, SOC 2 certification expectations, and EU AI Act obligations for AI features. Enterprises must ensure that third-party AI vendors comply with rigorous data protection and data governance standards.

Continuous evaluation helps ensure that AI systems remain fair, accurate, and aligned with ethical AI guidelines across all sectors.

Building an Enterprise AI Compliance Program: Operating Model and Roles

No single team can own compliance. Avoid leaving AI compliance solely to technical teams, and make sure informed consent is properly obtained for AI deployments across the organization.

Key Roles

- AI Governance Council (or AI Risk Committee): Cross-functional oversight body

- Executive Sponsor: Typically CIO/CTO or CISO with board access

- AI Compliance Lead: Day-to-day program management

- Model Risk Managers: Technical validation and testing

- Data Protection Officer: Privacy and data subject rights

- Business Owners: Accountability for each high-risk use case

RACI Clarity

Define who is responsible, accountable, consulted, and informed for:

- AI use case approval workflows

- Model deployment decisions

- Incident response escalation

- Regulator interactions and audit responses

Embed AI compliance into existing processes, including product lifecycle management, change advisory boards, vendor onboarding, and annual risk assessments for the organization’s AI systems.

Metrics and KPIs

Track program effectiveness through:

- Number of AI use cases reviewed

- Percentage with documented risk assessments (target >95% high-risk)

- Incident count and response time (< 24 hours)

- AI ethics training completion rates (target 90%)
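Two of the metrics above can be computed directly from an inventory and an incident list. This is a minimal sketch under assumed data shapes: the `UseCase` record and both helper functions are hypothetical, and the sample values are invented.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    name: str
    high_risk: bool
    risk_assessment_documented: bool

def high_risk_assessment_coverage(use_cases: list[UseCase]) -> float:
    """Percentage of high-risk use cases with a documented risk assessment."""
    high_risk = [u for u in use_cases if u.high_risk]
    if not high_risk:
        return 100.0  # nothing high-risk to cover
    documented = sum(1 for u in high_risk if u.risk_assessment_documented)
    return 100.0 * documented / len(high_risk)

def incidents_within_sla(response_hours: list[float], sla_hours: float = 24.0) -> float:
    """Percentage of incidents responded to in under the SLA."""
    if not response_hours:
        return 100.0
    on_time = sum(1 for h in response_hours if h < sla_hours)
    return 100.0 * on_time / len(response_hours)

use_cases = [
    UseCase("resume-screening", True, True),
    UseCase("credit-scoring", True, False),
    UseCase("meeting-summaries", False, False),
]
coverage = high_risk_assessment_coverage(use_cases)        # 50.0, below the >95% target
on_time = incidents_within_sla([2.5, 30.0, 12.0, 23.9])    # 75.0
```

Computing these from the same inventory used for governance keeps board reporting consistent with what auditors will see.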

Conduct quarterly board or executive committee reporting on AI risk posture and compliance status with summaries of major high-risk AI deployments and emerging regulations. Monitoring evolving global and regional AI laws is crucial for timely adjustments to AI policies.

Practical Implementation Roadmap: 12-24 Month Plan

This roadmap helps enterprises starting or formalizing AI compliance in 2025 to be ready for 2026-27 enforcement peaks.

Table: Implementation Timeline

| Phase | Duration | Key Deliverables |
| --- | --- | --- |
| Foundation | 0-3 months | AI inventory, high-risk identification, compliance lead appointed, GenAI guardrails |
| Structure | 3-9 months | Governance council, documentation templates, pipeline integration, NIST pilot |
| Scale | 9-18 months | Monitoring standards, bias audits, EU AI Act prep, SOC 2 alignment |
| Optimize | 18-24 months | ISO 42001 certification, automation, red-teaming, global harmonization |

Phase 1: Foundation (0-3 Months)

Build your AI system inventory identifying all AI deployments across business operations. Identify high-risk use cases and map applicable regulations per use case. Appoint an AI compliance lead with cross-functional authority. Implement interim guardrails for GenAI usage including policies and basic technical controls for data access.
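An inventory does not need special tooling to get started. The sketch below shows one possible record shape, assuming a simplified rule that flags a system as high-risk when its domain falls into a known high-risk category. The `AISystem` fields, the domain labels, and the sample entries are all hypothetical.

```python
from dataclasses import dataclass, field

# Simplified high-risk domains for illustration (assumption; legal review decides the real tier).
HIGH_RISK_DOMAINS = {
    "employment",
    "credit_scoring",
    "healthcare_diagnostics",
    "critical_infrastructure",
    "biometric_identification",
}

@dataclass
class AISystem:
    name: str
    owner: str
    domain: str
    approved: bool = True  # False marks shadow AI found during discovery
    regulations: list[str] = field(default_factory=list)

    @property
    def high_risk(self) -> bool:
        return self.domain in HIGH_RISK_DOMAINS

inventory = [
    AISystem("resume-ranker", "HR", "employment", regulations=["EU AI Act", "GDPR"]),
    AISystem("triage-assistant", "Clinical Ops", "healthcare_diagnostics",
             regulations=["HIPAA", "EU AI Act"]),
    AISystem("browser-llm-plugin", "unknown", "productivity", approved=False),
]

high_risk_names = [s.name for s in inventory if s.high_risk]
shadow_ai = [s.name for s in inventory if not s.approved]
```

Even a flat list like this answers the first questions an auditor asks: what is running, who owns it, and which rules apply.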

Phase 2: Structure (3-9 Months)

Establish formal governance structures with the AI Governance Council. Standardize documentation templates for model cards and data sheets. Integrate AI compliance checks into AI development pipelines. Pilot NIST AI RMF practices on 1-2 critical use cases. AI tools can scan regulatory updates in real-time, extracting key obligations and mapping them to internal policies.
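One way to integrate a compliance check into a development pipeline is a gate that fails the build when a model card is incomplete. This is a sketch only: the required field names are assumptions rather than a standard schema, and `compliance_gate` is a hypothetical function you would call from your CI step.

```python
# Assumed minimum model card fields; adapt to your own documentation template.
REQUIRED_FIELDS = ("purpose", "limitations", "training_data_sources", "risk_tier", "owner")

def missing_model_card_fields(card: dict) -> list[str]:
    """Return required fields that are absent or empty, in template order."""
    return [name for name in REQUIRED_FIELDS if not card.get(name)]

def compliance_gate(card: dict) -> None:
    """Raise if the model card is incomplete, which should fail the pipeline step."""
    missing = missing_model_card_fields(card)
    if missing:
        raise ValueError(f"Model card incomplete, missing: {', '.join(missing)}")

draft_card = {
    "purpose": "Rank inbound support tickets by urgency",
    "limitations": "English-language tickets only",
    "training_data_sources": [],
    "owner": "support-platform-team",
}
gaps = missing_model_card_fields(draft_card)  # ['training_data_sources', 'risk_tier']
```

Treating an empty value the same as a missing one prevents teams from satisfying the gate with placeholder fields.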

Phase 3: Scale (9-18 Months)

Roll out continuous monitoring and logging standards across AI deployments. Perform AI bias audits on selected models using structured testing methodologies. Prepare for EU AI Act high-risk obligations including FRIAs and workplace notification requirements. Align evidence collection with SOC 2 and ISO 27001 expectations. Manage risks by integrating compliance programs into enterprise risk management.

Phase 4: Optimize (18-24 Months)

Pursue ISO/IEC 42001 certification for competitive advantage. Automate compliance evidence collection through MLOps integration. Integrate continuous red-teaming and adversarial testing. Harmonize AI controls globally across regions and subsidiaries.

Common Pitfalls and How to Avoid Them

Many enterprises focus on tools before foundations, creating gaps that surface when regulators or auditors arrive with specific compliance requirements.

| Pitfall | Fix |
| --- | --- |
| Treating AI compliance as a one-off project | Embed into continuous governance |
| Ignoring shadow AI and unapproved tools | Mandatory inventory with discovery tools |
| Under-documenting training data sources | Minimum documentation standards |
| Over-relying on vendor assurances | Independent due diligence and SLAs |
| Neglecting cross-border data transfer implications | Legal review for all international AI deployments |
| Focusing only on model performance | Require governance artifacts alongside metrics |

Organizations must involve legal, privacy, and HR early, especially for employee-facing AI like performance evaluation, hiring, and monitoring that can easily fall into high-risk categories. Compliance requirement mapping should happen at ideation, not deployment.

TechAhead’s cross-functional review process surfaces 73% of compliance gaps during initial discovery sessions that would otherwise emerge during audit preparation. We’ve found that organizations conducting AI risk workshops with legal, privacy, HR, and engineering teams before any code is written reduce remediation costs by 4-5x compared to retrofitting compliance post-deployment. Our pre-development compliance questionnaire identifies jurisdiction-specific obligations across 24+ regulatory frameworks within the first stakeholder interview.

Looking Ahead: The Future of Enterprise AI Compliance

The 2026-2028 period will see aggressive enforcement of EU AI Act provisions, proliferating U.S. state-level AI laws, and ISO 42001 becoming the baseline certification expectation. Compliance teams should prepare for accelerating regulatory activity across all major markets.

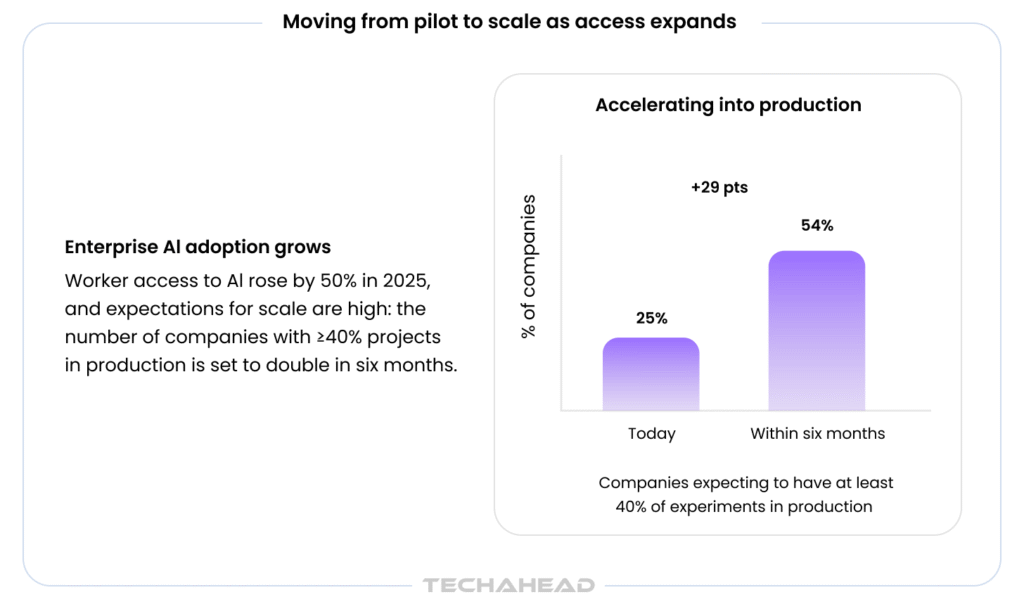

The adoption trajectory is accelerating:

- The number of companies with 40% or more of their AI projects in production is expected to double within six months

- 18% of U.S. firms had adopted AI by the end of 2025, representing 68% year-over-year growth

This acceleration demands governance infrastructure that scales proportionally. Point-in-time audits won’t suffice – regulators expect continuous evaluation of AI system performance, bias, and robustness, similar to continuous security monitoring. Compliance scope will expand to multi-agent and autonomous AI systems where accountability becomes exponentially complex.

Compliance as competitive advantage:

Customers and partners increasingly demand AI transparency evidence in contracts, security questionnaires, and RFPs. TechAhead’s clients with mature governance frameworks, backed by our SOC 2 Type II and ISO 42001:2023 certifications, win procurement cycles 40% faster than competitors still building compliance reactively.

The 12-18 month window before peak enforcement represents the last opportunity to build compliance into competitive advantage rather than retrofit under regulatory pressure.

Partner with TechAhead for Enterprise-Grade AI Compliance

TechAhead, an AI-native enterprise software development company, has architected compliance-ready AI systems for Fortune 500 enterprises since 2009. Our 2,500+ platforms across healthcare, finance, and insurance demonstrate that governance and innovation are complementary capabilities enabling responsible AI deployment at scale.

Proven enterprise clients including AXA, JLL, American Express, Audi, ESPN F1, and ICC trust our compliance-first approach. Our healthcare expertise spans HIPAA-compliant digital transformation, processing sensitive patient data through AI-powered clinical decision support with field-level encryption, role-based access controls, and comprehensive audit trails.

Certifications demonstrating compliance maturity:

- ISO 42001:2023 (AI Management System)

- SOC 2 Type II (AI-specific enterprise controls)

- ISO 27001:2022, ISO 13485

- AWS Advanced Tier Partner | OpenAI Services Partner

Recognition: Clutch Global Awards (Fall 2025), #1 App Development Company India, #3 Globally, 150+ industry awards spanning AI development and digital transformation.

TechAhead’s 240+ engineers embed governance into AI development lifecycles through pre-deployment compliance frameworks identifying jurisdiction-specific obligations across 24+ regulatory requirements, surfacing 73% of compliance gaps before code is written.

Ready to turn compliance into competitive advantage? Contact TechAhead to discuss SOC 2 Type II and ISO 42001-certified AI compliance architecture for your enterprise.

Organizations with existing GRC infrastructure typically need 6-12 months for comprehensive AI compliance programs. The timeline depends on your AI system inventory size, regulatory scope (EU AI Act, HIPAA, SOC 2), and whether you’re pursuing ISO 42001 certification. Companies with ISO 27001 already in place can move faster, often completing implementation in 3-6 months.

ISO 42001 is the first international standard for AI Management Systems, providing certifiable governance frameworks. While technically voluntary, it’s becoming a procurement requirement for enterprise contracts. If you’re selling to Fortune 500 clients, financial services, or healthcare organizations, ISO 42001 certification demonstrates audit-ready AI governance and often shortens sales cycles significantly.

NIST AI RMF is voluntary U.S. guidance that provides a risk management methodology through four functions (Govern, Map, Measure, Manage). The EU AI Act is binding regulation with legal penalties of up to €35M. Here’s the key: NIST provides the operational backbone, while the EU AI Act sets mandatory obligations. Smart organizations use NIST as the foundation, then layer EU requirements on top.

High-risk AI includes systems used for employment decisions, credit scoring, healthcare diagnostics, critical infrastructure management, and biometric identification. These require conformity assessments, Fundamental Rights Impact Assessments, human oversight mechanisms, and comprehensive documentation by August 2, 2026. If your AI affects rights, employment, or safety, it’s likely high-risk and needs immediate attention.

Not every AI system requires human-in-the-loop oversight. It depends on risk classification. High-risk applications (credit decisions, medical diagnoses, employment screening) mandate human review under EU AI Act Article 14. Low-risk productivity tools don’t. The test: does the AI decision significantly affect someone’s rights, employment, or access to services? If yes, implement HITL workflows.
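The routing test described above can be sketched as a small function that holds high-risk decisions for human review and lets the rest through. The decision type labels, the `RoutedDecision` record, and the status strings are hypothetical. Your own risk classification would drive the real list.

```python
from dataclasses import dataclass

# Assumed decision types that significantly affect rights, employment, or access to services.
HIGH_RISK_DECISIONS = {"credit_decision", "medical_diagnosis", "employment_screening"}

@dataclass
class RoutedDecision:
    decision_type: str
    model_output: str
    status: str  # "pending_human_review" or "auto_approved"

def route_decision(decision_type: str, model_output: str) -> RoutedDecision:
    """Hold high-risk model outputs for a human reviewer; pass low-risk ones through."""
    if decision_type in HIGH_RISK_DECISIONS:
        status = "pending_human_review"
    else:
        status = "auto_approved"
    return RoutedDecision(decision_type, model_output, status)

loan = route_decision("credit_decision", "decline")
summary = route_decision("meeting_summary", "Action items: ...")
```

The model output is kept on the record either way, so the eventual human decision can be logged next to what the model recommended.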

Start with your AI system inventory. Catalog every model, API, and AI tool currently in production or development, including shadow AI. Then classify by risk tier, appoint an AI compliance lead with cross-functional authority, and implement interim GenAI guardrails. Focus first on high-risk systems where regulatory obligations are immediate and penalties severe.

SOC 2 Type II focuses on data security and privacy controls, while ISO 42001 specifically addresses AI governance, bias testing, model risk management, and lifecycle controls. They’re complementary, not competitive. Most enterprises need both: SOC 2 for customer data protection requirements and ISO 42001 for demonstrating responsible AI development and deployment practices.

Core documentation includes AI system inventory, risk classifications, model cards documenting purpose and limitations, data provenance sheets, Fundamental Rights Impact Assessments for high-risk systems, audit logs tracking decisions and human oversight, and policy frameworks defining governance structures. Organizations must maintain comprehensive documentation showing compliance across the entire AI lifecycle from ideation to retirement.

HIPAA requires Protected Health Information de-identification, business associate agreements with AI vendors, end-to-end encryption, role-based access controls limiting PHI exposure, and comprehensive audit logging. AI systems processing clinical data need documented risk assessments addressing AI-specific threats like model inversion attacks. FDA oversight applies if your AI influences clinical decisions.

Yes. Smart organizations build unified AI governance programs that map to the EU AI Act, NIST AI RMF, and ISO 42001 simultaneously rather than running separate compliance tracks. Use ISO 42001’s management system structure as the foundation and NIST’s four functions for risk methodology, then layer on the EU AI Act’s prescriptive requirements. This approach significantly reduces duplication and audit burden.
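A unified program is easier to reason about as a crosswalk: each internal control is listed once, along with the frameworks whose evidence needs it helps satisfy. The sketch below uses placeholder controls and placeholder mappings purely to show the data structure. It is not a legal or auditor-approved mapping, and every name in it is an assumption.

```python
FRAMEWORKS = ("EU AI Act", "NIST AI RMF", "ISO 42001")

# Placeholder mapping for illustration only (assumption): your counsel and auditors
# decide which control satisfies which framework.
CROSSWALK = {
    "ai_system_inventory": {"EU AI Act", "NIST AI RMF", "ISO 42001"},
    "human_oversight_workflow": {"EU AI Act", "NIST AI RMF"},
    "bias_testing": {"NIST AI RMF", "ISO 42001"},
}

def controls_by_framework(crosswalk: dict[str, set[str]]) -> dict[str, list[str]]:
    """Invert the crosswalk so each framework lists the controls feeding its evidence."""
    result: dict[str, list[str]] = {fw: [] for fw in FRAMEWORKS}
    for control, frameworks in sorted(crosswalk.items()):
        for fw in frameworks:
            result[fw].append(control)
    return result

def reuse_count(crosswalk: dict[str, set[str]]) -> dict[str, int]:
    """How many frameworks each control's evidence serves."""
    return {control: len(frameworks) for control, frameworks in crosswalk.items()}

by_framework = controls_by_framework(CROSSWALK)
reuse = reuse_count(CROSSWALK)
```

Controls with a high reuse count are where a unified program saves the most effort, because one set of evidence answers several audits.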