
Enterprise AI is booming, but most deployments remain fragmented and brittle. This is where Model Context Protocol (MCP) changes the game, enabling AI agents to securely access enterprise tools, data, and workflows through a single standardized interface.

Enterprise AI adoption has exploded: 97 million monthly SDK downloads in March 2026, with 78% of enterprise teams now running AI agents in production.

Yet 86% of AI pilot projects still fail before reaching production, blocked by a single critical problem: fragmented integrations.

The bottleneck isn’t model capability.

Modern LLMs are remarkably powerful at understanding language.

Key Takeaways

- MCP connects AI models to enterprise systems securely through a single standard interface.

- Monthly MCP SDK downloads grew 970x in under eighteen months.

- Seventy-eight percent of enterprise AI teams have MCP in production.

- Forbes saved eighteen thousand hours annually using MCP-backed AI solutions.

- Model Context Protocol eliminates custom integrations and reduces vendor lock-in.

- Four-phase implementation roadmap takes enterprises from pilot to production quickly.

- 2026 marks the inflection point for widespread enterprise MCP adoption.

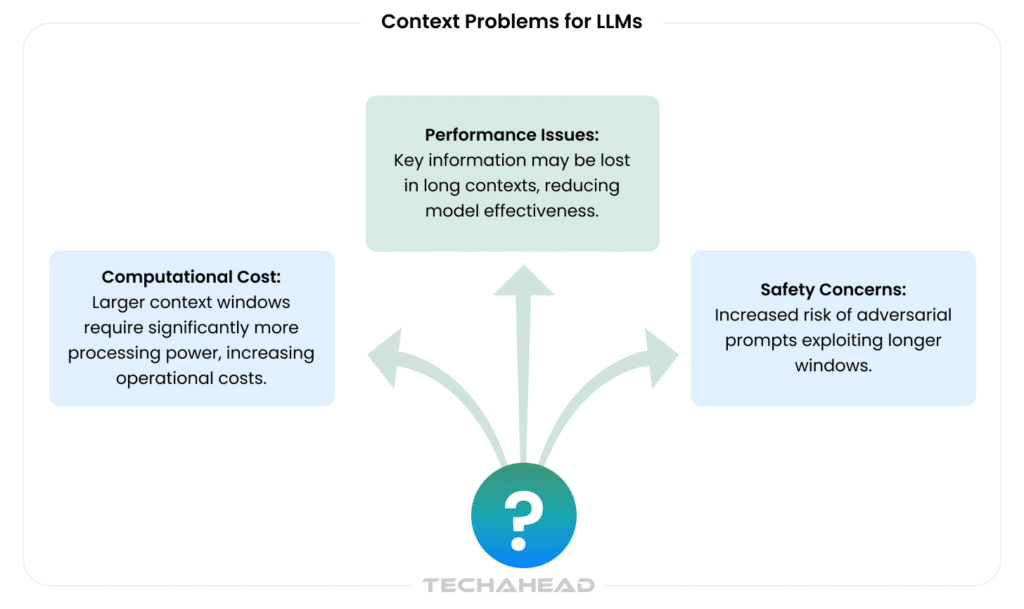

The real problem is context: AI models remain blind to enterprise business data. They cannot reliably access live customer records from your CRM, pull inventory levels from ERP systems, or securely execute workflows across enterprise tools.

This is where Model Context Protocol (MCP) changes the game, enabling AI agents to securely access CRM, ERP, APIs, and enterprise workflows through a standardized, vendor-agnostic interface that eliminates custom integrations and reduces implementation time by up to 60%.

Context Problem for LLMs

In a typical business environment, a new AI use case requires engineering teams to build custom point-to-point integrations.

A sales copilot needs a CRM connector. A customer support bot needs a ticketing system connector. An internal assistant needs connectors to SharePoint, Jira, Salesforce, and whatever else an organization uses.

Scale this across even a mid-size enterprise (multiple teams, dozens of data sources, competing LLM vendors) and you have an integration debt crisis.

This is where Model Context Protocol (MCP) offers a solution that is scalable, precise, and ROI-focused.

What Is Model Context Protocol (MCP)?

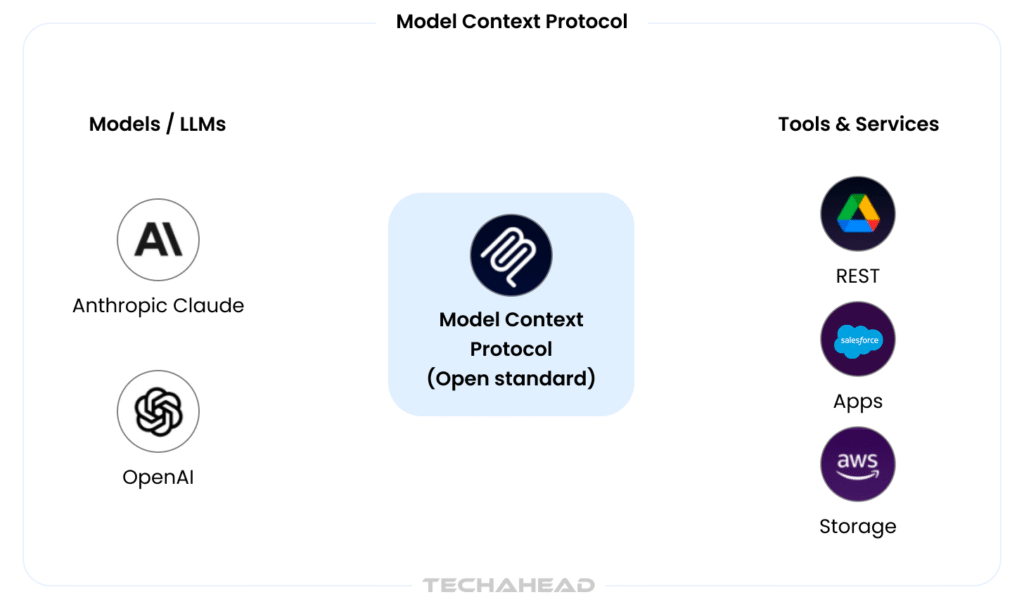

Introduced by Anthropic in November 2024, MCP is an open-source, vendor-neutral standard that has achieved industry-wide adoption, backed by OpenAI, Google, Microsoft, AWS, and the Linux Foundation. Think of it as the “USB-C for AI applications”, a universal connector that allows any AI model to communicate with any tool, data source, or system through a single, standardized interface.

In plain business terms: MCP standardizes how AI agents discover, authenticate to, and safely invoke external tools and data sources. Before MCP, every AI application needed custom integrations for every tool. After MCP, one standard interface replaces thousands of point-to-point connections.

The Growth Trajectory Is Staggering

The adoption curve tells the story better than any explanation. In November 2024, when MCP launched, the SDK had roughly 100,000 downloads. By March 2026, roughly 16 months later, that number had reached 97 million monthly downloads, a 970x increase.

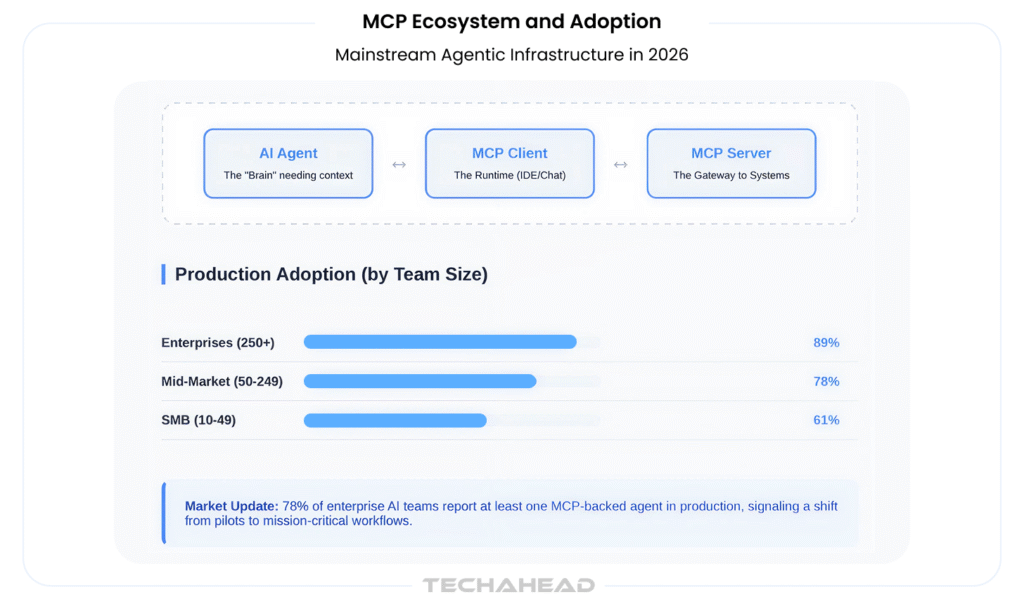

As of Q1 2026, 78% of enterprise AI teams (defined as organizations with 50+ AI/ML practitioners) reported at least one MCP-backed agent in production, up from 31% a year earlier. This is not a niche adoption curve. This is mainstream enterprise infrastructure.

The public MCP server registry grew 7.8x year-over-year, expanding from 1,200 servers in Q1 2025 to 9,400+ in April 2026, with month-over-month growth still tracking at +18%. By April 2026, MCP was implemented on more than 10,000 enterprise servers with over 97 million SDK downloads.
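
The growth multiples quoted above are easy to check by hand. The short sketch below simply divides the end figure by the start figure for SDK downloads and for registry servers; the variable names are illustrative and the inputs are the numbers given in this article, not independently sourced data.

```python
# Figures quoted in the text above; variable names are illustrative.
launch_downloads = 100_000          # approximate SDK downloads, November 2024
march_2026_downloads = 97_000_000   # monthly SDK downloads, March 2026
servers_q1_2025 = 1_200             # public registry servers, Q1 2025
servers_apr_2026 = 9_400            # public registry servers, April 2026


def growth_multiple(start: float, end: float) -> float:
    """Return how many times larger `end` is than `start`."""
    return end / start


download_multiple = growth_multiple(launch_downloads, march_2026_downloads)
server_multiple = growth_multiple(servers_q1_2025, servers_apr_2026)

print(f"SDK downloads: {download_multiple:.0f}x")   # 970x
print(f"Registry servers: {server_multiple:.1f}x")  # 7.8x
```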

Why MCP Is Different From “Just Calling APIs”

Traditional REST API integrations are ad hoc and fragile. Each system gets its own custom connector. There’s no standard for how tools expose capabilities, no shared way to express permissions, no built-in discovery mechanism.

MCP changes this by introducing:

- Standardized tool discovery: Agents can ask “what tools do I have access to?” and receive a machine-readable, semantically rich response.

- Semantic metadata: Every capability exposed through MCP includes descriptions, parameter types, permissions, and constraints, enabling intelligent agent behavior instead of hardcoded logic.

- Secure context propagation: User identity, role, and permissions can flow through each MCP call, enabling fine-grained access control without hardcoding credentials.

- Built-in governance: Every tool invocation is structured, typed, and auditable, critical for regulated industries like finance and healthcare.
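
To make tool discovery concrete, here is a minimal sketch of what a discovery exchange can look like. It assumes the `tools/list` method and the `name` / `description` / `inputSchema` descriptor fields as we understand them from the MCP specification; the in-memory registry, the handler function, and the example tool are hypothetical, and extras such as permissions or rate limits would be layered on by your own server or gateway.

```python
import json

# Hypothetical in-memory registry; the tool and its fields are examples only.
TOOLS = [
    {
        "name": "getOpportunitySummary",
        "description": "Summarize one CRM opportunity: stage, value, next step.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "opportunityId": {"type": "string", "description": "CRM record ID"}
            },
            "required": ["opportunityId"],
        },
    }
]


def handle_tools_list(request: dict) -> dict:
    """Answer a JSON-RPC `tools/list` request with the registered tools."""
    return {"jsonrpc": "2.0", "id": request["id"], "result": {"tools": TOOLS}}


# The agent side asks "what tools do I have access to?"
request = {"jsonrpc": "2.0", "id": 1, "method": "tools/list"}
response = handle_tools_list(request)
print(json.dumps(response, indent=2))
```

The point of the machine-readable schema is that the agent can decide which tool to call, and with which arguments, without any of that logic being hardcoded in the client.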

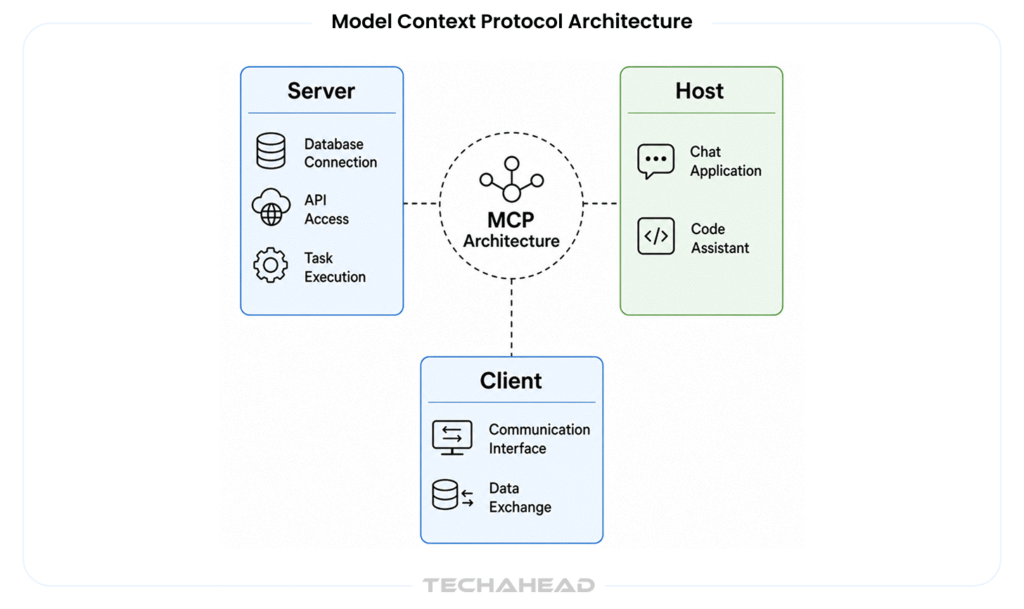

MCP Architecture: Three Key Players

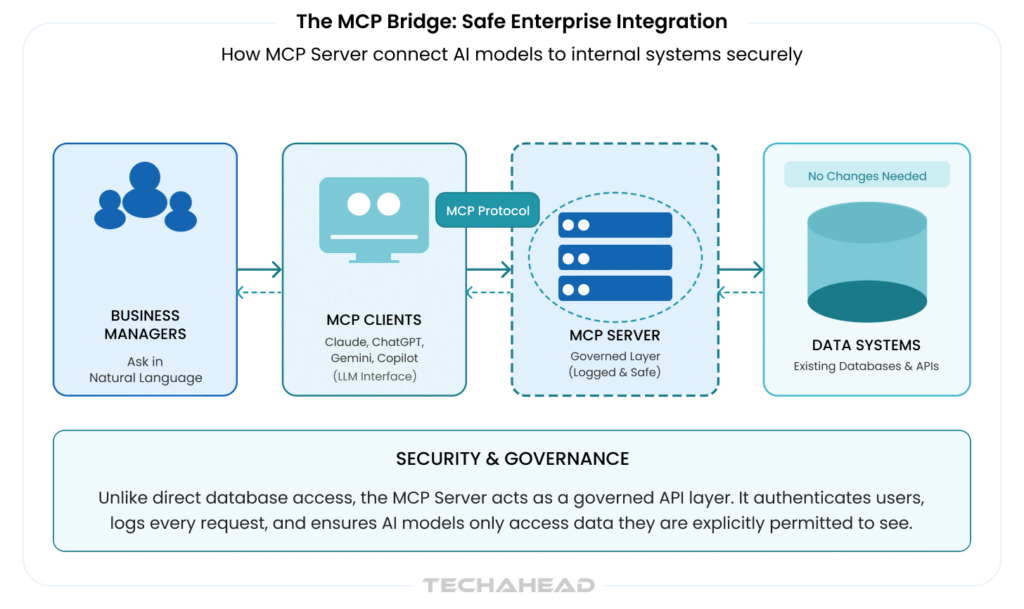

The elegance of MCP lies in its simplicity. Three actors interact:

- AI Model/Agent: The LLM or agentic system that needs to access external context.

- MCP Server: Exposes capabilities (tools, data connectors, workflows) via the standardized MCP protocol.

- MCP Client: The connector inside the host application (IDE, chatbot platform, enterprise app) where the AI model runs; it manages the connection to each MCP server and communicates via MCP.

Communication happens via structured JSON-RPC 2.0 messages with well-defined schemas, which gives every tool call a predictable shape and standard error handling. This is deliberate: MCP was designed to make AI behavior predictable and auditable in production environments.
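
As a rough illustration of that message flow, the sketch below dispatches a single `tools/call` request and returns either a result or a standard JSON-RPC error. The method name, the `content` / `isError` result shape, and the error codes follow our reading of the MCP and JSON-RPC 2.0 specifications; the handler table and the `searchKnowledgeBase` implementation are placeholders, and a real server would normally be built on an SDK rather than hand-rolled like this.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical tool implementation; a real server would query a knowledge base.
def search_knowledge_base(query: str) -> str:
    return f"Top article for '{query}': How to reset a password"


HANDLERS: Dict[str, Callable[..., str]] = {"searchKnowledgeBase": search_knowledge_base}


def error(req_id: Any, code: int, message: str) -> dict:
    """Build a JSON-RPC 2.0 error response."""
    return {"jsonrpc": "2.0", "id": req_id, "error": {"code": code, "message": message}}


def handle(request: dict) -> dict:
    """Dispatch one JSON-RPC request; only `tools/call` is sketched here."""
    req_id = request.get("id")
    if request.get("method") != "tools/call":
        return error(req_id, -32601, "Method not found")
    params = request.get("params", {})
    tool = HANDLERS.get(params.get("name"))
    if tool is None:
        return error(req_id, -32602, f"Unknown tool: {params.get('name')}")
    try:
        text = tool(**params.get("arguments", {}))
    except TypeError as exc:  # wrong or missing arguments
        return error(req_id, -32602, f"Invalid params: {exc}")
    result = {"content": [{"type": "text", "text": text}], "isError": False}
    return {"jsonrpc": "2.0", "id": req_id, "result": result}


ok = handle({"jsonrpc": "2.0", "id": 7, "method": "tools/call",
             "params": {"name": "searchKnowledgeBase", "arguments": {"query": "password"}}})
bad = handle({"jsonrpc": "2.0", "id": 8, "method": "tools/call",
              "params": {"name": "deleteEverything", "arguments": {}}})
print(json.dumps(ok, indent=2))
print(json.dumps(bad, indent=2))
```

Because every request and response has the same envelope, each call can be logged, replayed, and audited the same way regardless of which system sits behind the tool.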

Why Enterprises Need MCP Now (And Why 2026 Is the Inflection Point)

The strategic case for MCP comes down to five factors.

1. The Limits of Today’s Approaches Are Becoming Clear

According to CData’s 2026 State of AI Data Connectivity Report, 71% of AI teams spend more than a quarter of their implementation time on data integration alone, time not spent building value. This integration tax is unsustainable. Every team rebuilds the same connectors. Every new tool requires fresh engineering effort. The result is fragmentation, inconsistent security postures, and slow time-to-market.

2. Major Vendors Have Standardized (Finally)

For years, enterprise technology lacked a unified standard. Within eighteen months, MCP achieved what few technology standards accomplish: adoption by OpenAI (March 2025), Google DeepMind (April 2025), and Microsoft, with Anthropic donating MCP to the Linux Foundation in December 2025 for vendor-neutral governance.

This is significant. When OpenAI, Google, and Microsoft all ship support for the same protocol, it stops being a curiosity and becomes infrastructure.

3. Enterprises Are Moving From Pilots to Production

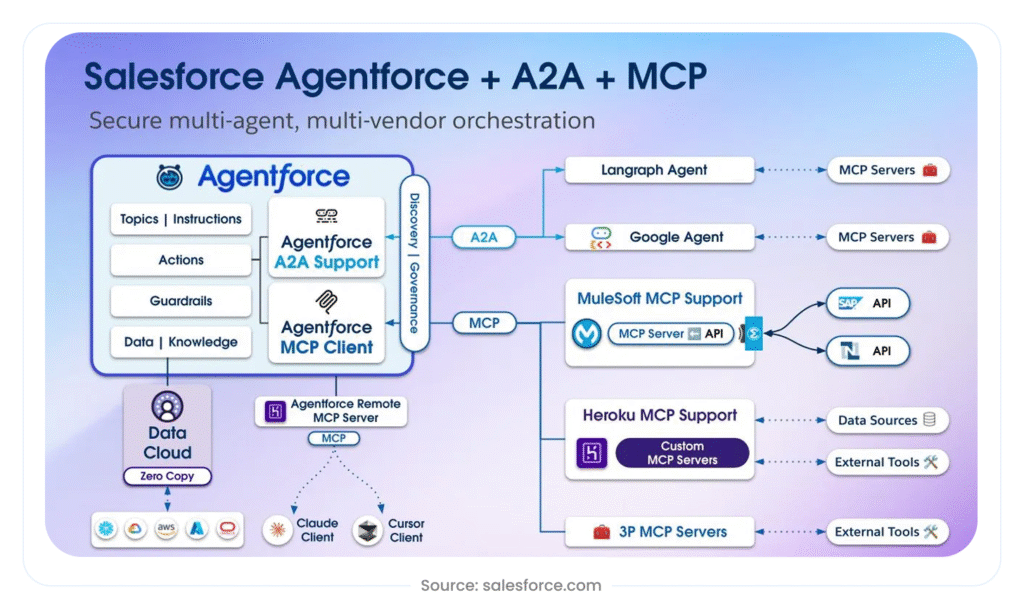

EY, Salesforce, and JPMorgan are orchestrating trillions of data points across thousands of workflows, signaling the mainstreaming of agentic infrastructure for regulated, mission-critical workflows.

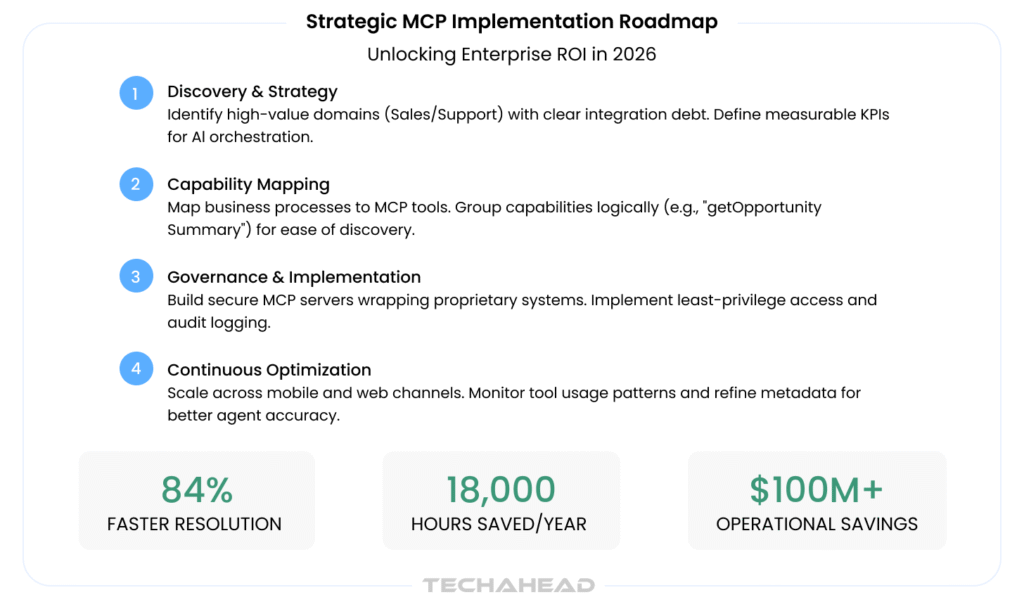

According to vendor reports, Salesforce’s Agentforce deployment at Reddit (utilizing MCP) achieved an 84% reduction in case resolution times and more than $100 million in annual operational savings.

These aren’t isolated experiments. They’re production AI systems handling mission-critical workflows at scale, exactly the environments where MCP’s governance, security, and auditability matter most.

4. Governance and Compliance Are Moving Center Stage

Security researchers, particularly at RSA Conference 2026, are focusing heavily on MCP security risks, with submissions warning that the combination of ease of integration and expansive permissions creates a risk profile that organizations have not previously managed at scale.

Source: TechAhead AI Team

This is actually a sign of maturity. Early-stage technologies tend to treat security as an afterthought. MCP is being evaluated for its security properties precisely because it’s being deployed in real enterprises. The solution is not to avoid MCP; it’s to understand its governance gaps and design systems that close them.

5. The Economics Are Compelling

Forbes saved 18,000 hours annually and doubled landing page conversion rates by removing developer dependency from content creation workflows through MCP-backed AI. This is not theoretical ROI. This is measurable operational impact from day one.

Gartner predicts that 40% of enterprise applications will embed AI agents by the end of 2026, with MCP at the core of this expansion.

Core Enterprise Use Cases for MCP in 2026

MCP isn’t a technology looking for a problem. The use cases are clear and immediately valuable.

Sales and Marketing Copilots

A sales copilot connected via MCP can simultaneously access CRM data (customer history, deal stage, contact info), marketing automation platforms (campaign engagement, content preferences), and internal systems (pricing, inventory, capacity) to generate real-time account briefs, personalized outreach, and next-best-action recommendations.

Before MCP, this required custom integrations. With MCP, a sales team can have a working copilot in weeks.

Customer Support at Scale

MCP enables support AI to access ticketing systems, knowledge bases, product databases, order management systems, and customer communication history to summarize cases, propose resolutions, and even execute actions (escalate to human, create follow-up task) safely and auditably.

Workflow Automation and Multi-System Orchestration

AI agents can safely trigger actions across multiple systems: create Jira tickets, update CRM records, send Slack notifications, generate reports in business intelligence tools. 76% of software providers are already exploring or implementing MCP as their connectivity standard for AI models.

Internal Knowledge and Operations

MCP can serve as a unified “front door” to enterprise knowledge, connecting intranets, SharePoint, document repositories, APIs, and data warehouses with proper access controls, role-based context, and audit trails.

Real-World Evidence: MCP in Enterprise Production

The case for MCP moves beyond theory when you examine actual deployments.

Enterprise Adoption Metrics

Among enterprise AI teams, adoption varies by scale: 89% of enterprise teams (250+ AI engineers) have at least one MCP-backed agent in production, 78% of mid-market teams (50-249 engineers), and 61% of SMBs (10-49 engineers).

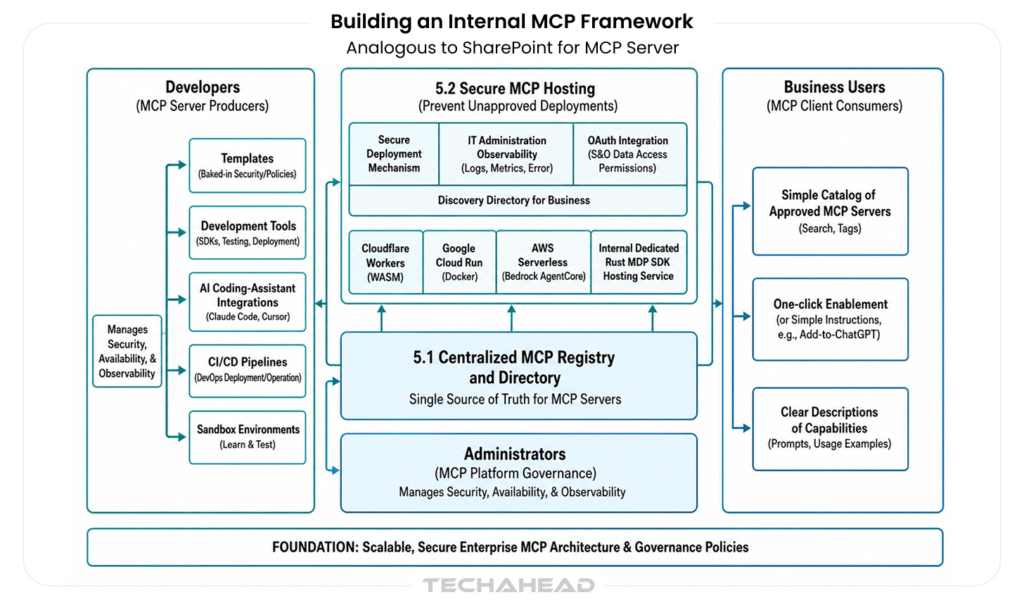

More telling: 41% of enterprise AI teams have built custom internal MCP servers, typically wrapping proprietary systems of record like data warehouses, internal CRMs, or custom workflow engines. This indicates deep adoption, not casual experimentation.

Source: TechAhead AI Team

Server Ecosystem Growth

According to independent census data, there are at least 17,468 MCP servers across registries as of Q1 2026, with the official MCP Registry listing roughly 2,000 first-party vendor servers and MCPMarket.com cataloging additional community implementations.

The distribution is instructive: 80% of public MCP servers implement tools-only capabilities (the practical default), 11% combine tools with data resources, and 4% add prompts for workflow orchestration.

Enterprise Readiness Is Becoming a Priority

The 2026 MCP roadmap explicitly makes enterprise readiness a top priority, acknowledging gaps in audit trails, SSO-integrated authentication, gateway behavior, and configuration portability, exactly the areas that block production deployment at scale.

This signals healthy protocol maturity: the community recognizes what production deployments require and is systematically closing gaps.

Designing MCP-Powered Enterprise Solutions

Building production MCP systems requires a disciplined approach.

Identifying the Right Use Cases

Look for:

- Repetitive tasks that touch multiple systems

- Workflows requiring both retrieval and action (read + write operations)

- Scenarios where governance, auditability, and role-based access are non-negotiable

Mapping Business Capabilities to MCP Tools

Translate business operations into MCP capabilities:

- “Sales needs quick opportunity overviews” becomes an MCP tool like getOpportunitySummary

- “Support needs to resolve tickets faster” becomes tools like searchKnowledgeBase, createTicketNote, escalateToHuman

Well-scoped, clearly named capabilities are maintainable capabilities. Avoid the trap of exposing entire systems as single overly broad tools.
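
One way to keep capabilities well scoped is to define each as a small, typed function and derive the tool descriptor from its signature. The sketch below is a simplified stand-in for what SDK decorators do, not the API of any particular library: the `capability` decorator, the registry, and the placeholder function bodies are all hypothetical, and only four basic parameter types are handled.

```python
import inspect
from typing import Callable, Dict, get_type_hints

# Maps Python annotations to JSON Schema type names (a deliberately small subset).
JSON_TYPES = {str: "string", int: "integer", float: "number", bool: "boolean"}

REGISTRY: Dict[str, dict] = {}


def capability(func: Callable) -> Callable:
    """Register `func` as a tool, deriving its descriptor from the signature."""
    hints = get_type_hints(func)
    hints.pop("return", None)  # the return type is not part of the input schema
    signature = inspect.signature(func)
    required = [
        name for name, param in signature.parameters.items()
        if param.default is inspect.Parameter.empty
    ]
    REGISTRY[func.__name__] = {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "inputSchema": {
            "type": "object",
            "properties": {n: {"type": JSON_TYPES[t]} for n, t in hints.items()},
            "required": required,
        },
    }
    return func


@capability
def getOpportunitySummary(opportunityId: str, includeHistory: bool = False) -> str:
    """Return stage, value, and next step for one sales opportunity."""
    return f"Opportunity {opportunityId}: summary placeholder"


@capability
def escalateToHuman(ticketId: str, reason: str) -> str:
    """Hand a support ticket to a human agent, recording the reason."""
    return f"Ticket {ticketId} escalated: {reason}"


for descriptor in REGISTRY.values():
    print(descriptor["name"], descriptor["inputSchema"]["required"])
```

Each function does one business-level thing, so its name and docstring double as the documentation the agent sees. A single `runCrmQuery(sql)` tool would be shorter to write but far harder to govern.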

Security and Governance by Design

This is non-negotiable:

- Define safe defaults and constraints per capability (rate limits, allowed parameters, role-based access)

- Implement human-in-the-loop patterns for high-risk actions (approvals before final execution)

- Log every tool call immutably with timestamp, user, context, parameters, and outcome

- Enforce least-privilege access: AI agents get access only to tools they demonstrably need
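
The controls above can be sketched as a thin gateway that sits in front of every tool call. This is an illustrative pattern under stated assumptions, not part of the MCP specification: the `Policy` and `Gateway` classes, the role names, and the `issueRefund` tool are hypothetical, rate limiting is left out for brevity, and a production audit log would go to tamper-evident storage rather than an in-memory list.

```python
import json
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Dict, List, Optional, Set


@dataclass
class Policy:
    allowed_roles: Set[str]
    needs_approval: bool = False   # human-in-the-loop for high-risk actions


@dataclass
class Gateway:
    policies: Dict[str, Policy]
    tools: Dict[str, Callable[..., str]]
    audit_log: List[str] = field(default_factory=list)  # append-only in this sketch

    def _record(self, user: str, tool: str, args: dict,
                approved_by: Optional[str], outcome: str) -> None:
        entry = {"ts": datetime.now(timezone.utc).isoformat(), "user": user,
                 "tool": tool, "args": args, "approved_by": approved_by,
                 "outcome": outcome}
        self.audit_log.append(json.dumps(entry, sort_keys=True))

    def call(self, user: str, role: str, tool: str, args: dict,
             approved_by: Optional[str] = None) -> str:
        policy = self.policies.get(tool)
        if policy is None or role not in policy.allowed_roles:
            outcome = "denied"            # least privilege: default is no access
        elif policy.needs_approval and approved_by is None:
            outcome = "pending_approval"  # wait for a human sign-off
        else:
            outcome = self.tools[tool](**args)
        self._record(user, tool, args, approved_by, outcome)
        return outcome


gateway = Gateway(
    policies={"createTicketNote": Policy({"support"}),
              "issueRefund": Policy({"support_lead"}, needs_approval=True)},
    tools={"createTicketNote": lambda ticketId, note: "ok",
           "issueRefund": lambda orderId: "refunded"},
)
results = [
    gateway.call("ana", "support", "createTicketNote", {"ticketId": "T-1", "note": "hi"}),
    gateway.call("ana", "support", "issueRefund", {"orderId": "O-9"}),
    gateway.call("raj", "support_lead", "issueRefund", {"orderId": "O-9"}),
    gateway.call("raj", "support_lead", "issueRefund", {"orderId": "O-9"}, approved_by="mia"),
]
print(results)
print(len(gateway.audit_log), "audit entries")
```

Note that denied and pending calls are logged too. For audit purposes, what an agent tried to do matters as much as what it was allowed to do.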

Challenges, Risks, and Practical Mitigation

MCP adoption is not risk-free. Understanding the challenges is crucial for success.

Technical Challenges

Semantic Modeling Maturity: Teams must learn to think in terms of business capabilities, not low-level APIs. This requires a shift in how organizations approach integration design.

Granularity Decisions: Overly broad capabilities are risky (an agent could do too much). Overly granular capabilities become unmaintainable. Finding the right balance takes discipline and iteration.

Context Window Limitations: MCP servers expose capabilities, but agents still need to fit them within a finite context window. Thoughtful capability design prevents overwhelming agents with too many options.
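
One simple mitigation for the context window problem is to hand the agent only the tools relevant to the task, capped by a rough token budget. The sketch below assumes a crude four-characters-per-token estimate, which is a heuristic rather than a real tokenizer; the function names, the budget value, and the placeholder descriptions are hypothetical.

```python
import json
from typing import List, Set


def estimate_tokens(descriptor: dict) -> int:
    """Rough size estimate: about four characters per token (a heuristic)."""
    return len(json.dumps(descriptor)) // 4


def select_tools(descriptors: List[dict], relevant: Set[str], budget: int) -> List[dict]:
    """Keep relevant tools, in listed order, while they fit the token budget."""
    chosen, used = [], 0
    for descriptor in descriptors:
        cost = estimate_tokens(descriptor)
        if descriptor["name"] in relevant and used + cost <= budget:
            chosen.append(descriptor)
            used += cost
    return chosen


# Placeholder descriptors; real descriptions and schemas would vary in length.
all_tools = [
    {"name": name, "description": "x" * 200}
    for name in ["getOpportunitySummary", "searchKnowledgeBase",
                 "createTicketNote", "escalateToHuman"]
]
support_tools = {"searchKnowledgeBase", "createTicketNote", "escalateToHuman"}

selected = select_tools(all_tools, support_tools, budget=150)
print([tool["name"] for tool in selected])
```

Ordering the catalog by importance matters here, since tools listed earlier claim the budget first.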

Organizational Challenges

Staggeringly, 86-89% of AI agent pilots fail before production, overwhelmingly due to gaps in governance, inability to inventory and trace agent actions, insufficient monitoring, and friction across organizational boundaries.

This is not an MCP-specific problem. It’s a general AI deployment problem that MCP can help solve (through governance-by-design) but doesn’t eliminate. Success requires alignment between product, engineering, security, and compliance teams.

Best Practices From the Field

- Start narrow: Pick one high-value domain, build MCP servers and agents there, measure results. Expand only after proving value.

- Standardize naming and documentation: Capability naming conventions matter. Document every tool’s purpose, parameters, constraints, and side effects.

- Invest in observability: Build robust telemetry and feedback loops. You need to know how agents are using tools in production.

- Design for change: Business rules evolve. Plan for capability versioning and graceful deprecation from the start.
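
Designing for change can be as simple as keeping more than one version of a capability registered and flagging the old one before removing it. The following is a minimal sketch of that idea using Python's standard `warnings` module; the `CapabilityCatalog` class and the version numbering scheme are assumptions made for illustration, not something MCP prescribes.

```python
import warnings
from typing import Callable, Dict, Optional, Tuple


class CapabilityCatalog:
    """Registry keyed by (name, major version); names here are illustrative."""

    def __init__(self) -> None:
        self._tools: Dict[Tuple[str, int], Callable[..., str]] = {}
        self._deprecated: Dict[Tuple[str, int], str] = {}

    def register(self, name: str, version: int, func: Callable[..., str]) -> None:
        self._tools[(name, version)] = func

    def deprecate(self, name: str, version: int, note: str) -> None:
        self._deprecated[(name, version)] = note

    def latest(self, name: str) -> int:
        return max(v for (n, v) in self._tools if n == name)

    def call(self, name: str, version: Optional[int] = None, **args) -> str:
        key = (name, version if version is not None else self.latest(name))
        if key in self._deprecated:
            # Old callers keep working but are told to migrate.
            warnings.warn(f"{name} v{key[1]} is deprecated: {self._deprecated[key]}",
                          DeprecationWarning, stacklevel=2)
        return self._tools[key](**args)


catalog = CapabilityCatalog()
catalog.register("getOpportunitySummary", 1, lambda opportunityId: f"v1 summary for {opportunityId}")
catalog.register("getOpportunitySummary", 2, lambda opportunityId: f"v2 summary for {opportunityId}")
catalog.deprecate("getOpportunitySummary", 1, "use v2, which adds deal stage")

print(catalog.call("getOpportunitySummary", opportunityId="O-1"))
```

Logging each deprecation warning alongside the calling agent gives you the list of consumers to migrate before a version is finally removed.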

How MCP Fits Into TechAhead’s Enterprise AI Approach

At TechAhead, we’ve spent 16+ years building production-grade mobile and web applications that integrate complex backends, third-party APIs, and enterprise systems. We’ve learned what it takes to ship secure, scalable systems that last.

MCP is a natural extension of this expertise into the AI layer. Rather than treating AI as a black box that needs custom connections to every system, MCP lets us architect AI-powered applications the same way we architect enterprise systems: with clear contracts, standardized interfaces, and governance built in.

The TechAhead MCP Engagement Model

We work with enterprise teams across four phases:

- Discovery & Strategy: Identify high-value AI use cases, map existing systems and data sources, understand constraints (compliance, security, organizational).

- Architecture & MCP Design: Design the capability model, define security and authentication patterns, plan integration architecture.

- Implementation: Build MCP servers wrapping your systems, integrate with chosen LLMs (Claude, GPT, Gemini, or multi-model), develop AI-powered apps and copilots.

- Pilot & Scale: Launch pilots with real users, collect data and feedback, refine capabilities, roll out to broader teams.

Solution Blueprints We Deliver

- AI Copilots for Mobile and Web: Field teams, sales, operations, and customer-facing applications that access inventory, orders, customer data, and support systems securely through MCP.

- Customer-Facing AI Assistants: In-app and web-based assistants that check order status, modify bookings, provide personalized recommendations, and handle support inquiries.

- Internal Knowledge and Operations Assistants: Accessible across web, mobile, and collaboration tools (Slack, Teams, email), providing unified access to enterprise knowledge and enabling workflow automation.

Getting Started: A Roadmap for Your Organization

If MCP is new to your strategy, here’s how to move forward systematically.

Step 1: Assess Readiness and Choose a Pilot Domain

Evaluate your current AI initiatives, data landscape, and system inventory. Select a pilot domain with these properties:

- Clear, measurable KPIs (time savings, cost reduction, quality improvement)

- Manageable technical scope (don’t try to integrate 50 systems on day one)

- Manageable organizational scope (one team, not enterprise-wide)

- Low cost of failure if the pilot does not work out

Step 2: Design Your Capability Model

Bring together business stakeholders (the users who will benefit) and technical teams. List the key actions and data needs. Group them into 5-15 MCP capabilities. Prioritize based on value and implementation complexity.
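
A lightweight way to run the prioritization step is to score each candidate capability for value and complexity and rank by the ratio. The sketch below is one possible scoring scheme, not a prescribed method; the 1-to-5 scales and the example scores are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    value: int        # 1 (low) to 5 (high), scored by business stakeholders
    complexity: int   # 1 (easy) to 5 (hard), scored by engineering

    @property
    def priority(self) -> float:
        """Higher is better: more value per unit of implementation effort."""
        return self.value / self.complexity


# Example scores only; replace with your own workshop output.
candidates = [
    Candidate("getOpportunitySummary", value=5, complexity=2),
    Candidate("searchKnowledgeBase", value=4, complexity=1),
    Candidate("createTicketNote", value=3, complexity=2),
    Candidate("escalateToHuman", value=4, complexity=3),
]

ranked = sorted(candidates, key=lambda c: c.priority, reverse=True)
for candidate in ranked:
    print(f"{candidate.name}: {candidate.priority:.2f}")
```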

Step 3: Implement, Test, and Iterate

Build an initial MCP server (using FastMCP, the TypeScript MCP SDK, or similar). Wire it to your chosen LLM. Test with real users. Conduct security and performance reviews. Iterate on tool definitions and metadata.
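
When iterating on tool definitions, a small automated check can catch metadata gaps before users or agents do. The sketch below lints a tool descriptor for a few common problems; the rules and the 20-character threshold are arbitrary choices for illustration, and `lint_tool` is a hypothetical helper rather than part of any SDK.

```python
from typing import List


def lint_tool(descriptor: dict) -> List[str]:
    """Return human-readable problems found in one tool descriptor."""
    problems = []
    name = descriptor.get("name", "")
    if not name:
        problems.append("missing name")
    if len(descriptor.get("description", "")) < 20:
        problems.append(f"{name or '<unnamed>'}: description is missing or too short")
    schema = descriptor.get("inputSchema", {})
    properties = schema.get("properties", {})
    for required in schema.get("required", []):
        if required not in properties:
            problems.append(f"{name}: required parameter '{required}' is not declared")
    for param, spec in properties.items():
        if "description" not in spec:
            problems.append(f"{name}: parameter '{param}' has no description")
    return problems


good = {"name": "searchKnowledgeBase",
        "description": "Search support articles and return the best match.",
        "inputSchema": {"type": "object",
                        "properties": {"query": {"type": "string",
                                                 "description": "Search text"}},
                        "required": ["query"]}}
bad = {"name": "doStuff", "description": "Does stuff",
       "inputSchema": {"type": "object",
                       "properties": {"x": {"type": "string"}},
                       "required": ["x", "y"]}}

for tool in (good, bad):
    print(tool["name"], lint_tool(tool))
```

Running a check like this in CI keeps descriptions and schemas from drifting as the capability set grows.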

Step 4: Scale Across Teams and Channels

Reuse MCP capabilities across multiple AI surfaces: mobile apps, web interfaces, internal tools, Slack bots. Build an internal capability catalog so teams can discover and consume tools.

The Convergence: MCP and Enterprise Infrastructure

MCP isn’t operating in isolation. It’s converging with other critical patterns.

API Management: MCP works alongside API gateways and service meshes, not instead of them. Smart organizations treat MCP as an AI-facing layer above existing API management infrastructure.

Event-Driven Architectures: MCP integrates naturally with event-driven systems, enabling AI agents to respond to real-time system events.

Compliance and Observability Platforms: Enterprise identity management, audit logging, and security information and event management (SIEM) systems are evolving to support MCP-specific controls and logging.

Gartner research indicates that 87% of IT leaders now prioritize interoperability for agentic orchestration, with 51% of enterprises preferring hybrid stacks that layer open protocols like MCP on top of extensible, vendor-managed orchestration.

Conclusion: The Competitive Edge Belongs to Early Adopters

The strategic reality is clear: enterprises that adopt MCP early (if not today, then within the next 12-18 months) will have significant competitive advantages.

They will innovate faster (building AI-powered features in weeks, not months). They will integrate more securely (governance built into the architecture). They will reduce vendor lock-in (MCP-based applications can switch between LLM providers more easily). They will scale more confidently (because they understand and can audit every AI action).

The inverse is equally true. Organizations that delay adoption will accumulate integration debt, struggle with vendor lock-in, and find it increasingly difficult to compete on AI-driven innovation.

Where To Start

- Identify one high-value AI use case in your organization where multiple systems need to work together: a sales copilot, customer support assistant, or internal knowledge tool.

- Talk to an experienced partner who understands both enterprise architecture and AI integration. (Hint: we’d love to help.)

- Build a proof-of-concept MCP server wrapping your critical system. Test it with a real LLM and real users.

- Measure the impact: time saved, quality improved, costs reduced. Use this to justify broader adoption.

The future of enterprise AI isn’t in better models. We’re past the point where model performance is the constraint. The future belongs to organizations that figure out how to connect AI to real business context reliably, securely, and at scale.

MCP is the infrastructure that makes that possible.

References & Further Reading

- Anthropic. (2024). “Model Context Protocol: Specification and Implementation Guide.”

- CData Software. (2026). “State of AI Data Connectivity Report: 2026 Outlook.”

- Gartner. (2026). “Market Guide for Enterprise AI Agents and Agentic Orchestration.”

- Imagine Works. (2026). “Model Context Protocol for Enterprise Leaders: Architecture, Procurement, and Risk.”

- Linux Foundation. (2025). “Agentic AI Foundation: Governing Open Standards for AI Integration.”

- WorkOS. (2026). “MCP’s 2026 Roadmap: Enterprise Readiness and the Path to Production.”

MCP is an open standard enabling AI systems to securely access and share contextual information across enterprise applications seamlessly.

MCP provides standardized tool discovery, semantic metadata, secure context propagation, and built-in governance instead of ad hoc custom connections.

Faster innovation cycles, reduced integration costs, improved security, better governance, reduced vendor lock-in, and measurable operational impact across teams.

Four phases spanning sixteen weeks: assess (four weeks), design (four weeks), build (eight weeks), launch and scale thereafter.

EY, JPMorgan, Salesforce, Reddit, Amazon, Block, and Bloomberg are all orchestrating production MCP deployments at scale.