Every enterprise rushing to deploy AI is asking the same two questions: how do we keep our data secure, and how do we make sure this AI does not become a liability?

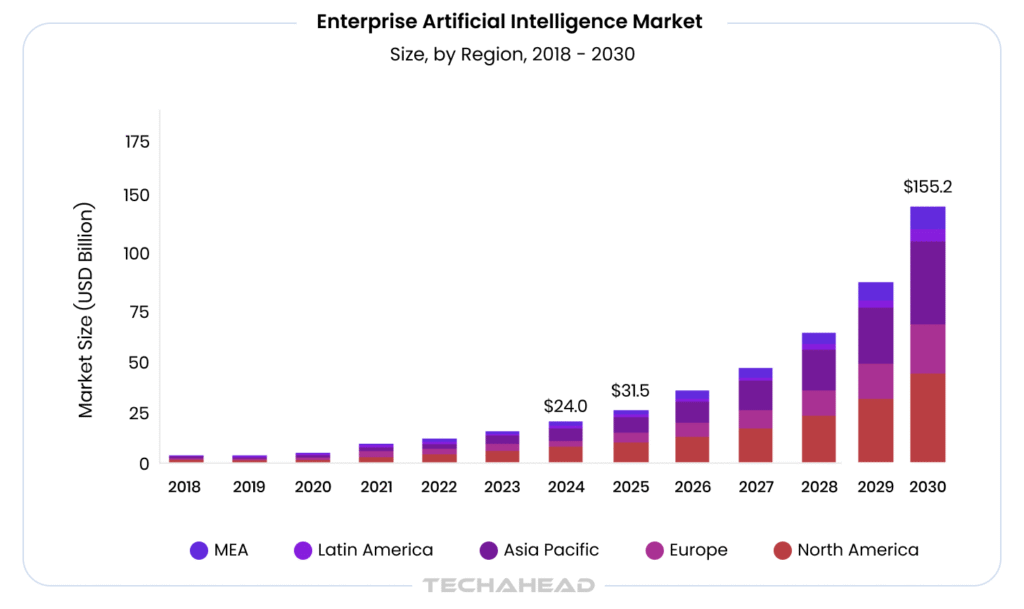

Grand View Research estimates the global enterprise AI market at USD 23.95 billion in 2024, forecasting growth to USD 155.21 billion by 2030 at a 37.6% CAGR. That is an enormous amount of capital flowing into systems that most enterprises cannot fully ‘see, explain, or defend’ when something goes wrong.

And when something does go wrong (a biased decision, a data breach, a compliance violation), regulators do not accept “we did not know” as an answer. They ask for documentation. They ask for proof. They ask for an audit trail. Most enterprises do not have one.

Security failures and compliance penalties do not announce themselves in advance.

In this blog, we explore why AI audit trails are the one infrastructure decision no enterprise can afford to ignore: what happens without them, which industries are already legally required to have them, and how to build one that holds up under real regulatory and legal scrutiny.

Key Takeaways

- Audit trails transform “black box” AI into transparent, defensible business assets.

- Audit trails act as forensic evidence to identify prompt injection and security breaches.

- Model drift is caught early by continuously monitoring performance through detailed logs.

- High-risk sectors like healthcare and finance face mandatory AI audit requirements.

- Scalability is only sustainable when governance scales alongside your growing AI infrastructure.

What is an AI Audit Trail?

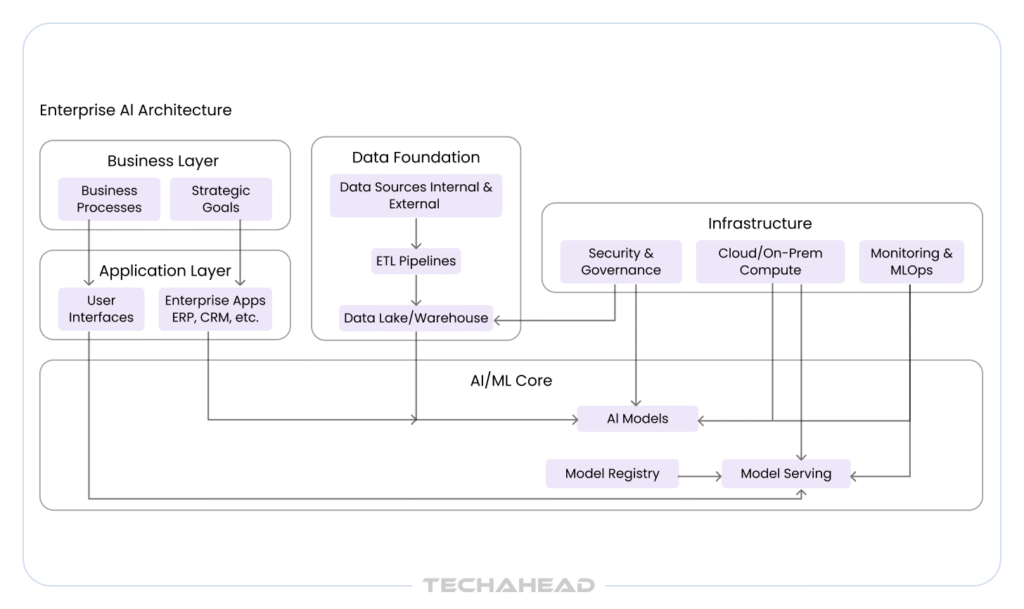

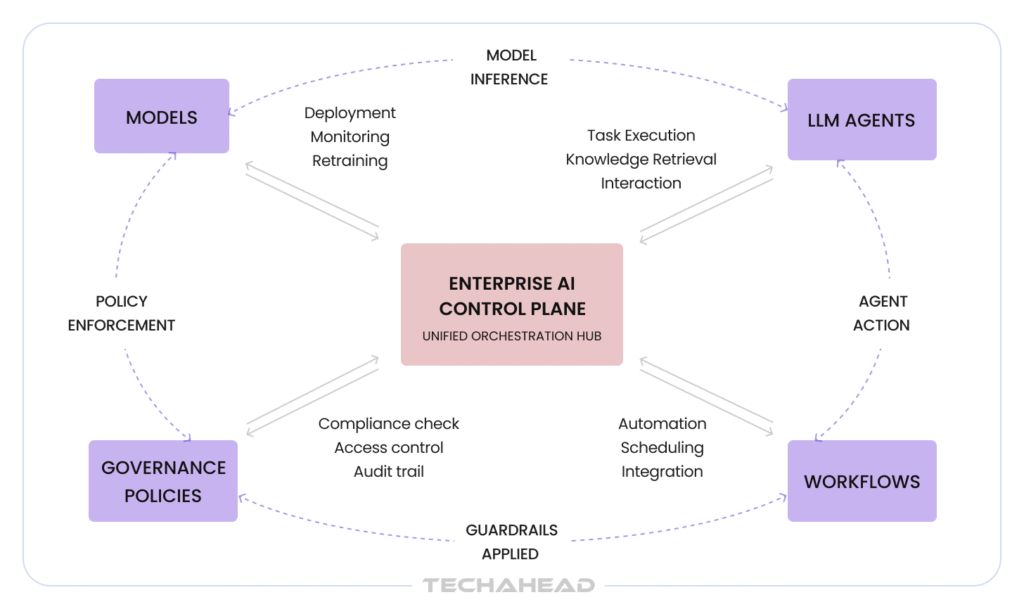

An AI audit trail is a chronological record of every decision, action, and data interaction made by an AI system. It captures who accessed the system, what inputs were used, what decisions were made (for LLMs, not just the decision but also the reasoning path, or “chain of thought”), and when each event occurred, creating a complete, traceable history of your AI in action.
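As a rough illustration of the fields described above, a single audit record could be modeled as follows. This is a minimal sketch, not a standard schema: the `AuditRecord` class, its field names, and the sample values are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone
from typing import Any, Dict, Optional


@dataclass(frozen=True)
class AuditRecord:
    """One entry in an AI audit trail (hypothetical schema for illustration)."""

    actor: str                        # who accessed the system
    model_version: str                # which model produced the decision
    inputs: Dict[str, Any]            # what inputs were used
    decision: str                     # what was decided
    reasoning: Optional[str] = None   # reasoning path, e.g. for LLMs
    timestamp: str = field(           # when the event occurred (UTC, ISO 8601)
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )

    def to_json(self) -> str:
        # Sorted keys keep the serialized form stable across runs.
        return json.dumps(asdict(self), sort_keys=True)


record = AuditRecord(
    actor="analyst-17",
    model_version="credit-model-1.4",
    inputs={"income": 52000, "term_months": 36},
    decision="approved",
    reasoning="income above threshold; no adverse history",
)
print(record.to_json())
```

Freezing the dataclass is a small design choice that prevents accidental field reassignment after a record is created; it does not by itself make the stored log immutable.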

Why does it matter for enterprises?

For enterprises, an AI system without an audit trail is essentially a ‘black box’. You cannot see what it decided or why it decided it. In regulated industries, that invisibility is a ‘compliance liability’.

Audit trails matter for three reasons:

Regulatory Compliance

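Regulations such as GDPR, HIPAA, and the EU AI Act require enterprises to demonstrate accountability over the systems that process personal data or make automated decisions, and assurance standards such as SOC 2 and ISO/IEC 42001 expect the same kind of evidence.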

Security & Risk Management

Audit logs are the first place investigators look when a data breach, unauthorized access, or model manipulation is suspected.

Business Accountability

When an AI system makes a decision that impacts a customer, employee, or financial outcome, enterprises need documented evidence to defend that decision.

Without this layer of visibility, enterprises are building AI on a foundation they cannot inspect or trust when it matters most.

What Happens When Enterprise AI Operates Without an Audit Trail?

Operating enterprise AI without an audit trail is not a technical oversight; it is a business risk that compounds quietly until something goes wrong. And when it does, the damage spreads fast.

You Cannot Explain Your Own AI Decisions

When a customer is denied a loan, or a hiring decision is made by an AI system, regulators and legal teams will ask why. Without an audit trail, there is no answer. That silence alone can trigger investigations or lawsuits.

Security Breaches Become Impossible to Trace

Without a detailed activity log, enterprises cannot identify when unauthorized access occurred, which data was exposed, or how far the breach extended. IBM's Cost of a Data Breach Report 2024 puts the global average cost of a data breach at $4.88 million, and without audit trails, containment becomes harder.

Compliance Violations Go Undetected Until It Is Too Late

Regulatory frameworks like GDPR, HIPAA, and the EU AI Act require documented evidence of AI accountability. Operating without audit trails does not just risk fines; it signals to regulators that governance was never a priority.

The Role of Audit Trails in AI Compliance and Risk Management

Compliance is no longer a checkbox exercise for enterprise AI. It is an ongoing operational responsibility, and audit trails are a crucial part of it.

Meeting Regulatory Requirements Across Multiple Frameworks

Enterprise AI systems operate under a complex web of overlapping regulations. Each one demands documented evidence of accountability, and the penalties for gaps are significant.

GDPR and the Right to Explanation

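Under Article 22 of GDPR, individuals have the right not to be subject to solely automated decisions that significantly affect them, and where such decisions are permitted, the right to obtain human intervention and contest the outcome. Without an audit trail, explaining how a contested decision was reached is practically impossible. Fines reach up to €20 million or 4% of global annual turnover, whichever is higher.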

HIPAA and Healthcare AI Accountability

Healthcare enterprises deploying AI for patient data must maintain detailed access and activity logs under HIPAA. The HHS Office for Civil Rights issued over $135 million in HIPAA penalties between 2020 and 2023, a significant portion tied to insufficient documentation.

The EU AI Act and High-Risk AI Systems

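AI systems used in hiring, credit scoring, and healthcare are legally classified as high-risk under the EU AI Act. Under the final text of the Act, fines reach up to €35 million or 7% of global annual turnover for prohibited practices, and up to €15 million or 3% for breaches of most other obligations, including those that apply to high-risk systems.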

SOC 2 and Enterprise Data Trust

SOC 2 compliance requires enterprises to demonstrate continuous monitoring of data access and system activity. Audit trails are the primary mechanism auditors use to verify these controls are functioning.

Managing Enterprise Risk Through Continuous Monitoring

Compliance is reactive by nature; audit trails make risk management proactive.

Identifying Model Drift Before It Creates Liability

AI models degrade as real-world data shifts. Audit trails supply the logged inputs and outputs needed to track model performance continuously and flag drift before it produces biased or non-compliant outputs. Gartner frames this risk through AI TRiSM (AI Trust, Risk, and Security Management), noting that organizations that do not manage these risks will see a 50% lower adoption rate and significant “value erosion.”
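One common way to turn logged model scores into a drift signal is the Population Stability Index (PSI), which compares the distribution of recent scores against a baseline. The sketch below is illustrative only: the function names, bin edges, and sample scores are assumptions, and the 0.2 alert threshold is a widely cited rule of thumb rather than a regulatory requirement.

```python
import math
from typing import List, Sequence


def bin_fractions(scores: Sequence[float], edges: Sequence[float]) -> List[float]:
    """Fraction of scores in each bin; edges are ascending, last bin is closed."""
    counts = [0] * (len(edges) - 1)
    for s in scores:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= s < edges[i + 1] or (is_last and s == edges[-1]):
                counts[i] += 1
                break
    total = len(scores)
    # A small floor avoids log(0) when a bin is empty.
    return [max(c / total, 1e-6) for c in counts]


def population_stability_index(
    baseline: Sequence[float], current: Sequence[float], edges: Sequence[float]
) -> float:
    """PSI = sum over bins of (actual - expected) * ln(actual / expected)."""
    expected = bin_fractions(baseline, edges)
    actual = bin_fractions(current, edges)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


edges = [0.0, 0.25, 0.5, 0.75, 1.0]
baseline_scores = [0.1, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7, 0.8, 0.9]
recent_scores = [0.6, 0.7, 0.8, 0.8, 0.9, 0.9, 0.95, 0.95, 1.0]

psi = population_stability_index(baseline_scores, recent_scores, edges)
drift_alert = psi > 0.2  # rule-of-thumb threshold, an assumption
print(f"PSI={psi:.3f}, drift_alert={drift_alert}")
```

A check like this only works if the audit trail keeps the model scores themselves, not just the fact that a prediction was made.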

Detecting Insider Threats Early

Not all AI security risks come from outside. Audit trails monitor internal access patterns, flagging unusual employee behavior before it escalates into a data incident or compliance violation.

Preventing Biased AI Outputs

Audit trails create a record of every AI decision. This allows compliance teams to identify patterns of biased outputs across demographic groups, catching liability risks before they reach regulators or courts.
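As one example of such a pattern check, logged decisions can be grouped by demographic attribute and compared using a disparate impact ratio. The sketch below uses the "four-fifths" screening heuristic (a ratio below 0.8 warrants review); the function names and sample data are hypothetical, and a real fairness assessment would need more than this single metric.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def approval_rates(decisions: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Approval rate per group from (group, approved) pairs in the audit log."""
    totals: Dict[str, int] = defaultdict(int)
    approved: Dict[str, int] = defaultdict(int)
    for group, ok in decisions:
        totals[group] += 1
        approved[group] += int(ok)
    return {g: approved[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates: Dict[str, float]) -> float:
    """Lowest group approval rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical logged outcomes: group A approved 8 of 10, group B 5 of 10.
logged = (
    [("A", True)] * 8 + [("A", False)] * 2
    + [("B", True)] * 5 + [("B", False)] * 5
)

rates = approval_rates(logged)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # four-fifths heuristic, a screening aid only
print(rates, round(ratio, 3), needs_review)
```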

Building a Defensible Governance Record

When regulators investigate, documentation is everything.

Lower Penalties During Regulatory Investigations

A 2023 Deloitte report found that organizations with mature AI governance frameworks were 2.3x less likely to face regulatory action than those without documented oversight.

Demonstrating Accountability to Enterprise Clients

Large enterprise clients increasingly require proof of AI governance before signing contracts. A documented audit trail infrastructure signals operational maturity, directly influencing partnership decisions.

Reducing Financial Exposure Across the Business

Strengthen your enterprise resilience by embedding AI governance and enabling proactive decision-making with transparent, auditable systems across operations.

Lower Cyber Insurance Premiums

Cyber insurers are factoring AI governance maturity into premium calculations. Enterprises with documented audit trail infrastructure demonstrate lower risk profiles, directly reducing insurance costs.

Avoiding the True Cost of Non-Compliance

The Ponemon Institute found that the average cost of non-compliance for enterprises is $14.82 million annually, nearly 3x higher than the cost of maintaining compliance infrastructure in the first place.

Supporting Internal Audit and Governance Teams

Enterprise AI governance does not live in one department. It spans legal, compliance, IT, and operations, and audit trails give every team the visibility they need.

Giving Legal Teams Evidence They Can Actually Use

When litigation arises around an AI-driven decision, legal teams need timestamped, tamper-evident records. Audit trails provide documentation that can be presented in court to show that the AI system operated within defined parameters at every stage.
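One widely used technique for making records tamper-evident is a hash chain, in which every entry stores a hash of the entry before it, so that editing any earlier record invalidates everything after it. The following is a minimal sketch under stated assumptions: the function names and payload fields are hypothetical, and a production system would also need secure storage, trusted timestamps, and access controls.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(prev_hash: str, payload: Dict[str, Any]) -> str:
    # Sorted keys make the serialization, and therefore the hash, deterministic.
    body = json.dumps(payload, sort_keys=True)
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


def append_entry(chain: List[Dict[str, Any]], payload: Dict[str, Any]) -> None:
    """Append a payload, linking it to the hash of the previous entry."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    chain.append(
        {"payload": payload, "prev_hash": prev_hash, "hash": _digest(prev_hash, payload)}
    )


def verify_chain(chain: List[Dict[str, Any]]) -> bool:
    """Recompute every hash; any edited or reordered entry breaks the chain."""
    prev_hash = GENESIS_HASH
    for entry in chain:
        if entry["prev_hash"] != prev_hash:
            return False
        if entry["hash"] != _digest(prev_hash, entry["payload"]):
            return False
        prev_hash = entry["hash"]
    return True


chain: List[Dict[str, Any]] = []
append_entry(chain, {"ts": "2025-01-01T09:00:00Z", "decision": "denied"})
append_entry(chain, {"ts": "2025-01-01T09:05:00Z", "decision": "approved"})
intact_before = verify_chain(chain)

chain[0]["payload"]["decision"] = "approved"  # simulate after-the-fact tampering
intact_after = verify_chain(chain)
print(intact_before, intact_after)
```

A hash chain detects tampering rather than preventing it, which is why "tamper-evident" is the more accurate description of what this technique offers.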

Empowering Compliance Officers With Real-Time Visibility

Rather than waiting for quarterly reviews, compliance officers can monitor AI activity continuously. Real-time audit data allows teams to catch policy violations the moment they occur, not months later during an annual audit cycle.

Aligning IT and Business Teams Around a Single Source of Truth

Audit trails eliminate the ambiguity that creates conflict between IT and business units. Every stakeholder works from the same documented record, which reduces internal disputes and accelerates governance decisions.

Strengthening Third-Party and Vendor Risk Management

Enterprises rarely operate AI in isolation. Third-party integrations, vendor models, and external data sources all introduce risks that audit trails help control.

Monitoring Third-Party AI Model Behavior

When enterprises deploy AI models built by external vendors, audit trails track how those models behave inside your environment. Any deviation from expected behavior can be flagged immediately, before it creates downstream compliance or security issues.

Maintaining Accountability Across the AI Supply Chain

The EU AI Act specifically holds enterprises accountable for the behavior of third-party AI components integrated into their systems. A 2023 McKinsey report found that 60% of enterprises have limited visibility into the AI models embedded in their vendor software, a blind spot that audit trails directly address.

Enforcing Contractual AI Governance Obligations

Many enterprise contracts now include explicit AI governance clauses. Audit trails provide the documented proof needed to demonstrate compliance with these contractual obligations.

Preparing for the Future of AI Regulation

Regulatory requirements around AI are tightening globally, and the pace is accelerating.

Staying Ahead of Emerging Legislation

Beyond GDPR and the EU AI Act, countries including the United States, Canada, and India are actively developing national AI governance frameworks. Enterprises that build audit trail infrastructure today will be better positioned to meet tomorrow’s requirements without costly system overhauls.

Building an Audit-Ready AI Culture

Regulation aside, enterprises that embed audit trail thinking into their AI development process build stronger, more accountable systems by default. According to PwC’s 2023 AI Predictions report, 75% of enterprise executives believe AI governance will become a direct competitive differentiator within the next three years.

Future-Proofing Your AI Investment

Every AI system your enterprise builds today will eventually face stricter regulatory scrutiny. Building audit trail capabilities from the ground up protects the long-term value of your AI investment.

Industries Where AI Audit Trails are Not Optional; They are Mandatory

In regulated industries, audit trails transform “black box” risks into defensible assets. Without them, enterprises face legal exposure and total loss of trust in their AI deployments.

Healthcare & Life Sciences

HIPAA requires strict documentation of every system that accesses patient data. In the healthcare industry, AI used in diagnostics, treatment recommendations, or billing automation must maintain complete activity logs. The HHS Office for Civil Rights actively investigates audit failures, and penalties can reach millions of dollars.

Fintech & Banking

Financial regulators including the SEC, FCA, and Basel Committee expect documented evidence behind AI-driven decisions influencing credit, trading, or fraud detection. The EU’s Digital Operational Resilience Act (DORA) imposes ICT risk management and incident logging obligations on financial institutions operating in European markets, and those obligations extend to the AI systems they run.

Legal & Compliance Services

Law firms and compliance-driven enterprises using AI for contract analysis, case research, or regulatory reporting carry direct professional liability for AI outputs. Courts increasingly demand documented proof of how AI-assisted decisions were reached, making audit trails essential for legal defensibility and professional indemnity insurance coverage.

Government & Public Sector

AI systems deployed in public services (benefits processing, law enforcement, or citizen data management) operate under strict transparency mandates. The EU AI Act classifies many government AI applications as high-risk, requiring audit logs as a baseline legal requirement before deployment is even permitted.

Insurance

AI used in underwriting, claims processing, and risk assessment is under direct regulatory scrutiny across US state insurance commissions and European supervisory authorities. Insurers must demonstrate that AI-driven decisions are fair, explainable, and fully documented.

AI Audit Trails vs. Traditional IT Audit Logs: Key Differences Explained

| Criteria | Traditional IT Audit Logs | AI Audit Trails |
| --- | --- | --- |
| Primary Purpose | Track system access, user activity, and network events | Track AI decisions, model behavior, data inputs, and outputs |
| What They Record | Login attempts, file access, system changes, error logs | Model predictions, training data usage, decision logic, confidence scores |
| Decision Visibility | Records who did what and when | Records why an AI system made a specific decision |
| Data Complexity | Structured, straightforward log entries | Complex, multi-layered records covering data pipelines and model interactions |
| Regulatory Scope | SOC 2, ISO 27001, general IT compliance | GDPR Article 22, EU AI Act, HIPAA, DORA, sector-specific AI regulations |
| Real-Time Monitoring | Flags system anomalies and unauthorized access | Flags model drift, biased outputs, and unexpected AI behavior |
| Explainability Requirement | Not required: records actions, not reasoning | Required: must explain AI decision logic to regulators and courts |
| Tamper Detection | Detects unauthorized system changes | Detects unauthorized model manipulation and data poisoning attempts |
| Litigation Use | Supports cybersecurity and access control cases | Supports AI liability, discrimination, and regulatory compliance cases |

Common Mistakes Enterprises Make When Setting Up AI Audit Trails

Setting up an AI audit trail is not simply a matter of turning on logging. Most enterprises make crucial mistakes during setup that leave significant compliance and security gaps.

Logging System Events Instead of AI Decisions

This is the most common mistake. Enterprises apply traditional IT logging to AI systems and assume the job is done. Traditional logs record what happened; AI audit trails must record why a decision was made, what data influenced it, and what the model’s confidence level was. That is why explanation artifacts such as SHAP or LIME feature-importance values, or the chain of thought (CoT) for LLMs, belong in the record alongside the decision itself.
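To make the difference concrete, the sketch below wraps a prediction function so that each call records the inputs, decision, confidence, and per-feature contributions, rather than only the fact that a call happened. Everything here is hypothetical: the `audited` decorator, the in-memory `AUDIT_LOG`, and the toy linear scorer, whose contributions merely stand in for values a real SHAP or LIME explainer would produce.

```python
import functools
import json
import time
from typing import Any, Callable, Dict, List

AUDIT_LOG: List[str] = []  # stand-in for a durable, append-only store


def audited(model_version: str) -> Callable:
    """Decorator that records the decision context of every prediction."""

    def decorator(predict: Callable[[Dict[str, float]], Dict[str, Any]]) -> Callable:
        @functools.wraps(predict)
        def wrapper(features: Dict[str, float]) -> Dict[str, Any]:
            result = predict(features)
            AUDIT_LOG.append(json.dumps({
                "ts": time.time(),
                "model_version": model_version,
                "inputs": features,
                "decision": result["decision"],
                "confidence": result["confidence"],
                "attributions": result.get("attributions"),
            }, sort_keys=True))
            return result

        return wrapper

    return decorator


@audited(model_version="toy-scorer-0.1")
def score_loan(features: Dict[str, float]) -> Dict[str, Any]:
    # Toy linear scorer; contributions are a placeholder for explainer output.
    weights = {"income_k": 0.01, "late_payments": -0.2}
    contributions = {k: weights[k] * features[k] for k in weights}
    score = 0.5 + sum(contributions.values())
    return {
        "decision": "approve" if score >= 0.5 else "deny",
        "confidence": max(0.0, min(1.0, score)),
        "attributions": contributions,
    }


result = score_loan({"income_k": 40, "late_payments": 3})
print(result["decision"], len(AUDIT_LOG))
```

Wrapping the prediction call keeps the audit logic in one place, so individual models cannot forget to log.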

Building Audit Trails After Deployment

Retrofitting audit capabilities into a live AI system is significantly more expensive and technically complex than building them in from the start. By the time most enterprises realize this, the system is already in production.

No Defined Data Retention Policy

Audit trails generate enormous volumes of data. Without a clear retention policy aligned to regulatory requirements (GDPR, for example, imposes storage limitation obligations), enterprises either store too little to satisfy regulators or too much to manage securely.
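A retention policy can be expressed as data and applied mechanically. In the sketch below, the categories and retention periods are placeholders only; actual periods have to come from legal and compliance review, and the record layout is a hypothetical one.

```python
from datetime import datetime, timedelta, timezone
from typing import Any, Dict, List

# Hypothetical retention periods in days, for illustration only.
RETENTION_DAYS: Dict[str, int] = {
    "decision_log": 365 * 6,  # long-lived evidence of decisions
    "debug_trace": 30,        # short-lived diagnostic detail
}


def expired_records(records: List[Dict[str, Any]], now: datetime) -> List[Dict[str, Any]]:
    """Return the records whose age exceeds their category's retention period."""
    expired = []
    for record in records:
        limit = timedelta(days=RETENTION_DAYS[record["category"]])
        if now - record["created_at"] > limit:
            expired.append(record)
    return expired


created = datetime(2024, 6, 1, tzinfo=timezone.utc)
records = [
    {"id": "r1", "category": "decision_log", "created_at": created},
    {"id": "r2", "category": "debug_trace", "created_at": created},
]

now = datetime(2025, 1, 1, tzinfo=timezone.utc)  # fixed date keeps the example deterministic
to_purge = expired_records(records, now)
print([r["id"] for r in to_purge])
```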

Ignoring Third-Party AI Components

Enterprises audit their own AI models but overlook the vendor models and external APIs integrated into their systems. Every component that influences an AI decision must be covered; partial audit trails create full compliance gaps.

Conclusion

The “black box” era of AI is over. For enterprise leaders, the question is no longer just “What can AI do?” but “Can we prove how it did it?” As global regulations move into full enforcement, an immutable audit trail is your only shield. At TechAhead, we do not just build AI; we build Accountable AI. We specialize in architecting transparent ecosystems, from cryptographic data lineage to advanced RAG systems, and our AI experts turn your governance from a liability into a high-speed competitive advantage. Ready to lead with confidence? Partner with TechAhead to engineer audit-ready AI that scales securely.

Traditional logs track system events like logins and errors. AI audit trails capture the “reasoning” layer: inputs, specific data retrieved via RAG, model versions, and logic paths. They explain why a decision happened, not just that a system was active.

An AI audit trail provides “forensic proof” during lawsuits or regulatory audits, showing that your AI followed safety protocols. This helps avoid large non-compliance fines (like those under the EU AI Act) and protects against “cost explosions” by tracking inefficient token usage across departments.

You cannot audit a third-party model's internal weights, so you must audit the “I/O lineage.” Record the exact prompt sent, the specific metadata, the timestamp, and the raw response. This creates a defensible record of your interaction with the external black box.
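A thin wrapper around the vendor call is one way to capture that lineage. The sketch below is an assumption-laden illustration: `call_with_lineage` and `fake_vendor_model` are hypothetical names, and the fake client simply echoes its prompt in place of a real vendor SDK.

```python
import hashlib
from datetime import datetime, timezone
from typing import Any, Callable, Dict, List


def call_with_lineage(
    client: Callable[[str], str],
    prompt: str,
    metadata: Dict[str, Any],
    log: List[Dict[str, Any]],
) -> str:
    """Call an external model and record the prompt, metadata, time, and raw response."""
    sent_at = datetime.now(timezone.utc).isoformat()
    raw_response = client(prompt)
    log.append({
        "sent_at": sent_at,
        "prompt": prompt,
        "metadata": metadata,
        "raw_response": raw_response,
        # The digest lets a later reviewer confirm the stored response is unchanged.
        "response_sha256": hashlib.sha256(raw_response.encode("utf-8")).hexdigest(),
    })
    return raw_response


def fake_vendor_model(prompt: str) -> str:
    # Stand-in for a real vendor API call.
    return f"echo: {prompt}"


lineage_log: List[Dict[str, Any]] = []
answer = call_with_lineage(
    fake_vendor_model,
    "Summarize clause 4.2",
    {"vendor": "example-vendor", "model": "example-model", "temperature": 0.0},
    lineage_log,
)
print(answer, len(lineage_log))
```

Passing the client in as a callable keeps the wrapper independent of any particular vendor SDK, so the same logging applies to every external model.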