
The regulatory ground is shifting under AI deployments faster than most organizations can adapt. While the EU AI Act dominates compliance discussions, U.S. enterprises face a different challenge: demonstrating AI governance maturity in the absence of binding federal mandates. The NIST AI RMF fills this gap. Initiated in 2021 and officially released in January 2023 in response to the complexity and potential risks of artificial intelligence systems, it is a voluntary framework and consensus resource developed by the National Institute of Standards and Technology (NIST) in collaboration with more than 240 entities from the public and private sectors, including AI actors and civil society. It is becoming the baseline expectation across federal procurement, sector regulators, and enterprise customers, and this extensive collaboration makes it a highly reputable source for AI regulation guidance.

Key Takeaways

- NIST AI RMF is a voluntary U.S. framework that is becoming the baseline expectation across federal procurement, enterprise customers, and regulatory enforcement guidance.

- Four core functions (Govern, Map, Measure, Manage) organize AI risk management across the system lifecycle, addressing bias, transparency, and security continuously.

- Implementation paths include a 90-day quick-start for foundational compliance or a 6-month comprehensive program that integrates with existing GRC infrastructure and certifications.

- NIST AI RMF maps directly to ISO 42001, the EU AI Act, and sector regulations, enabling unified governance through a single control library.

- Sector-specific implementations for healthcare, finance, manufacturing, and SaaS address HIPAA, fair lending, safety validation, and customer procurement requirements effectively.

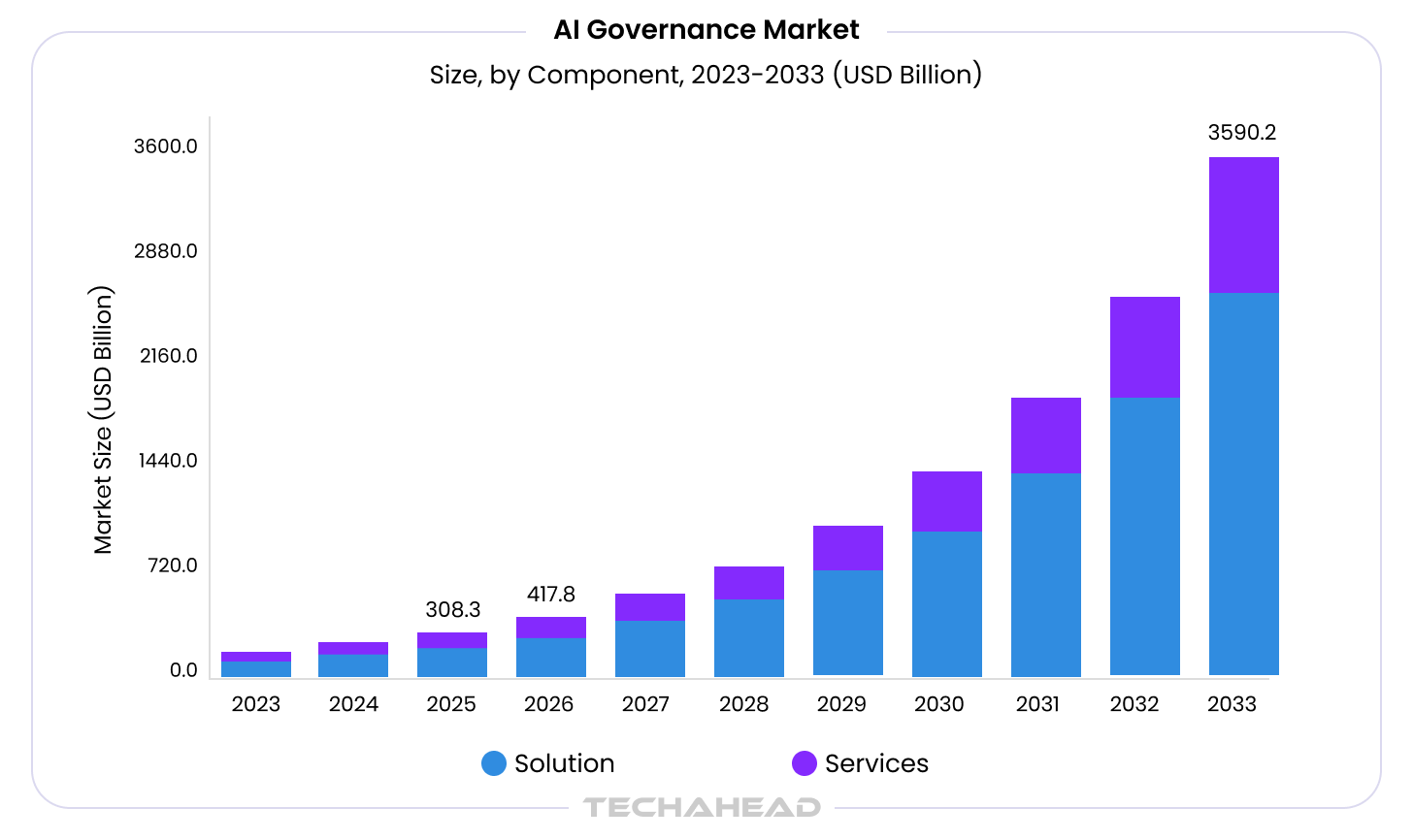

The market response validates this urgency: the global AI governance market, valued at USD 308.3 million in 2025, is projected to reach USD 3.59 billion by 2033 at a 36% CAGR.

Released in January 2023, the NIST AI Risk Management Framework (AI RMF) offers a management framework and structured guidelines for managing risks associated with artificial intelligence systems across the entire AI system lifecycle.

Developed with input from a diverse range of stakeholders, the framework is designed to be flexible and adaptable for a wide range of AI applications, regardless of organizational size, risk level, or context. The framework helps organizations understand and manage risks associated with advanced AI technologies, including generative AI and other emerging AI technologies.

What began as best-practice guidance now appears in procurement requirements, regulatory enforcement expectations, and customer security questionnaires. The Colorado AI Act explicitly references NIST AI RMF for safe harbor protection.

- Federal agencies increasingly require NIST-aligned AI governance as a contracting condition

- Banking regulators map AI obligations to NIST AI RMF principles alongside existing model risk management frameworks

How NIST AI RMF Aligns with Global AI Standards

While voluntary, the AI RMF aligns with emerging rules such as the EU AI Act and the U.S. AI Executive Order. As AI regulation becomes more pressing, the framework gives organizations guidelines that support compliance with laws such as the EU AI Act and the California Consumer Privacy Act (CCPA).

The NIST AI RMF offers organizations a reputable, collaboratively developed set of guidelines for responsible and ethical AI use, promoting accountability, transparency, and compliance with evolving regulations. Trustworthy AI aims to enhance trust among users, developers, deployers, and the broader public affected by AI systems by aligning with societal values and norms.

- Organizations are adopting the NIST AI RMF to strengthen their AI governance and risk management practices. Implementing NIST AI RMF is often facilitated by tools and templates that help organizations integrate the framework more efficiently and achieve compliance faster.

- AI risk management is essential for addressing potential disruptions and harms caused by AI systems, especially as these technologies are increasingly embedded in critical sectors such as healthcare and finance.

For broader context on how NIST AI RMF fits within the complete enterprise AI compliance landscape, see our comprehensive Enterprise AI Compliance & Governance Guide.

Why U.S. Enterprises Need NIST AI Risk Management Framework in 2026

The business case for NIST AI RMF implementation extends beyond regulatory compliance. Organizations seeking to improve AI governance and compliance are increasingly turning to the NIST AI RMF for structured guidance. The NIST AI RMF is critical because traditional risk management frameworks are often insufficient for the probabilistic and complex nature of AI. Managing AI risks through the NIST AI RMF helps organizations establish standards and implement risk response strategies, ensuring ethical and secure AI practices.

The framework is designed to enable organizations to adopt responsible AI practices and improve risk management, allowing them to align AI practices with organizational values and responsible AI principles, while supporting risk management efforts that are consistent with industry standards and relevant laws.

Bonus Read: Pillars of AI Security (Protecting the Future of Technology)

Three convergent forces make structured AI risk management essential for U.S. enterprises in 2026:

Regulatory Convergence Around NIST Principles

While technically voluntary, sector regulators increasingly reference NIST AI RMF in enforcement guidance. The FTC, CFPB, FDA, SEC, and EEOC all cite framework principles when evaluating whether AI practices meet reasonable standards of care. Federal contractors face explicit expectations to demonstrate NIST-aligned governance. The Treasury Department’s Financial Services AI RMF, released February 2026, translates NIST principles into 230 control objectives specifically for financial institutions.

Customer Procurement Requirements

Enterprise buyers embed AI governance questions into security questionnaires and vendor risk assessments. Organizations without documented AI risk management programs face longer sales cycles, additional due diligence requests, and a competitive disadvantage against vendors demonstrating NIST AI RMF alignment. According to Compliance Week’s 2026 survey, 83% of organizations are already using AI tools, but only 25% have implemented strong governance frameworks. This gap represents a massive opportunity for organizations that demonstrate mature AI governance through NIST alignment.

Insurance and Liability Considerations

Cyber insurance underwriters are drafting AI-specific endorsements that can exclude or limit coverage when AI systems lack governance controls. Demonstrating NIST AI RMF implementation provides evidence of due diligence that can influence coverage decisions and premium calculations. As litigation around AI failures increases, documented risk management processes become critical to liability defense.

As Mukul Mayank, Chief Operating Officer at TechAhead, observes:

“Organizations that frame AI governance as risk management theater rather than strategic enabler will find themselves perpetually behind – both in compliance and innovation.”

Must Read: How to Build an Enterprise AI Roadmap in 90 Days

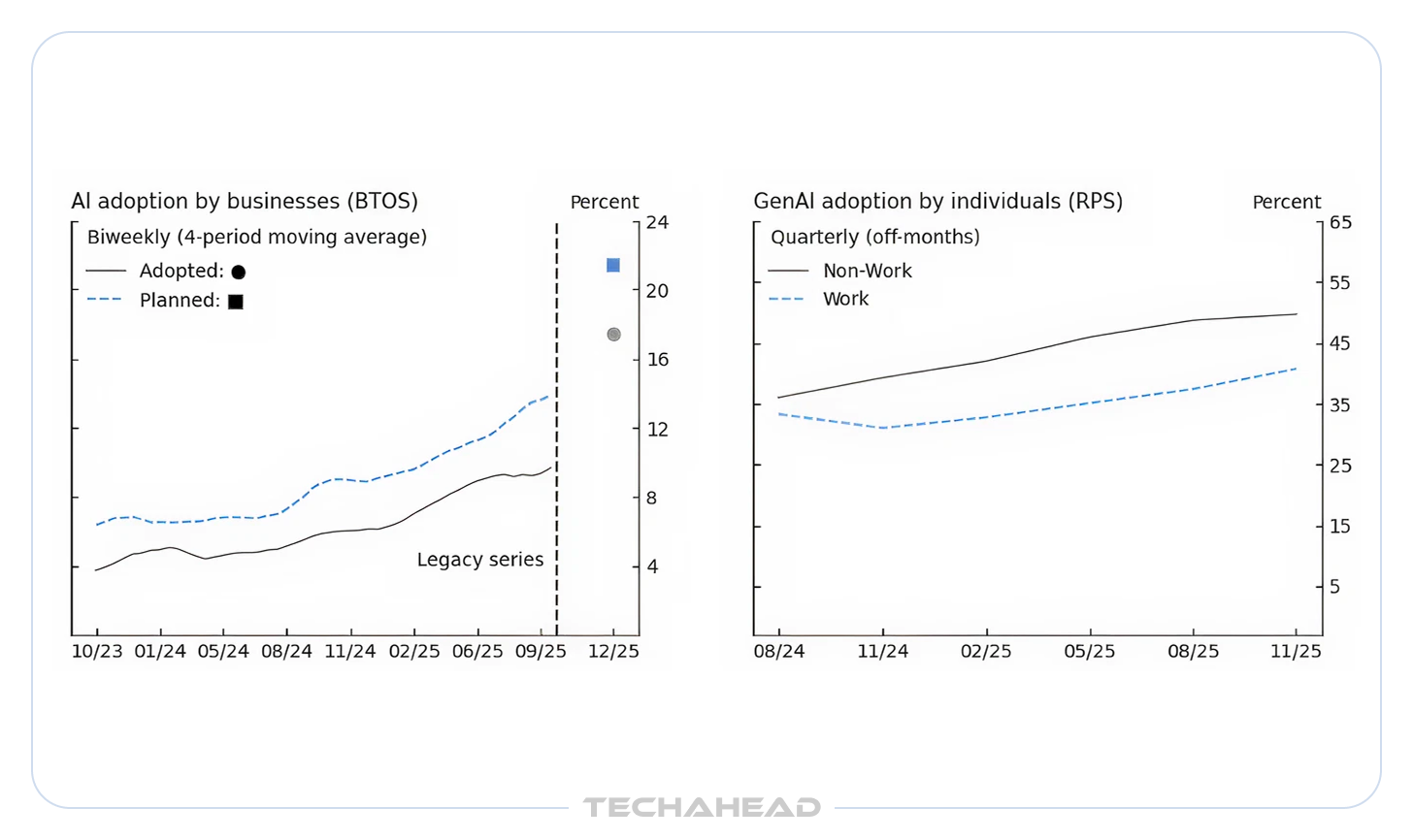

Organizations that treat NIST AI RMF as infrastructure rather than a compliance checkbox gain competitive velocity. By the end of 2025, 18% of U.S. firms had adopted AI, representing 68% year-over-year growth. This acceleration demands governance infrastructure that scales proportionally.

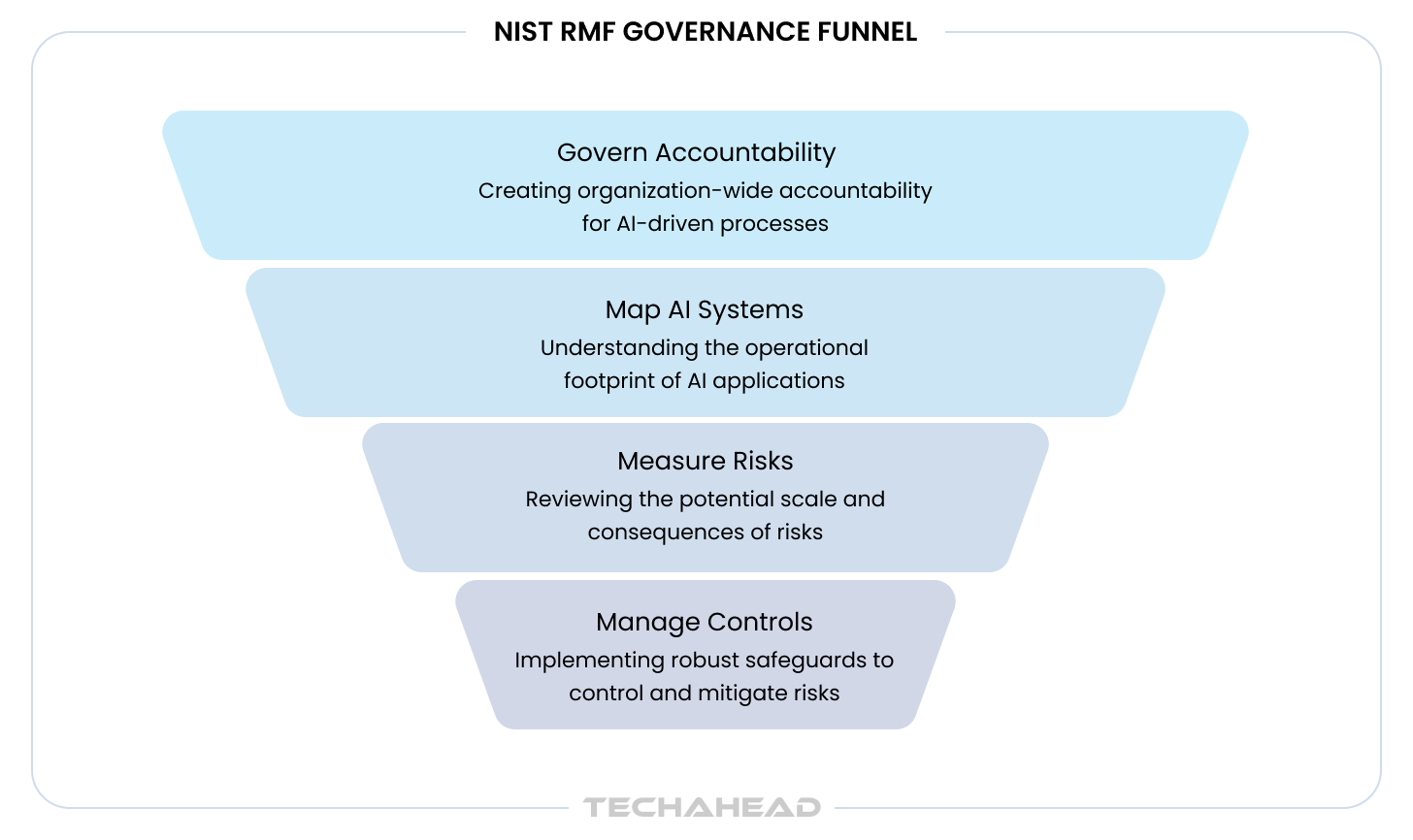

Understanding the Four Core Functions: Govern, Map, Measure, Manage

The NIST AI RMF organizes AI risk management into four core function categories:

- Govern

- Map

- Measure

- Manage

Together, these four functions form the AI RMF Core. They are not sequential phases: they are designed to be applied iteratively throughout the AI lifecycle, including AI development, deployment, monitoring, and updating, so that oversight is continuous and risk mitigation strategies stay aligned with each phase.

The Manage function plays a critical role in risk management and resource allocation, helping organizations mitigate risks and maintain trust through appropriate controls and procedures.

Related: Is Your Organization Ready for Enterprise-Wide AI Adoption

Govern: Establishing Organizational Accountability

Govern establishes the organizational structures, policies, and culture necessary for effective AI risk management. This function operates continuously across all other framework activities, defining who makes decisions, how risks get escalated, and what standards apply. The NIST AI RMF emphasizes the importance of ethical considerations and stakeholder engagement in the governance process, aiming to enhance accountability and transparency in AI systems.

Recommended: Responsible & Ethical AI (Compliance & Security Guide)

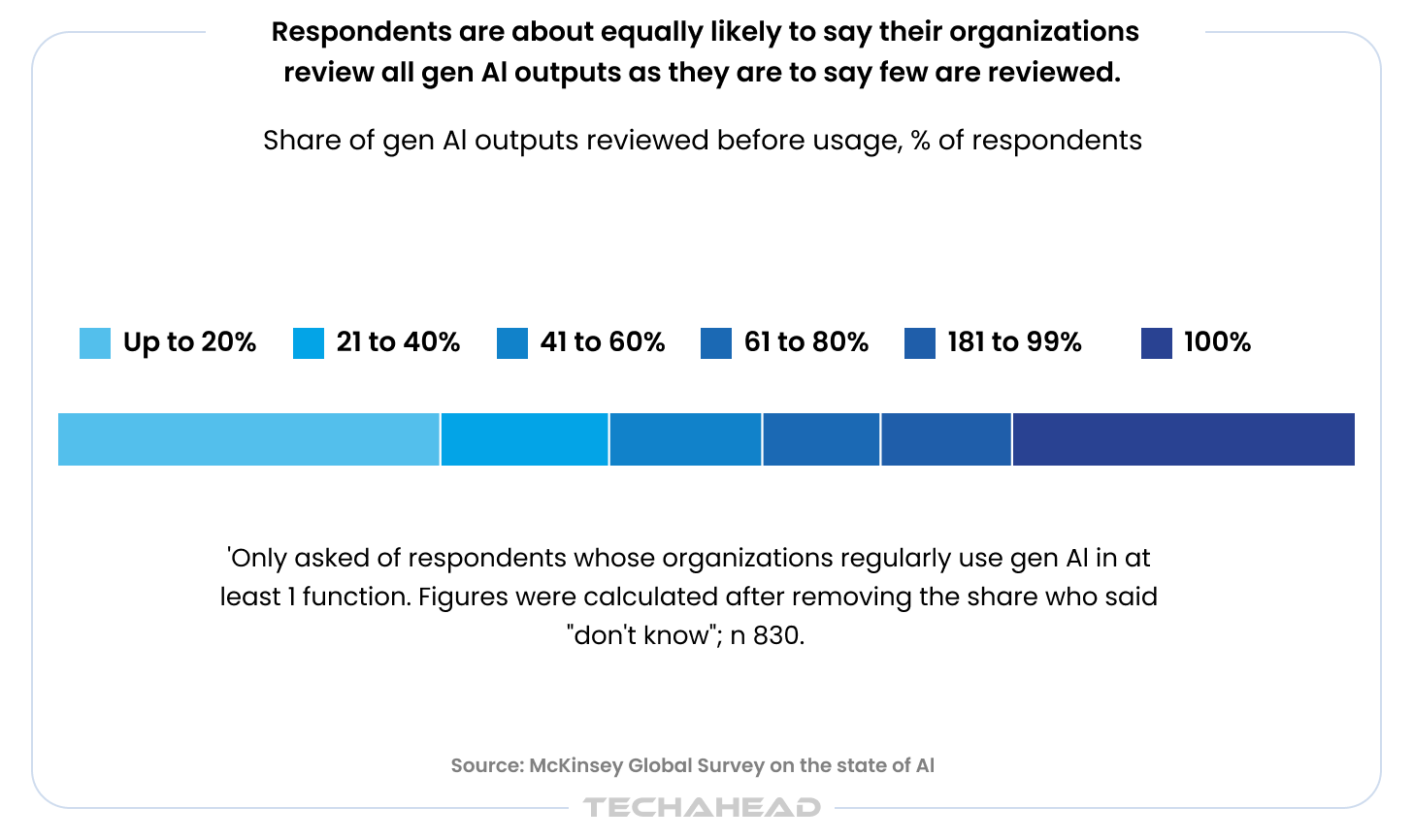

According to McKinsey’s March 2025 State of AI survey, only 28% of organizations report that their CEO is responsible for overseeing AI governance, with just 17% having board-level oversight. This governance gap creates an accountability vacuum in which AI deployments lack clear ownership, even though the same research finds CEO oversight of AI governance to be one of the elements most correlated with higher bottom-line impact from generative AI use.

Key Govern activities include:

- Establishing AI governance councils with cross-functional representation from legal, compliance, technical teams, and business units

- Defining AI risk tolerance levels aligned to organizational priorities and regulatory obligations

- Creating AI usage policies that specify permitted applications, prohibited use cases, and approval workflows

- Assigning accountability through RACI matrices clarifying who is Responsible, Accountable, Consulted, and Informed for AI decisions

- Building a risk-aware culture through training programs and leadership communication emphasizing responsible AI principles

- Documenting governance structures that demonstrate oversight mechanisms to auditors and customers
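The RACI assignment in the list above lends itself to a machine-checkable form, which makes accountability gaps visible before an auditor finds them. The following is a minimal sketch, not a prescribed NIST artifact: the decision names, role names, and the rule "exactly one Accountable, at least one Responsible" are illustrative assumptions.

```python
from collections import Counter

# Hypothetical RACI matrix: decision -> {role: "R" | "A" | "C" | "I"}.
# Decision and role names are illustrative, not taken from the NIST AI RMF.
RACI = {
    "approve_high_risk_deployment": {
        "ai_governance_council": "A",
        "model_owner": "R",
        "legal": "C",
        "business_unit_lead": "I",
    },
    "accept_residual_risk": {
        "chief_risk_officer": "A",
        "model_owner": "R",
        "compliance": "C",
    },
}


def raci_gaps(matrix):
    """Return human-readable problems found in a RACI matrix."""
    problems = []
    for decision, roles in matrix.items():
        counts = Counter(roles.values())
        # Assumed convention: exactly one Accountable role per decision.
        if counts["A"] != 1:
            problems.append(
                f"{decision}: expected exactly one Accountable, found {counts['A']}"
            )
        # Assumed convention: at least one Responsible role per decision.
        if counts["R"] < 1:
            problems.append(f"{decision}: no Responsible role assigned")
    return problems


print(raci_gaps(RACI))
```

Running a check like this whenever the matrix changes keeps the documented governance structure consistent with how decisions are actually assigned.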

Map: Contextualizing AI Systems and Risks

Map identifies what AI systems exist, how they work, who they affect, and where potential harms could emerge. This function prevents the “shadow AI” problem, where organizations discover AI deployments only during incident investigations or regulatory inquiries. It involves understanding and assessing the AI ecosystem, including data sources, AI models, and their interactions, which is crucial for identifying risks and vulnerabilities in how AI operates. Creating an AI bill of materials (AI-BOM), a comprehensive inventory of AI assets, provides visibility into that ecosystem and helps assess potential vulnerabilities. The NIST AI RMF emphasizes a socio-technical approach to AI risk management, recognizing that risks extend beyond technical issues to include social, legal, and ethical implications.

Core Map activities:

- Building comprehensive AI inventories cataloging every model, API, and AI-enabled tool in production or development

- Documenting system characteristics including model type, data sources, deployment architecture, and integration points

- Identifying affected stakeholders both internal (employees, contractors) and external (customers, partners, regulated populations)

- Assessing potential impacts across safety, fairness, privacy, security, and transparency dimensions

- Classifying risk levels using a tiered methodology aligned to regulatory frameworks (EU AI Act high-risk categories, sector-specific requirements)

- Mapping data flows from collection through training, inference, storage, and deletion
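To make the inventory and tiering activities above concrete, here is a minimal sketch of an inventory record with a coarse risk classifier. The field names and the three-tier rule are assumptions chosen for illustration; a real methodology would be aligned to the regulatory categories that apply to your organization.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    """One AI inventory entry; field names are illustrative assumptions."""
    name: str
    model_type: str
    data_sources: list
    affects_external_people: bool
    makes_consequential_decisions: bool  # e.g. credit, hiring, clinical use
    processes_sensitive_data: bool
    owner: str = "unassigned"


def risk_tier(system: AISystem) -> str:
    """Assign a coarse tier using a hypothetical three-level scheme."""
    if system.makes_consequential_decisions:
        return "high"
    if system.affects_external_people or system.processes_sensitive_data:
        return "medium"
    return "low"


inventory = [
    AISystem(
        name="credit-scoring-model",
        model_type="gradient boosting",
        data_sources=["loan applications"],
        affects_external_people=True,
        makes_consequential_decisions=True,
        processes_sensitive_data=True,
        owner="lending",
    ),
    AISystem(
        name="support-chatbot",
        model_type="hosted LLM API",
        data_sources=["help center articles"],
        affects_external_people=True,
        makes_consequential_decisions=False,
        processes_sensitive_data=False,
    ),
    AISystem(
        name="log-anomaly-detector",
        model_type="isolation forest",
        data_sources=["server logs"],
        affects_external_people=False,
        makes_consequential_decisions=False,
        processes_sensitive_data=False,
    ),
]

tiers = {system.name: risk_tier(system) for system in inventory}
print(tiers)
```

Entries whose owner is still "unassigned" are a useful signal in their own right: they mark systems that have been mapped but not yet brought under Govern.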

The Map function reveals the complete AI landscape. Organizations consistently discover 40-60% more AI systems during initial inventory exercises than executives believed existed. These discoveries typically include productivity tools employees adopted independently, vendor-provided AI features auto-enabled in SaaS platforms, and proof-of-concept projects that migrated to production without formal approval.

Notably, the framework specifically targets AI challenges such as algorithmic bias, model drift, and black-box opacity, issues that standard IT frameworks may overlook.

Measure: Quantifying AI Performance and Risk

Measure establishes the metrics and monitoring systems that track whether AI systems operate as intended and remain within acceptable risk parameters. This function transforms abstract trustworthy AI characteristics into concrete measurements, using both qualitative and quantitative methods to set benchmarks for AI performance, security, and reliability. The NIST AI RMF emphasizes that trustworthy AI systems should be designed to avoid bias, maintain data privacy, and be resilient against attacks.

Research indicates 96% of leaders think using generative AI makes security breaches more likely, yet only 24% of current generative AI projects include security measures. This measurement gap leaves organizations blind to risks they acknowledge but cannot quantify.

Critical Measure capabilities:

- Defining performance metrics including accuracy, precision, recall, and task-specific success criteria

- Implementing fairness testing with disparate impact analysis across protected demographic groups

- Monitoring model drift through statistical tests detecting when prediction quality degrades over time

- Tracking explainability by measuring the degree to which system decisions can be interpreted and explained

- Validating robustness against adversarial attacks, edge cases, and unexpected inputs

- Documenting measurement methodologies that demonstrate testing rigor to auditors and regulators
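As one example of the fairness testing mentioned above, the sketch below computes a disparate impact ratio: each group's selection rate divided by a reference group's rate. The sample data is invented, and the 0.8 cutoff is the four-fifths rule of thumb from U.S. employment-selection guidance, used here as an assumed screening threshold rather than anything the framework mandates.

```python
def selection_rates(outcomes):
    """outcomes: iterable of (group, selected) pairs -> {group: selection rate}."""
    totals, selected = {}, {}
    for group, was_selected in outcomes:
        totals[group] = totals.get(group, 0) + 1
        selected[group] = selected.get(group, 0) + int(was_selected)
    return {group: selected[group] / totals[group] for group in totals}


def disparate_impact_ratios(outcomes, reference_group):
    """Ratio of each group's selection rate to the reference group's rate.

    Assumes the reference group has a non-zero selection rate.
    """
    rates = selection_rates(outcomes)
    reference_rate = rates[reference_group]
    return {group: rate / reference_rate for group, rate in rates.items()}


# Invented example data: 50 of 100 approvals for group A, 30 of 100 for group B.
outcomes = (
    [("A", True)] * 50 + [("A", False)] * 50
    + [("B", True)] * 30 + [("B", False)] * 70
)

ratios = disparate_impact_ratios(outcomes, reference_group="A")
THRESHOLD = 0.8  # assumed screening threshold (four-fifths rule of thumb)
flagged = [group for group, ratio in ratios.items() if ratio < THRESHOLD]
print(ratios, flagged)
```

A ratio below the threshold is a prompt for investigation, not proof of discrimination; the measurement methodology and the follow-up decision both belong in the audit record.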

AI transparency depends on measurement discipline. Organizations should establish baseline metrics during development, then implement continuous monitoring that alerts when systems deviate from expected behavior.
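One way to turn "baseline, then continuous monitoring" into an alert is the Population Stability Index (PSI), which compares the distribution of a score or feature in production against its development baseline. This is a sketch under stated assumptions: PSI is one of several possible drift statistics, equal-width bins are derived from the baseline, and the 0.25 alert level is a commonly quoted rule of thumb rather than a NIST requirement.

```python
import math


def psi(baseline, current, bins=10, eps=1e-6):
    """Population Stability Index between two samples of a numeric score."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if baseline is constant

    def proportions(sample):
        counts = [0] * bins
        for x in sample:
            idx = int((x - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside baseline range
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(count / len(sample), eps) for count in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for e, a in zip(expected, actual))


# Invented data: a uniform baseline and a production sample shifted upward.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [min(x + 0.3, 0.99) for x in baseline_scores]

ALERT_LEVEL = 0.25  # assumed rule-of-thumb threshold for significant drift
drift = psi(baseline_scores, shifted_scores)
print(drift, drift > ALERT_LEVEL)
```

In practice the baseline sample would be stored at release time and the comparison would run on a schedule, with alerts routed to the system owner recorded in the AI inventory.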

Recommended: How to Build LLM Observability Into Enterprise AI Systems

Manage: Responding to and Mitigating AI Risks

Manage prioritizes identified risks and implements controls, incident response procedures, and continuous improvement processes. This function converts risk assessments into operational decisions about which AI systems to deploy, modify, or decommission, with the goal of mitigating risks and preventing unintended harm.

Human oversight is essential in monitoring, controlling, and evaluating AI systems to ensure transparency, ethical compliance, and risk mitigation. Ensuring AI systems are secure, ethical, transparent, and resilient requires robust security measures, dedicated security teams, and proactive identification and mitigation of security threats.

Key Manage activities include:

- Prioritizing risks based on severity, likelihood, stakeholder impact, and organizational risk tolerance

- Implementing controls such as human-in-the-loop review, input validation, output filtering, and access restrictions

- Establishing incident response with AI-specific playbooks covering model failures, bias incidents, and security breaches

- Planning for AI system retirement including data deletion, model decommissioning, and transition procedures

- Documenting risk decisions that explain why certain risks were accepted, transferred, mitigated, or avoided

- Creating feedback loops that capture lessons learned and inform future AI risk management activities
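The human-in-the-loop control named in the list above can be as simple as a routing rule in front of the model's output. The sketch below is an illustration only: the tier labels, the confidence score, and the 0.9 threshold are assumptions, and real systems would also log each routing decision for audit purposes.

```python
def route_decision(risk_tier: str, confidence: float, threshold: float = 0.9) -> str:
    """Return 'auto' or 'human_review' for a single model output."""
    if risk_tier == "high":
        return "human_review"  # high-risk decisions always get a reviewer
    if confidence < threshold:
        return "human_review"  # low-confidence outputs are escalated
    return "auto"


print(route_decision("high", 0.99))
print(route_decision("medium", 0.72))
print(route_decision("low", 0.95))
```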

Risk responses fall into four categories: mitigate through controls, transfer via contracts or insurance, accept with documented justification and monitoring, or avoid by discontinuing the AI system. The appropriate response depends on risk severity, business value, and stakeholder impact.
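A small risk register can encode both the prioritization and the four response categories described above. This sketch assumes a 1 to 5 scale and a severity-times-likelihood score, which is a common convention but not something the framework prescribes; the example risks are invented.

```python
from dataclasses import dataclass

RESPONSES = {"mitigate", "transfer", "accept", "avoid"}


@dataclass
class Risk:
    name: str
    severity: int    # 1 (minor) to 5 (critical); the scale is an assumption
    likelihood: int  # 1 (rare) to 5 (almost certain)
    response: str
    justification: str = ""

    @property
    def score(self) -> int:
        # Assumed scoring rule: severity multiplied by likelihood.
        return self.severity * self.likelihood


def validate(risk: Risk) -> list:
    """Check that the response is recognized and accepted risks are justified."""
    errors = []
    if risk.response not in RESPONSES:
        errors.append(f"{risk.name}: unknown response '{risk.response}'")
    if risk.response == "accept" and not risk.justification:
        errors.append(f"{risk.name}: accepted risks need a documented justification")
    return errors


def prioritize(risks):
    """Order risks from highest to lowest score."""
    return sorted(risks, key=lambda risk: risk.score, reverse=True)


register = [
    Risk("chatbot states wrong refund policy", 3, 4, "mitigate"),
    Risk("biased credit decisions", 5, 3, "mitigate"),
    Risk("vendor model outage", 2, 2, "accept"),
]

for risk in prioritize(register):
    print(risk.score, risk.name, validate(risk))
```

The validation step enforces the documentation requirement directly: an accepted risk without a recorded justification is reported as an error instead of silently passing review.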

“Regardless of where organizations are on their AI journey, they need cybersecurity strategies that acknowledge the realities of AI’s advancement.”

– Barbara Cuthill, NIST Cyber AI Profile Author

Key principles underpinning trusted AI systems include reliability, transparency, fairness, accountability, and security. The NIST AI RMF guides organizations involved in creating, deploying, or managing AI systems, ensuring ethical considerations are integrated throughout the AI lifecycle and that ethical AI practices are embedded in every stage.

The NIST AI RMF Playbook: Turning Framework into Action

The AI RMF Playbook serves as the operational companion to the framework, providing suggested actions for achieving each of the 72 subcategories. While the framework defines outcomes, the Playbook describes implementation paths.

The Playbook offers flexibility rather than prescription. Organizations adopt suggestions that fit their industry context, risk profile, and maturity level. A healthcare organization deploying clinical decision support requires different controls than a logistics company optimizing delivery routes.

Key Playbook features:

- Suggested actions aligned to each Govern, Map, Measure, Manage subcategory

- Implementation examples showing how organizations operationalize specific controls

- Reference materials linking to standards, research, and technical guidance

- Filtering capabilities allowing users to view recommendations relevant to specific sectors or use cases

The Playbook is updated approximately twice annually, incorporating community feedback and evolving best practices. Organizations should treat it as living guidance that adapts as AI technologies advance and new risks emerge.

TechAhead integrates NIST AI RMF Playbook guidance into client AI architectures from initial design workshops. Our ISO 42001:2023-certified governance framework maps all 72 subcategories to executable controls within clients’ existing GRC infrastructure, so that NIST compliance strengthens unified risk management instead of creating a parallel governance bureaucracy.

Implementation Roadmap: 90-Day Quick-Start vs. 6-Month Maturity Model

NIST AI RMF implementation should follow a phased approach that matches organizational readiness and urgency. Two common paths emerge based on starting conditions and timeline pressure.

90-Day Quick-Start: Foundational Compliance

The 90-day path establishes minimum viable AI governance for organizations facing immediate procurement requirements or regulatory inquiries. This accelerated timeline focuses on critical controls providing audit-ready evidence.

Month 1: Govern Foundation

- Appoint AI compliance lead with executive sponsorship

- Form cross-functional AI governance council

- Conduct rapid AI inventory identifying all systems in production

- Document high-level AI usage policy

- Establish basic risk classification methodology

Month 2: Map and Measure Essentials

- Complete detailed inventory with risk tiers for each AI system

- Document intended use, data sources, and affected stakeholders for high-risk systems

- Implement logging and monitoring for production AI systems

- Define key performance indicators and fairness metrics

- Conduct initial bias testing on customer-facing AI

Month 3: Manage and Documentation

- Establish incident response procedures for AI failures

- Implement human-in-the-loop review for high-risk decisions

- Create model cards documenting system capabilities and limitations

- Prepare evidence package demonstrating NIST AI RMF alignment

- Schedule quarterly governance council reviews

The 90-day path produces a compliance posture sufficient for vendor assessments and basic regulatory inquiries. Organizations should view this as a foundation requiring continuous enhancement rather than a complete program.

6-Month Maturity Model: Comprehensive Implementation

The 6-month timeline builds durable AI risk management infrastructure integrated with existing enterprise risk management and GRC systems.

Months 1-2: Strategic Foundation

- Establish formal governance structure with board oversight

- Conduct comprehensive AI landscape assessment across all business units

- Define organizational AI risk tolerance and approval thresholds

- Develop detailed AI governance policies and standards

- Implement AI ethics training for technical teams and business stakeholders

- Select and deploy AI governance platform or GRC tooling

Months 3-4: Operational Controls

- Build complete AI inventory with automated discovery and tracking

- Implement continuous monitoring across all AI systems

- Establish bias testing and fairness audit procedures

- Create documentation templates (model cards, data sheets, risk assessments)

- Integrate AI controls into development pipelines and change management

- Conduct vendor due diligence and third-party AI risk assessments

Months 5-6: Maturity and Optimization

- Pilot advanced monitoring capabilities (drift detection, adversarial testing)

- Establish AI incident response with tabletop exercises

- Pursue ISO 42001 certification or SOC 2 Type II audit scope expansion

- Create AI RMF crosswalk to EU AI Act and sector regulations

- Document complete evidence package for regulatory readiness

- Implement continuous improvement processes and metrics dashboards

Both implementation paths require sustained commitment. The 90-day quick-start reduces initial investment but creates technical debt requiring future remediation. The 6-month maturity model front-loads governance infrastructure that scales across growing AI portfolios.

How NIST AI RMF Maps to Other Frameworks

Organizations operating globally or in regulated industries must satisfy multiple frameworks simultaneously. The NIST AI RMF serves as an operational foundation that maps to regulatory requirements and certification standards.

ISO 42001: AI Management System Integration

ISO 42001 provides a certifiable AI management system standard that follows the Plan-Do-Check-Act methodology. The NIST AI RMF maps directly to ISO 42001 requirements:

- NIST Govern → ISO 42001 leadership, policy, and organizational context

- NIST Map → ISO 42001 risk assessment and AI system lifecycle planning

- NIST Measure → ISO 42001 performance evaluation and monitoring

- NIST Manage → ISO 42001 risk treatment and continual improvement

Organizations can implement NIST AI RMF as the risk management methodology inside an ISO 42001 management system. This approach satisfies both frameworks without duplicative effort. The structural difference is certification: ISO 42001 requires formal audit by accredited certification bodies, while NIST AI RMF has no certification mechanism but provides granular operational guidance.

EU AI Act: Regulatory Compliance Bridge

The EU AI Act classifies AI systems by risk level and imposes mandatory requirements for high-risk applications. NIST AI RMF provides a methodology for satisfying EU requirements without prescribing specific legal obligations.

Key mapping points:

- Article 9 risk management → NIST Map and Manage functions provide assessment and mitigation frameworks

- Article 10 data governance → NIST Measure includes data quality metrics and provenance tracking

- Article 13 transparency → NIST documentation standards support user information requirements

- Article 14 human oversight → NIST Manage includes human-in-the-loop controls

- Article 72 post-market monitoring → NIST continuous measurement and incident response

Organizations should implement NIST AI RMF as the core methodology, then map EU AI Act obligations onto existing controls rather than building separate compliance programs. The frameworks operate at different abstraction levels: NIST describes how to manage AI risk, while the EU AI Act defines what’s legally required.

Read our blog on EU AI Act Compliance Checklist for Software Vendors for detailed guidance on what to verify before you hire a software development company.
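Mapping obligations onto existing controls usually means tagging each control once with every requirement it satisfies. The sketch below shows one possible shape for such a control library. The control IDs and descriptions are hypothetical, and the tags are taken from the mapping points listed in this article, not from an official crosswalk.

```python
# Hypothetical control library: each control is tagged once with the
# NIST functions, ISO 42001 areas, and EU AI Act articles it supports.
CONTROLS = [
    {
        "id": "CTL-001",
        "description": "Documented AI risk assessment per system",
        "nist": ["Map", "Manage"],
        "iso42001": ["risk assessment", "risk treatment"],
        "eu_ai_act": ["Article 9"],
    },
    {
        "id": "CTL-002",
        "description": "Data quality metrics and provenance tracking",
        "nist": ["Measure"],
        "iso42001": ["performance evaluation and monitoring"],
        "eu_ai_act": ["Article 10"],
    },
    {
        "id": "CTL-003",
        "description": "Human-in-the-loop review for high-risk decisions",
        "nist": ["Manage"],
        "iso42001": ["risk treatment"],
        "eu_ai_act": ["Article 14"],
    },
]


def controls_for(framework, requirement):
    """IDs of controls tagged with a given requirement in a given framework."""
    return [c["id"] for c in CONTROLS if requirement in c[framework]]


def uncovered(framework, requirements):
    """Requirements that no control in the library currently addresses."""
    return [r for r in requirements if not controls_for(framework, r)]


print(controls_for("nist", "Manage"))
print(uncovered("eu_ai_act", ["Article 9", "Article 13", "Article 14"]))
```

The `uncovered` query is the useful part of the design: when a new obligation appears, it shows whether an existing control already covers it or whether a gap needs a new control.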

Financial Services Alignment

Banking regulators apply existing model risk management expectations (Federal Reserve SR 11-7, OCC 2011-12) to AI systems. The Treasury Department’s Financial Services AI RMF builds directly on NIST principles.

Key alignments:

- Model validation maps to NIST Measure and Manage with emphasis on independent review

- Model inventory extends NIST Map to include model ownership and change tracking

- Backtesting requirements align with NIST continuous monitoring and performance measurement

- Third-party model risk incorporates NIST supply chain considerations

Financial institutions should reference NIST AI RMF as the baseline framework, then layer sector-specific controls addressing fair lending, consumer protection, and systemic risk management.

TechAhead architects unified governance frameworks satisfying NIST, ISO 42001, and EU AI Act requirements through a single control library. Our crosswalk methodology maps 72 NIST subcategories to ISO 42001 clauses and EU AI Act articles, eliminating redundant documentation while maintaining audit trails proving compliance across all three frameworks simultaneously.

Sector-Specific Implementation Considerations

While NIST AI RMF provides a universal AI risk management foundation, implementation details vary significantly across industries based on regulatory obligations, risk tolerance, and stakeholder expectations.

Healthcare: HIPAA Integration and Clinical Validation

Healthcare AI faces unique obligations around protected health information, clinical safety, and FDA oversight. NIST AI RMF implementation in healthcare contexts requires enhanced considerations beyond general framework guidance.

| Healthcare Requirement | NIST Function | Key Implementation Actions |
| --- | --- | --- |
| Enhanced Privacy Controls | Map | Identify all PHI data flows, document legal basis for processing, implement HIPAA-compliant safeguards (encryption, access controls, audit logging, business associate agreements with AI vendors) |
| Clinical Validation | Measure | Establish performance metrics aligned to clinical outcomes (not just technical accuracy), obtain peer-reviewed evidence of safety and efficacy for AI systems influencing clinical decisions |
| Clinician Explainability | Manage | Implement human-in-the-loop workflows with sufficient context for informed decision-making, provide explanation mechanisms that support clinical judgment and provider accountability |
| FDA Medical Device Compliance | Govern + All Functions | Integrate NIST AI RMF practices with existing FDA quality management systems for AI-enabled medical devices |

Healthcare organizations should review our detailed guide on AI-Powered Clinical Decision Support for implementation patterns reducing diagnostic errors.

Finance: Model Risk Management and Fair Lending

Financial services AI intersects with model risk management frameworks, fair lending obligations, and consumer protection requirements.

| Financial Requirement | NIST Function | Key Implementation Actions |
| --- | --- | --- |
| SR 11-7 Model Risk Management Alignment | Measure + Manage | Incorporate existing MRM practices (independent review, backtesting, sensitivity analysis) while extending to AI-specific risks including bias and explainability |
| Fair Lending Compliance | Map + Measure | Identify protected demographics (ECOA, Fair Housing Act), implement disparate impact testing across demographic groups, ensure AI doesn’t discriminate in credit scoring and underwriting |
| Consumer Transparency (CFPB) | Manage | Implement adverse action notices when AI influences lending decisions, provide explanation mechanisms satisfying regulatory disclosure requirements |
| Systemic Risk Assessment | Govern + Map | Assess whether AI creates concentration risk, interconnection vulnerabilities, or procyclical behaviors that could amplify market stress across financial system |

Organizations in fintech should review our AI in Fintech implementation guide for sector-specific governance patterns.

Manufacturing and Industrial: Safety-Critical Systems

Manufacturing AI often operates in safety-critical contexts controlling physical processes and industrial equipment.

| Industrial Requirement | NIST Function | Key Implementation Actions |
| --- | --- | --- |
| Safety Validation | Measure | Incorporate safety engineering practices including hazard analysis, safety integrity level (SIL) assessments, failure mode analysis, and redundant safeguards beyond typical software testing |
| Real-Time Decision Constraints | Manage | Implement automated safety interlocks and emergency shutdown procedures for AI operating under timing constraints where human-in-the-loop review isn’t feasible |
| Operational Technology (OT) Integration | Govern + Manage | Apply OT security frameworks requiring air-gapped deployments, change control procedures, and physical access restrictions beyond typical IT environments |

Technology and SaaS: Customer Trust and Procurement

Technology vendors embedding AI into products face customer due diligence requirements and competitive differentiation through governance maturity.

Deloitte research shows 68% of companies report moderate to severe AI talent shortages, making demonstrated governance maturity a competitive differentiator when customers evaluate vendor capabilities.

| SaaS Requirement | NIST Function | Key Implementation Actions |
| --- | --- | --- |
| SOC 2 Type II Integration | Govern + All Functions | Extend SOC 2 audit scope to include AI governance processes, demonstrate control effectiveness over time for enterprise customer trust and procurement requirements |
| Customer-Facing Documentation | Map + Govern | Create transparent documentation explaining AI capabilities, limitations, data usage, and governance practices to build trust and reduce security questionnaire burden |
| Multi-Tenancy Shared Responsibility | Govern + Manage | Clearly delineate governance accountability between provider and customer, prevent gaps where each party assumes the other handles specific AI controls |

Related: How to Secure Your AI Pipeline

Common Implementation Challenges and How to Overcome Them

Organizations implementing NIST AI RMF encounter predictable obstacles. Anticipating these challenges and preparing structured responses accelerates adoption and reduces friction.

Challenge 1: Shadow AI and Incomplete Inventories

The most common implementation barrier is discovering AI systems that bypassed governance processes. Engineering teams adopt productivity tools, business units implement vendor AI features, and proof-of-concepts migrate to production without formal approval.

Solution: Implement automated discovery tools that scan network traffic, API usage, and cloud spend for AI service patterns. Combine technical detection with organizational communication that establishes amnesty periods during which teams can register previously undisclosed AI without penalty. Make ongoing inventory updates a mandatory part of procurement and change management workflows.
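As an illustration of the API-usage scanning described above, the sketch below flags outbound calls to hosted AI APIs from sources that are not in the AI inventory. Everything about the input is an assumption: the log format (`<source> <destination_host> <path>`), the service names, and the short host list, which you would extend to match the providers relevant to your environment.

```python
from collections import Counter

# Illustrative, incomplete list of hosted AI API hostnames.
AI_API_HOSTS = {
    "api.openai.com",
    "api.anthropic.com",
    "generativelanguage.googleapis.com",
}


def find_unregistered_ai_usage(log_lines, registered_sources):
    """Count AI API calls per source that is missing from the AI inventory.

    Assumes a hypothetical log format: '<source> <destination_host> <path>'.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) < 2:
            continue  # skip malformed lines
        source, host = parts[0], parts[1].lower()
        if host in AI_API_HOSTS and source not in registered_sources:
            hits[source] += 1
    return dict(hits)


# Invented egress log and inventory for demonstration.
egress_log = [
    "billing-service api.openai.com /v1/chat/completions",
    "billing-service api.openai.com /v1/chat/completions",
    "support-chatbot api.anthropic.com /v1/messages",
    "hr-portal internal.example.com /health",
    "marketing-tool generativelanguage.googleapis.com /v1/models",
]
registered = {"support-chatbot"}

shadow_ai = find_unregistered_ai_usage(egress_log, registered)
print(shadow_ai)
```

Findings from a scan like this feed the amnesty process: each flagged source becomes a candidate inventory entry, not an immediate enforcement action.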

Challenge 2: Resource Constraints and Competing Priorities

Building AI governance programs requires sustained investment in personnel, tools, and executive attention. Organizations struggle balancing compliance activities against feature development and revenue-generating initiatives.

Data shows concerns about data accuracy or bias (45%) and lack of proprietary data to customize models (42%) are significant hurdles to AI adoption. These resource constraints extend to governance implementation where organizations lack dedicated personnel for risk management activities.

Solution: Start with the high-risk, high-visibility AI systems that provide maximum regulatory and customer value. Phase implementation across the AI portfolio rather than attempting comprehensive coverage all at once. Leverage existing GRC infrastructure and personnel rather than building separate AI governance teams. Automate evidence collection and control monitoring to reduce the manual compliance burden.
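One simple way to turn "start with high-risk, high-visibility systems" into a rollout plan is to score each system and split the ranked list into phases. The sketch below assumes a 1-to-5 scale for both risk and visibility and a plain product as the score; the `AISystem` fields, the scale, and the `plan_phases` helper are hypothetical choices, and a real program would substitute its own risk methodology.

```python
from dataclasses import dataclass


@dataclass
class AISystem:
    name: str
    risk: int        # 1 (low) to 5 (high), taken from the risk assessment
    visibility: int  # 1 (internal only) to 5 (customer- or regulator-facing)


def plan_phases(systems, phase_size=2):
    """Rank systems by risk x visibility and split them into rollout phases."""
    ranked = sorted(systems, key=lambda s: s.risk * s.visibility, reverse=True)
    return [ranked[i:i + phase_size] for i in range(0, len(ranked), phase_size)]


portfolio = [
    AISystem("support-chatbot", risk=2, visibility=5),
    AISystem("credit-scoring", risk=5, visibility=4),
    AISystem("log-summarizer", risk=1, visibility=1),
]
phases = plan_phases(portfolio)
```

Here the first phase would cover credit-scoring and support-chatbot, leaving log-summarizer for a later phase.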

Challenge 3: Evolving Regulatory Landscape

AI regulations continue developing across federal, state, and international jurisdictions. Organizations face moving targets where compliance requirements change faster than governance programs can adapt.

Solution: Build the governance foundation on NIST AI RMF principles rather than on specific regulatory text. Map regulatory obligations to framework controls so that a single governance program can satisfy multiple requirements. Establish regulatory monitoring processes that track proposed legislation and enforcement guidance. Join industry working groups that share compliance approaches and best practices.
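Mapping obligations to framework controls can start as a small crosswalk table that is checked against what the program has actually implemented. The sketch below is a minimal version under stated assumptions: the two EU AI Act entries follow the function-level mappings this article describes, the "Sector rule X" entry is a hypothetical placeholder, and `coverage_gaps` is an illustrative helper rather than a feature of any GRC platform.

```python
# Illustrative crosswalk: obligation -> NIST AI RMF functions that evidence it.
CROSSWALK = {
    "EU AI Act Art. 9 (risk management system)": {"Map", "Manage"},
    "EU AI Act Art. 14 (human oversight)": {"Manage"},
    "Sector rule X (hypothetical placeholder)": {"Govern", "Measure"},
}


def coverage_gaps(crosswalk, implemented):
    """Return obligations whose mapped functions are not all implemented."""
    return {
        obligation: sorted(functions - implemented)
        for obligation, functions in crosswalk.items()
        if not functions <= implemented  # subset test: fully covered or not
    }


gaps = coverage_gaps(CROSSWALK, implemented={"Govern", "Map", "Manage"})
```

When a new regulation arrives, the change is one new row in the crosswalk rather than a new governance program.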

Challenge 4: Technical Complexity and Explainability

Modern AI systems, particularly deep learning models and LLMs, resist simple explanation. Organizations struggle to translate technical model behavior into governance documentation that non-technical stakeholders and regulators can understand.

Solution: Establish a partnership between technical teams and governance functions in which engineers provide technical accuracy while compliance translates it into regulatory language. Create documentation templates with concrete examples showing how to describe model architecture, training data, and performance metrics accessibly. Invest in explainable AI tooling that automates explanation generation rather than relying on manual documentation.

Challenge 5: Cultural Resistance and Change Management

Technical teams may perceive AI governance as bureaucracy that slows innovation. Business units resist approval workflows and documentation requirements. Leadership underestimates governance complexity, treating it as checkbox compliance.

Solution: Frame governance as an enabler rather than a blocker. Demonstrate how structured processes accelerate customer procurement, reduce security questionnaire cycles, and provide competitive differentiation. Share examples where governance prevented costly incidents or enabled deals competitors couldn’t close. Secure executive sponsorship that makes clear governance is a strategic priority, not compliance theater.

Challenge 6: Third-party AI Risk and Vendor Dependencies

In 2026, 59% of security leaders reported AI threats surpassing internal expertise, while 56% of organizations experienced breaches involving third-party vendors in the preceding 6-12 months. This vendor risk creates governance gaps where organizations rely on external AI services without visibility into underlying controls.

Solution: Establish vendor due diligence processes requiring AI service providers to demonstrate NIST AI RMF alignment. Include contractual requirements for documentation access, audit rights, and incident notification. Implement monitoring systems tracking third-party AI behavior and performance independent of vendor assurances. Maintain internal controls around vendor AI integration points where organizational data enters external systems.
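Monitoring vendor AI independently of vendor assurances can be as simple as replaying an in-house set of probe prompts on a schedule and tracking the pass rate over a rolling window. The sketch below assumes you already have a way to judge each probe response as pass or fail; the `VendorModelMonitor` class, the baseline, the tolerance, and the window size are all hypothetical values chosen for illustration.

```python
from collections import deque


class VendorModelMonitor:
    """Track a rolling pass rate on in-house probe prompts for a vendor model."""

    def __init__(self, baseline, tolerance=0.05, window=100):
        self.baseline = baseline    # pass rate measured at onboarding
        self.tolerance = tolerance  # allowed drop before raising a flag
        self.results = deque(maxlen=window)  # oldest results fall off

    def record(self, passed):
        """Store the outcome of one probe (True = acceptable response)."""
        self.results.append(bool(passed))

    def pass_rate(self):
        """Return the pass rate over the window, or None with no data."""
        return sum(self.results) / len(self.results) if self.results else None

    def degraded(self):
        """True when the rolling pass rate falls below baseline - tolerance."""
        rate = self.pass_rate()
        return rate is not None and rate < self.baseline - self.tolerance
```

A `degraded()` result is a trigger for the contractual levers above (documentation access, audit rights, incident notification), not a verdict on its own.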

TechAhead’s NIST AI RMF Implementation Approach

TechAhead’s NIST AI RMF practice combines deep technical expertise with governance maturity earned across 2,500+ AI platform launches for Fortune 500 enterprises. As an OpenAI Partner and holder of SOC 2 Type II and ISO 42001:2023 certifications, we integrate AI risk management into clients’ existing development lifecycles and GRC infrastructure without creating parallel compliance bureaucracy.

Our implementation methodology includes:

- Rapid AI discovery that surfaces 40-60% more deployments than leadership is aware of, including shadow AI, vendor-embedded features, and undocumented proof-of-concepts

- Risk classification workshops mapping each AI system to NIST AI RMF categories and regulatory obligations with cross-functional stakeholder alignment

- Unified governance architecture embedding all 72 NIST AI RMF subcategories into client GRC platforms (ServiceNow, Archer, OneTrust) for single-control-library compliance across NIST, ISO 42001, and EU AI Act

- Automated monitoring infrastructure built by our 240+ engineers providing real-time drift detection, bias testing, and performance tracking with audit-ready evidence generation

Proven sector expertise across:

- AXA’s global AI governance framework for enterprise transformation

- American Express and JLL’s AI risk management implementations

- ICC and Formula 1’s real-time AI platform governance

Each implementation demonstrates how NIST principles adapt to sector-specific requirements while maintaining a unified governance framework. Our quarterly governance maturity assessments track client progress against NIST AI RMF implementation tiers, identifying optimization opportunities and ensuring sustained compliance as AI portfolios scale.

Ready to turn NIST AI RMF compliance into a competitive advantage? Contact TechAhead to discuss how our ISO 42001:2023 and SOC 2 Type II-certified AI governance framework accelerates your AI risk management maturity.

Implementation timelines vary based on organizational readiness and scope. A 90-day quick-start establishes foundational AI governance controls sufficient for vendor assessments. Comprehensive NIST AI RMF implementation with mature AI risk management infrastructure typically requires 4-6 months, though organizations with existing GRC systems often complete core requirements faster.

The framework remains technically voluntary, but that’s increasingly misleading. Federal contractors face procurement requirements for NIST-aligned AI governance. Colorado’s AI Act explicitly references NIST AI RMF for safe harbor. Enterprise customers routinely embed AI governance questions in security questionnaires where NIST alignment creates competitive advantage. Voluntary in name, expected in practice.

Absolutely. Organizations should establish unified AI governance frameworks that apply NIST AI RMF principles across their entire AI portfolios. The framework’s flexibility supports risk-based approaches in which high-risk systems receive intensive controls while low-risk applications get proportional oversight. This prevents governance fragmentation while focusing resources on the highest-risk deployments.

NIST AI RMF complements existing frameworks rather than replacing them. It addresses AI-specific risks like hallucinations, bias, and prompt injection that traditional security frameworks don’t cover. Organizations typically achieve 60-85% control overlap, with NIST filling gaps around AI risk management while leveraging existing GRC infrastructure for unified compliance.

NIST AI RMF provides voluntary U.S. guidance focused on operational risk management across four functions. ISO 42001 offers a certifiable international management system standard. They’re complementary: most mature programs use NIST AI RMF as the risk management engine inside an ISO 42001 management system, satisfying multiple requirements through a single implementation.

Vendor AI governance requires monitoring third-party AI behavior independent of vendor claims. Implement API-level tracking for model performance and bias metrics. Establish vendor assessment frameworks requiring NIST AI RMF alignment through evidence packages. Include contractual requirements for documentation access, audit rights, and breach notification in all AI service agreements.

Primary ownership typically sits with the Chief Risk Officer, CISO, or General Counsel, depending on organizational structure. These leaders operationalize AI risk management within the broader enterprise strategy, interpret regulatory requirements, and champion framework adoption across departments. Successful implementation requires C-suite sponsorship backed by cross-functional governance councils.

Yes. The Generative AI Profile (NIST AI 600-1) extends the framework specifically for LLMs and foundation models. Released in July 2024, it addresses 12 risk categories, including confabulation, information security (which covers threats such as prompt injection), and data privacy. Organizations layer this profile onto their core NIST AI RMF implementation rather than treating generative AI as a separate compliance initiative.

While NIST AI RMF doesn’t mandate specific formats, effective implementation requires a comprehensive AI inventory, model cards documenting capabilities and limitations, risk assessments for each system, governance policies, incident response playbooks, monitoring dashboards, and audit logs. Organizations should create documentation templates customized to sector requirements rather than relying on generic compliance forms.
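Because the framework leaves formats open, a documentation template can be whatever structure your reviewers can check automatically. The sketch below shows one possible model card shape with a completeness check; the `ModelCard` field names and the `missing_fields` helper are assumptions for illustration, not a format defined by NIST.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ModelCard:
    system_name: str
    owner: str
    intended_use: str
    limitations: list = field(default_factory=list)
    training_data_summary: str = ""
    risk_rating: str = ""


def missing_fields(card):
    """List fields left empty so incomplete cards can be flagged in review."""
    return [f.name for f in fields(card) if not getattr(card, f.name)]


card = ModelCard(
    system_name="support-chatbot",
    owner="cx-team",
    intended_use="Answer billing questions",
    limitations=["English only"],
)
todo = missing_fields(card)
```

In this example the card would be flagged for a missing training data summary and risk rating before it enters the inventory.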

NIST AI RMF provides an operational methodology that organizations use to satisfy EU requirements. The frameworks operate at different levels: NIST describes how to manage AI risk, while the EU AI Act defines legal obligations. Key mappings include Article 9 risk management to NIST Map/Manage, Article 14 human oversight to Manage controls, and Article 72 post-market monitoring (Article 61 in earlier drafts) to continuous Measure functions.