Key Takeaways

- When your contracted team builds non-compliant AI, regulatory authorities target the company that places the system on the market under its own brand: you.

- Most vendors in your evaluation pipeline cannot show completed conformity assessments or Annex IV documentation from actual projects, only promises.

- Development partners who position compliance as end-of-project work don’t understand that the EU AI Act requires design-stage documentation, which cannot be retrofitted.

- Real compliance expertise shows up in documentation frameworks vendors have already used on previous projects, not in generic templates downloaded from the internet.

You’re planning to hire a software development partner to build AI-powered features for your product. The shortlist looks promising. Technical capabilities check out. Project timelines seem reasonable. Your chosen vendor claims they “understand compliance.”

Here is where things get interesting: 78% of organizations have not taken meaningful steps toward EU AI Act compliance, according to an analysis spanning eight industries. That population likely includes some of the development companies sitting in your evaluation pipeline right now.

The EU AI Act is a comprehensive legal framework established by the European Union to regulate AI systems according to their risk, with the aim of protecting fundamental rights and setting a global benchmark for AI governance. It classifies AI models and systems into risk tiers, imposes strict requirements on high-risk applications, and sets transparency obligations for organizations operating in the EU market. It also aims to foster innovation, support small and medium-sized enterprises (SMEs) by reducing compliance costs, and build consumer trust by ensuring that AI adoption respects safety, ethical principles, and fundamental rights.

The Act sits within a broader landscape of AI regulation that includes related laws such as the GDPR, so organizations need to prepare for several frameworks at once and integrate them into a single AI governance program. Private organizations, public authorities, and product manufacturers all carry obligations under the Act, while the European Commission oversees its implementation.

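August 2, 2026 is the date most high-risk AI obligations under the EU Artificial Intelligence Act become enforceable. The Act entered into force on August 1, 2024, with phased implementation: prohibitions on unacceptable-risk AI have applied since February 2, 2025, obligations for the high-risk systems listed in Annex III take effect on August 2, 2026, and high-risk AI embedded in products covered by Annex I follows in August 2027. If your contracted team builds non-compliant AI into your product, the liability lands on you, not them. Penalties for prohibited practices reach €35 million or 7% of worldwide annual turnover, whichever is higher; most other violations, including breaches of high-risk obligations, carry fines of up to €15 million or 3%.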
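
The “whichever is higher” rule is simple arithmetic, but the result depends heavily on company size. The sketch below encodes the three Article 99 caps as we read them (€35M/7% for prohibited practices, €15M/3% for most other obligations, €7.5M/1% for supplying incorrect information to authorities), plus the Article 99(6) rule that SMEs and start-ups face whichever cap is lower. The tier names and the `max_fine_eur` function are hypothetical; treat this as an illustration, not legal advice.

```python
# Maximum administrative fines under Article 99 of the EU AI Act, as we read it.
# Each tier: (fixed cap in EUR, percentage of worldwide annual turnover).
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "incorrect_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, worldwide_turnover_eur: int, is_sme: bool = False) -> int:
    """Return the fine ceiling for a violation tier (a cap, not a predicted fine).

    Larger undertakings face whichever cap is higher; SMEs and start-ups
    face whichever is lower (Article 99(6)).
    """
    fixed_cap, pct = PENALTY_TIERS[tier]
    turnover_cap = worldwide_turnover_eur * pct // 100  # integer euros, rounded down
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)


if __name__ == "__main__":
    # EUR 2 billion turnover: 7% (EUR 140M) exceeds the EUR 35M fixed cap.
    print(max_fine_eur("prohibited_practice", 2_000_000_000))  # 140000000
    # EUR 100 million turnover: the EUR 35M fixed cap exceeds 7% (EUR 7M).
    print(max_fine_eur("prohibited_practice", 100_000_000))  # 35000000
    # Same turnover, but an SME: the lower of the two caps applies.
    print(max_fine_eur("prohibited_practice", 100_000_000, is_sme=True))  # 7000000
```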

This checklist walks you through what to verify when evaluating software development companies, what documentation to request in RFPs, and which certifications actually matter.

Read our Enterprise AI Compliance & Governance 2026 Guide for a detailed view of the entire compliance landscape and how to proceed.

Why You Can’t Retrofit EU AI Act Compliance

What makes the EU AI Act different from previous regulations? You can’t retrofit compliance after launch. The Act requires design-stage documentation, training data provenance tracking, and architectural decisions that must be made before development starts. If your development partner doesn’t understand this, you’re signing a contract to build a product you legally cannot sell in European markets. The Act also has extraterritorial reach: entities offering AI products or services in the EU must comply, regardless of where they are based.

Early documentation and classification are essential, but organizations must also ensure their staff have sufficient AI literacy, an obligation that has applied since February 2, 2025. A robust AI strategy matters just as much, because it ties together data governance, AI readiness, and organizational trust.

Robert Gelo, Senior Consultant at Vision Compliance, puts it straight:

“Most organizations are aware the AI Act exists, but very few understand what it actually requires of them. The regulation goes well beyond policy statements. It requires organizations to classify every AI system they operate, document how those systems were built and tested, and maintain ongoing human oversight.”

Over half of organizations lack systematic AI inventories, which means they can’t even identify which systems need compliance attention. If the development firm you’re evaluating can’t inventory the AI it builds, it can’t classify, assess, document, or prove compliance for that AI. Mapping existing AI systems, including the distinct tasks each AI model performs, is a critical first step for scoping the work and placing each system under the right legal regime of the EU AI Act. It is also essential to appoint a lead or dedicated compliance officer with the knowledge and resources to oversee these efforts.
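
An inventory doesn’t need to be elaborate to be useful. Below is a minimal sketch of one record per AI system, with the distinct tasks each model performs listed separately so they can be classified individually. The field names, the `RiskTier` enum, and the `needs_attention` helper are hypothetical; a real inventory would typically live in a database or governance tool rather than in code.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"
    UNCLASSIFIED = "unclassified"  # inventoried, but not yet assessed


@dataclass
class AISystemRecord:
    """One row in a hypothetical AI inventory."""
    system_id: str
    owner: str                   # accountable team or person
    model_components: list[str]  # models or third-party model APIs the system calls
    tasks: list[str]             # distinct tasks the models perform
    serves_eu_users: bool
    risk_tier: RiskTier = RiskTier.UNCLASSIFIED


def needs_attention(inventory: list[AISystemRecord]) -> list[str]:
    """IDs of EU-facing systems that are unassessed, high-risk, or prohibited."""
    flagged = {RiskTier.UNCLASSIFIED, RiskTier.HIGH, RiskTier.UNACCEPTABLE}
    return [r.system_id for r in inventory if r.serves_eu_users and r.risk_tier in flagged]


if __name__ == "__main__":
    inventory = [
        AISystemRecord("support-bot", "cx-team", ["hosted-llm"],
                       ["answer customer questions"], True, RiskTier.LIMITED),
        AISystemRecord("loan-router", "risk-team", ["gbm-scorer"],
                       ["score applicants", "route applications"], True),
    ]
    print(needs_attention(inventory))  # ['loan-router']
```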

The question isn’t whether your development partner mentions compliance in their proposal. The question is whether they can prove they’ve built it into their engineering workflow with documentation from actual projects.

Why Liability Doesn’t Stay with Your Development Partner

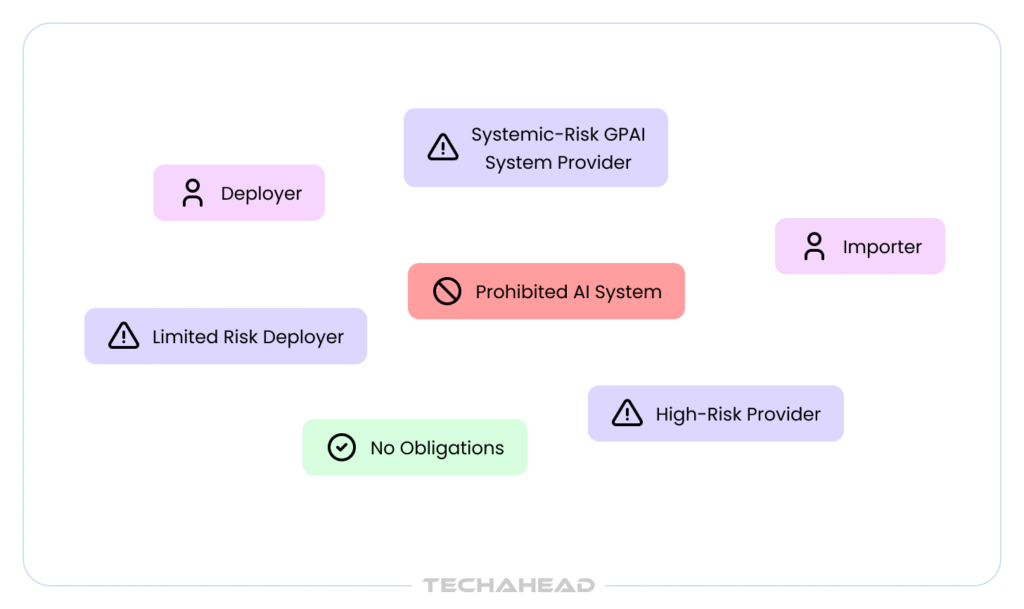

Before you evaluate any development firm, understand who carries what liability under the EU AI Act. The regulation assigns responsibility based on functional roles, and most companies hiring software teams get this completely wrong until it’s too late. When deploying AI systems, it is crucial to assess and manage risks to the health, safety, and fundamental rights of natural persons, ensuring these rights are safeguarded throughout the lifecycle of the AI application. Public authorities, as well as private organizations, are subject to these obligations under the Act.

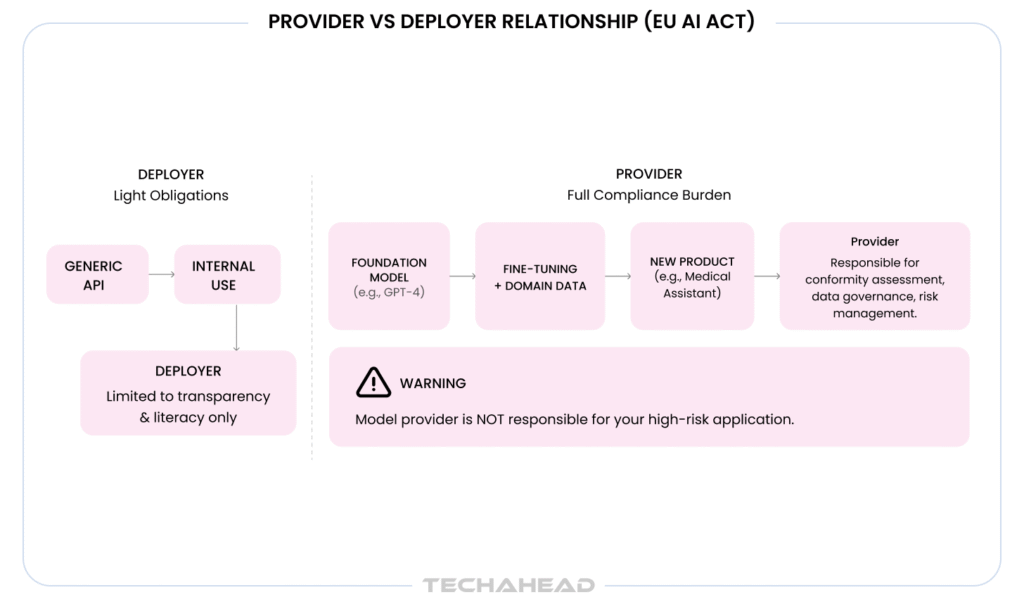

The Provider-Deployer Liability Split

Your contracted development team acts as a provider (developing AI systems), while you become the deployer (using AI systems in a professional context). Providers carry the heaviest obligations:

- Technical documentation (Annex IV requirements)

- Conformity assessment before market launch

- CE marking for high-risk systems

- Post-market monitoring infrastructure

- Quality management system (required under Article 17)

Specific rules apply to different categories of AI system, such as high-risk AI or AI that interacts with individuals, to ensure safety, ethics, and respect for fundamental rights under the EU AI Act. AI systems used exclusively for military, defense, or national security purposes, and AI developed and put into service solely for scientific research and development, fall outside the Act’s scope.

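But here is the liability trap few people talk about: even though your development partner builds the system, you’re the company placing it on the market under your brand. The Act defines a provider as anyone who develops an AI system, or has one developed, and places it on the market under its own name or trademark. So when regulatory authorities take enforcement action, they target you, not the contracted team.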

If your AI-powered recruitment tool discriminates against protected groups, the million-dollar fine lands on you. Your contract might include indemnification clauses, but those trigger after you’ve already paid the penalty and suffered reputational damage.

You’re also the deployer, creating dual obligations:

- Human oversight implementation

- System monitoring and performance tracking

- Incident reporting to authorities within 15 days

- Maintaining audit logs for at least six months, longer in some sectors
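
On the audit-log obligation in the list above: Articles 19 and 26(6) set a floor of at least six months for keeping the automatically generated logs of high-risk systems, unless other EU or national law provides otherwise. The sketch below shows one common way to make a decision log tamper-evident, chaining each entry to the hash of the previous one, plus a helper for the earliest date an entry may be deleted. Hash chaining is our choice of technique, not something the Act prescribes, and `DecisionLog` and its field names are hypothetical.

```python
import calendar
import hashlib
import json
from datetime import date, datetime, timezone
from typing import Optional

GENESIS_HASH = "0" * 64


def add_months(d: date, months: int) -> date:
    """Add calendar months, clamping the day to the end of the target month."""
    month_index = d.month - 1 + months
    year = d.year + month_index // 12
    month = month_index % 12 + 1
    day = min(d.day, calendar.monthrange(year, month)[1])
    return date(year, month, day)


def earliest_deletion_date(logged_on: date, retention_months: int = 6) -> date:
    """Six months is the floor; sector rules may require a longer period."""
    return add_months(logged_on, retention_months)


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class DecisionLog:
    """Append-only log of AI decisions; each entry commits to the previous one."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, system_id: str, decision: str, reviewer: Optional[str] = None) -> dict:
        body = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "system_id": system_id,
            "decision": decision,
            "human_reviewer": reviewer,  # None if no human reviewed this decision
            "prev_hash": self.entries[-1]["hash"] if self.entries else GENESIS_HASH,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """False if any entry was edited or a non-final entry was removed."""
        prev = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = DecisionLog()
    log.append("loan-router", "declined", reviewer="analyst-7")
    print(log.verify())                      # True
    log.entries[0]["decision"] = "approved"  # simulated tampering
    print(log.verify())                      # False
    print(earliest_deletion_date(date(2026, 8, 31)))  # 2027-02-28
```

Note that removing the most recent entry would go undetected by the chain alone; in practice the latest hash is usually also stored somewhere the log writer can’t modify.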

Why “We’ll Handle Compliance” Means You’re Paying Them to Learn

When your development partner doesn’t have compliance infrastructure already in place, you’re not just paying for development. You’re paying for them to figure out EU AI Act requirements on your project budget. The learning curve shows up in three ways:

Missed requirements during scoping: They quote you for feature development but later discover the AI system requires conformity assessment, adding $100K-$200K they didn’t budget for.

Retrofit documentation costs: They build first and document later, which costs 4-5x more than building documentation into the development process.

Launch delays: You’re ready to ship, but they can’t produce the technical documentation or conformity assessment declarations regulators require, blocking your market entry for 6-12 months.

Sachin Siwal, Technical Manager, Delivery at TechAhead, who has overseen compliance implementation across 500+ AI projects, explains:

“The line between provider and deployer responsibility is where most companies discover hidden compliance gaps after the contract is signed. A development team that claims compliance expertise but can’t produce process documentation is a massive risk.”

What to Actually Verify in Your RFP Process

Stop accepting vague compliance commitments. Request specific evidence:

ISO 42001:2023 Certification:

This is the international standard for AI management systems. If they don’t have it, ask when their certification audit is scheduled. If they say “we’re working toward it,” that means no.

Annex IV Documentation Templates:

Ask for anonymized samples from previous AI projects. If they can’t provide actual templates they’ve used in production, they’ve never completed conformity assessment for anyone.

Risk Classification Methodology:

Request their documented process for mapping AI features to Annex III categories. If they say “we evaluate case-by-case,” they don’t have a repeatable methodology.

Completed Conformity Assessments:

Ask for client references who launched high-risk AI systems in EU markets. Verify those clients actually went live, not just completed development.

Evidence of Embedded Compliance:

Request examples showing how their development process generates compliance documentation during sprints, not as an afterthought. If documentation happens “at the end of the project,” it’s retrofit work priced into your contract.

TechAhead’s ISO 42001:2023 and SOC 2 Type II certifications aren’t marketing materials. They’re third-party audited proof that our AI governance framework operates across every client engagement. When prospects ask us to demonstrate compliance capability, we show them the actual documentation frameworks, risk classification matrices, and quality management procedures we used on 2,500+ platform launches across healthcare, finance, and regulated enterprise markets. Not promises. Evidence that’s already been validated by independent auditors.

Checkpoint 1: Verify Their Risk Classification Expertise

The EU AI Act categorizes AI systems into four risk tiers. Correct classification determines whether your product needs a $10,000 review or a $500,000 conformity assessment. If your development partner gets this wrong, you discover it during a regulatory audit, not during code review.

When evaluating AI systems, also account for general-purpose AI (GPAI) models, such as the GPT models that power products like Copilot. These models can competently perform a wide range of distinct tasks across domains, and the Act regulates them separately from the four tiers: their providers must meet dedicated obligations, including technical documentation and a copyright compliance policy.

What Competent Firms Can Demonstrate

Ask potential partners: “How do you classify AI systems by risk tier?”

Red flag answers:

- We’ll work with your legal team to figure that out

- Most AI isn’t high-risk, so we usually don’t worry about it

- We classify everything as high-risk to be safe

Answers that demonstrate expertise:

- Describes a documented methodology mapping features to Annex III categories

- Explains the difference between unacceptable, high-risk, limited-risk, and minimal-risk tiers

- Cites specific examples from previous projects where they identified high-risk systems early

The Four Risk Tiers:

Unacceptable-risk AI (banned): Social scoring, manipulative AI, and real-time remote biometric identification in publicly accessible spaces for law enforcement are among the practices the Act prohibits because of their threat to safety, rights, or societal values. These practices are banned outright, with only narrow exceptions, such as tightly limited law-enforcement uses of real-time biometric identification.

High-risk AI (full compliance required): Employment screening, credit scoring, healthcare diagnostics, biometric systems, and AI used in critical infrastructure or law enforcement. These must comply with strict requirements such as risk assessment, data quality, technical documentation, CE marking, and post-market monitoring.

Limited-risk AI (transparency only): Chatbots, deepfakes, AI-generated content. These require transparency obligations, such as informing individuals when interacting with AI systems.

Minimal-risk AI (negligible obligations): Internal tools, productivity features, entertainment AI, games, and spam filters. These minimal risk AI systems can be used freely without stringent regulatory requirements under the EU AI Act.

Present this scenario to shortlisted companies: “We want to build a SaaS platform with inventory forecasting, a customer support chatbot, and automated credit approval. How would you classify each component?”

If they answer “the whole platform is high-risk,” they don’t understand that individual features require separate classification.
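
A repeatable methodology is, at its core, a rule table applied feature by feature, where the most severe match wins and every decision cites its legal basis. The sketch below applies that idea to the three-component scenario above. The tags and rules are hypothetical and deliberately incomplete: Annex III point 5(b) covers creditworthiness assessment of natural persons, so a business-lending feature would need its own analysis, and assigning tags to features is itself a human judgment that should be documented.

```python
from enum import Enum


class RiskTier(Enum):
    MINIMAL = 1
    LIMITED = 2
    HIGH = 3
    UNACCEPTABLE = 4


# Hypothetical rule table: (feature tag, tier, legal basis to cite in the record).
RULES = [
    ("social_scoring", RiskTier.UNACCEPTABLE, "Article 5 prohibited practice"),
    ("recruitment", RiskTier.HIGH, "Annex III, point 4 (employment)"),
    ("creditworthiness", RiskTier.HIGH, "Annex III, point 5(b)"),
    ("chatbot", RiskTier.LIMITED, "Article 50 transparency duty"),
]


def classify(feature_tags: set[str]) -> tuple[RiskTier, str]:
    """Return the most severe tier any rule assigns, with its legal basis."""
    matches = [(tier, basis) for tag, tier, basis in RULES if tag in feature_tags]
    if not matches:
        return RiskTier.MINIMAL, "no rule matched; record the reasoning"
    return max(matches, key=lambda match: match[0].value)


# The SaaS scenario, classified per component rather than per platform.
platform = {
    "inventory_forecasting": {"forecasting"},
    "support_chatbot": {"chatbot"},
    "credit_approval": {"creditworthiness"},
}

for feature, tags in platform.items():
    tier, basis = classify(tags)
    print(f"{feature}: {tier.name} ({basis})")
```

Only `credit_approval` comes out high-risk, which is exactly the per-feature answer a competent partner should give for this scenario.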

TechAhead’s risk classification methodology caught a fintech client’s “intelligent loan routing” feature crossing into high-risk creditworthiness assessment territory during requirements gathering. Early identification prevented a six-month launch delay and $800K in remediation costs.

To streamline this process, organizations can utilize a compliance checker – a tool designed to help determine adherence to the EU AI Act and provide a clear overview of complex compliance logic.

Checkpoint 2: Demand Proof of Documentation Capabilities

According to compliance professionals, some development firms now charge 20-30% more to cover certification costs and engineering overhead. Translation: many of the companies you’re evaluating don’t have documentation infrastructure built in. They’re pricing the cost of building it into your contract, which means you’re funding the development of their compliance capability instead of benefiting from existing expertise.

What to Request in Your RFP

Include this specific requirement: “Please provide a sample Annex IV technical documentation template from a previous AI project (anonymized to protect client confidentiality).”

If they can’t provide this, they’ve never completed conformity assessment for anyone. You’ll be their first attempt, and first attempts rarely go smoothly when regulatory deadlines are involved.

If they provide generic templates downloaded from the internet, they’re not actually using them in production. Ask follow-up questions about specific sections to see if they understand what each documentation requirement actually means in practice.

If they provide actual documentation frameworks integrated into their development workflow, verify they can explain how the templates populate automatically during development versus requiring manual completion at project end.
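
What does “populating automatically” look like? One common pattern is for the training pipeline to write a documentation record as a side effect of every run, so data provenance, hyperparameters, and validation results are captured when they happen rather than reconstructed later. The sketch below is a minimal version of that pattern; the function names, record fields, and file layout are hypothetical, and the sample data and metric value are illustrative only.

```python
import hashlib
import json
import tempfile
from datetime import datetime, timezone
from pathlib import Path


def sha256_of_file(path: Path) -> str:
    """Content hash, so the record pins the exact training data used."""
    digest = hashlib.sha256()
    with path.open("rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_training_record(model_version: str, data_files: list[Path], hyperparams: dict,
                          metrics: dict, design_rationale: str) -> dict:
    """Assemble a documentation record at training time (fields are hypothetical)."""
    return {
        "model_version": model_version,
        "created_at": datetime.now(timezone.utc).isoformat(),
        "data_provenance": {str(p): sha256_of_file(p) for p in data_files},
        "hyperparameters": hyperparams,
        "validation_metrics": metrics,
        "design_rationale": design_rationale,
    }


def write_record(record: dict, out_dir: Path) -> Path:
    """Store the record next to the model artifact, one file per model version."""
    out_path = out_dir / f"training_record_{record['model_version']}.json"
    out_path.write_text(json.dumps(record, indent=2, sort_keys=True))
    return out_path


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as tmp:
        data = Path(tmp) / "applications_sample.csv"
        data.write_text("income,approved\n52000,1\n31000,0\n")
        record = build_training_record(
            model_version="credit-scorer-1.4.0",
            data_files=[data],
            hyperparams={"max_depth": 6, "learning_rate": 0.1},
            metrics={"auc": 0.81},  # illustrative value
            design_rationale="Gradient boosting chosen for tabular data and explainability tooling.",
        )
        print(write_record(record, Path(tmp)).name)
```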

The Eight Documentation Areas That Expose Inexperience

Annex IV’s technical documentation requirements can be grouped into eight practical areas. Ask your shortlisted partners to walk you through their process for each. Most development teams can fumble through explanations for two or three of them. Competent, compliance-ready firms can give concrete examples for all eight, naming the specific tools and processes they use.

| Documentation Area | Key Questions to Ask |
|---|---|
| System Purpose & Limitations | How do they document intended use cases, contraindications, and scope boundaries? Do they use requirements management tools or spreadsheets? |
| Architecture & Design Choices | Do they maintain ADRs (Architecture Decision Records)? Can they show decision history linking requirements to implementations? |
| Data Governance | How do they track training data provenance? Can they prove data lineage from source to model with quality metrics and versioning? |
| Training & Validation | Are training procedures automatically logged or manually documented? How do they capture hyperparameters and validation methodologies? |
| Performance Metrics | Do they test accuracy across demographic groups? What fairness metrics do they track? How do they document edge cases? |
| Risk Management | How do they identify and document risks during development? What’s their process for tracking mitigation strategies? |
| Human Oversight | Can they show concrete human-in-the-loop workflow examples? How do they document override capabilities? |
| Change Management | How do they link model versions to documentation updates? Does documentation auto-update when models are retrained? |

The gap between firms with mature documentation practices and those without shows up immediately when you ask these questions. Firms without established processes give vague answers about “best practices” or “working with your team.” Firms with real expertise walk you through specific tools, templates, and automated workflows they use on every project.
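
The Change Management row is the easiest one to test in a live conversation: ask what happens to the documentation when a model is retrained. A minimal automated answer is a check, run in CI, that flags any of the eight areas that is missing or was last updated for an older model version. The area names come from the table above (this article’s grouping, not Annex IV’s numbering); the version-tracking scheme and the `documentation_gaps` function are hypothetical.

```python
# The eight documentation areas from the table above.
REQUIRED_AREAS = (
    "System Purpose & Limitations",
    "Architecture & Design Choices",
    "Data Governance",
    "Training & Validation",
    "Performance Metrics",
    "Risk Management",
    "Human Oversight",
    "Change Management",
)


def documentation_gaps(deployed_version: str, docs: dict[str, str]) -> dict[str, list[str]]:
    """Compare documentation sections against the deployed model version.

    `docs` maps each area name to the model version its section was last
    updated for. Returns the areas that are missing and those that are stale.
    """
    missing = [area for area in REQUIRED_AREAS if area not in docs]
    stale = [area for area in REQUIRED_AREAS
             if area in docs and docs[area] != deployed_version]
    return {"missing": missing, "stale": stale}


if __name__ == "__main__":
    docs = {area: "1.4.0" for area in REQUIRED_AREAS}
    docs["Performance Metrics"] = "1.3.0"  # not refreshed after the last retraining
    del docs["Human Oversight"]            # section never written
    print(documentation_gaps("1.4.0", docs))
    # {'missing': ['Human Oversight'], 'stale': ['Performance Metrics']}
```

In a pipeline, a non-empty `missing` or `stale` list would fail the build, which is what “documentation auto-updates when models are retrained” should mean in practice.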

Checkpoint 3: Assess Their Conformity Assessment Experience

Conformity assessments cost $10,000-$50,000 per system, and timelines run 3-12 months. Ask: “Have you completed conformity assessments for other clients? Can you share anonymized declarations of conformity?”

- If they say “we’re working toward that capability” → You’re paying for them to learn.

- If they say “we partner with compliance consultants” → Verify those consultants have actual EU AI Act experience.

- If they say “yes” and provide evidence → Request client references who launched AI products in EU markets.

Self-assessment requires quality management systems, complete Annex IV documentation, risk management activities, bias testing with statistical results, human oversight implementation, and automatic logging.
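
“Bias testing with statistical results” can start as simply as comparing outcomes across groups. The sketch below computes per-group selection rates and accuracy for a binary classifier and reports the largest gap between groups. Which metric matters, and what gap is acceptable, depends on the use case; the Act doesn’t prescribe specific fairness metrics or thresholds, and a real assessment would add sample-size and significance checks. The function names and sample data are hypothetical.

```python
from collections import defaultdict


def group_metrics(records: list[tuple[str, int, int]]) -> dict[str, dict[str, float]]:
    """Per-group selection rate and accuracy from (group, y_true, y_pred) rows.

    Labels and predictions are binary: 1 = positive outcome (e.g. shortlisted).
    """
    counts = defaultdict(lambda: {"n": 0, "selected": 0, "correct": 0})
    for group, y_true, y_pred in records:
        c = counts[group]
        c["n"] += 1
        c["selected"] += y_pred
        c["correct"] += int(y_true == y_pred)
    return {
        g: {"selection_rate": c["selected"] / c["n"], "accuracy": c["correct"] / c["n"]}
        for g, c in counts.items()
    }


def max_gap(metrics: dict[str, dict[str, float]], key: str) -> float:
    """Largest difference in one metric between any two groups."""
    values = [m[key] for m in metrics.values()]
    return max(values) - min(values)


if __name__ == "__main__":
    # Hypothetical screening results: (group, actual outcome, model prediction).
    records = [("A", 1, 1), ("A", 0, 0), ("A", 1, 1), ("A", 0, 1),
               ("B", 1, 0), ("B", 0, 0), ("B", 1, 1), ("B", 0, 0)]
    metrics = group_metrics(records)
    print(metrics)
    print("selection-rate gap:", max_gap(metrics, "selection_rate"))  # 0.5
    print("accuracy gap:", max_gap(metrics, "accuracy"))               # 0.0
```

Here both groups get 75% accuracy, yet group A is selected three times as often as group B (0.75 vs. 0.25), a disparity that an accuracy-only test would hide.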

Ask: “Walk me through your quality management system for AI projects.” If they can’t, they’re not ready.

Conformity assessment isn’t just regulatory theater. It’s the technical proof point that enterprise buyers demand. Development firms without completed assessments can’t close enterprise deals.

Checkpoint 4: Evaluate Their Post-Market Monitoring Infrastructure

Compliance doesn’t end at launch. Large enterprises can spend around $1 million annually on compliance once systems are in production.

Ask potential development partners: “What monitoring infrastructure do you build into AI systems to support post-market obligations?”

Red flags:

- “We can add logging if you need it”

- “Your ops team will handle monitoring”

Answers demonstrating expertise:

- Describes automated drift detection and performance tracking

- Explains how they log AI decisions for regulatory audit trails

- Discusses retention periods (6-24 months depending on use case)

- Shows examples of monitoring dashboards from previous projects
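
Automated drift detection often starts with a distribution comparison between training-time data and recent production data. The sketch below uses the Population Stability Index (PSI), a metric common in credit-risk model monitoring; it’s one technique among several, not one the Act specifies. The usual reading (below 0.1 stable, 0.1 to 0.25 worth investigating, above 0.25 significant drift) is an industry rule of thumb, and the bin edges and score samples here are hypothetical.

```python
import bisect
import math


def to_proportions(values: list[float], edges: list[float]) -> list[float]:
    """Bin values by sorted edges (len(edges) + 1 bins) and return bin shares."""
    counts = [0] * (len(edges) + 1)
    for v in values:
        counts[bisect.bisect_right(edges, v)] += 1
    return [c / len(values) for c in counts]


def population_stability_index(expected: list[float], actual: list[float],
                               eps: float = 1e-6) -> float:
    """PSI = sum((a - e) * ln(a / e)) over bins; eps guards against empty bins."""
    if len(expected) != len(actual):
        raise ValueError("both distributions must use the same bins")
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, eps), max(a, eps)
        psi += (a - e) * math.log(a / e)
    return psi


if __name__ == "__main__":
    edges = [0.25, 0.5, 0.75]  # hypothetical score bins
    baseline = to_proportions([0.1, 0.3, 0.6, 0.9] * 25, edges)  # training-time scores
    recent = to_proportions([0.6, 0.9] * 50, edges)              # recent production scores
    psi = population_stability_index(baseline, recent)
    print(f"PSI = {psi:.2f}", "-> investigate" if psi > 0.25 else "-> stable")
```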

Under the EU AI Act, serious incidents must be reported to authorities within 15 days. If they haven’t thought through incident detection, classification, and reporting procedures, your first regulatory violation becomes their learning experience.
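
Incident procedures are easier to audit when the deadlines are encoded rather than remembered. As we read Article 73, the general deadline is 15 days from becoming aware of a serious incident, shortened to 10 days where a person has died and to 2 days for widespread infringements or serious disruption of critical infrastructure; in every case, reporting is expected immediately once the link to the AI system is established. The sketch below assumes calendar days counted from the moment of awareness; the category names and functions are hypothetical.

```python
from datetime import datetime, timedelta, timezone
from enum import Enum


class IncidentCategory(Enum):
    # Value = maximum days to report after becoming aware (Article 73, as we read it).
    SERIOUS_INCIDENT = 15
    DEATH = 10
    WIDESPREAD_OR_CRITICAL_INFRASTRUCTURE = 2


def report_deadline(category: IncidentCategory, became_aware: datetime) -> datetime:
    """Latest permissible report time; earlier reporting is always expected."""
    return became_aware + timedelta(days=category.value)


def is_overdue(category: IncidentCategory, became_aware: datetime, now: datetime) -> bool:
    return now > report_deadline(category, became_aware)


if __name__ == "__main__":
    aware = datetime(2026, 9, 1, 9, 0, tzinfo=timezone.utc)
    for category in IncidentCategory:
        print(category.name, report_deadline(category, aware).date())
```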

TechAhead’s SOC 2 Type II-certified monitoring infrastructure tracks both EU AI Act’s 15-day serious-incident requirements and GDPR’s 72-hour breach notification deadlines. Our quarterly red-teaming exercises and monthly drift detection reviews surface issues before regulators do.

| Checkpoint | What to Verify | Red Flags |
|---|---|---|
| 1. Risk Classification Expertise | Documented methodology mapping features to Annex III categories. Ask: “How do you classify AI by risk tier?” Demand specific project examples. | “We’ll figure it out with your legal team” or “We classify everything as high-risk” |
| 2. Documentation Capabilities | Anonymized Annex IV templates from completed projects. Verify auto-generated documentation during sprints, not end-of-project manual work. | Generic internet templates, or “we document after development” |
| 3. Conformity Assessment Experience | Anonymized declarations of conformity. Client references with launched AI products in EU markets and completed assessments. | “We’re working toward that” or unverified compliance consultant partnerships |
| 4. Post-Market Monitoring | Automated drift detection, bias monitoring, and incident routing meeting the 15-day requirement, built into deployment from day one. | “We can add logging later” or “Your ops team handles monitoring” |

Additional Compliance Considerations for General Purpose AI Models and Data Governance

Providers of general-purpose AI (GPAI) models, such as the GPT models behind Copilot, must meet transparency requirements, put in place a policy to comply with EU copyright law, and maintain technical documentation as part of their obligations under the EU AI Act. Organizations should also raise awareness and train teams on the implications of the Act, including the risks and opportunities linked to AI use and related regulations.

To meet the obligations of the EU AI Act, organizations must implement the necessary security measures, establish technical documentation, and comply with data governance and transparency requirements. Data privacy is a key part of data governance: protecting sensitive information builds trust and transparency in AI systems.

The EU AI Act complements the General Data Protection Regulation (GDPR). Together they set a baseline that is shaping compliance practices beyond the EU and promoting innovation, transparency, and consumer confidence in AI and data management.

What This Actually Costs (And Why Lower Bids Are Dangerous)

Understanding the true cost of EU AI Act compliance helps you evaluate proposals accurately. Development firms price compliance in dramatically different ways depending on whether they have built-in infrastructure or are learning on your budget.

Development Firms with Built-In Compliance Infrastructure

Documentation (embedded in development sprints): 10-15% of development budget

This appears as line items distributed throughout the project, not lumped together as “compliance overhead.” You’re paying for documentation that generates automatically during development, not retrofit work after the fact.

Conformity assessment preparation: $100K-$400K (includes QMS implementation)

This happens in parallel with development for firms with mature processes. The assessment draws from documentation already created during sprints, so it doesn’t block your launch timeline. This includes the actual conformity assessment ($10K-$50K per system), Quality Management System implementation if needed ($200K-$350K), and integration of compliance frameworks into existing workflows.

Ongoing monitoring infrastructure (annual): $150K-$300K (includes bias monitoring and incident classification)

Post-market monitoring systems built into your deployment architecture from day one, with automated drift detection, incident logging, and compliance reporting already operational when you launch. This covers continuous performance tracking, bias monitoring across demographic groups, incident classification and reporting infrastructure, and quarterly compliance validation reviews.

Development Firms Without Built-In Compliance Infrastructure

This is where proposals that look cheaper upfront become catastrophically expensive.

Retrofit assessment (discovered after development): $50K-$150K

They’ll need to reverse-engineer design decisions they didn’t document during development. This often surfaces 6-8 months into the project when someone finally asks about conformity assessment timelines.

Retrofit documentation creation: $200K-$500K

Creating Annex IV documentation retrospectively costs 4-5x more than building it during development. Why? Because engineers who wrote the code 8 months ago can’t remember why they made specific architectural decisions. Data scientists who trained models didn’t log hyperparameter selection rationale. No one tracked which data sources fed into which model versions.
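
To see how the 4-5x multiplier plays out, apply it to the embedded-documentation share quoted earlier (10-15% of the development budget). The sketch below does that arithmetic for a hypothetical $500K build. The ratios are this article’s estimates rather than measured benchmarks, and pairing the low share with 4x and the high share with 5x gives the widest plausible range.

```python
def documentation_cost_range(dev_budget: int,
                             embedded_pct: tuple[int, int] = (10, 15),
                             retrofit_multiplier: tuple[int, int] = (4, 5)) -> dict[str, tuple[int, int]]:
    """Embedded vs. retrofit documentation cost, using the article's ratios."""
    embedded_low = dev_budget * embedded_pct[0] // 100
    embedded_high = dev_budget * embedded_pct[1] // 100
    return {
        "embedded": (embedded_low, embedded_high),
        "retrofit": (embedded_low * retrofit_multiplier[0],
                     embedded_high * retrofit_multiplier[1]),
    }


print(documentation_cost_range(500_000))
# {'embedded': (50000, 75000), 'retrofit': (200000, 375000)}
```

On a $500K build, that puts retrofit documentation at roughly $200K-$375K, within the $200K-$500K range above.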

The Hidden Costs Nobody Talks About

Beyond direct compliance expenses, non-compliant development partners create cascading financial damage that doesn’t appear in project budgets. These costs compound over time and often exceed the original development investment.

Lost Market Access

Every month you can’t sell in EU markets because of compliance blockers is revenue you never recover. For enterprise software companies, EU markets often represent 30-40% of total addressable market.

Enterprise Procurement Delays

Development firms with mature compliance infrastructure help their clients close enterprise deals 40% faster because buyers receive compliance documentation during security reviews instead of promises to “handle it later.”

Investor Diligence Failures

If you’re raising capital, investors conducting technical due diligence will discover compliance gaps. This either tanks your valuation or blocks the round entirely until remediated.

The development partner with the lowest bid often delivers the highest total cost of ownership when compliance infrastructure is missing.

Your Final Evaluation Checklist: What to Verify Before Signing

When reviewing proposals from potential development partners, don’t accept surface-level compliance commitments. Dig deeper with these specific verification steps.

Certifications (Non-Negotiable Requirements)

ISO 42001:2023 (AI Management System):

This certification proves the firm has implemented systematic AI governance processes that external auditors verified. Ask when their last audit occurred and request a copy of the certificate. If they say “we’re pursuing certification,” ask for the audit schedule. No schedule means no real plans.

SOC 2 Type II (with AI-specific controls):

A standard SOC 2 report isn’t enough. SOC 2 is an attestation report rather than a certification, and its scope must explicitly cover AI system controls, including model versioning, bias testing, and post-market monitoring. Request the full SOC 2 report and verify that Section 4 includes AI-specific controls, not just generic IT security measures.

Evidence to Request in Your RFP (Not Optional)

Anonymized Conformity Assessment Declarations:

Request redacted declarations of conformity from at least two previous high-risk AI projects. These should include the CE marking process, notified body details (if third-party assessment), and conformity dates. If they’ve never completed one, they’ll be learning the process on your project.

Sample Technical Documentation:

Don’t accept generic templates. Request actual, anonymized Annex IV documentation from a completed project showing how they documented system purpose, data governance, training procedures, and risk management. The difference between template and completed documentation reveals whether they’ve actually done this work.

Client References with Launched Products:

Get contact information for at least three clients who launched AI systems in EU markets using this development partner. Verify these clients aren’t just still in development but actually completed conformity assessment and went live. Ask those references: “Did compliance blockers delay your launch? Did retrofit documentation costs exceed original estimates?”

Red Flags That Should Disqualify Immediately

Beyond missing certifications, watch for these deal-breakers in your evaluation:

| Red Flag | Why It Matters |
|---|---|
| “We’ll handle compliance at the end of development” | EU AI Act documentation must track design decisions during development. You can’t document design rationale retrospectively with credibility. |
| Cannot explain their risk classification methodology | If they can’t walk you through high-risk determination using Annex III categories, they don’t understand the regulation’s core framework. |
| No examples of previous conformity assessments | Claims of compliance expertise without completed conformity assessments mean you’re paying them to learn for the first time. |
| Vague timelines for certification | “We’re working on ISO 42001” without specific audit dates means it’s not happening. Real certification has concrete timelines. |
| Treating EU AI Act like GDPR | Talking about it as “just privacy and data protection” misses technical documentation, conformity assessment, and lifecycle monitoring requirements. |

How TechAhead Integrates EU AI Act Compliance Into Every AI Product

We’re not selling compliance as an add-on service that inflates your project budget by 20-30%. It’s embedded in how we build software, which is why our clients launch AI products in EU markets without the retrofit costs and delays that plague companies who chose development partners learning compliance on their budgets.

Our ISO 42001:2023 and SOC 2 Type II certifications are third-party audited proof that our engineering workflows, documentation standards, and quality management systems meet the highest AI governance standards available. We’ve launched 2,500+ platforms for clients including Starbucks, American Express, Audi, AXA, ESPN F1, JLL, and Hoag Memorial Hospital across healthcare, finance, automotive, and regulated enterprise markets where compliance failures don’t just delay launches, they block them entirely.

What This Looks Like in Practice

| Phase | What We Do | What You Get |
|---|---|---|
| Before Development (Month 1-2) | Risk classification workshops mapping features to Annex III categories and regulatory obligations (Article 10, Article 14, sector standards). | Compliance scope during estimation. Technical and compliance roadmap together. |
| During Development (Month 3-5) | Auto-update the AI inventory when engineers add services or models. Annex IV documentation generates with code commits (training procedures, hyperparameters, and design rationales captured automatically). | No manual tracking. No surprise dependencies. Documentation is automatic, not end-of-project. |
| At Launch (Month 6-9+) | Deliver complete conformity assessment documentation, CE marking, risk management evidence, and operational post-market monitoring. | Real compliance evidence for customer security reviews, not promises. |
| After Deployment (Ongoing) | SOC 2 Type II monitoring: automated drift detection, bias monitoring, and incident routing meeting the EU AI Act’s 15-day requirement. | Proactive issue detection. Continuous compliance without manual reviews. |

Quarterly red-teaming exercises, in which we actively try to break the AI systems, surface vulnerabilities before malicious actors do. Monthly compliance validation reviews keep documentation current as you add features or modify models.

This infrastructure accelerates our clients’ enterprise sales cycles by 40%, because security questionnaires get answered with documentation instead of vague promises. We enable day-one EU market access instead of 6-12 month remediation delays, and our compliance framework demonstrates the engineering maturity enterprise buyers demand.

Ready to connect with development partners who treat EU AI Act compliance as engineering infrastructure instead of legal paperwork added at the end?

Contact TechAhead to discuss how our certified governance framework delivers compliant, market-ready AI products without the retrofit costs that are currently pricing non-compliant competitors out of EU markets.

To test a vendor’s claim that they “understand compliance,” ask to see completed conformity assessments and EU declarations of conformity from previous projects. Real compliance expertise shows up in documentation frameworks they’ve already used, not in promises about what they’ll build. If they can’t walk you through their risk classification methodology using Annex III categories, they’re learning on your budget.

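The EU AI Act distinguishes providers from deployers. Providers develop AI systems, or have them developed, and place them on the market or put them into service under their own name or trademark; they carry the heaviest obligations, including technical documentation and conformity assessment. Deployers use AI systems under their authority in a professional capacity. Here’s the catch: when you hire a development partner to build AI that ships under your brand, the Act treats you as the provider, so enforcement actions target you even though your partner wrote the code.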

Compliance can’t be bolted on after development. Retrofitting it costs 4-5x more than building it in from the start, because EU AI Act documentation must track design decisions as they are made. Engineers can’t recreate architectural rationale months later, and you can’t document training data provenance, model selection logic, or risk management decisions retrospectively with any credibility regulators will accept.

Non-EU companies are covered as well. The Act applies when you place AI systems on the EU market or put them into service in the EU, or when your AI system’s output is used in the EU, regardless of where your company is established. Like GDPR, the regulation has extraterritorial reach. Your headquarters location doesn’t matter; what matters is where your AI operates and whom it affects. Non-EU development partners building high-risk systems for EU markets must comply fully.

Request anonymized Annex IV technical documentation from completed projects, not generic templates downloaded from the internet. Ask for client references that actually launched high-risk AI systems in EU markets with conformity assessments completed. Verify that their ISO/IEC 42001:2023 certification and SOC 2 Type II report cover AI-specific controls, not just generic IT security measures.

Present them with a real scenario: “We’re building a SaaS platform with credit approval, a customer chatbot, and inventory forecasting. How would you classify each component?” Competent firms explain the differences between the high-risk, limited-risk, and minimal-risk tiers and map each component to the relevant Annex III category or transparency obligation. Red flag: they call everything high-risk or say they’ll “figure it out later.”
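A first-pass answer to that scenario question can be sketched in Python. The sketch assumes the credit approval component evaluates the creditworthiness of natural persons (Annex III, point 5(b)) and that the chatbot interacts directly with customers (a transparency obligation under Article 50(1)). The tag names, `SCREENING_RULES`, and `classify` are hypothetical simplifications, and a real classification still needs legal review of each use case.

```python
"""Sketch: first-pass risk-tier screening for the example SaaS platform.

Tags and rules are hypothetical simplifications for illustration only.
"""
from enum import Enum


class RiskTier(Enum):
    HIGH = "high-risk"
    LIMITED = "limited-risk (transparency obligations)"
    MINIMAL = "minimal-risk"


# Hypothetical feature tag -> (tier, legal basis the tag triggers).
SCREENING_RULES = {
    "creditworthiness_of_natural_persons": (RiskTier.HIGH, "Annex III, point 5(b)"),
    "interacts_directly_with_people": (RiskTier.LIMITED, "Article 50(1)"),
}


def classify(component: str, tags: set) -> tuple:
    """Return (component, tier, basis), preferring the most restrictive matching tier."""
    matches = [SCREENING_RULES[tag] for tag in sorted(tags) if tag in SCREENING_RULES]
    for tier in (RiskTier.HIGH, RiskTier.LIMITED):
        for matched_tier, basis in matches:
            if matched_tier is tier:
                return component, tier, basis
    return component, RiskTier.MINIMAL, "no screening rule matched"


if __name__ == "__main__":
    platform = {
        "credit approval": {"creditworthiness_of_natural_persons"},
        "customer chatbot": {"interacts_directly_with_people"},
        "inventory forecasting": set(),
    }
    for name, tags in platform.items():
        component, tier, basis = classify(name, tags)
        print(f"{component}: {tier.value} ({basis})")
```

One design choice worth noting: a component can carry both high-risk and transparency obligations, but this sketch reports only the most restrictive tier, so treat its output as a starting point for the classification workshop rather than a final answer.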

Liability sits with you. Regulatory authorities target the company placing the system on the market under its brand, not the contracted team. Your development partner may do the technical work, but the Act treats the company that has a system developed and markets it under its own name as the provider, so enforcement actions hit you first. Your indemnification clauses trigger only after you’ve already paid penalties and suffered reputational damage.

The clearest red flag is a partner saying “we’ll handle compliance at the end of development.” It reveals they don’t understand that EU AI Act documentation must track design decisions during development sprints. Any partner positioning compliance as an end-of-project add-on instead of an embedded engineering practice will deliver retrofit costs you didn’t budget for.

Conformity assessment verifies that your high-risk AI system meets the Act’s requirements before it is placed on the market. Most Annex III systems follow the internal control procedure in Annex VI, a self-assessment by the provider. AI embedded in products already covered by EU product legislation, such as medical devices, goes through that legislation’s conformity assessment, which typically involves a third-party notified body. Development partners without completed assessments can’t show you the process; they can only promise they’ll figure it out.
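The routing described above can be sketched in Python as a simplified reading of Article 43. The function name and parameters are hypothetical; `annex_i_section_a_product` stands for products covered by the EU harmonisation legislation listed in Annex I, Section A, such as medical devices. Edge cases like partially applied standards, law-enforcement uses, and substantial modifications are deliberately left out.

```python
"""Sketch: pick the conformity assessment route for a high-risk AI system.

Simplified reading of Article 43; many edge cases are intentionally omitted.
"""
from typing import Optional


def conformity_route(
    annex_i_section_a_product: bool,
    annex_iii_point: Optional[int] = None,
    harmonised_standards_applied: bool = False,
) -> str:
    if annex_i_section_a_product:
        # e.g. AI in a medical device: follow that product law's procedure.
        return "sectoral procedure under the product's harmonisation legislation"
    if annex_iii_point == 1:
        # Biometrics: a choice of routes only if harmonised standards
        # (or common specifications) were applied; otherwise a notified body.
        if harmonised_standards_applied:
            return "internal control (Annex VI) or notified body (Annex VII)"
        return "notified body (Annex VII)"
    if annex_iii_point is not None and 2 <= annex_iii_point <= 8:
        # Remaining Annex III areas (critical infrastructure through justice).
        return "internal control (Annex VI)"
    raise ValueError("not a high-risk system under Annex I or Annex III")


if __name__ == "__main__":
    print(conformity_route(False, annex_iii_point=5))  # credit scoring area
    print(conformity_route(True))                      # AI inside a medical device
```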

Compliant partners build automated drift detection, performance tracking across demographic groups, and incident classification into the deployment architecture from day one. Post-market monitoring isn’t optional logging your ops team adds later. Under the EU AI Act, serious incidents must be reported to authorities no later than 15 days after you become aware of them, which demands automated detection infrastructure, not quarterly manual reviews.
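The three monitoring capabilities named above can be sketched in Python: a population stability index (PSI) for score or input drift, a per-group accuracy gap for demographic performance tracking, and a helper that computes the latest reporting date for a serious incident. PSI is one common drift metric among several, chosen here for illustration; the 0.1 and 0.25 bands are an industry rule of thumb, not a regulatory threshold, and all function names are hypothetical. The 15-day default reflects the general outer limit in Article 73; the Act sets shorter limits for some incidents (for example, 10 days where a death is involved), so `days` is left adjustable.

```python
"""Sketch: monitoring checks a post-market pipeline might automate.

Thresholds and names are hypothetical; PSI bands are a rule of thumb.
"""
import math
from datetime import date, timedelta


def population_stability_index(expected_counts, actual_counts, eps=1e-6):
    """PSI between a reference and a live histogram that share the same bins."""
    if len(expected_counts) != len(actual_counts):
        raise ValueError("histograms must use the same bins")
    e_total, a_total = sum(expected_counts), sum(actual_counts)
    psi = 0.0
    for e, a in zip(expected_counts, actual_counts):
        # Floor proportions at eps so empty bins don't produce log(0).
        e_p = max(e / e_total, eps)
        a_p = max(a / a_total, eps)
        psi += (a_p - e_p) * math.log(a_p / e_p)
    return psi


def drift_level(psi):
    if psi < 0.1:
        return "stable"
    return "moderate" if psi < 0.25 else "significant"


def max_accuracy_gap(results_by_group):
    """Largest accuracy difference between groups; maps group -> (correct, total)."""
    rates = [correct / total for correct, total in results_by_group.values() if total > 0]
    if not rates:
        raise ValueError("no group has any evaluated predictions")
    return max(rates) - min(rates)


def report_deadline(became_aware_on: date, days: int = 15) -> date:
    """Latest date to report a serious incident, counted from awareness."""
    return became_aware_on + timedelta(days=days)


if __name__ == "__main__":
    baseline = [120, 300, 380, 200]  # score histogram at validation time
    live = [40, 220, 420, 320]       # same bins, recent production traffic
    psi = population_stability_index(baseline, live)
    print(f"PSI={psi:.3f} -> {drift_level(psi)}")
    print(max_accuracy_gap({"group_a": (930, 1000), "group_b": (880, 1000)}))
    print(report_deadline(date(2025, 3, 3)))
```

In production, the drift and accuracy-gap checks would run on a schedule against logged predictions, and a breach would open an incident whose deadline is computed at the moment of awareness rather than at the next manual review.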