
Agentic AI development is the process of designing, building, and deploying AI systems that can reason, plan, use tools, and execute multi-step tasks autonomously toward a defined goal without requiring human prompting at each step. Unlike generative AI, which responds to instructions, agentic AI acts on objectives.

Most enterprise AI investments to date have followed a familiar pattern: a model receives a prompt, generates an output, and waits. That pattern is changing.

The shift toward agentic AI represents a fundamental change in how organizations deploy artificial intelligence. Instead of systems that answer questions, enterprises are now building systems that take actions, coordinate workflows, connect to internal tools, and operate continuously toward business goals.

Key Takeaways

- 96% of enterprises already use AI agents in some form, signaling rapid mainstream adoption. Organizations that scale responsibly outperform those rushing into fragmented deployments.

- AI agents can speed up business processes by 30–50%, especially in multi-step workflows.

- Architecture matters more than models. Success depends on orchestration, memory, and tool integration, not just LLM choice.

- Agentic AI is goal-driven, not prompt-driven. It executes tasks autonomously instead of waiting for instructions.

- Governance is not optional. Audit trails, access control, and escalation must be built from day one.

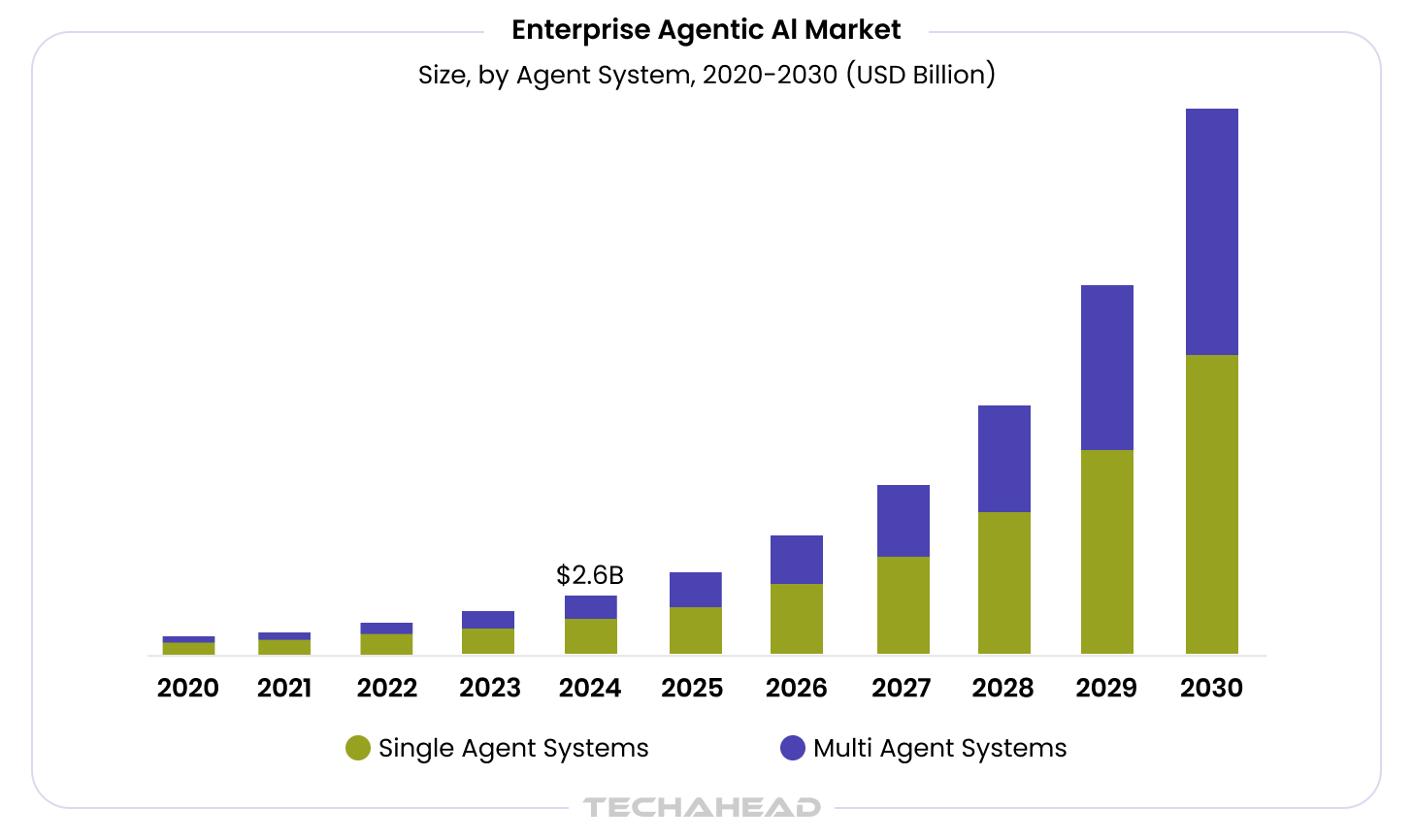

The global enterprise agentic AI market, estimated at $2.58 billion in 2024, is projected to reach $24.50 billion by 2030, a CAGR of 46.2% from 2025 to 2030. Growth at this rate is a clear sign that agentic AI adoption is substantial. Organizations looking for custom agentic AI development therefore want to move beyond experimentation and invest in scalable, governed systems that deliver measurable business outcomes while integrating seamlessly with their existing enterprise architecture.

This guide covers what agentic AI development actually involves, what architecture and infrastructure it requires, what realistic implementation looks like, and how to measure the business case. It also covers where agentic AI is not the right choice and what separates production-ready deployments from failed pilots.

Why This Matters Now: The State of Enterprise Agentic AI

The data clearly shows that enterprise agentic AI adoption is scaling. Twice as many executives expect AI agents to make autonomous decisions in processes and workflows by 2027, and industries are expected to see fully autonomous robotic systems with embodied AI by 2030 (IBM).

Gartner projects that 40 percent of enterprise applications will include task-specific AI agents by the end of 2026, up from less than 5 percent in 2025.

BCG research finds that well-deployed AI agents accelerate business processes by 30 to 50 percent. These numbers are not projections. They reflect outcomes from organizations that have moved agentic AI into production.

Agentic AI is expected to autonomously resolve 80% of common customer service issues without human intervention by 2029. This will lead to a 30% reduction in operational costs. Organizations that wait do not avoid agentic AI. They inherit an environment shaped by ungoverned decisions made by teams that moved without them.

What is Agentic AI?

Agentic AI refers to autonomous AI systems that independently perceive environments, set goals, reason through complex multi-step problems, and execute actions across enterprise systems to deliver outcomes. It proactively monitors conditions, makes decisions, and coordinates workflows with minimal human oversight.

These systems combine large language models for reasoning with tool integrations, memory, and orchestration layers to handle dynamic enterprise challenges. A logistics agent, for example, might detect a shipping delay, reroute deliveries, update inventory across ERP systems, and notify customers—all within defined governance boundaries.

The term emphasizes agency: the capacity to act purposefully toward objectives rather than passively await instruction. Enterprises deploy agentic AI for end-to-end automation in operations, customer service, IT management, and revenue workflows where traditional RPA or copilots fall short.

What Makes Systems Agentic

Three properties define an agentic AI system and distinguish it from simpler AI applications:

- Autonomy: The system takes actions toward a goal without human approval of each step. The degree of autonomy is configurable and governed.

- Tool use: The system calls external tools, APIs, databases, or other systems to gather information or execute actions. Tool access is what makes agentic AI operationally useful rather than advisory.

- Memory: The system maintains context across steps, storing intermediate results, tracking progress, and using prior interactions to inform current decisions.

What Is Agentic AI Development?

Agentic AI development is the practice of building AI systems designed to pursue goals rather than answer prompts. A generative AI model waits for input and returns a response. An agentic AI system receives a goal, breaks it into tasks, executes those tasks using available tools, evaluates the results, and continues until the objective is met.

The distinction matters for enterprise architecture. Agentic systems require different infrastructure, different oversight mechanisms, and different governance frameworks than conversational AI or traditional automation.

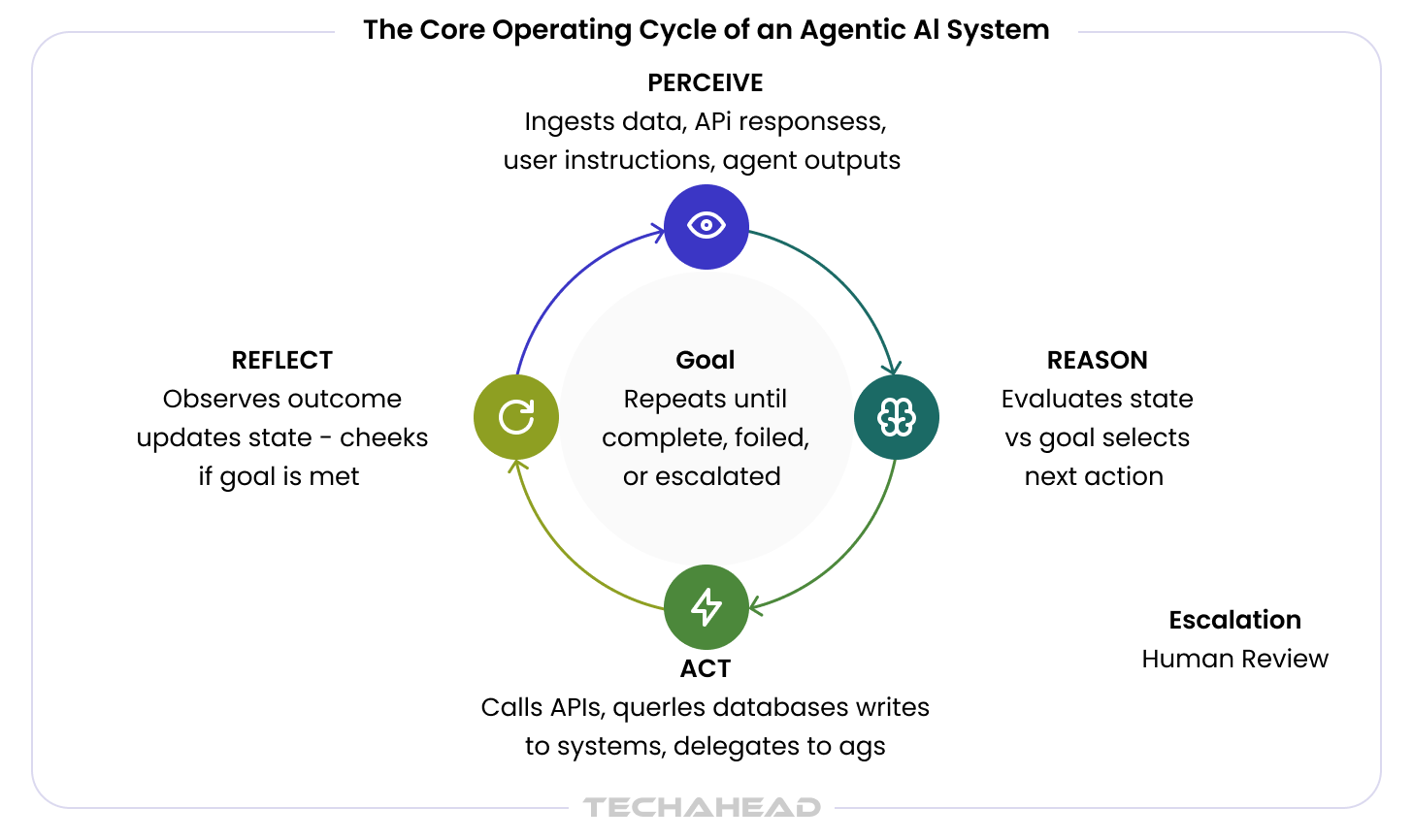

The Core Operating Cycle of an Agentic AI System

Every agentic AI system operates on a loop that repeats until a goal is achieved or an escalation condition is triggered:

- Perceive: The agent ingests input from its environment — data streams, API responses, user instructions, or the outputs of other agents.

- Reason: The agent evaluates the current state against its goal, considers available tools and actions, and selects a next step.

- Act: The agent executes the selected action, calling an API, querying a database, writing to a system, or delegating to another agent.

- Reflect: The agent observes the outcome of its action, updates its understanding of state, and determines whether the goal has been reached or a new action is required.

This loop is what separates agentic systems from generative models. A generative model produces a single response to a prompt and stops. An agentic system runs iteratively until completion, failure, or escalation.
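The perceive, reason, act, reflect cycle can be sketched in a few lines. The following is a minimal illustration only, assuming a toy environment in which the "goal" is a target counter value and the "tools" are two hypothetical functions (`add_five`, `add_one`). A real agent would replace the reasoning step with a model call and the tools with scoped API integrations.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class AgentState:
    goal: int                                   # hypothetical goal: reach this counter value
    value: int = 0                              # current state of the toy environment
    history: list = field(default_factory=list)


def run_agent(state: AgentState,
              tools: dict[str, Callable[[int], int]],
              max_steps: int = 10) -> str:
    """Loop until the goal is met or the step budget runs out (escalation)."""
    if state.value == state.goal:
        return "completed"
    for _ in range(max_steps):
        observed = state.value                                # Perceive: read the environment
        remaining = state.goal - observed                     # Reason: compare state with goal
        action = "add_five" if remaining >= 5 else "add_one"  # Reason: pick the next tool
        result = tools[action](observed)                      # Act: call the selected tool
        state.history.append((action, result))                # Reflect: record the outcome
        state.value = result                                  # Reflect: update state
        if state.value == state.goal:                         # Reflect: check the goal
            return "completed"
    return "escalated"                                        # budget exhausted: hand off to a human


TOOLS = {"add_five": lambda x: x + 5, "add_one": lambda x: x + 1}
state = AgentState(goal=12)
outcome = run_agent(state, TOOLS)
```

The step budget (`max_steps`) stands in for an escalation condition: when the loop cannot reach the goal within its limit, it returns control rather than running indefinitely.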

How Agentic AI Differs from Generative AI, Copilots, and Traditional Automation

Enterprise leaders often arrive at agentic AI evaluations with mental models shaped by prior AI investments. Those models require updating. Agentic AI is not an improved chatbot, not a smarter RPA tool, and not a more capable copilot. It is a categorically different architecture with different integration requirements and different governance demands.

| | Traditional Automation / RPA | Generative AI | Copilots / AI Assistants | Agentic AI |
| --- | --- | --- | --- | --- |
| Trigger | Rule or schedule | User prompt | User prompt | Goal or event |
| Action scope | Deterministic steps | Single response | Augments human action | Multi-step, autonomous |
| Human oversight | Defined by workflow | Per-output review | Continuous: human acts on output | Configurable gates and escalation |
| Handles ambiguity | No | In language only | Partially | Yes: reasons across uncertainty |
| Integration depth | Structured data, fixed systems | Minimal | Moderate | Deep: tools, APIs, databases, ERPs |
| Governance complexity | Low | Low to medium | Medium | High: requires agent-specific controls |
| Best fit | Repetitive, structured tasks | Content, summarization, Q&A | Productivity augmentation | Complex, goal-oriented workflows |

The practical implication: agentic AI does not replace the investments organizations have made in RPA, generative AI, or copilots. It extends the range of what can be automated to include tasks that require judgment, multi-system coordination, and adaptive planning. Knowing which tool fits which problem is the first decision any enterprise must make.

Autonomy Levels in Enterprise Deployments

Autonomy in agentic AI is not binary. Enterprise deployments typically operate across a spectrum:

- Level 1: The agent provides recommendations; a human takes every action.

- Level 2: The agent executes low-risk actions autonomously and escalates high-risk decisions.

- Level 3: The agent executes most actions autonomously within defined scope limits, with periodic human review.

- Level 4: The agent operates fully autonomously within a governed policy framework, with exception-based human involvement.

Most production enterprise deployments operate at Level 2 or 3. Full Level 4 autonomy is appropriate only in highly bounded, low-risk workflows with mature observability and rollback capability.
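One way to make the autonomy spectrum concrete is as a gate that every proposed action passes through. The sketch below is an assumption-laden illustration: the `risk` labels ("low", "high"), the `in_scope` flag, and the returned decision strings are hypothetical, and a real deployment would derive them from policy configuration.

```python
from enum import IntEnum


class Autonomy(IntEnum):
    RECOMMEND_ONLY = 1   # Level 1: a human takes every action
    LOW_RISK_AUTO = 2    # Level 2: low-risk actions run, high-risk ones escalate
    SCOPED_AUTO = 3      # Level 3: most actions run within scope limits
    POLICY_AUTO = 4      # Level 4: autonomous within policy, exceptions escalate


def decide(level: Autonomy, risk: str, in_scope: bool) -> str:
    """Return 'recommend', 'execute', or 'escalate' for a proposed action."""
    if level == Autonomy.RECOMMEND_ONLY:
        return "recommend"
    if not in_scope:
        return "escalate"                 # outside defined scope: always a human decision
    if level == Autonomy.LOW_RISK_AUTO:
        return "execute" if risk == "low" else "escalate"
    # Levels 3 and 4 both execute in-scope actions; they differ in review cadence
    # (periodic versus exception-based), which this sketch does not model.
    return "execute"
```

Keeping the gate in one function makes the autonomy level a configuration value that can be changed without rewriting agent logic.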

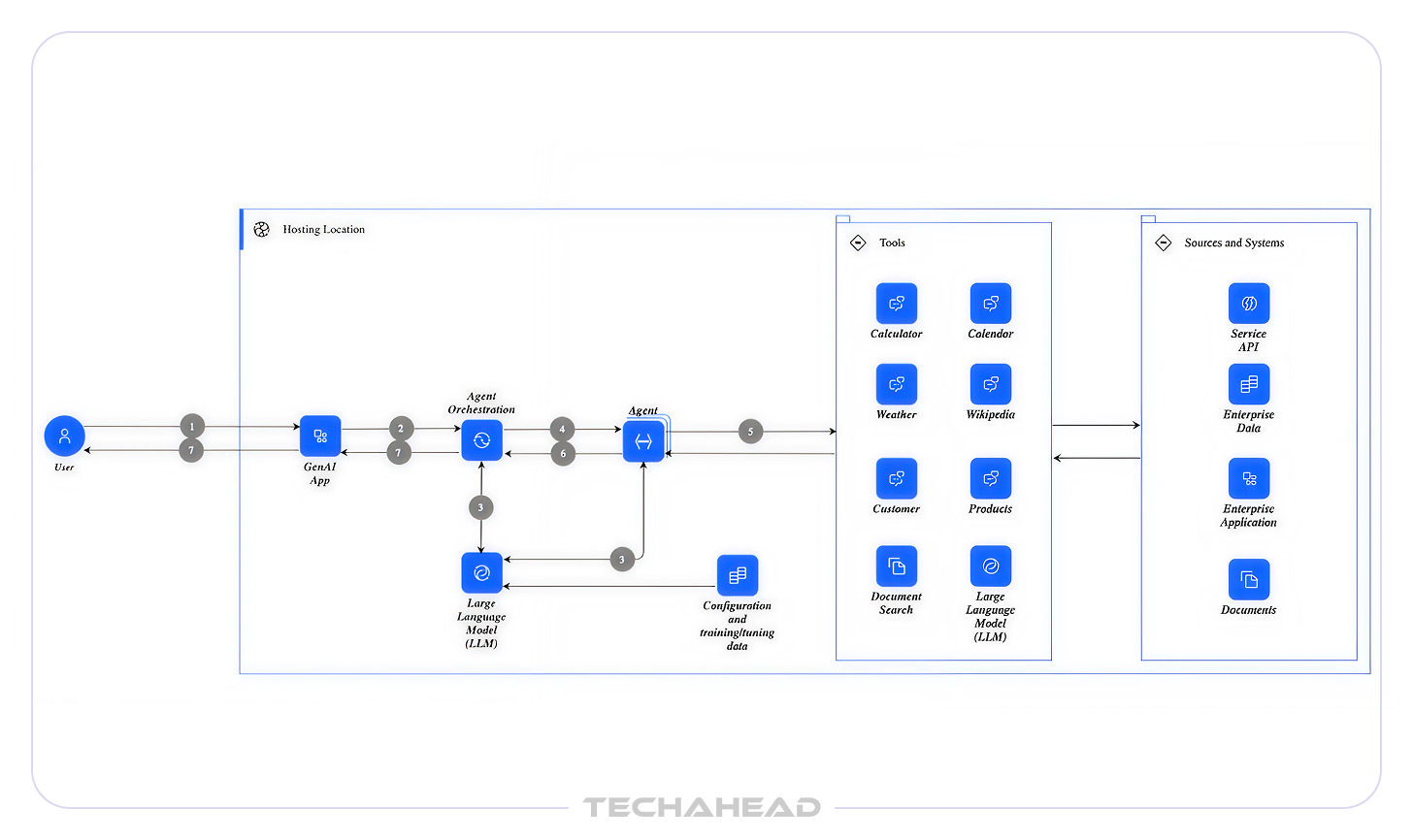

Agentic AI Architecture: Core Components

Agentic AI development requires building or integrating five architectural layers. Each layer has enterprise-specific requirements that differ from typical AI or software projects.

Layer 1: The Reasoning Core

The reasoning core is the large language model (LLM) that drives agent decision-making. Model selection has significant downstream consequences: capability profile, inference cost, latency, context window size, and fine-tuning compatibility all affect what workflows an agent can handle reliably. Enterprise deployments should avoid single-model dependency. A well-architected agentic system can route tasks to different models based on cost, capability, and compliance requirements.
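Routing by cost, capability, and compliance can be sketched as a filter followed by a cheapest-first selection. The model names, prices, capability scale, and region labels below are invented for illustration and do not describe any real provider.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelProfile:
    name: str
    cost_per_1k_tokens: float       # illustrative price, not a real quote
    capability: int                 # 1 (basic) to 3 (strongest), assumed scale
    compliant_regions: frozenset    # regions where this model may process data


MODELS = [
    ModelProfile("small-model", 0.001, 1, frozenset({"us", "eu"})),
    ModelProfile("large-model", 0.010, 3, frozenset({"us"})),
    ModelProfile("eu-hosted-model", 0.006, 2, frozenset({"eu"})),
]


def route(min_capability: int, region: str) -> ModelProfile:
    """Pick the cheapest model that is capable enough and allowed in the region."""
    candidates = [
        m for m in MODELS
        if m.capability >= min_capability and region in m.compliant_regions
    ]
    if not candidates:
        raise LookupError(f"no model satisfies capability>={min_capability} in {region}")
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)
```

Raising an error when no model qualifies, rather than silently falling back, keeps compliance constraints from being bypassed by the router.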

Layer 2: Tool and API Integration

Agents execute actions by calling tools: APIs, databases, code interpreters, internal systems, and external services. Tool design is where most of the engineering work in agentic AI development lives. Each tool must be scoped precisely: what the agent can call, what parameters it can pass, and what conditions trigger a fallback or escalation. Poorly scoped tools are the primary source of agent errors in production. The Model Context Protocol (MCP), introduced by Anthropic as an open standard, is emerging as a preferred way to structure tool access across enterprise environments.
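Precise tool scoping can be illustrated with a thin wrapper that validates every parameter before the underlying function runs. This is a plain-Python sketch, not MCP or any framework's API; the `issue_refund` tool, its parameters, and the 100-unit limit are hypothetical.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class ScopedTool:
    name: str
    func: Callable[..., Any]
    allowed_params: dict   # parameter name -> validator returning True if acceptable

    def call(self, **kwargs) -> dict:
        """Run the tool only if every parameter is known and passes validation."""
        unknown = sorted(set(kwargs) - set(self.allowed_params))
        if unknown:
            return {"status": "escalate", "reason": f"unknown parameters: {unknown}"}
        for key, value in kwargs.items():
            if not self.allowed_params[key](value):
                return {"status": "escalate", "reason": f"parameter out of scope: {key}"}
        return {"status": "ok", "result": self.func(**kwargs)}


def issue_refund(order_id: str, amount: float) -> str:
    # Stand-in for a real payment API call.
    return f"refunded {amount:.2f} for {order_id}"


refund_tool = ScopedTool(
    name="issue_refund",
    func=issue_refund,
    allowed_params={
        "order_id": lambda v: isinstance(v, str) and len(v) > 0,
        "amount": lambda v: isinstance(v, (int, float)) and 0 < v <= 100,  # assumed limit
    },
)
```

A call such as `refund_tool.call(order_id="A-17", amount=500)` returns an escalation result instead of executing, which is the fallback behavior the scoping rules are meant to guarantee.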

Layer 3: Memory and Context Management

Agents require three types of memory to function reliably across multi-step workflows:

- In-context memory: The active working state of the agent within a single session. Limited by model context window size.

- External long-term memory: Persistent storage, typically in a vector database, that the agent queries for historical context, prior decisions, or reference knowledge.

- Shared agent memory: State accessible across multiple agents in a multi-agent system, enabling coordination without redundant computation.

Memory architecture is often under-specified in early agent designs and becomes the source of reliability failures at scale.
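The three memory types can be sketched side by side to show how they interact. This is a simplified illustration under stated assumptions: the bounded `deque` stands in for a context window, the keyword-overlap search stands in for a vector database query, and the shared `dict` stands in for a state store used by multiple agents.

```python
from collections import deque


class AgentMemory:
    def __init__(self, context_limit: int, shared: dict):
        if context_limit < 1:
            raise ValueError("context_limit must be at least 1")
        self.context = deque(maxlen=context_limit)   # in-context memory (bounded)
        self.long_term: list[str] = []               # external long-term memory
        self.shared = shared                         # shared agent memory

    def remember(self, item: str) -> None:
        """Add to working context; persist whatever is about to be evicted."""
        if len(self.context) == self.context.maxlen:
            self.long_term.append(self.context[0])
        self.context.append(item)

    def recall(self, query: str) -> list[str]:
        """Naive keyword overlap, used here in place of vector similarity search."""
        words = set(query.lower().split())
        return [text for text in self.long_term if words & set(text.lower().split())]


shared_state: dict = {}
logistics = AgentMemory(context_limit=2, shared=shared_state)
support = AgentMemory(context_limit=2, shared=shared_state)

for event in ["order 17 delayed", "rerouted via hub B", "customer notified"]:
    logistics.remember(event)
logistics.shared["order-17"] = "rerouted"   # visible to the support agent as well
```

After the third event, the oldest item has left the working context but remains retrievable through `recall`, which is the behavior that under-specified designs tend to lose.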

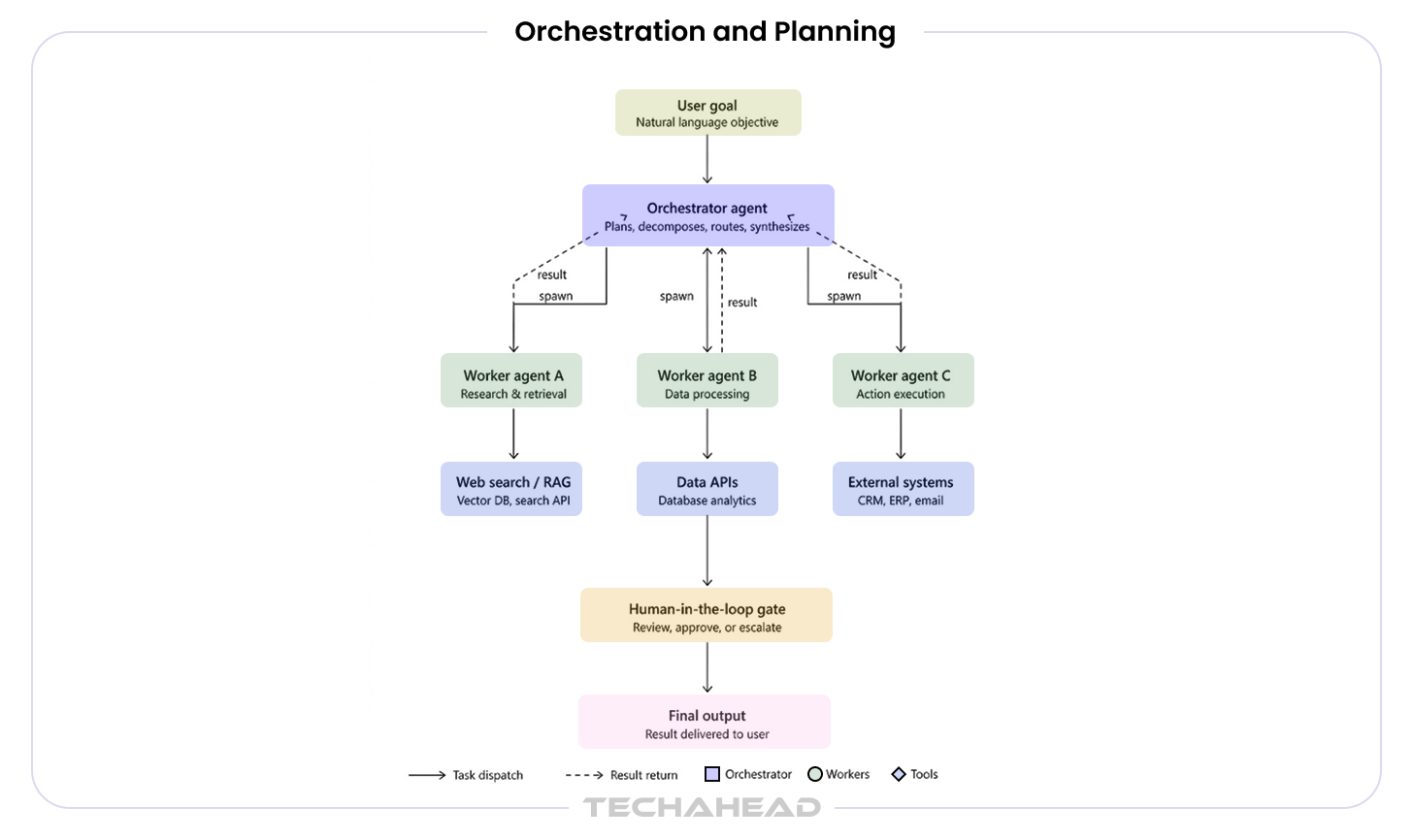

Layer 4: Orchestration and Planning

Orchestration manages how an agent decomposes a goal into sub-tasks, sequences or parallelizes execution, handles failures, and synthesizes results. In multi-agent architectures, an orchestrator agent coordinates worker agents, each specialized for a specific function. Leading orchestration frameworks include LangGraph, AutoGen, and CrewAI. Framework selection should be driven by workflow complexity, team familiarity, and long-term maintainability rather than benchmark performance.
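The orchestrator and worker pattern can be sketched without committing to a specific framework. The example below is plain Python and does not use the LangGraph, AutoGen, or CrewAI APIs; the worker names, the retry count, and the result format are assumptions made for illustration.

```python
from typing import Callable


def orchestrate(subtasks: list[tuple[str, str]],
                workers: dict[str, Callable[[str], str]],
                max_retries: int = 1) -> dict:
    """Dispatch each (worker, payload) pair in sequence, retrying on failure."""
    results: dict[str, str] = {}
    failures: dict[str, str] = {}
    for worker_name, payload in subtasks:
        key = f"{worker_name}:{payload}"
        for _ in range(max_retries + 1):
            try:
                results[key] = workers[worker_name](payload)
                break                         # success: stop retrying this subtask
            except Exception as exc:          # a worker failure must not stop the others
                last_error = str(exc)
        else:
            failures[key] = last_error        # every attempt failed
    return {"results": results, "failures": failures}


def inventory_worker(payload: str) -> str:
    return f"inventory updated for {payload}"


def notify_worker(payload: str) -> str:
    raise RuntimeError("email service unavailable")   # simulated outage


report = orchestrate(
    [("inventory", "order-17"), ("notify", "order-17")],
    {"inventory": inventory_worker, "notify": notify_worker},
)
```

Collecting failures separately from results lets the orchestrator synthesize a partial outcome and escalate only the subtasks that could not be completed.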

Layer 5: Observability and Human-in-the-Loop Interface

Production agentic systems require continuous runtime monitoring. Unlike traditional software, agent behavior can diverge in ways that are not detectable without trace-level logging of reasoning steps, tool calls, and decision points. Observability is not a post-deployment addition. It must be designed into the system from the start. Human-in-the-loop interfaces define the escalation and override mechanisms that keep agents operating within acceptable bounds. These are governance artifacts, not UX features.
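Trace-level logging can be added at the point where tools and decisions are defined, so that no call goes unrecorded. The decorator below is a minimal sketch that writes JSON records to an in-memory list; the record fields and the `lookup_order` tool are hypothetical, and a production system would send records to a dedicated tracing backend.

```python
import json
import time
from functools import wraps

TRACE: list[str] = []   # in-memory stand-in for a trace store


def traced(step_type: str):
    """Record inputs, output or error, and status for every call to the wrapped function."""
    def decorator(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            record = {"step": step_type, "name": func.__name__,
                      "args": args, "kwargs": kwargs, "ts": time.time()}
            try:
                record["output"] = func(*args, **kwargs)
                record["status"] = "ok"
                return record["output"]
            except Exception as exc:
                record["status"] = "error"
                record["error"] = repr(exc)
                raise
            finally:
                # default=str keeps logging from failing on non-JSON values
                TRACE.append(json.dumps(record, default=str))
        return wrapper
    return decorator


@traced("tool_call")
def lookup_order(order_id: str) -> dict:
    return {"id": order_id, "status": "delayed"}


lookup_order("A-17")
```

Because the record is appended in a `finally` block, failed calls are logged as well as successful ones, which is what makes post-incident debugging possible.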

What Enterprises Gain from Agentic AI Development

The case for agentic AI is no longer theoretical. Organizations that have moved it into production are reporting outcomes that compound across functions, from operations to finance to HR. Here is what enterprise leaders are consistently measuring.

1. Faster Execution Across Core Business Processes

Agentic AI systems operate continuously, without handoffs, delays, or the coordination overhead of human-driven workflows. Unlike automation tools that handle only structured, predictable tasks, agentic systems adapt to context, handle exceptions, and keep workflows moving without constant human intervention.

2. Sharper, Faster Decision-Making

Agents aggregate data from multiple systems, apply policy constraints, evaluate options, and surface recommendations or take action directly within approved limits in a fraction of the time a human team would require. For time-sensitive functions like fraud detection, credit decisioning, or supply chain response, this speed differential is a structural advantage.

3. Significant Operational Cost Reduction

Agentic AI is helping various industries lower operational costs. For instance, it saves costs in the supply chain by automating procurement monitoring, supplier evaluation, and reorder workflows. In HR, enterprises report savings through agentic management of candidate screening and onboarding coordination. These are not one-time savings; they scale with deployment scope.

4. Continuous Operations Without Human Handoffs

Agentic systems don’t pause at the end of a business day. They monitor data streams, respond to triggers, and execute workflows around the clock. In IT operations, this has translated to mean time to resolution improvements, with agents correlating alerts, identifying root causes, and executing remediation steps before incidents escalate. In healthcare, prior authorization cycle times have been compressed from days to hours.

5. Scalability Without Proportional Headcount Growth

Traditional scaling means hiring. Agentic AI decouples output capacity from headcount. Once a governed agent workflow is in production, it can be extended to handle greater volume, additional use cases, or new geographies without rebuilding from scratch.

6. Competitive Differentiation That Compounds

Enterprises adopting agentic AI at scale are building structural advantages that are difficult for late movers to replicate quickly. Early deployments build institutional knowledge, governance maturity, and integration depth that compound over time. The competitive gap between organizations that move now and those that wait is not linear; it compounds.

Most enterprises we work with at TechAhead are not ready for agentic AI at scale. Capturing full value is challenging because it requires rethinking systems, data, and governance to support scalable, safe agent deployment.

Organizations that deploy agents without a coherent architecture strategy end up with agent sprawl, i.e., multiple isolated deployments, inconsistent governance, and mounting technical debt. Therefore, we evaluate agentic AI initiatives against three criteria: integration fitness (can the agent connect reliably to existing systems?), governance readiness (are observability, audit, and override mechanisms in place?), and scalability (can the architecture support additional agents and workflows without redesign?).

High-Value Enterprise Use Cases for Agentic AI

The most successful enterprise agentic AI deployments share a common characteristic: they target workflows that are high-volume, multi-step, and require both structured data processing and contextual judgment. The following Agentic AI use cases represent the strongest first bets across major industry verticals.

Financial Services

- Fraud detection and investigation: Agents monitor transaction streams in real time, identify anomalous patterns, cross-reference account history and external watchlists, and autonomously escalate confirmed risks for human review. Banks using agentic AI for fraud detection have reduced review backlogs significantly while improving detection accuracy.

- Regulatory compliance automation: Compliance agents track regulatory changes across jurisdictions, assess impact on existing policies, draft internal guidance updates, and flag affected processes for review — a workflow previously requiring weeks of manual analyst time.

- Credit and underwriting decisioning: Agents aggregate applicant data across structured and unstructured sources, apply underwriting policies, generate decision rationale, and route edge cases to human review, reducing cycle time while maintaining policy compliance.

Healthcare and Life Sciences

- Prior authorization: Agents integrate payer rule sets with electronic health record data to prepare and submit prior authorization requests autonomously, reducing approval cycle time from days to hours and freeing clinical staff for patient care.

- Clinical trial coordination: Agents manage site communications, track protocol deviations, flag eligibility issues, and synthesize cross-site data, reducing the administrative burden on clinical operations teams.

Manufacturing and Supply Chain

According to Mordor Intelligence, the agentic AI supply chain and logistics market is expected to reach $16.84 billion by 2030, a CAGR of 14.20% over the forecast period (2025-2030).

- Procurement agents: Agents monitor supplier signals, track inventory thresholds, evaluate vendor performance data, and autonomously initiate reorder workflows within defined parameters. McKinsey reports up to 25 percent lower operational costs in supply chain deployments using agentic AI.

- Predictive maintenance orchestration: Agents correlate sensor data, maintenance history, and parts availability to schedule preventive interventions before failures occur, reducing unplanned downtime.

IT Operations and Software Development

- Incident response: Agents correlate monitoring alerts from multiple systems, identify root cause candidates, execute predefined remediation steps, and escalate unresolved issues with full diagnostic context. Mean time to resolution improvements of 40 percent have been documented in enterprise deployments.

- Automated code review and security scanning: Agents analyze pull requests against coding standards, identify security vulnerabilities, suggest fixes, and generate documentation — accelerating development cycles without reducing quality gates.

HR and Legal

- Onboarding automation: Agents coordinate across HRIS, payroll, IT provisioning, and learning platforms to execute onboarding workflows from offer acceptance to day-one readiness. HR teams report savings of approximately 20 hours per recruiter per month.

- Contract review and risk flagging: Legal agents analyze contract language against standard templates and organizational risk policies, flagging non-standard clauses and generating redline summaries for attorney review.

What Enterprises Need Before Starting Agentic AI Development

The majority of failed agentic AI initiatives fail not because of technology limitations, but because the organizational foundations required for agent deployment were not in place before development began. A realistic AI readiness assessment across five domains is the most valuable first investment any enterprise can make.

Agentic AI Readiness Checklist

| Domain | What to assess | Readiness signal |
| --- | --- | --- |
| Data readiness | Are data sources accessible via API? Is data quality sufficient for agent consumption? Is sensitive data governed and access-controlled? | APIs exist and are documented. Data has defined ownership. |
| Integration readiness | Which enterprise systems will agents need to access? Are those systems API-enabled? Are integration credentials and permissions manageable? | Target systems have accessible APIs. IT integration team is available. |
| Governance posture | Does the organization have an AI acceptable use policy? Are audit and logging requirements defined? Is there a process for reviewing agent actions? | Basic AI policy exists. Compliance team is engaged. |
| Team capability | Is there AI/ML engineering capacity? Are domain experts available to define workflow logic? Is there a dedicated project owner with authority to make decisions? | Core team is identified and allocated. |
| Leadership alignment | Is there executive sponsorship? Is there a defined success metric and a defined scope for the first deployment? Is there tolerance for a controlled pilot period before full rollout? | Executive sponsor is named. Success metric is agreed. |

Organizations that score poorly across more than two domains should address those gaps before beginning development. A well-scoped readiness assessment typically takes two to three weeks and prevents the far costlier delays caused by discovering structural gaps mid-project.
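The "more than two weak domains" rule can be expressed as a small scoring helper. The sketch assumes a 1 to 5 score per domain and treats anything below 3 as poor; both the scale and the threshold are illustrative choices, not part of the checklist itself.

```python
DOMAINS = ("data", "integration", "governance", "team", "leadership")


def readiness(scores: dict[str, int], poor_below: int = 3) -> dict:
    """Flag weak domains and apply the 'no more than two gaps' rule."""
    missing = [d for d in DOMAINS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    gaps = [d for d in DOMAINS if scores[d] < poor_below]
    return {"gaps": gaps, "proceed": len(gaps) <= 2}


assessment = readiness(
    {"data": 4, "integration": 2, "governance": 1, "team": 2, "leadership": 5}
)
```

In this example three domains fall below the threshold, so the result advises closing those gaps before development begins.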

Agentic AI Technology Stack Including Frameworks and Models

With technology shifting at a fast pace, enterprises should keep an eye on the latest frameworks and models for agentic AI development. The table below introduces technologies you might come across while building an agentic AI solution.

| Category | Technologies & Frameworks |
| --- | --- |
| Agent Frameworks | LangGraph, AutoGen (Microsoft), CrewAI, LangChain, LlamaIndex, Semantic Kernel |
| Cloud Agent Services | AWS Bedrock Agents, AWS Bedrock Guardrails, Azure AI Agent Service, Google Vertex AI Agents |
| LLMs & Foundation Models | GPT / OpenAI, Claude 3 (Anthropic), Gemini 1.5, LLaMA 3 (Meta), Mistral |
| Vector Databases (RAG) | Pinecone, Weaviate, Qdrant, pgvector (PostgreSQL), Chroma, Azure AI Search |
| Orchestration & Workflow | Apache Airflow, Prefect, Temporal, AWS Step Functions, Kubernetes |
| Monitoring & Observability | LangSmith, Arize AI, WhyLabs, Prometheus + Grafana, AWS CloudWatch |
| Core ML / Data | PyTorch, TensorFlow, Scikit-learn, Hugging Face Transformers, Python |
| Infrastructure & Cloud | AWS, Azure, GCP, Docker, Kubernetes, Terraform |

Agentic AI Implementation Roadmap: From Pilot to Production

Whether you want automated customer service or intelligent business process automation for HR and finance, you need a strategic approach to building AI agents. Our blog “Building AI Agents and Copilots for Enterprise Workflows” explores this in detail.

Below is the Agentic AI development process to build a robust, fully-automated AI system.

Phase 1: Discovery and Use Case Selection (Weeks 1–4)

The first phase is not about building anything. It is about selecting the right thing to build. The highest-risk decision in agentic AI development is the choice of first use case. Prioritize workflows that are bounded in scope, have a clear and measurable success metric, involve data that is available and accessible, and carry relatively low compliance complexity.

Avoid launching with enterprise-wide transformation ambitions. Gartner’s prediction that more than 40 percent of agentic AI projects will be cancelled by the end of 2027 points directly to programs that set the scope too broad and the timeline too aggressive for a first deployment.

Phase 2: Architecture Design and Proof of Concept (Weeks 5–10)

Once a use case is selected, the architecture work begins. This phase covers framework selection, agent topology design, tool integration mapping, memory schema definition, and observability baseline setup. A proof of concept should demonstrate that the agent can complete the target workflow end-to-end on representative data, not that it can perform well on idealized test cases.

Phase 3: Controlled Pilot with Human-in-the-Loop (Weeks 11–18)

The pilot runs the agent in a production-like environment with real data and real stakes, but with a limited user population and active human oversight. This phase surfaces the edge cases, integration failures, and reasoning gaps that never appear in controlled testing. Escalation protocols should be tested deliberately, not just documented.

Phase 4: Governance Hardening and Production Readiness (Weeks 19–24)

Before general availability, the system must pass a governance review. This covers access controls, audit logging, anomaly detection, escalation flows, and compliance documentation. Governance should be a delivery artifact with defined acceptance criteria, not a checklist review at the end of a sprint.

Phase 5: Scale and Continuous Improvement (Ongoing)

Production deployment opens the path to additional workflows, but scale should follow evidence of reliability, not confidence in the technology. A formal agent registry, versioning policy, performance monitoring protocol, and retraining trigger framework should be in place before extending to new domains.

Common Implementation Failure Modes for Agentic AI

- Scope creep in Phase 1: The use case expands before the first agent is stable. Prevention: define explicit scope boundaries in writing and hold them through Phase 3.

- Data quality discovered late: Agents expose data quality issues that were invisible to traditional systems. Prevention: conduct a data audit in Phase 1, before architecture design begins.

- Observability treated as optional: Agents deployed without trace-level logging cannot be debugged when they fail. Prevention: observability is a Phase 2 deliverable, not a Phase 5 addition.

- Under-resourced governance: Governance roles are assigned to people who already have full-time jobs. Prevention: governance ownership must be explicit and resourced in the project plan.

- No escalation protocol tested under pressure: Escalation paths exist on paper but have never been exercised. Prevention: red-team escalation scenarios in Phase 3.

Agentic AI Development Cost and Timeline: What to Expect

Cost transparency is one of the most significant gaps in enterprise agentic AI content. Most vendor proposals quote development fees without addressing the full cost profile of a production deployment. The following ranges reflect realistic market rates as of 2025–2026.

| Scope | Typical Duration | Indicative Range | Key cost drivers |
| --- | --- | --- | --- |
| Single-workflow pilot | 8–14 weeks | $75K–$200K | Integration engineering, prompt design, observability setup, governance documentation |
| Domain-level production deployment | 5–7 months | $250K–$700K | Multi-agent orchestration, enterprise SSO, compliance review, user training, monitoring infrastructure |
| Enterprise-wide multi-agent platform | 12–24 months | $1M+ | Platform architecture, cross-system integration, agent governance framework, change management, ongoing model operations |

The cost of agentic AI development varies based on scope, integration complexity, and governance requirements, but enterprise benchmarks are beginning to stabilize. A single-workflow pilot typically ranges from $75,000 to $200,000 and can be delivered within 8 to 14 weeks. Expanding to a domain-level deployment with multiple agents and deeper integrations increases costs to $250,000 to $700,000 over 5 to 7 months. For enterprise-wide, multi-agent platforms that require advanced orchestration, compliance controls, and cross-system integration, investments can exceed $1 million. Beyond development, organizations must also account for ongoing costs such as model inference, observability tooling, and human oversight, which significantly impact total cost of ownership.

Total Cost of Ownership: What Most RFPs Miss

Development fees represent a fraction of the true cost of an agentic AI deployment. The following items are frequently absent from vendor proposals:

- Model inference costs: Agents make many model calls per workflow completion. Inference costs scale with agent usage and tool call frequency. A high-volume workflow can generate inference costs that exceed development costs within the first year.

- Vector database hosting: Long-term agent memory requires persistent vector storage. Hosting costs scale with the number of agents and memory scope.

- Observability tooling: Trace-level monitoring for agentic systems requires dedicated tooling. This is not covered by standard application monitoring platforms.

- Human review capacity: Human-in-the-loop gates require staffing. The cost of the review function is often excluded from AI project budgets.

- Retraining and model updates: As workflows evolve and model providers release updates, agents require regular review and adjustment. This is an ongoing operational cost, not a one-time expense.
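The way inference costs scale with usage is easiest to see as arithmetic. The function below is a rough estimate only, and every input in the example (workflow volume, calls per workflow, tokens per call, price per 1,000 tokens) is a hypothetical figure chosen to illustrate the calculation rather than a benchmark.

```python
def annual_inference_cost(workflows_per_month: int,
                          calls_per_workflow: int,
                          tokens_per_call: int,
                          price_per_1k_tokens: float) -> float:
    """Estimate yearly model spend: workflows x calls x tokens x unit price."""
    yearly_tokens = workflows_per_month * 12 * calls_per_workflow * tokens_per_call
    return yearly_tokens / 1000 * price_per_1k_tokens


# Hypothetical high-volume workflow: 50,000 runs per month, 20 model calls per run,
# 3,000 tokens per call, at an assumed $0.01 per 1,000 tokens.
estimate = annual_inference_cost(50_000, 20, 3_000, 0.01)   # about $360,000 per year
```

Under these assumed inputs, yearly inference spend is higher than the top of the single-workflow pilot range quoted above, which is why inference belongs in the budget from the start.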

Do you want to know the total cost of building an AI-driven solution? Read our blog on software development cost to understand where every dollar of your software budget goes.

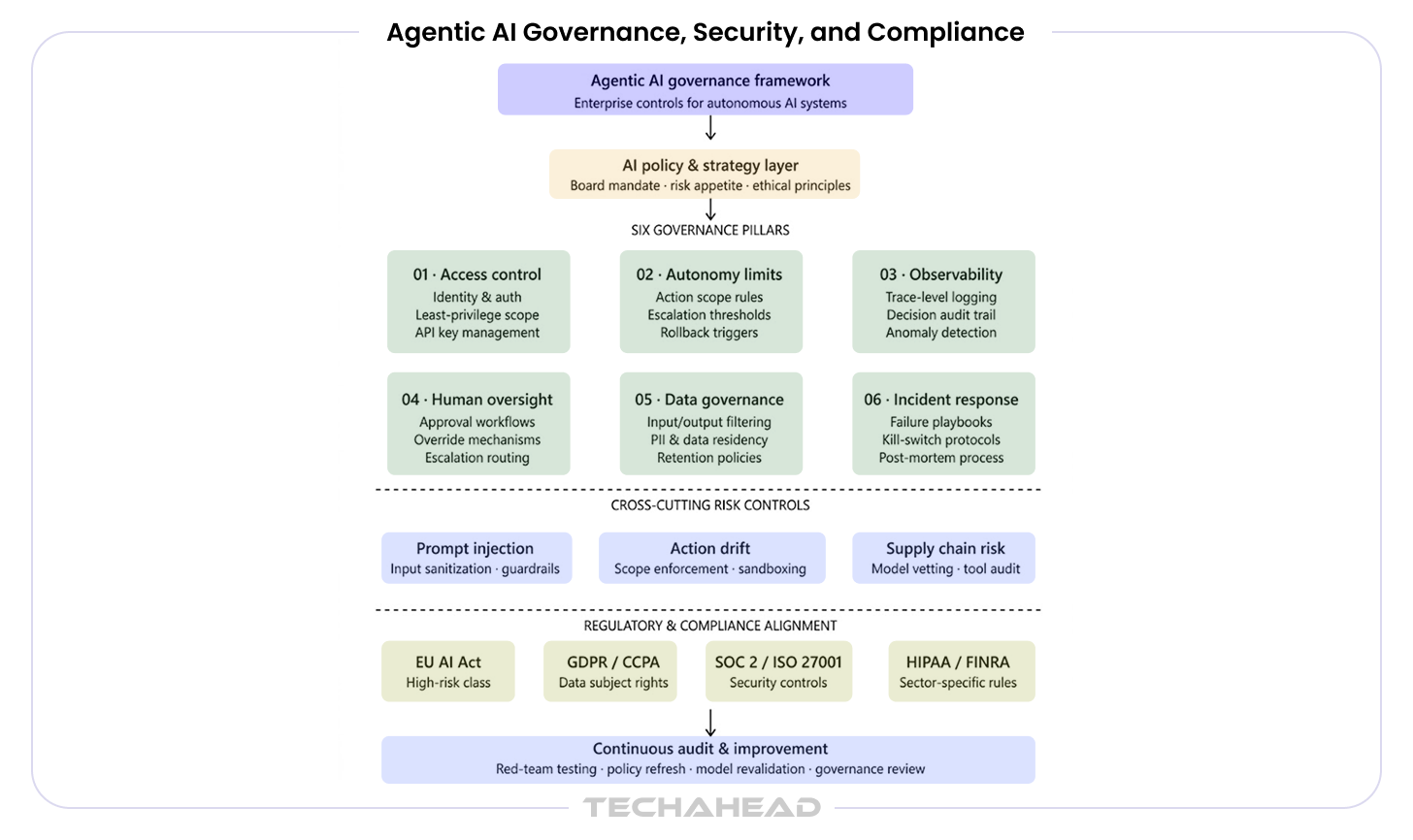

Agentic AI Governance, Security, and Compliance

As per a State of AI Development report, 94% of enterprises are concerned that AI sprawl is increasing complexity, technical debt, and security risk. Only 12 percent have implemented a centralized approach to agentic AI governance. This gap is the most consequential risk in enterprise agentic AI deployment today.

Guardrails must be built in from the start, not bolted on later. Governance retrofitted onto a live agentic system is expensive, incomplete, and disruptive.

The Six Pillars of Agentic AI Governance

- Agent identity and access management: Each agent should have a distinct identity with scoped credentials. Agents should not share service account credentials, and their access should follow the principle of least privilege — no more access than the specific workflow requires.

- Action scope controls: Define explicitly what each agent is permitted to do, what systems it can write to, and what actions require human approval before execution. This is a configuration decision with security implications, not a UX preference.

- Audit trails and traceability: Every agent action — tool calls, decisions, escalations, outputs — must be logged in a format that supports post-incident review. Regulators and internal audit functions require this. Build it before the first production deployment.

- Data residency and privacy controls: Agents that process personal data must comply with GDPR, CCPA, HIPAA, or other applicable frameworks. Data handling policies must be implemented at the tool layer, not assumed at the model layer.

- Model version control: Model updates can change agent behavior. Version-pinning, staged rollout procedures, and rollback protocols are operational requirements for production agentic systems.

- Human escalation protocols: Define the conditions under which an agent must pause and request human review. These conditions must be tested, not just documented, before production deployment.
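Several of these pillars (distinct agent identity, least privilege, approval gates, and audit trails) can be combined in a single authorization check. The sketch below is illustrative: the agent ID, action names, and audit record fields are hypothetical, and the in-memory list stands in for a durable, tamper-resistant log.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str                               # distinct identity, never a shared account
    allowed_actions: frozenset                  # least privilege: only what the workflow needs
    approval_required: frozenset = frozenset()  # actions gated on human sign-off


AUDIT_LOG: list[dict] = []


def authorize(agent: AgentIdentity, action: str, target: str) -> str:
    """Decide whether an action may run and record the decision either way."""
    if action not in agent.allowed_actions:
        decision = "denied"
    elif action in agent.approval_required:
        decision = "needs_approval"
    else:
        decision = "allowed"
    AUDIT_LOG.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "agent_id": agent.agent_id,
        "action": action,
        "target": target,
        "decision": decision,
    })
    return decision


procurement_agent = AgentIdentity(
    agent_id="procurement-agent-01",
    allowed_actions=frozenset({"read_inventory", "create_purchase_order"}),
    approval_required=frozenset({"create_purchase_order"}),
)
```

Denied and gated requests are logged alongside allowed ones, so the audit trail shows what the agent attempted as well as what it did.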

Security Risks Specific to Agentic AI

Agentic systems introduce security risks that do not exist in traditional applications or single-turn AI deployments:

- Prompt injection: Malicious content in data sources can instruct an agent to take actions outside its intended scope. This is an agent-specific attack vector with no direct equivalent in traditional software security.

- Credential exposure in multi-agent chains: When orchestrator agents pass instructions to worker agents, credentials and session tokens can be exposed across the chain. Each agent-to-agent handoff is a potential credential leakage point.

- Lateral movement via orchestration: A compromised agent can, under some architectures, direct other agents to perform unauthorized actions. Agent isolation and communication controls are security-critical design decisions.

- Tool misuse: Agents with broad tool access can invoke tools in sequences or contexts their developers did not anticipate. Narrow tool scoping and action logging are the primary mitigations.

Regulatory Considerations

Enterprise agentic AI deployments must be assessed against applicable regulatory frameworks. In the United States, relevant frameworks include HIPAA for healthcare data, GLBA for financial services, and CCPA for consumer data. SOC 2, although an attestation standard rather than a regulation, is commonly expected by enterprise customers and auditors. In cross-border deployments, the EU AI Act’s classification of high-risk AI systems is directly relevant to agentic applications in healthcare, financial services, employment, and critical infrastructure.

Compliance documentation for an agentic AI system must include the agent’s decision scope, tool access profile, data handling procedures, escalation protocols, and audit log architecture. This documentation should be produced as a delivery artifact, not reconstructed after deployment.

ROI and Business Value: How to Measure Agentic AI Performance

Measuring the return on an agentic AI investment requires moving beyond technology metrics to business outcome metrics. The following framework provides a consistent structure for evaluating ROI across different deployment contexts.

Core Business Outcome Metrics

- Cost per transaction: Compare the fully loaded cost of completing a target workflow before and after agent deployment, including human review time, error correction, and infrastructure.

- Decision latency: Measure the time from workflow trigger to completed output. Reduction in decision latency is often the most immediately visible outcome of agentic AI deployment.

- Error rate and rework cost: Agents operating on well-scoped workflows typically reduce error rates in structured data processing. Quantify rework cost avoided.

- FTE hours reclaimed: Measure the hours previously spent on the automated workflow by human staff. Reclaimed time should be tracked against redeployment to higher-value activities, not simply headcount reduction.

- Revenue impact: For customer-facing agents, measure impact on conversion, resolution time, and customer satisfaction scores.
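
As a worked example of the first four metrics, the sketch below compares one workflow before and after agent deployment. All figures are invented for illustration, and `WorkflowStats` and `compare` are hypothetical names; revenue impact is omitted because it depends on how each organization attributes conversion and satisfaction changes.

```python
from dataclasses import dataclass


@dataclass
class WorkflowStats:
    """Illustrative monthly figures for one workflow (all values hypothetical)."""
    transactions: int
    total_cost: float         # fully loaded: labor, rework, infrastructure
    avg_latency_hours: float  # workflow trigger to completed output
    errors: int
    staff_hours: float


def compare(before, after):
    """Return before/after values for the outcome metrics listed above."""
    return {
        "cost_per_transaction_before": round(before.total_cost / before.transactions, 2),
        "cost_per_transaction_after": round(after.total_cost / after.transactions, 2),
        "latency_reduction_pct": round(
            100 * (1 - after.avg_latency_hours / before.avg_latency_hours), 1
        ),
        "error_rate_before_pct": round(100 * before.errors / before.transactions, 2),
        "error_rate_after_pct": round(100 * after.errors / after.transactions, 2),
        "staff_hours_reclaimed": before.staff_hours - after.staff_hours,
    }


before = WorkflowStats(2000, 50000.0, 20.0, 100, 800.0)
after = WorkflowStats(2000, 30000.0, 5.0, 40, 300.0)

for metric, value in compare(before, after).items():
    print(f"{metric}: {value}")
```

With these sample inputs, cost per transaction falls from 25.00 to 15.00, decision latency drops by 75%, the error rate moves from 5% to 2%, and 500 staff hours are reclaimed per month.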

Realistic Timeline to ROI Gains from Agentic AI

Organizations that scope their first pilot tightly and select high-volume workflows typically see measurable ROI signals from agentic AI within 8 to 14 weeks of production deployment. Domain-level ROI, meaning meaningful cost reduction or revenue impact attributable to the agent, typically requires 6 to 12 months. Enterprise-wide ROI from a multi-agent platform requires 12 to 24 months and depends heavily on how consistently the governance and scaling framework holds across additional deployments.

Delayed ROI is almost always caused by poor scoping in Phase 1 or insufficient governance in Phase 4, not by technology limitations.

When Enterprises Should Not Use Agentic AI

Deploying an agentic system in the wrong context generates costs, governance burden, and reputational risk without commensurate business value.

- Highly deterministic, structured workflows: If a workflow can be fully specified as a decision tree with no ambiguity, RPA or traditional automation delivers the same outcome at a fraction of the cost and governance burden of an agentic system.

- Low-volume workflows: The infrastructure and governance overhead of an agentic AI system is difficult to justify for workflows that process fewer than a few hundred instances per month. The economics do not work at low volume.

- Insufficient data quality: Agents that operate on poor-quality data produce poor-quality outputs and are difficult to debug. If the target data sources cannot pass a basic quality audit, the data infrastructure problem must be solved first.

- Insufficient governance maturity: Organizations without a defined AI governance policy, an audit logging capability, and an escalation process in place should not deploy production agentic systems. Deploying first and governing later creates compounding risk.

- High-stakes irreversible decisions without human review: Agentic AI should not be deployed to autonomously execute irreversible high-stakes decisions — financial transactions above defined thresholds, patient treatment decisions, or legal commitments — without a human review gate in the workflow.
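
The five exclusion criteria above can be applied as a simple screening step before a workflow is shortlisted. The sketch below is one possible encoding: `WorkflowProfile` and `blockers` are hypothetical names, and the threshold of 300 is an assumed stand-in for "a few hundred instances per month" that each organization would set for itself.

```python
from dataclasses import dataclass


@dataclass
class WorkflowProfile:
    """Hypothetical screening inputs for a candidate workflow."""
    fully_rule_based: bool
    monthly_volume: int
    data_passes_quality_audit: bool
    has_governance_policy: bool
    has_audit_logging: bool
    has_escalation_process: bool
    irreversible_high_stakes: bool
    has_human_review_gate: bool


MIN_MONTHLY_VOLUME = 300  # assumption, not a figure from the text


def blockers(p):
    """Return the reasons, if any, that argue against an agentic deployment."""
    reasons = []
    if p.fully_rule_based:
        reasons.append("deterministic workflow: prefer RPA or traditional automation")
    if p.monthly_volume < MIN_MONTHLY_VOLUME:
        reasons.append("volume too low to justify infrastructure and governance overhead")
    if not p.data_passes_quality_audit:
        reasons.append("data quality must be fixed first")
    if not (p.has_governance_policy and p.has_audit_logging and p.has_escalation_process):
        reasons.append("governance maturity is insufficient")
    if p.irreversible_high_stakes and not p.has_human_review_gate:
        reasons.append("irreversible high-stakes decisions need a human review gate")
    return reasons


candidate = WorkflowProfile(
    fully_rule_based=False,
    monthly_volume=120,
    data_passes_quality_audit=True,
    has_governance_policy=True,
    has_audit_logging=True,
    has_escalation_process=True,
    irreversible_high_stakes=True,
    has_human_review_gate=False,
)
for reason in blockers(candidate):
    print("-", reason)
```

An empty list means none of the exclusion criteria apply; it does not by itself establish that the workflow is a good candidate.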

Build, Buy, or Partner: Making the Right Decision for Agentic AI Development

The build-versus-buy decision in agentic AI is less binary than it appears. The realistic options are: build entirely in-house, configure commercial platforms with internal customization, or partner with a specialist development firm for design, build, and integration.

- Build in-house: Appropriate when the workflow involves proprietary data or processes that cannot be exposed to third parties, when the organization has a mature AI engineering team, and when long-term internal ownership and continuous improvement are strategic priorities. The primary risk is timeline: in-house builds without prior agentic AI experience consistently take longer than anticipated.

- Configure commercial platforms: Appropriate for standard enterprise workflows where a platform vendor’s pre-built agent templates are a close fit. Faster time-to-value for straightforward use cases, but limited flexibility for complex multi-system workflows and often constrained on governance customization.

- Partner with a specialist: Appropriate for first deployments where internal agentic AI experience is limited, for complex integration requirements, for compliance-sensitive industries, or when speed-to-production is a strategic priority. A well-chosen partner reduces the risk of the failure modes described in the implementation section and accelerates governance maturity.

How to Choose the Right Agentic AI Development Company

The vendor evaluation criteria for an agentic AI development company differ meaningfully from those applied to general software development or generative AI consulting. The following framework is designed for enterprise RFP processes and executive review.

| Evaluation criterion | What to look for | Red flags |
| --- | --- | --- |
| Enterprise delivery track record | Production deployments in enterprise environments, not just pilots or demos. References from organizations of comparable size and complexity. | Portfolio of chatbot or copilot projects presented as agentic AI experience. |
| Architecture depth | Demonstrated capability with multi-agent systems, not just single-agent chains. Understanding of orchestration frameworks, memory design, and tool scoping. | Proposals that default to a single LLM API call dressed as an agent. |
| Security and compliance methodology | A defined approach to agent identity management, access scoping, audit logging, and compliance documentation. This should be part of the standard delivery methodology, not an add-on. | Security described in general terms without agent-specific controls. |
| Observability capability | A defined approach to runtime tracing, anomaly detection, and performance monitoring for agentic systems. Ask specifically what observability tooling is included in the delivery. | No mention of observability in the proposal. Monitoring described as a post-launch activity. |
| Post-deployment support model | Defined SLAs for production support, model update management, and performance review. Agentic systems require ongoing operational support in a way that static software does not. | Support described as ‘time and materials’ with no defined response protocol. |
| IP ownership and data handling | Clear contractual terms on IP ownership of custom agents, prompt libraries, and integration code. Explicit data handling commitments for any data processed during development. | Ambiguous IP terms. Development workflows that require sharing production data with third-party model providers without explicit consent. |
| Model-agnostic capability | Architecture that is not tied to a single model vendor. Ability to route workloads across models based on cost, capability, and compliance requirements. | Proposals built entirely around a single model provider with no stated path to model portability. |

Questions to Ask Any Agentic AI Development Vendor

- Can you walk us through a production agentic AI deployment you have delivered for an enterprise of comparable size and industry? What were the specific integration points and governance mechanisms?

- What observability tooling does your delivery include, and what trace-level visibility will we have into agent reasoning and tool calls in production?

- How do you scope and enforce tool access for agents? What is your approach to the principle of least privilege?

- What is your approach to prompt injection and tool misuse? Can you describe specific mitigations you have implemented in prior deployments?

- What does your governance documentation package include, and at what point in the delivery is it produced?

- How do you handle model updates and version changes after production deployment? What is the process for testing and rolling out model changes?

- What does your post-deployment support model look like for a production agentic system?

- Who owns the IP on the custom agents, prompt libraries, and integration code delivered under this engagement?

Why Choose TechAhead for Enterprise Agentic AI Development

TechAhead brings enterprise-grade experience across the full agentic AI development lifecycle, from use case discovery and architecture design through production deployment, governance implementation, and ongoing support.

As a leading agentic AI development company, we have built our delivery methodology specifically for enterprise contexts: phased governance reviews at each project milestone, observability designed into the architecture from Phase 2, model-agnostic frameworks that avoid single-vendor lock-in, and compliance documentation produced as a standard delivery artifact across HIPAA, SOC 2, GDPR, and relevant industry frameworks.

We work with enterprise organizations across financial services, healthcare, manufacturing, and technology to scope, build, and scale agentic AI systems that are production-ready and governable from day one.

For example, TechAhead integrated AI-driven automations across the enterprise talent acquisition platform of our client ERIN, which was later recognized by Lighthouse Research & Advisory and honored at UNLEASH America 2025 for transforming enterprise hiring through innovation and automation.

We integrated features like referral scoring models, predictive matchmaking, and bot-based alerts. With these innovative functions, we turned the platform into a smart, agentic AI-driven referral engine that proactively assists employees and HR teams with personalized, automated hiring workflows.

Along with reduced manual effort, the platform delivered measurable outcomes, including a 13% referral-to-hire conversion rate, 45% of referrals originating from mobile devices, and more than 2.2 million employee referrals submitted.

Conclusion: Building Agentic AI That Lasts

Agentic AI development has moved from a research frontier to an enterprise implementation priority. The organizations getting results are not necessarily those with the most advanced AI capabilities. They are the organizations that scoped their first deployments precisely, built governance into their architecture from the start, and chose implementation partners with the depth to deliver production-ready systems rather than impressive prototypes.

The competitive window is open. The difference between organizations that capture durable value from agentic AI and those that accumulate expensive, fragmented pilots will be determined largely by the quality of the architecture, governance, and implementation decisions made in the next 12 to 18 months.

TechAhead partners with enterprise organizations to make those decisions well. If your organization is evaluating an agentic AI initiative, we would welcome the conversation.

Frequently Asked Questions

Agentic AI development is the process of designing, building, and deploying AI systems that reason, plan, use external tools, and execute multi-step tasks autonomously toward a defined goal. Unlike generative AI, which responds to prompts, agentic AI pursues objectives — taking actions, observing results, and adjusting until the goal is achieved.

Agentic AI operates on a continuous loop: it perceives inputs from its environment, reasons about the best next action toward a goal, executes that action using available tools or APIs, observes the outcome, and repeats. This iterative cycle continues until the objective is met or a human escalation condition is triggered.
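
The loop described above can be sketched in a few lines. This is a simplified illustration under stated assumptions: `run_agent` and its callback parameters are hypothetical, `choose_action` stands in for the model's reasoning step, and the step cap is an added safeguard that the description does not mention.

```python
def run_agent(goal, choose_action, tools, is_done, needs_human, max_steps=10):
    """Run a perceive-reason-act-observe loop until done, escalated, or capped."""
    observations = []
    steps = 0
    while True:
        if is_done(goal, observations):
            return {"status": "done", "steps": steps, "observations": observations}
        if needs_human(observations):
            return {"status": "escalated", "steps": steps, "observations": observations}
        if steps >= max_steps:
            return {"status": "step_limit", "steps": steps, "observations": observations}
        tool_name, args = choose_action(goal, observations)  # reason
        result = tools[tool_name](**args)                    # act
        observations.append((tool_name, result))             # observe
        steps += 1


# Toy goal: collect the status of three orders.
orders = ["A-1", "A-2", "A-3"]


def choose_action(goal, observations):
    # Stand-in for an LLM planning call: look up the next unchecked order.
    return "lookup_order", {"order_id": goal[len(observations)]}


def is_done(goal, observations):
    return len(observations) == len(goal)


def needs_human(observations):
    # Escalate if any lookup reported an unknown status.
    return any(result == "unknown" for _, result in observations)


tools = {"lookup_order": lambda order_id: "shipped"}
outcome = run_agent(orders, choose_action, tools, is_done, needs_human)
print(outcome["status"], outcome["steps"])
```

Checking the completion and escalation conditions before each action, not after, means the agent never takes a further step once either condition holds.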

Generative AI receives a prompt and returns a single response. Agentic AI receives a goal and executes a sequence of actions autonomously — calling tools, querying databases, coordinating with other agents, and adapting based on results. The key differences are autonomy, tool use, and multi-step execution.

A well-scoped single-workflow pilot typically takes 8 to 14 weeks from kickoff to production deployment. A domain-level production deployment covering multiple workflows requires 5 to 7 months. An enterprise-wide multi-agent platform requires 12 to 24 months, depending on integration complexity and governance requirements.

A single-workflow pilot typically ranges from $75,000 to $200,000. A domain-level production deployment ranges from $250,000 to $700,000. Enterprise-wide multi-agent platforms begin at $1 million and scale with complexity. Total cost of ownership includes model inference, observability tooling, human review capacity, and ongoing model operations — costs that are frequently absent from initial vendor proposals.

Five prerequisites are critical: accessible, quality-controlled data with defined ownership; API-enabled enterprise systems at integration points; a defined AI governance policy with audit requirements; an allocated team including AI engineering, domain expertise, and a governance owner; and executive sponsorship with an agreed success metric for the first deployment.

Enterprise governance of agentic AI requires six controls: agent identity and access management with least-privilege scoping; action scope limits with defined human approval gates; complete audit trails of every agent action; data residency and privacy controls at the tool layer; model version control with staged rollout protocols; and tested human escalation procedures. Security controls must address prompt injection, credential exposure in multi-agent chains, and tool misuse.

Multi-agent orchestration is the coordination of multiple AI agents toward a shared goal, where an orchestrator agent assigns sub-tasks to specialized worker agents, manages execution state, handles failures, and synthesizes results. It is used when a workflow exceeds the scope, speed, or specialization that a single agent can handle reliably.
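
A stripped-down version of that coordination pattern might look like the following. The sketch assumes a sequential plan and plain Python callables as workers; `orchestrate`, the worker names, and the retry count are all hypothetical, and production frameworks add concurrency, persistence, and richer failure handling.

```python
def orchestrate(task_plan, workers, max_retries=1):
    """Assign sub-tasks to workers, track state, retry failures, merge results."""
    state = {}
    for subtask, worker_name, payload in task_plan:
        attempts = 0
        while True:
            attempts += 1
            try:
                result = workers[worker_name](payload)
                state[subtask] = {"status": "ok", "result": result, "attempts": attempts}
                break
            except Exception as err:
                if attempts > max_retries:
                    state[subtask] = {"status": "failed", "error": str(err), "attempts": attempts}
                    break
    # Synthesize: collect successful results and list what failed.
    results = {name: s["result"] for name, s in state.items() if s["status"] == "ok"}
    failed = [name for name, s in state.items() if s["status"] == "failed"]
    return {"results": results, "failed": failed, "state": state}


calls = {"n": 0}


def flaky_validator(payload):
    # Fails on the first call only, to exercise the retry path.
    calls["n"] += 1
    if calls["n"] == 1:
        raise RuntimeError("temporary failure")
    return f"validated {payload}"


workers = {
    "extractor": lambda doc: f"fields from {doc}",
    "validator": flaky_validator,
    "broken": lambda _: 1 / 0,  # always fails
}
plan = [
    ("extract", "extractor", "invoice.pdf"),
    ("validate", "validator", "invoice.pdf"),
    ("post", "broken", "invoice.pdf"),
]
report = orchestrate(plan, workers)
print(report["results"])
print("failed:", report["failed"])
```

Keeping per-subtask state separate from the merged results lets the orchestrator report partial success, which matters when one worker fails and the remaining output is still usable.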