
Key Takeaways

- Agentic AI requires a completely new measurement paradigm. An agent that is fast, productive by volume metrics, and inexpensive per token can still be failing if its outputs are unreliable, untrusted by the people using them, or disconnected from any measurable business outcome. The measurement system has to catch up to the technology.

- Productivity in agentic AI has three dimensions — Speed, Quality, and Autonomy — and the real value lives at their intersection. Measuring any single dimension in isolation creates a blind spot.

- 88% of executives from agentic AI early adopter orgs see ROI on at least one gen AI use case. The difference between those that see ROI and those that do not is almost never the agent. It is the absence of a baseline, a governance structure, and a connected metrics layer that can actually prove what the agent delivered.

- By 2027, AI agents are expected to make twice as many independent decisions in enterprise business processes as they do today. Every enterprise AI deployment running without a structured measurement framework today is compounding an accountability gap.

Has your agentic AI deployment been live long enough to process real workflows, orchestrate tasks, and execute decisions around the clock?

The real question is: what did you actually get for the million dollars you invested?

If you do not have a clear answer, the problem is not the technology. It is the absence of measurement. And in enterprise AI, that becomes the most expensive silence.

According to research from IBM and Oracle, by 2027, business leaders expect AI agents to make twice as many independent decisions in business processes as they do today. McKinsey estimates that effective, scaled agentic AI deployments could deliver productivity improvements of three to five percent annually and lift growth by ten percent or more. Many organizations report ROI at a use-case level, yet struggle to translate that into enterprise-wide financial impact, largely due to fragmented pilots, weak data foundations, and the absence of any coherent measurement discipline.

The gap between what agentic AI promises and what enterprises actually capture is not a gap in capability. It is a gap in accountability. Organizations that close it are the ones that treat measurement as a design decision, not an afterthought.

This blog lays out a complete framework for measuring productivity gains from agentic AI deployments, one that speaks the language of the boardroom as clearly as it speaks to engineering and operations. By the time you reach the conclusion, you will have a named, repeatable model that your leadership team can table at the next quarterly review.

Why Your Existing KPIs Are Already Obsolete for Measuring AI Productivity Gains

Most enterprises arrive at agentic AI carrying the measurement habits of every system that came before it. They track uptime, ticket resolution rates, API call volumes, and model response times. These are the right metrics for the wrong technology.

Agentic AI is fundamentally different from robotic process automation, from rule-based workflows, and from the generative AI assistants that preceded it. An agentic system does not respond to prompts. It reasons, plans, sequences tool use, self-corrects mid-execution, and pursues multi-step goals with limited human input. That is a different category of capability entirely, and it demands a different category of measurement.

Consider how enterprise measurement has evolved across three generations of automation:

| Generation | Technology | What Was Measured |
| --- | --- | --- |
| First | RPA and Rule-Based Automation | Task throughput, cost per transaction, error rate |
| Second | Generative AI and Copilots | Prompt quality, user adoption rate, time saved per task |
| Third | Agentic AI | Workflow outcomes, decision quality, business impact, autonomy level |

Most organizations are using first-generation metrics to evaluate third-generation technology. The result is a persistent measurement illusion: dashboards full of activity data, and boardrooms full of unanswered questions about actual business value.

McKinsey’s analysis of leading agentic AI deployments found that organizations realizing meaningful impact have abandoned volume metrics entirely. Instead, they track conversation quality, task-completion accuracy, escalation precision, and what McKinsey calls learning velocity: how effectively agents incorporate feedback and adapt to changing conditions. These are outcome signals, not activity signals, and the distinction matters enormously for how agentic AI effectiveness gets evaluated across the enterprise.

The Core Principle

If a metric cannot be traced to a business outcome, it is instrumentation data. Instrumentation data tells you the system is running. It does not tell you where it is going.

The Measurement Illusion: Why Most Enterprises Are Overestimating Their AI Returns

Many organizations declare AI deployments successful well before any rigorous analysis has been completed. The claims are genuine. The measurement behind them often is not.

Three structural forces drive this gap between perception and reality.

Cultural Bias Toward Celebration

Organizations that prioritize innovation tend to amplify early wins and quietly archive early AI failures. A pilot that improves one workflow by forty percent becomes a headline. The five workflows where the agent underperformed are filed under “learnings.” Culture is not the enemy of measurement, but unchecked, it becomes a distorting lens.

Stakeholder Pressure on Reporting

Investor and board reporting cycles create a structural incentive to surface positive AI stories. When there are no agreed standards for what a successful agentic AI deployment looks like, reporting becomes selective. The metrics chosen tend to be the ones that confirm the narrative rather than test it.

Absence of Standardized Benchmarks

The enterprise AI industry has not yet converged on a common measurement vocabulary. What one organization calls a productivity gain, another treats as a baseline expectation. Without agreed-upon industry benchmarks, evaluation is subjective by default, and subjective evaluation almost always leans optimistic.

Self-Assessment: Five Signs Your AI Measurement Is Theater, Not Science

- No documented baseline exists from before deployment.

- ROI figures exclude infrastructure, change management, and error correction costs.

- The metrics being reported were chosen after go-live.

- Engineering and finance are tracking completely different things.

- The last measurement review happened at launch.

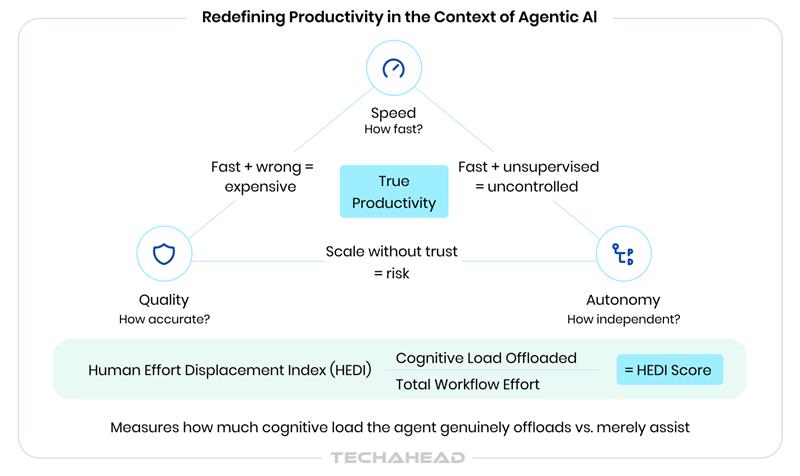

Redefining Productivity in the Context of Agentic AI

Before any framework can be applied, the term productivity needs to be redefined for the agentic context. Most enterprise leaders equate productivity with speed. Speed matters, but in isolation, it is a trap. A fast agent producing inaccurate outputs at scale is not productive. It is expensive.

Agentic AI productivity operates across three dimensions simultaneously, and the productive value of any deployment lives at the intersection of all three:

| Dimension | Definition | The Risk of Measuring It Alone |
| --- | --- | --- |
| Speed | Task completion time versus documented human baseline | Fast plus wrong equals expensive at scale |
| Quality | Accuracy, compliance, and usability of outputs without rework | Quality without speed gains loses the efficiency argument |
| Autonomy | Percentage of tasks completed without human override or correction | High autonomy on low-value tasks is not a productivity win |

Fourth concept: Human Effort Displacement Index

A composite measure of how much human cognitive load, decision-making time, and manual effort an agent is genuinely offloading, as distinct from merely assisting. An agent that helps a human complete a task faster is an assistant. An agent that removes the human from the loop for an entire category of work is a productivity multiplier. These require different metrics and produce different ROI calculations.

Productivity from agentic AI must also be measured at three levels simultaneously, because progress at one level can mask failure at another.

- Task level: did this specific agent action succeed?

- Workflow level: did the end-to-end process perform measurably better?

- Business outcome level: did the organization move closer to a strategic goal?

Most organizations measure only at the task level and declare victory. The business outcome signal, which is the only signal the board actually cares about, lives one or two levels above where most enterprise AI measurement currently operates.

Core Technical Metrics: What Your AI Team Must Track from Day One

Google Cloud’s framework for evaluating production agentic AI systems makes a point that too few enterprises act on: for multi-step agentic workflows, you must evaluate the trajectory, the sequence of reasoning and tool calls, not just the final output. An agent that reaches the right answer through flawed reasoning is not a reliable agent. It got lucky.

Here is the complete technical metrics stack, organized into four categories. Each category targets a different layer of agent behavior, and all four are required for a complete picture of agentic AI performance.

Task-Level Agent Performance

| Metric | Definition | Target at Maturity |
| --- | --- | --- |
| Task Completion Rate (TCR) | Percentage of tasks fully completed without human override | Above 85 percent |
| Task Success Rate (TSR) | Percentage of completed tasks meeting defined quality thresholds | Above 90 percent in production |
| Step Accuracy Rate | Percentage of individual steps in multi-step pipelines executed correctly | Above 95 percent |
| Retry and Fallback Rate | How often the agent loops back, fails mid-task, or triggers an exception | Below 10 percent |
| Plan Adherence | Whether the agent executed its planned sequence of tool calls in the correct order | Track deviation trend |
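As a rough illustration of how the first rows of this table could be computed, here is a minimal Python sketch. The `TaskRecord` schema and the `task_level_metrics` function are hypothetical names introduced for this example; a real deployment would derive these fields from whatever its trace logs actually record.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskRecord:
    """One agent task, as it might be exported from a trace log (hypothetical schema)."""
    completed: bool       # the task reached a final state
    human_override: bool  # a human corrected or took over at some point
    met_quality: bool     # output passed the agreed quality threshold
    retries: int          # retries, fallbacks, or exceptions during the task


def task_level_metrics(records: list[TaskRecord]) -> dict[str, float]:
    """Compute TCR, TSR, and retry rate as fractions between 0 and 1."""
    if not records:
        raise ValueError("at least one task record is required")
    total = len(records)
    completed = [r for r in records if r.completed]
    # TCR counts only tasks completed without a human override.
    autonomous = [r for r in completed if not r.human_override]
    return {
        "tcr": len(autonomous) / total,
        # TSR is measured over completed tasks, per the table definition.
        "tsr": (sum(r.met_quality for r in completed) / len(completed)) if completed else 0.0,
        "retry_rate": sum(r.retries > 0 for r in records) / total,
    }


# Illustrative data only: 8 clean tasks, 1 overridden task, 1 failed task.
sample = (
    [TaskRecord(True, False, True, 0)] * 8
    + [TaskRecord(True, True, False, 1)]
    + [TaskRecord(False, False, False, 3)]
)
metrics = task_level_metrics(sample)
print({name: round(value, 3) for name, value in metrics.items()})
```

Keeping TCR and TSR as separate numbers matters: an agent can complete most tasks on its own while a meaningful share of those completions still fall below the quality bar.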

Efficiency and Throughput Metrics

| Metric | Definition |
| --- | --- |
| Average Task Duration | Time per task measured against the documented human baseline for that process |
| Throughput Rate | Number of tasks handled per hour or per day at production scale |
| End-to-End Latency | Total time from task initiation to final resolution across the full agent trace |
| Pipeline Completion Rate | End-to-end workflow success rate across multi-agent orchestration sequences |
| Planning Efficiency | Whether the agent minimizes unnecessary reasoning steps by using tools effectively |

Reliability and Trust Metrics

These are the metrics that matter most to enterprise risk and compliance functions, and the ones most commonly absent from early-stage AI dashboards.

| Metric | Definition | What to Watch |
| --- | --- | --- |
| Human Override Rate | How often humans correct or take over agent actions | Track the trend downward. A sudden spike signals a regression. |
| Agent Escalation Rate | How often tasks are routed to human queues | Should decline as the agent matures and learns |
| Argument Hallucination Rate | How often the agent invents parameters for a function call that it has no context for | Critical in financial and regulated environments |
| Error Severity Index | Frequency multiplied by the downstream business cost of errors | A few high-severity errors can erase all efficiency gains |
| Consistency Score | Given the same input ten times, how much does the agent’s tool usage path vary? | High variance indicates reasoning instability |
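The last two rows lend themselves to a short worked example. The sketch below is one possible reading, not a standard definition: `consistency_score` is taken here to be the share of repeated runs that follow the most common tool-call path, and `error_severity_index` sums frequency times cost across error types. Both function names and all sample figures are assumptions made for illustration.

```python
from collections import Counter


def consistency_score(tool_paths: list[list[str]]) -> float:
    """Share of runs that follow the most common tool-call path (1.0 means no variation)."""
    if not tool_paths:
        raise ValueError("at least one run is required")
    counts = Counter(tuple(path) for path in tool_paths)
    most_common_count = counts.most_common(1)[0][1]
    return most_common_count / len(tool_paths)


def error_severity_index(errors: dict[str, tuple[int, float]]) -> float:
    """Sum of (frequency x downstream cost) over error types."""
    return sum(count * cost for count, cost in errors.values())


# Same input replayed ten times; tool names are made up for the example.
runs = [["lookup_invoice", "validate", "post_payment"]] * 8 + [
    ["lookup_invoice", "post_payment"],
    ["lookup_invoice", "validate", "validate", "post_payment"],
]
print(consistency_score(runs))

# Many cheap errors versus a couple of expensive ones (illustrative numbers).
errors = {"formatting_slip": (40, 5.0), "wrong_payment": (2, 1500.0)}
print(error_severity_index(errors))
```

In the sample data the two expensive errors contribute far more to the index than the forty cheap ones, which is the point the table makes about high-severity errors.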

Observability and Auditability Metrics

| Metric | Definition |
| --- | --- |
| Trace Coverage | Percentage of agent actions that are fully logged and inspectable at the step level |
| Mean Time to Detect (MTTD) | How quickly anomalous or underperforming agent behavior is identified |
| Audit Trail Completeness | Percentage of autonomous decisions with a full reasoning chain logged, critical in regulated industries |
| Learning Velocity | How quickly the agent measurably improves after feedback, retraining, or recalibration |

Learning Velocity is the metric most organizations forget. It is also the one that separates agents that compound in value from agents that plateau at their initial deployment performance.
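The article does not give a formula for Learning Velocity, so the following is only one way it might be quantified: as the least-squares slope of a quality metric (here, task success rate) across equally spaced review periods. The function name `learning_velocity` and the sample scores are assumptions.

```python
def learning_velocity(scores: list[float]) -> float:
    """Least-squares slope of a metric across equally spaced review periods.

    A positive value means the agent is still improving; a value near zero
    suggests a plateau. This is an illustrative definition, not a standard one.
    """
    n = len(scores)
    if n < 2:
        raise ValueError("at least two review periods are required")
    mean_x = (n - 1) / 2
    mean_y = sum(scores) / n
    numerator = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(scores))
    denominator = sum((i - mean_x) ** 2 for i in range(n))
    return numerator / denominator


improving = [0.80, 0.84, 0.88, 0.92]  # success rate per review period
plateaued = [0.90, 0.90, 0.90]
print(round(learning_velocity(improving), 4))
print(round(learning_velocity(plateaued), 4))
```

A slope has the advantage of using every review period rather than only the first and last, so a single unusually good or bad period distorts the result less.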

Business KPIs: What the C-Suite Dashboard Must Show

Technical metrics are the foundation. Business KPIs are the building blocks. The two must be connected by design, not assembled after the fact. What follows is a role-differentiated view of the business KPIs that enterprise AI programs should be producing, organized by the executive audience that acts on each one.

Operational KPIs

- FTE Equivalent Output: How many human work-hours per week does the agent replace or substantively augment? Expressed as full-time equivalent units, this is the most immediately legible metric for operational leaders evaluating headcount and capacity decisions.

- Process Cycle Time Reduction: The percentage reduction in end-to-end workflow time measured against the pre-deployment baseline. This connects directly to SLA performance and customer experience outcomes.

- Manual Effort Reduction Rate: Hours saved per process per month, monetized at the fully-loaded labor cost for that role.

- SLA Adherence Rate: Are AI-driven workflows meeting or exceeding the service levels that human teams previously delivered? A drop in SLA performance after deployment is a signal that the agent is not ready for production at its current autonomy level.
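The first three operational KPIs reduce to simple arithmetic once the baseline exists. A minimal sketch follows; the function names, the 40-hour FTE week, and the sample figures are assumptions chosen for illustration.

```python
def fte_equivalent(hours_offloaded_per_week: float, hours_per_fte_week: float = 40.0) -> float:
    """Express offloaded work-hours as full-time equivalents (40-hour week assumed)."""
    if hours_per_fte_week <= 0:
        raise ValueError("hours_per_fte_week must be positive")
    return hours_offloaded_per_week / hours_per_fte_week


def cycle_time_reduction_pct(baseline_hours: float, current_hours: float) -> float:
    """Percentage reduction in end-to-end cycle time versus the pre-deployment baseline."""
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    return (baseline_hours - current_hours) / baseline_hours * 100


def monthly_effort_savings(hours_saved_per_month: float, loaded_hourly_cost: float) -> float:
    """Monetize saved hours at the fully-loaded labor cost for the role."""
    return hours_saved_per_month * loaded_hourly_cost


print(fte_equivalent(120))                 # 120 offloaded hours per week
print(cycle_time_reduction_pct(48, 12))    # cycle time went from 48h to 12h
print(monthly_effort_savings(200, 65.0))   # 200 hours saved at a 65.00 loaded rate
```

None of these numbers can be produced without the documented baseline, which is why the baseline comes first in any measurement plan.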

Financial KPIs

- Cost Per Task: A fully-loaded cost comparison between AI-executed and human-executed tasks, including compute, licensing, integration, maintenance, and error correction. Google Cloud’s framework makes an important point here: cost-per-token metrics are misleading for agents. If an agent costs ten cents per run but fails half the time, the actual cost per successful outcome doubles.

- Cost Avoidance: The costs that would have been incurred without the agent. In high-volume, process-heavy operations, this is often the single largest value category, and it is consistently underreported.

- Revenue Impact Attribution: Revenue enabled or accelerated by AI-driven speed improvements, including compressed quote-to-cash cycles, faster customer resolution, and reduced churn among high-value segments.

- Error-Cost Reduction: The financial value of compliance failures, rework cycles, and manual corrections that the agent prevented. This connects directly to the Error Severity Index from the technical metrics layer.
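The Cost Per Task point above can be made concrete with one line of arithmetic: divide the cost of a run by the share of runs that succeed. The function name below is hypothetical, and the sketch deliberately ignores error correction and other loaded costs that a full comparison would add.

```python
def cost_per_successful_outcome(cost_per_run: float, success_rate: float) -> float:
    """Effective cost of one successful outcome when only some runs succeed."""
    if not 0 < success_rate <= 1:
        raise ValueError("success_rate must be in the interval (0, 1]")
    return cost_per_run / success_rate


# Ten cents per run at a 50 percent success rate: each success costs twice the run price.
effective_cost = cost_per_successful_outcome(0.10, 0.5)
print(round(effective_cost, 2))
```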

Strategic KPIs

- AI Adoption Rate: Percentage of target workflows operating on agentic AI versus the original roadmap commitment. Consistent underdelivery against the adoption roadmap is an early signal of a governance or change management problem.

- Decision Velocity: Time from data signal to insight to action, measured before and after AI deployment. This is the strategic productivity metric: how much faster is the organization able to move?

- Employee Productivity Lift: Output per employee in AI-augmented teams compared to matched, non-augmented teams. This provides a controlled view of incremental productivity gains from agentic AI beyond pure automation.

- AI Maturity Score: Where the organization sits on a defined capability scale, used for competitive benchmarking and investment prioritization across the portfolio of AI deployments.


The PACE Framework: A Model for Measuring Agentic AI ROI

Frameworks earn their place when they do something lists cannot: they create sequence. They force an organization to do things in the right order, which is rarely the order that feels most natural. The PACE Framework is a four-stage model for measuring productivity gains from agentic AI deployments that works for a startup deploying its first agent and for an enterprise managing a portfolio of fifty. Unlike traditional ROI models, PACE separates autonomy from cost impact, which is critical for agentic systems.

PACE stands for Performance Baseline, Autonomy Measurement, Cost-Impact Calculation, and Evolution Tracking. Each stage builds on the one before it, and none can be skipped without compromising the integrity of what follows.

P: Performance Baseline

Nothing in the PACE Framework works without this stage, and this stage must happen before deployment begins. Document the current state of every process the agent will touch with precision:

- Fully-loaded process cost per unit, including labor, error correction, and overhead

- Average cycle time per process unit

- Current error rate and the average downstream cost of each error

- Human hours consumed per week across all participants in the workflow

- Output quality score, whether defined by accuracy, customer satisfaction, or compliance adherence

A: Autonomy Measurement

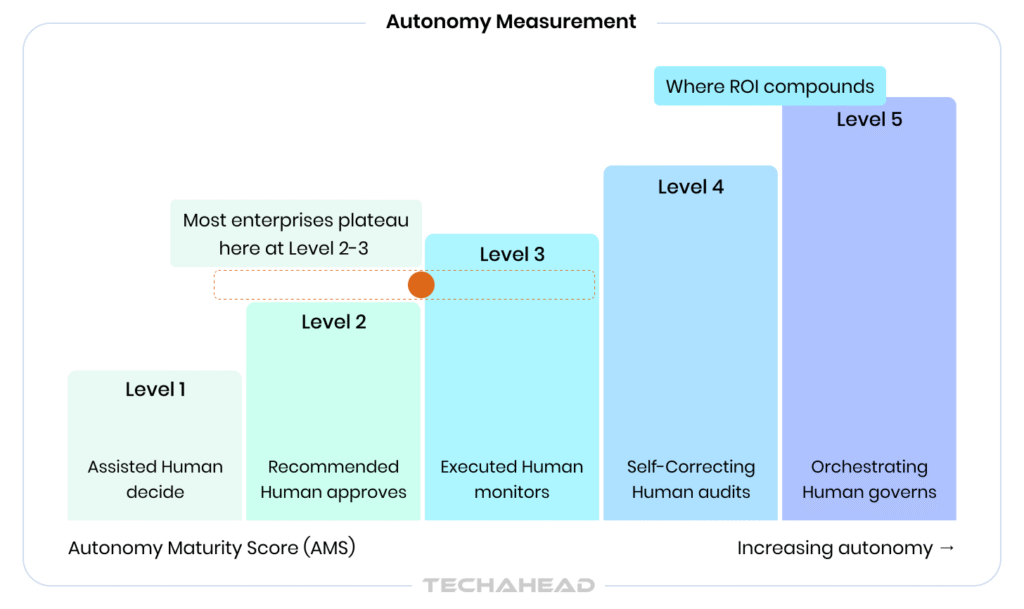

The first thirty to ninety days of a deployment are the autonomy measurement phase. The central question is not whether the agent is performing tasks. It is how genuinely autonomous that performance is. The Autonomy Maturity Score (AMS) provides a structured answer on a five-level scale:

| AMS Level | Description | Human Role |
| --- | --- | --- |
| Level 1: Assisted | Agent provides suggestions; human makes all decisions | Decides |
| Level 2: Recommended | Agent recommends actions with rationale; human approves | Approves |
| Level 3: Executed | Agent executes tasks; human monitors and can intervene | Monitors |
| Level 4: Self-Correcting | Agent executes and corrects its own errors; human audits periodically | Audits |
| Level 5: Orchestrating | Agent coordinates other agents; human governs the system | Governs |

Track the AMS at thirty, sixty, and ninety days. The trajectory is more informative than the point-in-time score. An agent that moves from Level 2 to Level 4 in ninety days is scaling correctly. An agent that stays at Level 2 after three months has an adoption or capability problem that needs diagnosis before the Cost-Impact Calculation will mean anything.
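One lightweight way to record the thirty, sixty, and ninety day checkpoints is an ordered enum plus a trend check. The `AMS` enum mirrors the five levels in the table; the `ams_trajectory` function and its labels ("advancing", "stalled", "regressing") are illustrative assumptions, not part of the framework.

```python
from enum import IntEnum


class AMS(IntEnum):
    """Autonomy Maturity Score levels from the table above."""
    ASSISTED = 1
    RECOMMENDED = 2
    EXECUTED = 3
    SELF_CORRECTING = 4
    ORCHESTRATING = 5


def ams_trajectory(checkpoints: dict[int, AMS]) -> str:
    """Compare the earliest and latest checkpoints, keyed by day number."""
    if len(checkpoints) < 2:
        raise ValueError("at least two checkpoints are required")
    days = sorted(checkpoints)
    first, last = checkpoints[days[0]], checkpoints[days[-1]]
    if last > first:
        return "advancing"
    if last < first:
        return "regressing"
    return "stalled"


scaling = {30: AMS.RECOMMENDED, 60: AMS.EXECUTED, 90: AMS.SELF_CORRECTING}
stuck = {30: AMS.RECOMMENDED, 60: AMS.RECOMMENDED, 90: AMS.RECOMMENDED}
print(ams_trajectory(scaling))
print(ams_trajectory(stuck))
```

A "stalled" result at day ninety is the case the text flags for diagnosis before any cost-impact work begins.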

C: Cost-Impact Calculation

Starting at month three, with sufficient data volume and a clear autonomy trajectory, the Cost-Impact Calculation becomes meaningful. Two formulas anchor this stage.

Productivity Gain Formula

Productivity Gain (%) = [(Human Baseline Output Cost − AI-Augmented Output Cost) ÷ Human Baseline Output Cost] × 100

- Adjusted for: error correction costs + human override time + infrastructure and licensing + change management and reskilling costs
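Here is a small worked example of the Productivity Gain formula. It assumes that the listed adjustments are added to the AI-augmented cost before comparing it with the human baseline, which is one reasonable reading of "adjusted for". The function name and all figures are illustrative.

```python
def productivity_gain_pct(
    human_baseline_cost: float,
    ai_run_cost: float,
    adjustments: dict[str, float],
) -> float:
    """Productivity gain in percent, with adjustment costs added to the AI side."""
    if human_baseline_cost <= 0:
        raise ValueError("human_baseline_cost must be positive")
    ai_augmented_cost = ai_run_cost + sum(adjustments.values())
    return (human_baseline_cost - ai_augmented_cost) / human_baseline_cost * 100


adjustments = {
    "error_correction": 5_000,
    "human_override_time": 3_000,
    "infrastructure_and_licensing": 10_000,
    "change_management_and_reskilling": 7_000,
}
gain = productivity_gain_pct(100_000, 40_000, adjustments)
unadjusted = productivity_gain_pct(100_000, 40_000, {})
print(round(gain, 1), round(unadjusted, 1))
```

Comparing the adjusted and unadjusted figures side by side shows how much of a headline gain disappears once the full cost picture is included.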

AI ROI Formula

AI ROI (%) = [(Total Business Value Gained − Total AI Investment Cost) ÷ Total AI Investment Cost] × 100

- Total AI Investment includes: licensing + compute + integration + maintenance + training + change management

- Total Business Value includes: labor cost avoided + error cost reduced + revenue accelerated + compliance cost avoided
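The AI ROI formula and its two component lists translate directly into code. This is a sketch with made-up figures; the function name `ai_roi_pct` and the dictionary keys are assumptions that simply mirror the categories listed above.

```python
def ai_roi_pct(business_value: dict[str, float], investment: dict[str, float]) -> float:
    """AI ROI in percent: (value gained - investment) / investment x 100."""
    total_value = sum(business_value.values())
    total_investment = sum(investment.values())
    if total_investment <= 0:
        raise ValueError("total investment must be positive")
    return (total_value - total_investment) / total_investment * 100


business_value = {
    "labor_cost_avoided": 300_000,
    "error_cost_reduced": 50_000,
    "revenue_accelerated": 100_000,
    "compliance_cost_avoided": 50_000,
}
investment = {
    "licensing": 120_000,
    "compute": 60_000,
    "integration": 80_000,
    "maintenance": 40_000,
    "training": 30_000,
    "change_management": 70_000,
}
roi = ai_roi_pct(business_value, investment)
print(round(roi, 1))
```

Passing the cost and value categories as named entries, rather than as two totals, makes it obvious when a category such as change management has been left out.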

E: Evolution Tracking

The PACE Framework is a continuous discipline. Quarterly evolution tracking answers three questions that determine the future investment case for every deployed agent:

- Is the agent’s Learning Velocity positive? Is it still improving, or has it plateaued at its initial deployment performance?

- What is the Portfolio AI Health Score across all deployed agents? Which ones are compounding in value, and which are consuming resources without proportional return?

- Which agents have proven their ROI case and are ready to scale to additional workflows or user groups?

Evolution Tracking turns agentic AI from a project into a program. It shifts the organizational posture from “did this work?” to “how do we make this work better and where do we expand next?” That shift is what separates enterprises that build durable AI capability from those that cycle through expensive pilots indefinitely.

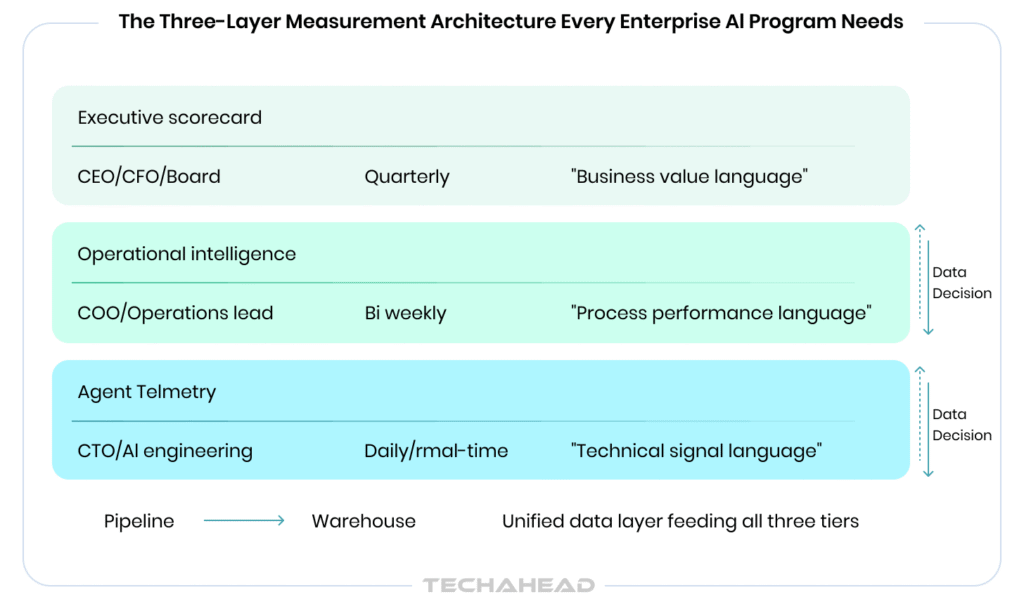

The Three-Layer Measurement Architecture Every Enterprise AI Program Needs

The metrics exist. The formulas exist. What many organizations are missing is the AI infrastructure to collect, aggregate, and surface this information to the right people in the right format at the right cadence. The solution is a three-layer measurement architecture that mirrors the organizational levels it serves.

Layer 1: Agent Telemetry (Engineering View)

Raw operational data: logs, traces, token usage, latency, step-by-step execution records, error events, and tool call sequences. This layer captures what the agent actually did, in granular detail, at every step. LLM observability platforms handle this layer. The primary audience is the AI engineering team and ML operations function. Review cadence: continuous, with automated alerting on anomaly thresholds.

Layer 2: Operational Intelligence (Operations View)

Aggregated KPIs derived from the telemetry layer: Task Completion Rate trends, SLA status, throughput curves, override rate patterns, and pipeline health scores. This layer answers the question: is the deployment performing as expected across the operational environment? BI integration tools connect agent logs to operational dashboards.

Layer 3: Executive Scorecard (Board View)

Distilled business outcomes: ROI tracking against baseline, cost avoidance realized, FTE equivalent output delivered, PACE Framework progress score, and portfolio-level AI health across all agents. This layer speaks the language of the boardroom.

Seven Measurement Mistakes That Derail Enterprise AI Programs

These are the patterns that appear consistently across agentic AI programs that fail to demonstrate value. Some are technical. Most are organizational. All of them are avoidable with a structured approach.

- Measuring before sufficient data volume exists. Declaring ROI at week three of a ninety-day deployment is not analysis. Agents need volume and time to stabilize. An early point-in-time measurement tends to capture the weakest performance of the agent’s lifecycle and is the fastest way to undermine internal confidence in a program that is actually working.

- Falling into the vanity metrics trap. API calls, tokens processed, and model response times tell you the system is running. They do not tell you what the system is delivering. Measuring activity instead of outcomes is how organizations end up with impressive-looking dashboards and unanswerable questions.

- Skipping the baseline. The most common and most costly mistake in enterprise AI measurement. Without a documented pre-deployment baseline, productivity gains from agentic AI cannot be proven. They can only be asserted. Assertions do not survive budget scrutiny.

- Ignoring error costs in ROI calculations. Counting only the efficiency wins while excluding the cost of AI mistakes systematically inflates every ROI figure.

- Running siloed measurements across teams. When engineering tracks latency and finance tracks cost savings with no shared translation layer, the organization has data but not intelligence. The metrics disconnect between technical and business teams is the primary reason that working AI programs lose executive support.

- Treating ROI as a launch event. Agentic AI ROI compounds over time as agents learn, as workflows mature, and as the organization builds confidence in autonomous execution. Measuring ROI only at launch captures the deployment at its least capable point and produces the least favorable numbers of the entire program lifecycle.

- Excluding change management costs. People, process redesign, reskilling programs, and stakeholder adoption are real costs that belong in every ROI calculation. Organizations that exclude them produce numbers that look compelling in the first budget cycle and create credibility problems in the second.

Your Enterprise AI Measurement Roadmap: A Phased Approach

The measurement discipline described in this framework does not need to be built all at once. It needs to be built in the right sequence. Here is a phased roadmap that applies to organizations at any stage of their agentic AI journey.

Phase 1: Pre-Deployment Foundation (Weeks 1 to 4)

- Document process baselines across all target workflows with precision

- Agree on metric definitions across engineering, operations, and finance before any agent goes live

- Select, configure, and validate observability tooling against the three-layer architecture

- Establish governance structure, audit cadence, and ethics oversight before deployment begins

Deliverable: An AI Measurement Charter, a single-page internal document that defines what success looks like, how it will be measured, and who owns each metric, agreed upon before go-live.

Phase 2: Early Signal Capture (Days 1 to 90 Post-Deployment)

- Track Autonomy Maturity Score weekly across all deployed agents

- Monitor technical metrics daily through the Layer 1 telemetry dashboard

- Produce the first operational intelligence report at Day 30

Deliverable: A 30-Day Agent Performance Report covering technical metrics, early AMS progression, and the first business signal.

Phase 3: ROI Validation (Months 3 to 12)

- Apply the PACE Framework Cost-Impact Calculation with full cost inclusion

- Present the first formal ROI report at month three

- Begin portfolio-level AI health scoring across all active agents

Deliverable: A Quarterly AI ROI Report in boardroom-ready format, tracking the PACE Framework through all four stages.

Phase 4: Continuous Optimization (Month 12 Onward)

- Quarterly evolution tracking and expansion or retirement decisions for each agent

- Annual AI measurement framework review and calibration against industry benchmarks

- Competitive benchmarking of AI maturity scores against sector peers

Deliverable: An Annual AI Maturity and ROI Assessment that feeds directly into the capital allocation decision for the following year’s AI investment.

Readiness Checklist: Is Your Organization Ready to Measure Agentic AI?

Use this checklist before any agentic AI deployment to assess measurement readiness. If more than two items are unchecked, the deployment plan is incomplete.

Readiness Questions:

- Do we have documented baselines for all AI-targeted processes?

- Have we defined metric ownership across engineering, operations, and finance?

- Is our observability infrastructure configured and validated before go-live?

- Do we have a governance and audit structure in place before deployment?

- Does our ROI calculation include all cost categories, including change management?

- Are we tracking Learning Velocity as a forward-looking performance indicator?

- Do we have a board-ready reporting cadence established for each stakeholder level?

- Have we defined what each Autonomy Maturity Score level looks like for our use case?

Conclusion

The organizations pulling ahead in the agentic AI era share a common discipline. They did not start with the most sophisticated agents. They started with the clearest measurement frameworks. They knew what success looked like before they deployed, they instrumented to capture it from day one, and they collaborated with an experienced agentic AI company to build the governance structures that drive trustworthy results.

Measuring productivity gains from agentic AI deployments is not a technical challenge. It is an organizational one. It requires engineering, operations, and finance to share a vocabulary. It requires leadership to treat measurement as a pre-deployment decision rather than a post-deployment rationalization. And it requires the intellectual honesty to report on what the agents are actually delivering, not what the investment case projected they would deliver.

Enterprise AI success metrics are not a reporting exercise. They are a management system. The companies that build it correctly will not just be able to answer the question in board meetings. They will be able to answer the more important question that follows: where should we expand next, and what will it return?

The AI measurement view should sit at three levels: strategic adoption, business velocity, and financial return. Specifically: AI Adoption Rate (percentage of target workflows live versus the roadmap commitment), Decision Velocity (how much faster the organization moves from data signal to action after deployment), Employee Productivity Lift (output per employee in AI-augmented teams versus matched non-augmented teams), and the AI Maturity Score (where the program sits on a defined capability scale for competitive benchmarking).

ROI calculation for agentic AI requires two inputs that most organizations get wrong: a complete cost picture and a pre-deployment baseline. The formula is straightforward: Total Business Value Gained minus Total AI Investment Cost, divided by Total AI Investment Cost, multiplied by 100. The errors happen on both sides of that equation. Total AI Investment Cost must include licensing, compute, integration, maintenance, reskilling, and change management. Total Business Value must include labor cost avoided, error cost reduced, revenue accelerated, and compliance cost avoided.

There is no universal benchmark because task completion rate depends heavily on the complexity of the workflow and the maturity of the deployment. As a general reference point, well-configured production agents in operations-heavy workflows typically reach above 85% task completion at maturity, with task success rates above 90%. The more useful question is not what the rate is at a single point in time, but whether it is improving. Google Cloud’s production framework identifies plan adherence and argument hallucination rate as the two metrics that surface agent problems fastest; track both alongside completion rate from day one. A declining completion rate after an initial high is a reliability signal. A completion rate that is high but paired with a high human override rate means the agent is completing tasks that humans are then correcting, which is a quality problem disguised as a completion success.

AI productivity metrics live at the technical and operational layer: task completion rates, latency, throughput, override rates, and learning velocity. They tell engineering and operations teams whether the agent is functioning correctly and improving over time. AI business KPIs live at the outcome layer: FTE equivalent output, cost per task, revenue impact attribution, decision velocity, and SLA adherence. They tell the organization whether the AI program is delivering value. The critical error most enterprises make is treating these as two separate conversations. The organizations that struggle most with AI measurement are those that try to show the board everything instead of translating operational metrics into five to eight strategic indicators that inform decisions. Every technical metric needs a named business owner who translates it into a business decision; that translation layer is what separates a measurement program from a reporting exercise.

An agent is ready to scale when it has demonstrated four things simultaneously over a sustained period: a Task Completion Rate consistently above 85 percent, a Human Override Rate that has declined to below 10 percent and is stable, a positive Learning Velocity trajectory (still improving, not plateauing), and a positive ROI calculation that includes all cost categories. In Autonomy Maturity Score terms, an agent at Level 3 or above has earned the confidence required for expansion. The pattern that consistently works in enterprise agentic AI adoption is to take one high-value use case to full production first, validate the measurement framework, then scale to additional workflows once multi-user authorization and governance are proven. Scaling an agent that has not yet earned its ROI case in a single workflow simply distributes the measurement gap across a larger surface area.
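The scaling criteria above can be expressed as a simple gate that returns whatever is still blocking expansion. The `AgentSnapshot` schema and `scaling_blockers` function are hypothetical, and the sketch checks a single snapshot only: it does not verify that the values have held over a sustained period, which the criteria also require.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentSnapshot:
    """Current readings for one agent (hypothetical schema; rates are fractions)."""
    task_completion_rate: float
    human_override_rate: float
    learning_velocity: float
    roi_pct: float
    ams_level: int


def scaling_blockers(snapshot: AgentSnapshot) -> list[str]:
    """Return the unmet scaling criteria; an empty list means the gate is passed."""
    blockers = []
    if snapshot.task_completion_rate <= 0.85:
        blockers.append("task completion rate is not above 85 percent")
    if snapshot.human_override_rate >= 0.10:
        blockers.append("human override rate is not below 10 percent")
    if snapshot.learning_velocity <= 0:
        blockers.append("learning velocity is not positive")
    if snapshot.roi_pct <= 0:
        blockers.append("ROI with full cost inclusion is not positive")
    if snapshot.ams_level < 3:
        blockers.append("Autonomy Maturity Score is below Level 3")
    return blockers


ready = AgentSnapshot(0.91, 0.06, 0.02, 25.0, 4)
not_ready = AgentSnapshot(0.88, 0.18, 0.0, 12.0, 2)
print(scaling_blockers(ready))
print(scaling_blockers(not_ready))
```

Returning the list of unmet criteria, rather than a bare yes or no, gives the owning team a concrete diagnosis to act on before the next review.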