Enterprises across manufacturing, logistics, real estate, and utilities are investing in Internet of Things (IoT) platforms to gain real-time visibility, automate operations, and reduce manual dependencies. What started as small pilot deployments has quickly evolved into large-scale, multi-device ecosystems driving core business functions.

Key Takeaways

- Basic IoT app development costs start at about $58,000 and can reach $1,600,000, depending on complexity.

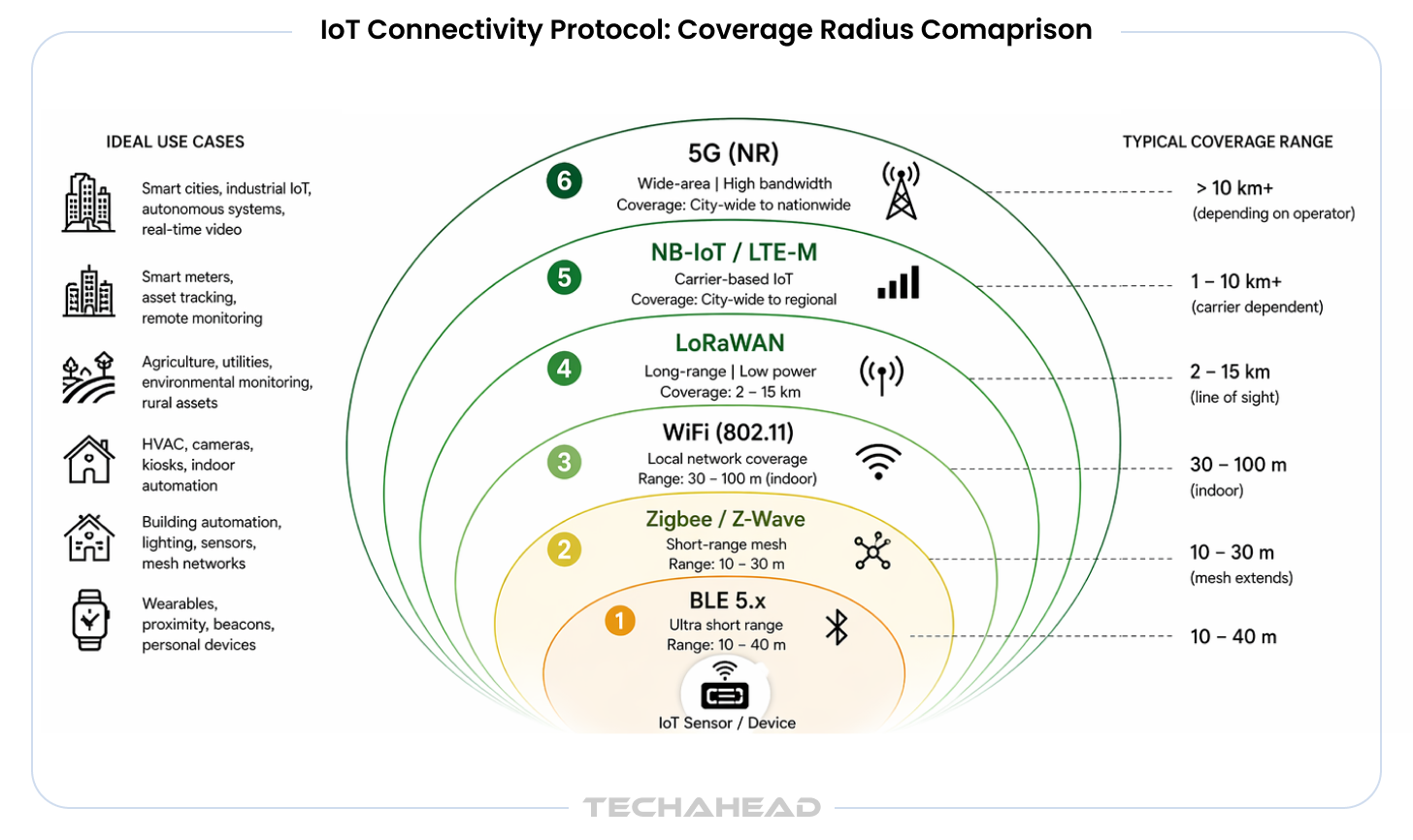

- Protocol selection locks in 5-year TCO. Choosing LTE-M over LoRaWAN for a 1,000-device deployment can cost up to $300,000 more over five years. This decision must be made at the architecture stage, not during implementation.

- Industrial hardware costs 10–50x consumer hardware — for good reason. A ruggedized $150 MCU vs. a $10 commodity unit reflects real differences in temperature tolerance, certification compliance, and 10-year field life.

- Security is 15–25% of budget and non-negotiable. Value-engineering security out of early IoT budgets is the most common cause of costly mid-project reinstatement and post-launch remediation.

- Every IoT project needs 2–3 hardware prototype cycles. Budget $15,000–$40,000 per iteration. Teams that skip this end up absorbing the cost in production failures.

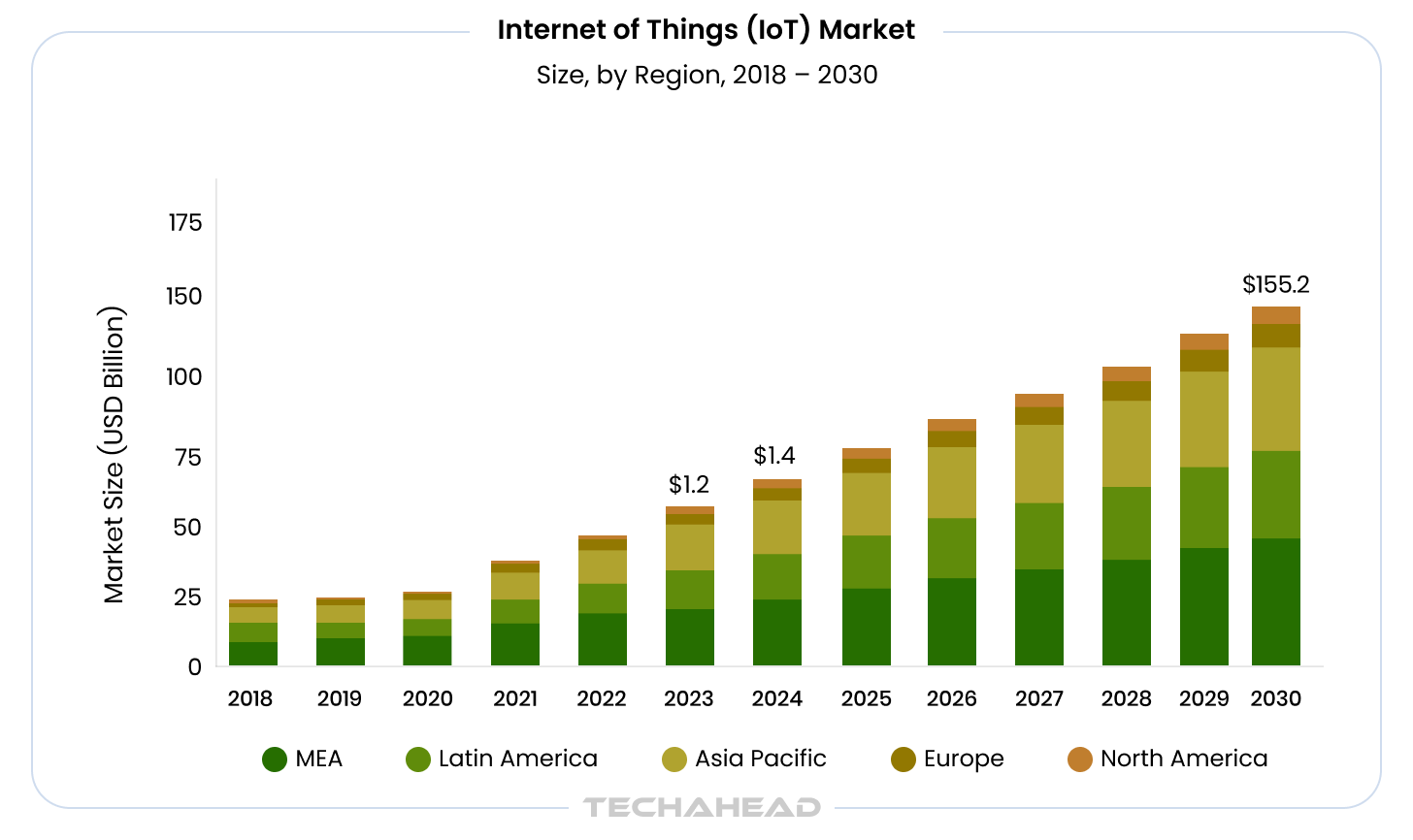

According to Grand View Research, the global Internet of Things (IoT) market is projected to reach USD 2.65 trillion by 2030, growing at a CAGR of 11.4% from 2024 to 2030. That growth is reaching regulated industries such as healthcare, finance, energy and utilities, and transportation and logistics.

But behind every IoT deployment is a cost structure that is often underestimated.

Enterprise IoT projects routinely surprise finance teams. The hardware budget looks manageable, the cloud estimate seems reasonable, and then the full cost of connectivity infrastructure, RF certification, security tooling, and ongoing device management comes into view. For a 500-node industrial monitoring deployment, those “secondary” line items can equal or exceed the cost of the original software build.

As a result, IoT app development costs vary widely. A small-scale deployment may start around $58,000, while enterprise-grade platforms with thousands of devices can exceed $1,600,000, depending on architecture complexity, scale, and operational requirements.

This guide breaks down every cost layer, including hardware, connectivity protocols, edge computing architecture, cloud backend, security, and operations, with real price ranges for the deployment scales that matter to enterprise buyers in 2026. It is written for operations leaders, CIOs, and product managers who need to build defensible budgets, not for developers who already know the stack.

In a real estate IoT engagement with Boxlty, TechAhead implemented Bluetooth-enabled smart locks and remote access systems across multiple properties, enabling seamless property access without physical presence. However, the multi-site deployment introduced connectivity variability across locations, requiring additional effort in network optimization and device provisioning at scale. This directly impacted the initial cost estimate, as ensuring consistent performance across distributed environments added both infrastructure and integration overhead.

The Full IoT Cost Stack: Seven Layers That Drive the Budget

Before quoting any ranges, it helps to enumerate all the layers a budget must cover. Vendors who give low early estimates tend to omit layers three through seven.

- Hardware and firmware: sensors, microcontrollers (MCUs), and the embedded software that runs on them

- Connectivity: the radio protocol, SIM or gateway infrastructure, and recurring carrier or spectrum costs

- Edge computing: on-device inference, gateway compute, or a hybrid, each with a different CapEx and OpEx profile

- Cloud backend: IoT broker (AWS IoT Core, Azure IoT Hub, or equivalent), time-series storage, and analytics pipeline

- Mobile/web application: the operator dashboard, consumer app, or both

- Security: device authentication, OTA update infrastructure, and end-to-end encryption

- Ongoing operations: device management, telemetry storage at scale, firmware OTA rollouts, and support

Most enterprise IoT app development engagements allocate roughly 40-50% of the total budget to software development (layers 3-5), with the remainder split across hardware, security, and ongoing operations.

Hardware and Firmware: Where Commodity Ends and Industrial Begins

Hardware is the most visible cost, but the per-unit figure is far less important than the total bill of materials multiplied by device count, plus the cost of iteration.

Microcontroller Cost Tiers

| Hardware Category | Unit Cost Range | Application |
| --- | --- | --- |
| Commodity MCU (ESP32, STM32 entry-level) | $2–$15 | Smart home devices, low-frequency sensors |
| Mid-tier connected module (Nordic nRF, Particle) | $15–$50 | Asset trackers, building sensors |
| Industrial-grade MCU/SoM (NXP i.MX, TI Sitara) | $50–$200 | Factory automation, edge compute nodes |
| Ruggedized industrial unit (IP67+, wide temp range) | $200–$500+ | Outdoor utilities, heavy equipment, harsh environments |

A 500-device industrial monitoring deployment using $150 ruggedized units carries $75,000 in hardware alone before any software. The same deployment using $10 commodity MCUs costs $5,000 in hardware, but commodity units cannot meet the temperature tolerances, certification requirements, or 10-year field life expected in industrial settings.

Firmware Development Cost

Embedded firmware is a distinct engineering discipline from mobile or cloud development. Expect $10,000-$30,000 for a basic firmware layer on commodity hardware, and $40,000-$100,000+ when the device requires custom driver development, proprietary communication stack integration, or multi-protocol support. Firmware engineers in the US earn $90,000-$145,000 in annual salary, which translates to blended agency rates of $120-$180/hour for this specialty.

Hardware Iteration Cycles

Most IoT projects require two to three prototype rounds before a production-ready hardware design is locked. Each iteration includes PCB redesign, component sourcing, assembly, and re-testing. Budget $15,000-$40,000 per hardware iteration cycle for a typical sensor node, more for devices with complex RF or power management requirements. If your device has a custom radio design rather than a pre-certified module, add another $10,000-$40,000 for FCC certification testing alone, plus $8,000-$20,000 for CE marking if the European market is in scope.
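As a rough illustration of how unit cost, device count, and prototype iterations combine, the sketch below adds them up for the 500-device example used earlier. The function name and its parameters are hypothetical and exist only for this example; the dollar inputs are the ranges quoted in this section.

```python
def hardware_budget(unit_cost, device_count, prototype_cycles, cost_per_cycle, certification=0):
    """Total hardware-side budget in USD: units + prototype iterations + certification."""
    return unit_cost * device_count + prototype_cycles * cost_per_cycle + certification


# 500 ruggedized units at $150 each, with two to three prototype cycles
# at $15,000-$40,000 per cycle (pre-certified radio module assumed, so no
# custom-radio certification line is added here).
low = hardware_budget(150, 500, prototype_cycles=2, cost_per_cycle=15_000)
high = hardware_budget(150, 500, prototype_cycles=3, cost_per_cycle=40_000)

print(f"Hardware budget: ${low:,} to ${high:,}")
```

Under these assumptions the iteration cycles can add more to the hardware line than the production units themselves, which is why they belong in the first estimate rather than a later change order.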

Connectivity Protocol Selection: The Decision That Locks In 5-Year TCO

Protocol selection is one of the highest-leverage architecture decisions in any IoT project. The right choice depends on device count, message frequency, range requirements, power budget, and latency tolerance, not on whichever protocol the development team has used most recently.

Connectivity Protocol Comparison

| Protocol | Module Cost | Range | Power Draw | Monthly Carrier Cost | Best Use Case |
| --- | --- | --- | --- | --- | --- |
| WiFi (802.11) | $3–$8 | 30–100m indoor | High | None (private LAN) | High-bandwidth, fixed devices; HVAC, kiosks |
| BLE 5.x | $2–$6 | 10–40m | Very low | None | Short-range sensors, wearables, proximity |
| Zigbee / Z-Wave | $4–$10 | 10–30m (mesh extends) | Low | None | Building automation mesh, lighting control |
| LoRaWAN | $8–$15 | 2–15km | Very low | $0 (private) / $2–$5/device/year (network server) | Wide-area sensor networks, utilities, agriculture |
| NB-IoT | $5–$12 | Carrier-dependent | Very low | $0.50–$3/device/month | Smart meters, low-frequency municipal sensors |
| LTE-M | $15–$35 | Carrier-dependent | Low–medium | $1–$5/device/month | Asset tracking, mobile devices, voice + data |
| 5G (NR) | $50–$150 | Carrier-dependent | High | $5–$20/device/month | High-bandwidth industrial, real-time video |

5-Year TCO matters more than module cost. A LoRaWAN deployment of 1,000 sensors carries a 5-year connectivity cost near zero on unlicensed spectrum, versus $60,000-$300,000 in carrier fees for the same devices on LTE-M. However, LoRaWAN’s bandwidth ceiling (250 bps-11 kbps effective data rate) rules it out for any application requiring frequent large payloads.
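The five-year figures above follow directly from the per-device fees in the comparison table. A minimal sketch of that arithmetic is shown here; the function name is hypothetical, and the model deliberately ignores gateway hardware, SIM provisioning, and module cost, which a real comparison would need to include.

```python
def connectivity_tco(devices, annual_fee_per_device, years=5):
    """Recurring connectivity fees only, in USD, over the given number of years."""
    return devices * annual_fee_per_device * years


DEVICES = 1_000

# LTE-M at $1-$5 per device per month, converted to an annual fee.
lte_m = (connectivity_tco(DEVICES, 1 * 12), connectivity_tco(DEVICES, 5 * 12))

# LoRaWAN with a hosted network server at $2-$5 per device per year.
lorawan_managed = (connectivity_tco(DEVICES, 2), connectivity_tco(DEVICES, 5))

# LoRaWAN on a fully private network: no recurring carrier fee in this model.
lorawan_private = (0, 0)

print("LTE-M:", lte_m)
print("LoRaWAN (hosted network server):", lorawan_managed)
print("LoRaWAN (private):", lorawan_private)
```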

For mixed environments, such as a manufacturing floor where some assets are fixed and others are mobile, a hybrid protocol architecture (LoRaWAN for stationary sensors, LTE-M for mobile assets) often delivers the best TCO. It adds integration complexity, however, and should be scoped into the backend design from day one.

In the IMI Heatmiser smart home engagement, TechAhead worked with a combination of IoT technologies to enable centralized temperature control through a single mobile application. While the case study does not explicitly document the protocol selection, the system required reliable real-time communication across multiple in-home devices and user interfaces. This implies that protocol and connectivity decisions were driven by the need for seamless control, low latency, and consistent user experience across residential environments.

Edge Computing: The Architecture Decision That Shapes Cloud Spend

The question of where computation happens, on the device, at an edge gateway, or in the cloud, has direct implications for cloud infrastructure cost, latency, and resilience. There is no universally correct answer; the optimal placement depends on data volume, required response time, and connectivity reliability.

Three Edge Architecture Profiles

On-device inference (TinyML): Processing happens inside the MCU or embedded module. This is the lowest-latency and lowest-connectivity-cost option, but requires more expensive hardware (ML-capable parts such as Arm Cortex-M55-based MCUs or the Espressif ESP32-S3 start around $3-$20 in quantity) and firmware engineering effort to optimize models for constrained environments. Best for: anomaly detection on vibration sensors, keyword spotting, local threshold alerts.

Edge gateway compute: A local gateway (industrial PC, NVIDIA Jetson, or equivalent, priced $200-$2,000 per unit) aggregates data from tens to hundreds of field devices, runs inference or business logic, and pushes only relevant events to the cloud. This architecture can reduce cloud data ingestion costs by 60-80% in telemetry-heavy applications. The tradeoff is gateway hardware CapEx, firmware maintenance for an additional tier, and physical deployment cost per site.

Cloud-centric processing: All raw sensor data flows to the cloud broker for processing. This minimizes edge hardware cost but maximizes cloud ingestion, storage, and egress fees, especially at scale. Cellular or satellite-connected devices also incur per-MB transmission charges for every payload transmitted; at $0.40/MB on many cellular IoT plans, a device sending 1 MB/day across 1,000 units costs $146,000/year in data transmission alone.
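The transmission figure above can be reproduced with a few lines of arithmetic. The sketch below also shows what happens if filtering at the device or gateway removes part of the payload before it reaches the cellular link. The 70% reduction is an illustrative assumption, not a measured value, and the function names are hypothetical.

```python
def annual_cellular_data_cost(devices, mb_per_device_per_day, usd_per_mb, days=365):
    """Yearly per-MB transmission charges in USD for the whole fleet."""
    return devices * mb_per_device_per_day * days * usd_per_mb


def after_edge_filtering(raw_cost, reduction_fraction):
    """Cost remaining if a fraction of the traffic is dropped before transmission."""
    return raw_cost * (1 - reduction_fraction)


raw = annual_cellular_data_cost(devices=1_000, mb_per_device_per_day=1.0, usd_per_mb=0.40)
filtered = after_edge_filtering(raw, reduction_fraction=0.70)  # assumed, for illustration

print(f"Cloud-centric: ${raw:,.0f}/year")
print(f"With 70% of traffic filtered at the edge: ${filtered:,.0f}/year")
```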

Enterprises with cloud engineering experience increasingly adopt a hybrid model: lightweight event filtering on-device, edge aggregation at the gateway, and full analytics in the cloud. This three-tier approach generally yields the best balance of latency, cost, and operational flexibility for industrial deployments.

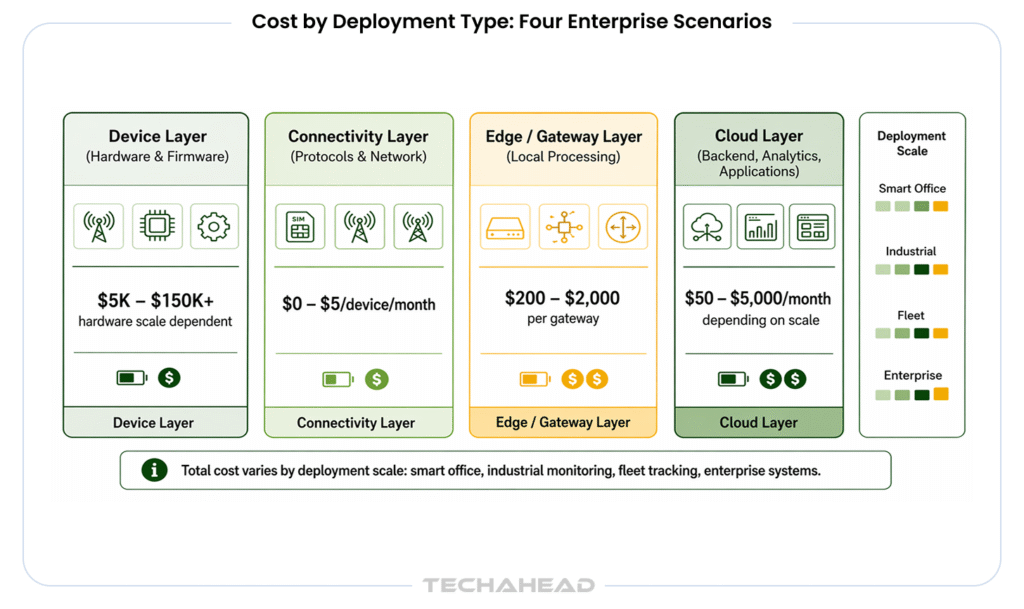

Cost by Deployment Type: Four Enterprise Scenarios

The ranges below assume a US-based development team with standard enterprise quality requirements (SOC 2-aligned data handling, device authentication, OTA infrastructure). Costs do not include ongoing operations (modeled separately below).

| Deployment Type | Device Count | Connectivity | Hardware Budget | Software + Cloud | Security + Cert | Total Project Range |
| --- | --- | --- | --- | --- | --- | --- |
| Smart office (occupancy, HVAC, access) | ~50 devices | BLE / Zigbee / WiFi | $5,000–$25,000 | $45,000–$80,000 | $8,000–$15,000 | $58,000–$120,000 |
| Industrial monitoring (vibration, temperature, pressure) | ~500 devices | LoRaWAN / NB-IoT + edge gateway | $60,000–$150,000 | $120,000–$200,000 | $25,000–$50,000 | $205,000–$400,000 |
| Fleet tracking (vehicles, heavy equipment) | ~5,000 assets | LTE-M / 5G | $250,000–$750,000 | $150,000–$300,000 | $40,000–$80,000 | $440,000–$1,130,000 |
| Enterprise smart building (multi-site, multi-system) | 1,000–10,000+ devices | Multi-protocol hybrid | $200,000–$1,000,000+ | $250,000–$500,000 | $50,000–$100,000 | $500,000–$1,600,000+ |
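Each total in the table is simply the sum of its hardware, software and cloud, and security columns. A small sketch like the one below can be used to rebuild a total after swapping in your own component estimates. The dictionary layout and function name are hypothetical, and the values are copied from the table.

```python
# (low, high) ranges in USD for each budget component, per scenario.
scenarios = {
    "Smart office": {
        "hardware": (5_000, 25_000),
        "software_cloud": (45_000, 80_000),
        "security_cert": (8_000, 15_000),
    },
    "Industrial monitoring": {
        "hardware": (60_000, 150_000),
        "software_cloud": (120_000, 200_000),
        "security_cert": (25_000, 50_000),
    },
    "Fleet tracking": {
        "hardware": (250_000, 750_000),
        "software_cloud": (150_000, 300_000),
        "security_cert": (40_000, 80_000),
    },
    "Enterprise smart building": {
        "hardware": (200_000, 1_000_000),
        "software_cloud": (250_000, 500_000),
        "security_cert": (50_000, 100_000),
    },
}


def total_range(components):
    """Sum the low ends and the high ends of every component range."""
    low = sum(lo for lo, _ in components.values())
    high = sum(hi for _, hi in components.values())
    return low, high


for name, components in scenarios.items():
    low, high = total_range(components)
    print(f"{name}: ${low:,} to ${high:,}")
```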

These ranges align with TechAhead’s observed deal sizes: MVP and pilot deployments run $50,000-$100,000, mid-market programs $100,000-$250,000, and full enterprise platforms $250,000-$500,000 or more depending on device count and integration complexity.

Cloud Backend Cost: AWS IoT Core vs. Azure IoT Hub vs. Google Cloud IoT

The cloud backend is where IoT platforms diverge most sharply, not just in features but in pricing model, which affects TCO dramatically depending on your traffic pattern.

Platform Comparison

AWS IoT Core uses a granular pay-as-you-go model: connectivity ($0.08/million minutes of connection), messaging ($1.00/million messages at the base tier), Device Shadow operations ($1.25/million operations), and Rules Engine actions ($0.15/million). For a 1,000-device deployment where each device reports 100 messages per day, expect $50-$100/month in base cloud costs. AWS’s model is cost-efficient for bursty or low-duty-cycle fleets (asset trackers reporting twice daily), but a firmware bug that triggers a retry loop can generate bill shock in hours. AWS Budgets and IoT Device Defender throttling rules are mandatory, not optional.

Azure IoT Hub uses tiered unit pricing: Basic B1 starts at $10/unit/month for 400,000 messages/day; Standard S1 is $25/unit/month with the same quota but adds device twins, cloud-to-device commands, and IoT Edge support. For predictable, high-frequency industrial telemetry (vibration sensors reporting at 1 Hz continuously), Azure’s capacity model can yield a lower per-message cost than AWS once a unit is fully saturated, but stepping from one unit to the next for a 0.25% traffic increase effectively doubles cost.
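The step effect described above comes from buying capacity in whole units. The following sketch models it using the 400,000 messages/day quota and the per-unit prices quoted in this section; the function name is hypothetical, and the model ignores message-size metering and any other platform limits.

```python
import math

MESSAGES_PER_UNIT_PER_DAY = 400_000  # quota per unit, as quoted above


def iot_hub_monthly_cost(messages_per_day, price_per_unit):
    """Return (units needed, monthly cost in USD) for a given daily message volume."""
    units = max(1, math.ceil(messages_per_day / MESSAGES_PER_UNIT_PER_DAY))
    return units, units * price_per_unit


at_capacity = iot_hub_monthly_cost(400_000, price_per_unit=25)   # exactly one S1 unit
just_over = iot_hub_monthly_cost(401_000, price_per_unit=25)     # 0.25% more traffic

print("At capacity:", at_capacity)
print("0.25% over:", just_over)
```

In this simplified model a fleet sitting just under a unit boundary gets the best per-message price, and one just over it pays for a second unit that is almost entirely idle.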

Google Cloud discontinued its dedicated IoT Core service in 2023. Workloads now run on Cloud Pub/Sub, BigQuery, and partner IoT solutions. A 1,000-device deployment with moderate data volume runs $50-$200/month but requires a more custom integration architecture and carries higher implementation effort than either AWS or Azure. Google Cloud is strongest for data-analytics-heavy IoT applications where BigQuery’s capabilities justify the architectural overhead.

Selection guidance: AWS for variable traffic and consumer device fleets; Azure for industrial high-throughput deployments, Azure Digital Twins use cases, and organizations already on the Microsoft stack; Google Cloud for analytics-first workloads where sensor data feeds directly into ML pipelines.

At 100,000 devices reporting at a moderate rate, cloud infrastructure costs run $1,500-$5,000/month across all three platforms, depending on message size and feature usage.

Security Cost Adder: Why 15-25% Is Not Optional

Security is the line item most commonly value-engineered out of early budgets and most commonly reinstated after the first security review. For enterprise IoT, the security cost adder runs 15-25% of the total development budget and covers four non-negotiable components:

Device authentication (X.509 certificates): Each device requires a unique cryptographic identity. Provisioning X.509 certificates at scale requires a PKI infrastructure (AWS Certificate Manager Private CA starts at $400/month per CA, plus $0.005 per certificate), integration with the device provisioning workflow, and secure key injection during manufacturing. For a 5,000-device fleet, certificate infrastructure adds $15,000-$30,000 in setup and $5,000-$10,000/year in ongoing management.

OTA firmware update infrastructure: A production OTA system requires signed firmware packages (to prevent unauthorized updates), rollback capability (blue-green slot architecture so a failed update does not brick devices in the field), staged rollout controls, and device health monitoring. Purpose-built OTA platforms like Memfault or AWS IoT Jobs cost $0.01-$0.10/device/month at scale; building a custom OTA pipeline adds $30,000-$80,000 to development cost. Without OTA, every firmware vulnerability requires a physical truck roll at $150-$500 per device visit; this becomes untenable for any fleet above a few hundred devices.

End-to-end encryption: TLS 1.3 or DTLS for transport, with application-layer encryption for sensitive payloads (health data, location data, financial telemetry). Implementation effort is $8,000-$20,000 depending on protocol constraints; some constrained MCUs require hardware security modules or cryptographic accelerators that add $5-$15/unit to BOM cost.

Compliance and audit readiness: For deployments in regulated industries or with enterprise clients who carry SOC 2 Type II or ISO 27001 requirements (as TechAhead does), security documentation, threat modeling, and penetration testing add $15,000-$40,000 to an enterprise engagement.

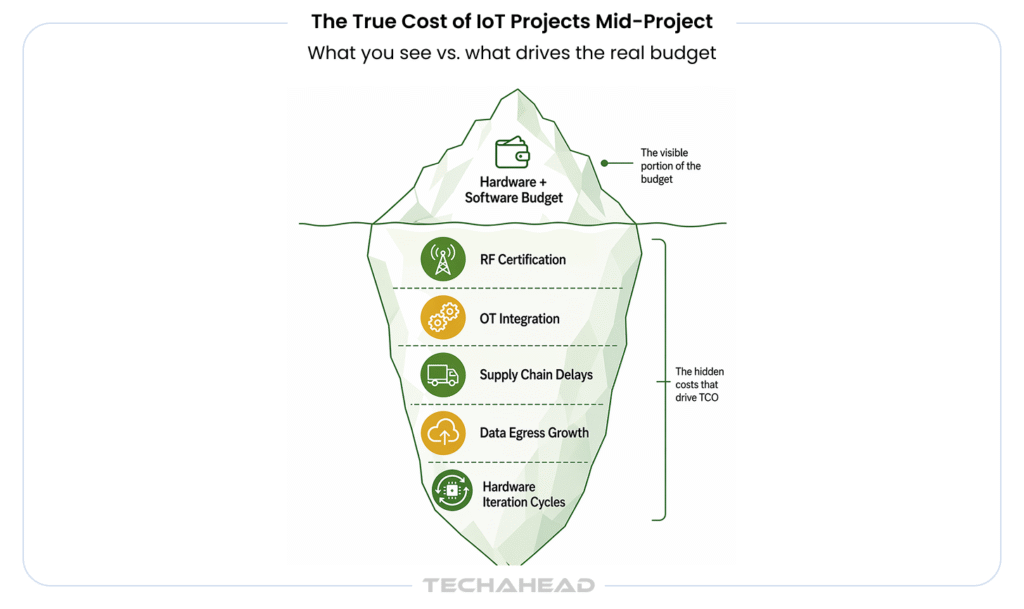

Hidden Costs: What Blows Up IoT Budgets Mid-Project

The following costs are real, predictable, and routinely underestimated in initial scoping:

RF certification (FCC and CE): Any IoT device with a radio transmitter sold in the US requires FCC authorization. Using a pre-certified radio module and qualifying under the Supplier’s Declaration of Conformity (SDoC) route limits lab costs to $3,000-$8,000. Designing a custom radio (intentional radiator, Subpart C) requires testing in an FCC-recognized lab and can cost $15,000-$40,000 or more. CE marking for EU markets adds another $5,000-$20,000 and 4-8 weeks of testing time. Combined, RF certification for a device targeting both markets runs $10,000-$50,000, plus 6-12 weeks of calendar time. Missing this in a schedule means missing a product launch date.

Supply chain delays and component substitutions: Lead times for industrial MCUs ranged from 20-50 weeks through 2023 and remain elevated for some families in 2026. A hardware design locked around a single MCU variant that becomes allocated can require a board redesign, adding one full hardware iteration cycle ($15,000-$40,000) and weeks of delay.

Integration with legacy OT systems: Industrial deployments frequently encounter equipment running Modbus, OPC-UA, or proprietary protocols with no native IP connectivity. Bridging this OT-to-IT gap requires protocol translation at the gateway layer and custom driver development, which adds $20,000-$60,000 and is almost never scoped in early estimates.

Data egress and storage growth: IoT deployments routinely generate far more data than estimated. A 500-device industrial deployment generating 1 KB/message at 1-minute intervals produces 720 MB/day, or roughly 260 GB/year. At standard cloud storage rates ($0.023/GB/month on S3), holding that first year of data costs only about $6/month, which looks modest at first, but time-series data needs indexing, replication, and retention policy management that pushes real costs 5-10x higher in production.
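To sanity-check storage estimates for your own fleet, the volume and raw storage arithmetic can be sketched as follows. The function names are hypothetical, decimal units (1 GB = 1,000 MB) are assumed, and the overhead multiplier stands in for the indexing, replication, and retention costs mentioned above rather than any published price.

```python
def daily_volume_mb(devices, kb_per_message, messages_per_device_per_day):
    """Raw telemetry volume per day in MB (decimal units)."""
    return devices * kb_per_message * messages_per_device_per_day / 1_000


def monthly_storage_cost(stored_gb, usd_per_gb_month=0.023, overhead_multiplier=1.0):
    """Monthly cost in USD of holding stored_gb, scaled by an assumed overhead factor."""
    return stored_gb * usd_per_gb_month * overhead_multiplier


daily_mb = daily_volume_mb(devices=500, kb_per_message=1, messages_per_device_per_day=24 * 60)
yearly_gb = daily_mb * 365 / 1_000

raw_monthly = monthly_storage_cost(yearly_gb)
with_overhead = monthly_storage_cost(yearly_gb, overhead_multiplier=10)

print(f"{daily_mb:.0f} MB/day, {yearly_gb:.1f} GB after one year")
print(f"Raw object storage for that year of data: ${raw_monthly:.2f}/month")
print(f"With a 10x production overhead: ${with_overhead:.2f}/month")
```

Because stored data accumulates, the monthly figure keeps growing every year unless a retention policy deletes or downsamples older records.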

In industrial IoT projects, legacy OT compatibility can introduce unplanned integration costs when protocol translation, gateway configuration, or custom driver development is required. To avoid budget overruns, these dependencies should be scoped early during planning and estimation.

Ongoing Operational Cost Model: What You Pay After Go-Live

IoT projects do not end at launch. Unlike traditional software where operational cost is primarily infrastructure, IoT has a physical dimension where devices in the field require firmware updates, connectivity management, and eventual hardware refresh.

Annual maintenance for IoT software development (excluding hardware) runs 15-25% of the initial development cost. For a $200,000 development project, budget $30,000-$50,000/year for bug fixes, platform updates, and security patches.

Telemetry storage scales with device count and message frequency. A 1,000-device fleet at 1 message/minute generates roughly 525 million messages/year. At AWS IoT Core rates, that is approximately $525/year in messaging fees plus $200-$500/month in time-series storage (using AWS Timestream or equivalent).
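The message count and messaging fee above can be reproduced with the short sketch below. It assumes every payload is billed as a single message at the $1.00 per million base rate quoted earlier; the function names are hypothetical.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600


def annual_messages(devices, messages_per_minute=1):
    """Total messages the fleet publishes in one year."""
    return devices * messages_per_minute * MINUTES_PER_YEAR


def messaging_fee(messages, usd_per_million=1.00):
    """Messaging charge in USD at a flat per-million rate."""
    return messages / 1_000_000 * usd_per_million


messages = annual_messages(devices=1_000)
fee = messaging_fee(messages)

print(f"{messages:,} messages/year, about ${fee:,.2f} in messaging fees")
```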

Firmware OTA at scale: For a 5,000-device fleet, a single firmware release requires a careful staged rollout (1% of devices first, then 10%, then the full fleet) with automated health checks at each stage. If using a managed OTA platform, expect $500-$2,500/month at this fleet size. If operating your own OTA infrastructure on AWS IoT Jobs or Azure IoT Hub, compute and storage for the update campaign is included in the base platform cost but requires dedicated DevOps time to manage releases.
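A staged rollout of the kind described above can be sketched in a few lines. This is a simplified illustration, not the API of any OTA platform: `push_update` and `is_healthy` are hypothetical callbacks you would supply, and the 2% failure threshold is an assumed value.

```python
def plan_stages(device_ids, cumulative_percent=(1, 10, 100)):
    """Split the fleet into cohorts so each stage reaches the next cumulative percentage."""
    cohorts, start = [], 0
    total = len(device_ids)
    for percent in cumulative_percent:
        end = max(start, (total * percent + 99) // 100)  # integer ceiling division
        cohorts.append(device_ids[start:end])
        start = end
    return cohorts


def run_rollout(device_ids, push_update, is_healthy, max_failure_rate=0.02):
    """Update cohort by cohort; halt if a cohort's failure rate exceeds the threshold."""
    updated = 0
    for cohort in plan_stages(device_ids):
        for device_id in cohort:
            push_update(device_id)
        updated += len(cohort)
        failures = sum(1 for device_id in cohort if not is_healthy(device_id))
        if cohort and failures / len(cohort) > max_failure_rate:
            return {"status": "halted", "updated": updated}
    return {"status": "complete", "updated": updated}


fleet = list(range(5_000))
print([len(cohort) for cohort in plan_stages(fleet)])
print(run_rollout(fleet, push_update=lambda d: None, is_healthy=lambda d: True))
```

Halting after the first cohort limits a bad release to 1% of the fleet, which is the practical reason the stages start small. A production system would also wait for a soak period before each health check and trigger rollback on the affected devices.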

Device management and monitoring: Platforms like AWS IoT Device Defender or Azure Defender for IoT provide fleet-wide anomaly detection and certificate lifecycle management. Costs run $0.10-$0.30/device/month; for 5,000 devices, that is $6,000-$18,000/year, which is a justified line item given the cost of a single undetected compromise in an industrial environment.

Hardware refresh: Enterprise IoT devices deployed in industrial environments carry a 7-10 year hardware lifecycle expectation. Budget for a 10-15% annual device attrition rate (damage, failure, obsolescence) and factor in the cost of provisioning replacement units with current firmware and certificates.

Questions That Reveal Real IoT Engineering Depth in a Vendor

When evaluating IoT app development companies or interviewing potential enterprise AI partners, the quality of the questions a vendor asks during scoping reveals whether they understand the full cost stack or only the software layer. A vendor working from genuine IoT experience will ask:

- “What is the target battery life for field devices, and have you modeled duty cycle against your intended message frequency?” This question surfaces whether the hardware architecture (MCU selection, radio protocol, power management firmware) can physically achieve the operating requirement. A team that never asks this will discover the problem in prototype testing, after hardware has been ordered.

- “Which FCC/CE authorization route applies to your radio design (pre-certified module or custom radio), and is that timeline already in the project schedule?” Most software vendors do not know the difference between SDoC and FCC Subpart C certification routes. An IoT specialist does, because certification timelines directly gate product launch dates.

- “What is your tolerated data loss window if cellular connectivity drops (seconds, minutes, or hours), and should edge devices buffer to local storage?” This question determines whether the architecture requires local store-and-forward capability, which adds firmware complexity and storage hardware to the BOM.

- “Do you have existing OT equipment on the floor with Modbus or OPC-UA interfaces, and have you confirmed those protocols are accessible without PLC vendor licensing fees?” This is the question that prevents the OT integration cost surprise described in the hidden costs section.
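The data loss question in the list above maps onto a concrete design element: a bounded store-and-forward buffer sized to the tolerated outage window. The sketch below shows the idea in Python for readability; real device firmware would typically implement it in C against flash storage. The class, its methods, and the `send` callback are all hypothetical.

```python
from collections import deque


def required_capacity(outage_seconds, message_interval_seconds):
    """Number of readings to hold so nothing is lost during the tolerated outage."""
    return -(-outage_seconds // message_interval_seconds)  # integer ceiling division


class StoreAndForwardBuffer:
    """Bounded FIFO that keeps the newest readings while the uplink is down."""

    def __init__(self, capacity):
        self._queue = deque(maxlen=capacity)  # oldest entry is discarded when full
        self.dropped = 0

    def record(self, reading):
        if len(self._queue) == self._queue.maxlen:
            self.dropped += 1  # count the reading about to be overwritten
        self._queue.append(reading)

    def flush(self, send):
        """Send buffered readings oldest-first; stop at the first failed send."""
        sent = 0
        while self._queue:
            if not send(self._queue[0]):
                break
            self._queue.popleft()
            sent += 1
        return sent


# One reading per minute with a one-hour tolerated outage needs 60 slots.
buffer = StoreAndForwardBuffer(required_capacity(3_600, 60))
```

The capacity figure feeds straight into the BOM: it determines how much local storage each device needs, which is why the question belongs in scoping rather than in prototype testing.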

In IoT scoping engagements, TechAhead often asks: “How will devices be securely provisioned and authenticated at scale—hundreds vs. tens of thousands—and what is the rollback strategy if provisioning fails?” This question surfaces hidden complexity in device lifecycle management, where provisioning, certificate handling, and OTA recovery workflows can significantly impact both architecture design and long-term operational cost.

For industrial logistics technology buyers, the ability to answer these questions before a proposal is written, not during development, is the clearest signal that a partner has built real IoT systems at scale.

Why TechAhead Clients Get Accurate IoT Cost Estimates

TechAhead has been building connected systems since 2009, with 240+ engineers across hardware integration, firmware, cloud backend, and mobile application disciplines. The firm holds SOC 2 Type II, ISO 27001, and ISO 42001 certifications and operates as an AWS Advanced Tier Partner and Microsoft Gold Certified Partner, certifications that matter when an IoT platform must live inside a client’s existing cloud infrastructure with enterprise security requirements.

Enterprise clients including Disney, American Express, Audi, AXA, JLL, and ESPN F1 have engaged TechAhead for projects spanning connected vehicle platforms, building automation systems, and real-time tracking infrastructure. The cost modeling framework in this article reflects the actual layers we scope in every IoT engagement, not a marketing approximation.

In a connected fitness IoT engagement, TechAhead developed a platform for a Shark Tank-backed brand that enabled users to remotely control cold plunge and sauna systems through a mobile application. The solution integrated IoT firmware, mobile interfaces, and cloud connectivity to deliver real-time device control and a seamless user experience. This highlights how tightly integrated device and application layers are essential for delivering reliable IoT-enabled consumer products.

IoT App Development Cost in 2026: Updated Figures, Real Breakdowns

Most IoT cost guides publish ranges so wide they’re useless — “$10,000 to $500,000” tells you nothing. What actually determines your cost is complexity tier, team location, device count, integration depth, and the ongoing operational overhead that most budgets completely ignore. Here’s every number you need, updated for 2026.

Complexity-Tier Cost Breakdown

This is where budgeting starts. Before region, before team size, before platform — your project’s complexity tier sets the cost floor.

| Tier | What It Includes | Devices | Timeline | Cost Range |
| --- | --- | --- | --- | --- |
| Proof of Concept / Pilot | Single use case, 1 device type, basic cloud connectivity, simple dashboard, no enterprise integrations | 10–50 | 6–12 weeks | $15,000–$40,000 |
| Simple IoT App | Basic device management, single platform (AWS or Azure), mobile companion app, push notifications, standard auth | 50–500 | 2–4 months | $40,000–$90,000 |
| Medium Complexity | Multi-device type support, REST API integrations, real-time dashboard, role-based access, OTA updates, 1–2 enterprise integrations | 500–5,000 | 4–7 months | $90,000–$200,000 |
| Complex / AI-Enabled | Predictive analytics, ML anomaly detection, digital twin integration, multi-platform cloud, edge computing layer, advanced security | 5,000–50,000 | 7–12 months | $200,000–$500,000 |
| Enterprise Platform | Multi-tenant architecture, full compliance controls (HIPAA/PCI-DSS/CRA), custom firmware, global device fleet management, agentic AI workflows | 50,000+ | 12–24 months | $500,000–$2,000,000+ |

What Pushes Projects to the Top of Each Range

Every tier has a floor and a ceiling. Here’s what consistently drives costs toward the higher end:

- Custom hardware integration — proprietary protocols or non-standard device SDKs add 20–35% to development time

- Regulatory compliance — HIPAA, PCI-DSS, EU Cyber Resilience Act, or FDA requirements add 15–25% across all tiers

- Offline-first architecture — building for intermittent connectivity with local processing and sync logic adds significant backend complexity

- Multi-platform deployment — iOS, Android, and web dashboard simultaneously adds 40–60% over single-platform

- AI/ML feature layer — predictive maintenance models, anomaly detection, and computer vision add $30,000–$120,000 depending on model complexity

- Legacy system integration — connecting to older ERP, SCADA, or CMMS systems that weren’t built for APIs is slow, expensive engineering

Regional Developer Rate Comparison: 2026

Hourly rate is the most visible cost lever — and the most misunderstood. Low rates with high rework cycles cost more than mid-range rates with clean delivery. That said, the rate differences are real and worth understanding.

| Region | Embedded / Firmware Engineer | IoT Backend Engineer | Mobile App Engineer | IoT Architect / Tech Lead | Blended Team Rate |
| --- | --- | --- | --- | --- | --- |
| USA / Canada | $120–$200/hr | $130–$210/hr | $120–$200/hr | $180–$280/hr | $140–$220/hr |
| Western Europe (UK, Germany, Netherlands) | $80–$130/hr | $90–$140/hr | $80–$130/hr | $120–$180/hr | $90–$140/hr |
| Eastern Europe (Poland, Romania, Czech Republic) | $45–$75/hr | $50–$85/hr | $45–$75/hr | $70–$110/hr | $50–$80/hr |
| Latin America (Colombia, Mexico, Argentina) | $35–$65/hr | $40–$70/hr | $35–$65/hr | $60–$95/hr | $40–$70/hr |
| India / Southeast Asia | $20–$40/hr | $22–$45/hr | $20–$40/hr | $35–$65/hr | $22–$45/hr |
| Hybrid (US lead + offshore execution) | n/a | n/a | n/a | n/a | $65–$110/hr blended |

IoT Hardware Cost Ranges: 2026

Software gets most of the budget conversation. Hardware is the line item IoT projects most consistently underestimate.

| Hardware Component | Unit Cost Range | Notes |
| --- | --- | --- |
| Basic sensor (temperature, humidity, motion) | $5–$50/unit | Volume pricing available at 1,000+ units |
| GPS tracker / asset tracking device | $80–$250/unit | Cellular module cost adds $10–$30/unit |
| Industrial IoT sensor (vibration, pressure, flow) | $200–$1,500/unit | Ruggedized, industrial-grade |
| Smart lock / access control device | $150–$600/unit | Varies significantly by security certification |
| Edge gateway / computing device | $300–$3,000/unit | Processing power determines cost range |
| Smart wearable / health monitoring device | $100–$800/unit | Medical-grade certification adds 30–50% |
| Environmental monitor (air quality, CO2, particulates) | $150–$800/unit | Multi-sensor units at higher end |
| IoT development board (prototyping) | $10–$150/unit | Arduino, Raspberry Pi, ESP32 range |
| Custom PCB hardware development | $15,000–$80,000 | One-time NRE cost; volume drives unit cost down |

Hardware Procurement Rules

- Prototype phase: budget 3–5x the per-unit cost for development boards and early hardware

- Pilot phase (10–100 units): expect 40–60% premium over production pricing

- Production (1,000+ units): negotiate volume pricing — typical savings of 25–45% vs pilot pricing

- Certification costs: FCC, CE, UL, and industry-specific certifications add $15,000–$80,000 per device type — budget this separately

IoT Platform and Cloud Infrastructure Cost: 2026

The platform layer is where recurring costs compound fastest. Most enterprises underestimate Year 2 and Year 3 cloud costs by 40–60%.

| Platform / Service | Pricing Model | Cost Range | Notes |
| --- | --- | --- | --- |
| AWS IoT Core | Per message + per device minute | $1–$8/device/month at scale | Varies significantly by message frequency |
| Azure IoT Hub | Per message tier | $0–$25/month (free: 8,000 msg/day) to $2,500+/month | Tier pricing based on message volume |
| Google Cloud Pub/Sub | Per GB processed | $0.04/GB after free tier | Add Vertex AI costs for ML workloads |
| AWS Greengrass (edge) | Per device per month | $0.16/device/month | For edge computing deployments |
| Azure Digital Twins | Per operation + queries | $0.001–$0.01 per operation | Depends heavily on update frequency |
| IoT platform licensing (PTC ThingWorx, Siemens MindSphere) | Annual license | $50,000–$500,000/year | Enterprise industrial IoT platforms |
| MQTT broker (HiveMQ, Mosquitto managed) | Per connection/month | $500–$5,000/month | At 10,000+ device scale |
| Data storage (time-series: AWS Timestream) | Per GB written + queried | $0.50/GB written + $0.01/GB queried | Time-series data grows fast at scale |

Ongoing IoT Maintenance Cost: The Budget Nobody Plans For

This is the cost that kills IoT ROI projections. Development is a one-time expense. Maintenance is permanent — and it’s consistently underbudgeted by 50–70% in enterprise IoT projects.

Understanding where maintenance spend goes helps you budget accurately and prioritise the right areas.

| Maintenance Category | What It Covers | Annual Cost |
| --- | --- | --- |
| Cloud infrastructure | Platform fees, data storage, message ingestion, CDN, monitoring tools | $6,000–$60,000 |
| Security patches & updates | Firmware vulnerability patches, dependency updates, certificate rotation | $8,000–$30,000 |
| OS & platform compatibility | iOS/Android OS update testing and fixes, cloud API deprecation handling | $5,000–$20,000 |
| OTA firmware updates | Engineering, testing, and staged rollout of firmware updates to deployed fleet | $10,000–$40,000 |
| Bug fixes & performance | Production issue resolution, performance degradation investigation | $8,000–$25,000 |
| Compliance monitoring | Ongoing regulatory compliance verification, audit preparation | $5,000–$30,000 |
| Feature development | New capability based on user data and business roadmap evolution | $20,000–$100,000+ |
| Support & monitoring | 24/7 system monitoring, alerting, SLA-based incident response | $10,000–$50,000 |
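Adding up the table's ranges gives a sense of the total annual envelope. The snippet below is a simple sum of the figures above. It treats the "$100,000+" entry for feature development as exactly $100,000, which is an assumption, so the high end should be read as a floor rather than a cap.

```python
# Annual cost ranges in USD, copied from the maintenance table above.
MAINTENANCE_RANGES = {
    "Cloud infrastructure": (6_000, 60_000),
    "Security patches & updates": (8_000, 30_000),
    "OS & platform compatibility": (5_000, 20_000),
    "OTA firmware updates": (10_000, 40_000),
    "Bug fixes & performance": (8_000, 25_000),
    "Compliance monitoring": (5_000, 30_000),
    "Feature development": (20_000, 100_000),  # table says "$100,000+"
    "Support & monitoring": (10_000, 50_000),
}


def total_range(ranges):
    """Sum the low ends and the high ends of every category."""
    low = sum(lo for lo, _ in ranges.values())
    high = sum(hi for _, hi in ranges.values())
    return low, high


low, high = total_range(MAINTENANCE_RANGES)
print(f"Annual maintenance: ${low:,} to ${high:,}")
```

Removing "Feature development" from the dictionary gives the keep-the-lights-on figure, which is often the more useful number for an operations budget.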

The Three Maintenance Mistakes That Destroy IoT ROI

Mistake 1: Budgeting maintenance as a percentage of build cost without accounting for device count

A $200,000 build with 500 devices has very different maintenance requirements than a $200,000 build with 10,000 devices. Device count is a better predictor of maintenance cost than build cost.

Mistake 2: Not budgeting for regulatory changes

The EU Cyber Resilience Act’s September 2026 reporting requirements will require firmware updates and process changes for every IoT deployment selling into EU markets. Teams that did not budget for this are now funding unplanned engineering work.

Mistake 3: Treating OTA updates as optional

Every month that a security vulnerability sits unpatched in deployed firmware is a liability. OTA update infrastructure is not a nice-to-have; it’s the mechanism that keeps your device fleet secure after it ships. Budget for it as a fixed operational cost, not a project expense.

Get an Accurate IoT Development Estimate for Your Deployment

IoT budgets that blow up do so because the initial scope was built on software-only thinking. Hardware iteration cycles, RF certification, OT integration, and security infrastructure are not optional line items; they are the difference between a proof of concept and a production system your operations team can maintain for a decade.

TechAhead’s IoT scoping engagements start with a technical discovery phase ($8,000–$15,000) that produces a defensible hardware architecture, connectivity protocol recommendation, and full cost model before any development begins. For enterprise programs, this upfront investment routinely saves $50,000–$200,000 in mid-project rework.

Talk to TechAhead’s IoT team to get a scoped estimate for your industrial monitoring, fleet tracking, or smart building deployment. Tell us your device count, target environment, and existing infrastructure, and we will tell you what it actually costs.

Enterprise IoT development costs range from $58,000–$120,000 for a small smart office deployment (~50 devices) to $500,000–$1,600,000+ for a multi-site enterprise smart building platform with 1,000–10,000+ devices. Mid-market industrial monitoring programs (500 devices) run $205,000–$400,000. These figures include hardware, connectivity, software, cloud backend, and security — but exclude ongoing operational costs after launch.

The most underestimated IoT cost items are RF/FCC certification ($10,000–$50,000 per device type), integration with legacy OT systems running Modbus or OPC-UA ($20,000–$60,000), hardware iteration cycles ($15,000–$40,000 per round), and data egress and telemetry storage growth, which can run 5–10x the initial estimate in production. Low early estimates often omit these items entirely.

Basic firmware on commodity hardware costs $10,000–$30,000. When custom driver development, proprietary communication stack integration, or multi-protocol support is required, firmware development costs rise to $40,000–$100,000+. Firmware engineers in the US bill at $120–$180/hour at blended agency rates.

For a 1,000-sensor deployment, LoRaWAN on unlicensed spectrum carries near-zero 5-year connectivity cost. The same deployment on LTE-M costs $60,000–$300,000 in carrier fees over five years. However, LoRaWAN’s limited bandwidth (250 bps–11 kbps) makes it unsuitable for high-frequency or large-payload applications.
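The LTE-M range above can be reproduced with simple arithmetic. In the sketch below, the $1 to $5 per device per month carrier fee is back-calculated from the $60,000–$300,000 figure, not a quoted carrier price. The LoRaWAN fee is set to zero as an assumption that ignores gateway hardware and network server costs.

```python
def five_year_connectivity_cost(devices, monthly_fee_per_device, years=5):
    """Total recurring connectivity fees in whole dollars over the period."""
    return devices * monthly_fee_per_device * 12 * years


DEVICES = 1_000

# Assumed LTE-M carrier fees (USD per device per month), inferred from
# the five-year range quoted above rather than from a carrier price list.
lte_m_low = five_year_connectivity_cost(DEVICES, 1)
lte_m_high = five_year_connectivity_cost(DEVICES, 5)

# LoRaWAN on unlicensed spectrum: no per-device carrier fee is assumed here.
lorawan = five_year_connectivity_cost(DEVICES, 0)

print(f"LTE-M:   ${lte_m_low:,} to ${lte_m_high:,}")
print(f"LoRaWAN: ${lorawan:,} in carrier fees")
```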

Security adds 15–25% to the total IoT development budget. Key components include X.509 device authentication ($15,000–$30,000 setup for a 5,000-device fleet), OTA firmware update infrastructure ($30,000–$80,000 for a custom build), end-to-end encryption ($8,000–$20,000), and compliance/penetration testing ($15,000–$40,000 for regulated industries).

Annual IoT software maintenance (excluding hardware) runs 15–25% of the initial development cost. For a $200,000 project, budget $30,000–$50,000/year for bug fixes, security patches, and platform updates. Device management and monitoring tools (like AWS IoT Device Defender) add $0.10–$0.30/device/month on top of this.
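The sketch below combines the two components in the paragraph above: a percentage of build cost plus per-device monitoring fees. It shows why device count matters even when the build cost is the same. The function name and the 500 versus 10,000 device comparison are illustrative choices. The percentages and per-device fees are the ones quoted above.

```python
def annual_maintenance(build_cost, devices,
                       software_pct=(15, 25),
                       monitoring_cents_per_device_month=(10, 30)):
    """Return a (low, high) annual maintenance budget in whole dollars.

    software_pct: share of the initial build cost, in percent.
    monitoring_cents_per_device_month: device management tooling fee
    ($0.10-$0.30 per device per month), stored in cents.
    """
    budget = []
    for pct, cents in zip(software_pct, monitoring_cents_per_device_month):
        software = build_cost * pct // 100
        monitoring = devices * cents * 12 // 100
        budget.append(software + monitoring)
    return tuple(budget)


small_fleet = annual_maintenance(200_000, devices=500)
large_fleet = annual_maintenance(200_000, devices=10_000)
print(f"500 devices:    ${small_fleet[0]:,} to ${small_fleet[1]:,}")
print(f"10,000 devices: ${large_fleet[0]:,} to ${large_fleet[1]:,}")
```

The software share is identical for both fleets, so the whole difference comes from the per-device monitoring line.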

AWS IoT Core’s pay-as-you-go model is more cost-efficient for bursty or low-duty-cycle fleets (e.g., asset trackers reporting twice daily). Azure IoT Hub’s tiered unit pricing becomes more economical for high-frequency industrial telemetry where units are fully saturated. At 100,000 devices at moderate reporting rates, both platforms run $1,500–$5,000/month depending on message size and features.

An edge gateway architecture can reduce cloud data ingestion costs by 60–80% in telemetry-heavy applications. For example, a device sending 1 MB/day across 1,000 units at $0.40/MB on a cellular IoT plan costs $146,000/year in transmission alone — edge filtering dramatically cuts this. The tradeoff is gateway hardware CapEx ($200–$2,000/unit) and additional firmware maintenance.
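The transmission figure above can be checked with a few lines of arithmetic. The sketch below reproduces the $146,000 baseline and then applies the 60–80% reduction to it. Applying that percentage directly to cellular transmission cost is an assumption, since the article quotes it for cloud data ingestion. Gateway CapEx is left out because the number of gateways depends on the site layout.

```python
def annual_transmission_cost(devices, mb_per_device_per_day, cents_per_mb):
    """Yearly cellular data cost in whole dollars (365-day year)."""
    return devices * mb_per_device_per_day * cents_per_mb * 365 // 100


def cost_after_edge_filtering(baseline, reduction_pct):
    """Remaining yearly cost after filtering out reduction_pct of traffic."""
    return baseline * (100 - reduction_pct) // 100


# Figures from the paragraph above: 1,000 units, 1 MB/day each, $0.40/MB.
baseline = annual_transmission_cost(1_000, 1, 40)

# Assumed: the 60-80% ingestion reduction also applies to transmission.
best_case = cost_after_edge_filtering(baseline, 80)
worst_case = cost_after_edge_filtering(baseline, 60)

print(f"Baseline:            ${baseline:,}/year")
print(f"With edge filtering: ${best_case:,} to ${worst_case:,}/year")
```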

IoT MVP and pilot deployments run $50,000–$100,000. Mid-market programs cost $100,000–$250,000, and full enterprise platforms cost $250,000–$500,000 or more depending on device count and integration complexity. A technical discovery/scoping phase before development ($8,000–$15,000) can prevent $50,000–$200,000 in mid-project rework.