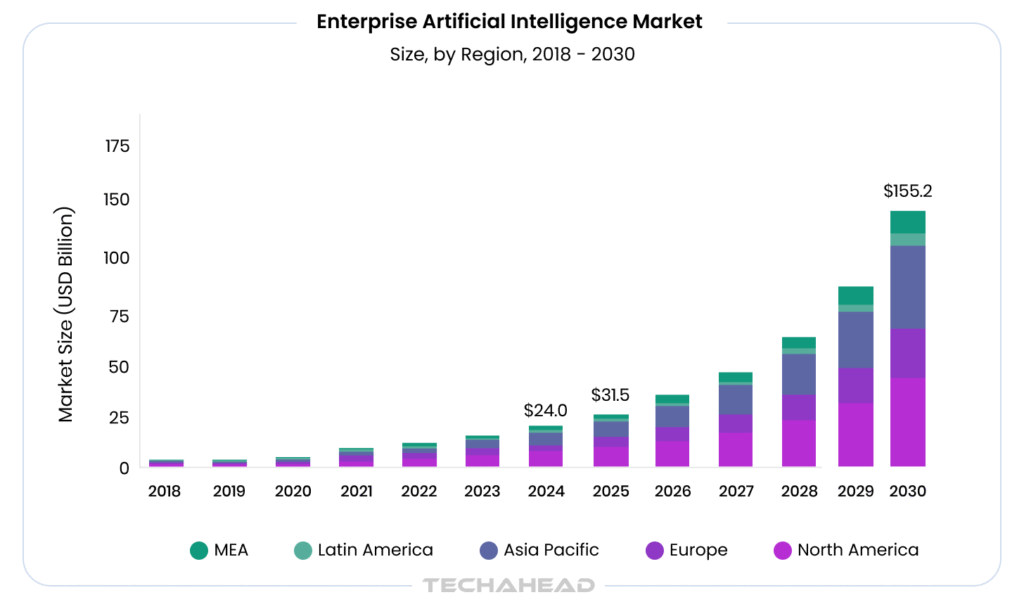

Every year, enterprises pour millions into AI pilots that never see the light of production. The global enterprise AI market was valued at USD 23.95 billion in 2024 and is projected to reach USD 155.21 billion by 2030, growing at a CAGR of 37.6% from 2025 to 2030. The money is moving. The ambition is there. Yet 60% of AI pilots quietly die before they ever scale.

For enterprise owners, two concerns dominate every AI conversation: security and cost. How do you protect sensitive business data inside an AI system built on third-party infrastructure? And how do you justify a seven-figure investment when the odds of scaling are historically stacked against you?

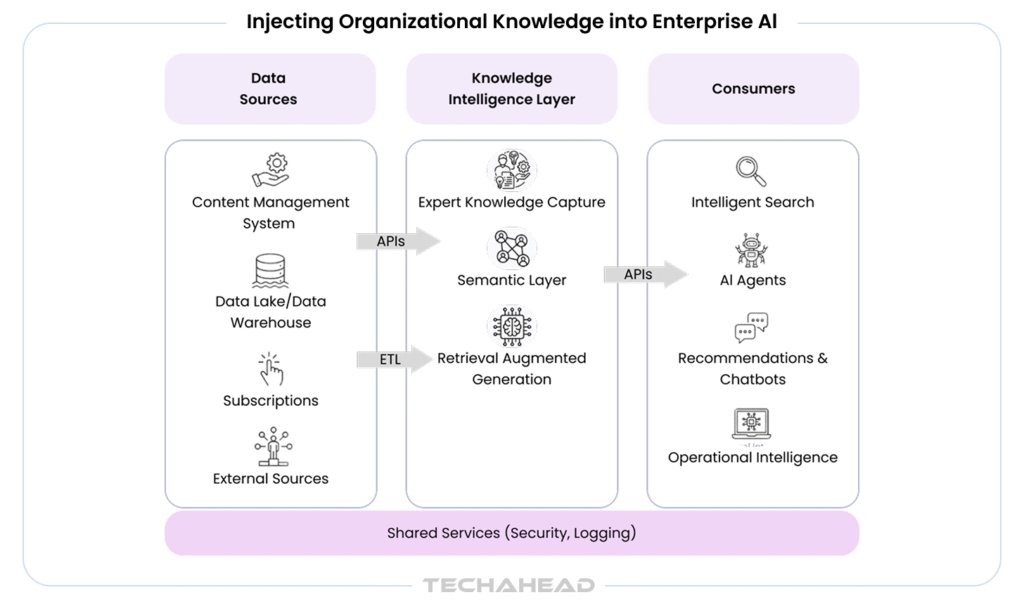

Beneath these concerns lie two root causes most enterprises never address upfront: a data maturity gap that leaves AI models starved of clean, reliable data, and AI infrastructure that was never built to handle real production demands.

How you address them determines whether your AI initiative becomes a competitive advantage or an expensive lesson.

In this post, we explore the reasons enterprise AI pilots fail to scale and the steps your organization needs to take to be in the 40% that actually succeed.

Key Takeaways

- Poor strategy, not poor technology, is behind most AI pilot failures.

- Assign a dollar value to the problem before building anything with AI.

- Bad data does not just slow pilots down; it kills them entirely.

- Sandbox environments behave nothing like real production systems in practice.

- Moving AI to production costs 5-10x more than building the pilot itself.

What the 60% Failure Rate Really Means for Your Enterprise

This failure rate in enterprise AI pilots is not just an industry statistic; it is a warning sign that most organizations are spending significant budget, time, and resources on AI initiatives that will most likely never move beyond the testing phase.

So what does it actually mean?

- Companies spend an average of $500K-$5M on AI pilots that never reach production

- While your pilot stalls, competitors who scale AI successfully pull further ahead

- Repeated failed pilots create internal resistance, which makes future AI adoption even harder

- Failed pilots make leadership hesitant to approve future AI budgets

The real danger is not the failed pilot itself but the hesitation and resistance it leaves behind. And in most cases, the problem is not the technology. It is the strategy behind it.

The Hidden Reasons Enterprise AI Pilots Get Stuck in “Pilot Purgatory”

Most enterprise AI pilots quietly stall; they sit in an endless loop of reviews, revisions, and “we need more data” conversations until the budget runs dry.

No Clear Definition of Success

Most pilots launch without defining what “ready to scale” actually looks like. Without measurable success criteria set from day one, there is no moment where leadership confidently says “this works, let us move forward”.

Disconnected from Business Outcomes

When AI pilots are owned entirely by IT or data science teams, they often optimize for technical performance. For example, a model that is 94% accurate means nothing if it does not reduce costs, increase revenue, or solve a real operational problem.

Lack of Executive Sponsorship

Pilots without a business sponsor lose momentum fast. When budget cycles change or leadership priorities shift, unsupported pilots are the first initiatives to get ‘deprioritized’ or cancelled entirely.

Infrastructure was Never Built to Scale

Most enterprise-level pilots are built in isolated environments that cannot connect to live systems, real user workflows, or production-grade infrastructure. As a result, the jump from pilot to deployment is far more expensive than anticipated.

Mistake #1: Solving the Wrong Problem with AI

Enterprises rush into AI pilots excited by the ‘latest innovation’, not by real business challenges. This is the first place things go wrong.

Chasing Trends Instead of Business Pain Points

Gartner reports show that 63% of AI projects are initiated because competitors are doing it (AI FOMO), and that 80% of CEOs believe AI is a top priority but only 47% have a clear business case. That is an expensive way to follow a trend.

What Does This Look Like in Practice?

Teams build AI tools for processes that a simple automation or an Excel macro could fix in a week. The pilot runs for months. It solves nothing meaningful.

The Cost of Misaligned AI Investments

McKinsey found that companies misaligning AI with core business problems waste up to 40% of their AI budget in the first year alone. Forty percent. Gone.

How to Fix It Before You Start

- Map your top 5 operational bottlenecks first

- Ask: does this problem genuinely need AI, or just better process design?

- Validate the problem with frontline employees, not just leadership

So, in short, pick the right problem. Everything else follows.

Mistake #2: Treating AI as a Technology Project, Not a Business Initiative

According to McKinsey, only 15-20% of “High Performers” are seeing meaningful business impact from AI, largely because most initiatives never leave the technical team’s hands. Here is what that means in practice.

Who Owns the AI Pilot Matters: IT-Led vs. Business-Led Initiatives

IT teams measure success in system uptime and model accuracy. Business leaders measure it in revenue and customer outcomes. These are two completely different scorecards. When the two are not aligned from the start, the pilot solves the wrong problem.

The Cost of Misalignment: When Strategy and Technology Do Not Speak the Same Language

Gartner found that 80% of AI projects stall due to misalignment between technical teams and business stakeholders. One department builds. Nobody adopts. The budget disappears. The pilot becomes a case study in what not to do and nothing more.

Mistake #3: Ignoring Data Readiness Before Deployment

Bad data does not just slow AI down; it breaks it completely. According to Gartner, poor data quality costs organizations an average of $12.9 million per year. Yet most enterprises jump straight into AI deployment without ever auditing what is underneath.

Why Data Quality Determines AI Success

AI models are only as good as what you feed them. Inconsistent formats, duplicate records, and missing values do not just reduce accuracy; they produce decisions that actively damage operations.

The Three Data Problems Enterprises Ignore

- Data Silos: 80% of enterprise data sits trapped across disconnected systems, according to Forrester, which leads to “Context Collapse” when AI tries to scale.

- Outdated Records: Stale data trains AI on yesterday’s reality, not today’s.

- No Data Governance: Without ownership and standards, data quality degrades faster than any AI team can fix it.

So, in short, fix your data foundation first. Everything else comes after.

Mistake #4: Underestimating the Change Management Challenge

AI does not fail in the algorithm. It fails in the conference room.

According to McKinsey, 70% of change programs fail due to employee resistance and a lack of management support. Yet most enterprises pour millions into AI technology while allocating almost nothing toward preparing their people for it.

Employees fear job displacement. Middle managers resist tools that challenge their authority. These are real, human reactions. A Prosci study found that projects with strong change management are six times more likely to meet their objectives.

Mistake #5: No Clear Path from Pilot to Production

Most AI pilots live in sandboxed environments, run on cleaned sample data, and are demonstrated in controlled conditions. Then comes the real world, and everything breaks.

The Pilot-to-Production Gap is Wider Than You Think

Gartner reports that only 53% of AI projects make it from prototype to production. That is not a technology problem. It is a planning problem.

No Defined Handoff Process

Who owns the AI system after the pilot ends? In most enterprises, nobody does. The data science team built it. IT was never looped in. Operations has no idea it exists.

Infrastructure was an Afterthought

Pilots run on isolated environments that were never designed to connect with live data pipelines, existing enterprise systems, or real user workflows.

Scaling Costs are Always Underestimated

IBM found that moving an AI model to production costs 5-10x more than building the pilot itself. Security reviews, compliance checks, API integrations, and retraining pipelines all add up fast.

No Rollout or Change Management Plan

Production deployment means real employees, real workflows, real resistance. Without a structured rollout plan, even a technically solid AI system will fail in the hands of unprepared users.

Why Most Enterprise AI Pilots Run Out of Budget Before Proving ROI

Budget death is slow. It does not happen overnight; it happens across dozens of small decisions that nobody tracks until the money is gone.

McKinsey found that 44% of companies report difficulty quantifying AI’s business value because no clear ROI framework was set upfront.

Most pilots underestimate three cost areas: data preparation, talent, and iteration cycles. These alone can consume 60-70% of the total pilot budget before a single result is produced.

Leadership then pulls funding. Not because AI failed, but because nobody defined what success looked like in dollar terms from the start.

How to Build an AI Pilot That is Designed to Scale from Day One

Most enterprises skip the planning that actually makes AI scale. They jump straight into building. Follow this process before writing a single line of code:

Step 1: Define the Business Problem First

Do not start with technology. Start with the problem. It sounds obvious, but most pilots fail here. Keep these points in mind:

- Identify one specific operational pain point with a measurable business impact

- Assign a dollar value to the problem. Deloitte found that AI pilots tied to clear financial outcomes are 2x more likely to reach production

- Avoid broad goals like “improve efficiency.” Instead, target something specific: reduce invoice processing time by 40% or cut customer churn by 15%.
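As a rough illustration of assigning a dollar value to a problem, the sketch below estimates the annual labor cost of a manual process and the value of the 40% time reduction used as an example above. The invoice volume, handling time, and hourly cost are hypothetical placeholders, not benchmarks, and the calculation assumes labor cost falls in proportion to processing time.

```python
def annual_process_cost(items_per_year: int, minutes_per_item: float, hourly_cost: float) -> float:
    """Labor cost of handling every item manually for one year."""
    hours = items_per_year * minutes_per_item / 60
    return hours * hourly_cost


def projected_savings(current_cost: float, time_reduction: float) -> float:
    """Savings if processing time (and, by assumption, labor cost) falls by `time_reduction`."""
    if not 0 <= time_reduction <= 1:
        raise ValueError("time_reduction must be between 0 and 1")
    return current_cost * time_reduction


# Hypothetical inputs: 120,000 invoices a year, 12 minutes each, $45 per hour fully loaded.
baseline = annual_process_cost(120_000, 12, 45.0)
savings = projected_savings(baseline, 0.40)

print(f"Annual cost of the problem: ${baseline:,.0f}")
print(f"Value of a 40% time reduction: ${savings:,.0f}")
```

Even a crude estimate like this gives leadership a number to weigh the pilot budget against.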

Step 2: Set Measurable Success Criteria

If your team cannot answer “how will we know this worked?” in one sentence, stop and go back.

- Define 3-5 KPIs that connect directly to business outcomes, not just technical performance metrics

- A model with 95% accuracy that does not reduce operational costs is not a success

- Set a minimum performance threshold required to proceed to production, and document it formally

- According to McKinsey, companies that define ROI benchmarks before piloting are 1.8x more likely to scale their AI initiatives successfully.
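One way to document a minimum threshold formally is to write it down as a small go/no-go check. The sketch below is only an illustration: the KPI names, targets, and pilot results are hypothetical, and a real gate would likely involve more than a pass/fail comparison.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Kpi:
    name: str
    target: float                  # threshold agreed before the pilot starts
    higher_is_better: bool = True  # False for cost-style metrics

    def met(self, observed: float) -> bool:
        return observed >= self.target if self.higher_is_better else observed <= self.target


def ready_to_scale(kpis: list[Kpi], observed: dict[str, float]) -> tuple[bool, list[str]]:
    """Return (go decision, KPIs that missed). An unmeasured KPI counts as a miss."""
    missed = [k.name for k in kpis if k.name not in observed or not k.met(observed[k.name])]
    return (not missed, missed)


# Hypothetical thresholds and pilot results.
kpis = [
    Kpi("processing_time_reduction_pct", 40.0),
    Kpi("cost_per_invoice_usd", 3.50, higher_is_better=False),
    Kpi("model_accuracy_pct", 95.0),
]
pilot_results = {
    "processing_time_reduction_pct": 43.0,
    "cost_per_invoice_usd": 4.10,
    "model_accuracy_pct": 96.2,
}

go, missed = ready_to_scale(kpis, pilot_results)
print("Proceed to production" if go else f"Not ready: missed {missed}")
```

In this made-up run the model clears its accuracy target but misses the cost target, so the gate says no, which mirrors the point that accuracy alone is not success.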

Step 3: Audit Your Data Before Building Anything

Bad data kills good models. Gartner estimates that poor data quality costs organizations an average of $12.9 million annually. Most pilots discover data problems halfway through, which burns time, budget, and team morale.

- Assess data completeness, accuracy, consistency, and accessibility across all relevant sources

- Identify gaps, silos, and ownership conflicts early; these are the issues that delay production deployments by months

- Involve your data engineering team from day one, not after the model is already built

- If clean, structured data is not available, budget for a data preparation phase before the pilot begins
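A first-pass audit does not need special tooling. The sketch below, using only the Python standard library, checks three things from the list above on a handful of rows: completeness per field, duplicate keys, and inconsistent spellings of the same value. The field names and sample records are hypothetical, and a real audit would cover accuracy and accessibility as well.

```python
from collections import Counter, defaultdict


def is_blank(value) -> bool:
    """Treat None and empty or whitespace-only strings as missing."""
    return value is None or (isinstance(value, str) and not value.strip())


def audit_records(records, required_fields, key_field):
    """Report per-field completeness (0 to 1) and keys that appear more than once."""
    total = len(records)
    missing = Counter()
    for row in records:
        for field in required_fields:
            if is_blank(row.get(field)):
                missing[field] += 1
    completeness = {f: (1 - missing[f] / total) if total else 0.0 for f in required_fields}
    key_counts = Counter(row[key_field] for row in records if not is_blank(row.get(key_field)))
    duplicate_keys = sorted(k for k, n in key_counts.items() if n > 1)
    return {"rows": total, "completeness": completeness, "duplicate_keys": duplicate_keys}


def case_variants(records, field):
    """Find values that differ only by letter case or surrounding whitespace."""
    groups = defaultdict(set)
    for row in records:
        value = row.get(field)
        if isinstance(value, str) and value.strip():
            groups[value.strip().lower()].add(value)
    return {k: sorted(v) for k, v in groups.items() if len(v) > 1}


# Hypothetical sample rows.
records = [
    {"customer_id": "C001", "email": "a@example.com", "region": "EMEA"},
    {"customer_id": "C002", "email": "", "region": "emea"},
    {"customer_id": "C001", "email": "a@example.com", "region": "EMEA"},
    {"customer_id": "C003", "email": None, "region": "APAC"},
]

report = audit_records(records, ["customer_id", "email", "region"], "customer_id")
print(report)
print(case_variants(records, "region"))
```

Running checks like these against every source system before the pilot starts is one way to surface the gaps early rather than halfway through.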

Step 4: Build With Production Infrastructure in Mind

This is where most technical teams make crucial mistakes. Pilots built in isolated sandbox environments (running on cleaned, static datasets) behave completely differently once they hit real production conditions. The gap between a controlled test environment and a live enterprise system is wider than most teams expect.

Mirror your live infrastructure as closely as possible during the pilot phase. Loop in IT, security, and compliance teams from the beginning, not three weeks before launch.

API integrations, authentication protocols, and data pipeline requirements need to be addressed early, not treated as post-pilot problems. Gartner found that 60% of AI deployment failures are caused by infrastructure misalignment between pilot and production environments. Building right the first time is always cheaper than rebuilding under pressure.

Step 5: Identify & Train Your Internal Advocates

Technology does not drive adoption. People do. Without internal advocates, even the most technically sound AI system will sit unused.

- Identify 2–3 department leads who will champion the AI tool within their teams

- Run structured training sessions; not a one-hour demo, but hands-on workflow integration training

- According to PwC, enterprises that invest in employee AI training during the pilot phase see 3x higher adoption rates post-deployment

- Address resistance early by showing employees how the AI removes friction from their work rather than replacing it.

Step 6: Run a Controlled Real-World Test Before Full Deployment

Before scaling company-wide, test with a small, real-world user group under actual working conditions. Select a representative group of 10-25 users from the target department and run the AI system alongside existing workflows; do not replace anything yet. This phase is not about perfection. It is about discovering what breaks before it breaks at scale.

Collect both quantitative performance data and qualitative user feedback simultaneously. The numbers will tell you if the model is performing. The feedback will tell you if people will actually use it.

Gartner recommends a phased rollout strategy, which reduces deployment failure risk by up to 40%. Edge cases, workflow gaps, and user experience issues that never appear in sandboxed testing always surface here, and it is far better to find them now than after a full company-wide rollout.
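Running the AI alongside the existing workflow can be as simple as logging what the AI would have decided next to what the current process actually decided. The sketch below is one possible shape for that log, with hypothetical case data and field names; it summarizes the agreement rate, the cases to review, and any user comments collected along the way.

```python
from dataclasses import dataclass


@dataclass
class ShadowCase:
    case_id: str
    existing_decision: str  # outcome of the current workflow (still the decision of record)
    ai_decision: str        # what the AI suggested; logged, not acted on
    user_comment: str = ""  # optional qualitative feedback from the test user


def summarize_shadow_run(cases: list[ShadowCase]) -> dict:
    """Combine the quantitative view (agreement) with the qualitative view (comments)."""
    disagreements = [c.case_id for c in cases if c.existing_decision != c.ai_decision]
    agreed = len(cases) - len(disagreements)
    return {
        "cases": len(cases),
        "agreement_rate": agreed / len(cases) if cases else 0.0,
        "disagreements": disagreements,
        "comments": [c.user_comment for c in cases if c.user_comment],
    }


# Hypothetical log from a small test group.
log = [
    ShadowCase("INV-1", "approve", "approve"),
    ShadowCase("INV-2", "reject", "approve", "AI missed the duplicate PO number"),
    ShadowCase("INV-3", "approve", "approve", "Faster than my manual lookup"),
    ShadowCase("INV-4", "escalate", "escalate"),
]

summary = summarize_shadow_run(log)
print(f"Agreement: {summary['agreement_rate']:.0%}, review: {summary['disagreements']}")
```

Disagreements are not automatically AI errors; each one is a prompt to check which side was right and why.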

Step 7: Build a Cost and Timeline Roadmap for Full Scaling

Scaling is expensive, and most enterprises are blindsided by just how expensive it gets. IBM research shows that moving an AI model from pilot to full production costs 5-10x more than building the pilot itself. Teams that do not plan for this reality walk into leadership meetings unprepared and walk out without approval.

Break down scaling costs across infrastructure, integration, workforce training, ongoing maintenance, and compliance requirements. Do not estimate in round numbers; get specific.

Build a 6-12 month deployment roadmap with clear milestones, budget checkpoints, and contingency provisions for unexpected technical debt. Present this roadmap to leadership before requesting production approval.

It signals that your team is thinking like a business unit, not just a technology function, and that distinction matters enormously when budget decisions are being made.
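To make the cost breakdown concrete, the sketch below totals an itemized scaling estimate and compares it with the 5-10x multiple cited above. Every dollar figure is a hypothetical placeholder, and the code reads "5-10x" as five to ten times the pilot cost, which is an interpretation rather than a definition from the IBM research.

```python
def scaling_summary(pilot_cost: float, line_items: dict[str, float],
                    low: float = 5.0, high: float = 10.0) -> dict:
    """Total the line items and compare the total with a multiple range of the pilot cost."""
    total = sum(line_items.values())
    multiple = total / pilot_cost
    return {
        "total": total,
        "multiple_of_pilot": multiple,
        "within_expected_range": low <= multiple <= high,
        "shares": {name: amount / total for name, amount in line_items.items()},
    }


# Hypothetical figures for illustration only.
PILOT_COST = 400_000
scaling_estimate = {
    "infrastructure": 780_000,
    "integration": 615_000,
    "workforce_training": 240_000,
    "ongoing_maintenance": 455_000,
    "compliance": 310_000,
}

summary = scaling_summary(PILOT_COST, scaling_estimate)
print(f"Total scaling cost: ${summary['total']:,.0f} "
      f"({summary['multiple_of_pilot']:.1f}x the pilot)")
for name, share in summary["shares"].items():
    print(f"  {name}: {share:.1%}")
```

An estimate that lands well below the range is worth a second look before it goes in front of leadership, since it may be missing a cost category.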

Step 8: Measure, Report, and Secure Executive Sign-Off

Numbers win budget approvals. Narratives do not. Present your results directly against the KPIs defined in Step 2.

- Build a one-page executive summary showing pilot performance vs. defined benchmarks

- Quantify the projected business impact of scaling: cost savings, revenue impact, time saved

- Anticipate pushback on risk and cost; have answers ready before the room asks

- Companies that present structured business cases for AI scaling secure executive approval faster than those that present technical reports alone, according to Forrester Research

Conclusion

Most enterprise AI pilots do not fail because the technology does not work. They fail because the strategy behind them was never built to scale. Poor data readiness, unclear success metrics, and infrastructure gaps are problems that no AI model can fix on its own. The enterprises that successfully scale AI treat it as a business initiative from day one. If your organization is planning an AI pilot or has one stuck in purgatory right now, you need the right development partner.

TechAhead specializes in building enterprise AI solutions that are designed to scale. From initial strategy and data readiness to full production deployment, we bring technical depth to your enterprise AI initiatives.

Enterprises scale AI by aligning pilots with business goals, building secure data pipelines, and adopting MLOps. Partnering with TechAhead, a leading enterprise AI development company, accelerates production-ready deployments.

Data quality is crucial for scaling AI solutions, as inaccurate or inconsistent data leads to poor model performance. Generally, well-structured data offers reliable insights, better predictions, and successful AI adoption.

MLOps allows seamless deployment, monitoring, and management of AI models at scale. It automates workflows, maintains model consistency, and improves collaboration, helping enterprises transition efficiently from AI pilots to production systems.

Legacy infrastructure limits AI scalability by restricting data processing speed, integration, and flexibility. Modernizing systems with cloud and scalable architectures allows enterprises to efficiently deploy, manage, and scale AI solutions.

Companies can maintain data security by implementing encryption, access controls, and compliance frameworks. Regular audits, secure data pipelines, and governance policies help protect sensitive information while scaling AI across enterprise environments.