How does music recognition work with the Shazam app?

It’s a typical scenario: You listen to a song at a restaurant or an event, and then that song stays with you, haunting you, forcing you to find its source and singer.

Previously, the only option was to hum the song or recite a few lines and ask friends and family to help track down the source.

That changed after 1999, the year a seemingly magical service called Shazam was born.

What Exactly Is Shazam? Let’s explore with the leading app development company.

Shazam is an app that can recognize music, movies, advertising, and television shows and showcase the source and other details about that content.

It seems magical, right?

In this blog, we will decode the internal workings of Shazam and find out how it works.

But first, let’s take a quick look at Shazam and some startling facts about this app.

Shazam: A Brief History

Shazam was founded in 1999 by Chris Barton, Philip Inghelbrecht, Avery Wang, and Dhiraj Mukherjee, who teamed up to create a system that can recognize music and other related content and reveal the details behind it.

In 2018, Apple acquired Shazam for $400 million, making it one of the most significant mobile app acquisitions at that time.

As of now, Shazam is available on iOS, Android, macOS, Wear OS, and watchOS, and as a Google Chrome extension.

Stunning facts about Shazam

As of September 2022, Shazam has more than 225 million global monthly users, and it’s expanding at a rapid pace.

To recognize songs and TV/video content, Shazam has acquired 200 patents. As of now, the app has recorded more than 12 billion tags, which help categorize music and video content based on user inputs.

In 2015, it was found that 5% of all music downloads across the world originated from Shazam, making it one of the most extensive databases for music content anywhere in the world.

One significant statistic for businesses looking for a solid platform to advertise their products: Advertisers on the Shazam app receive an average of 1 million clicks daily!

And one exciting piece of trivia: The most searched (Shazamed) song ever is "Dance Monkey", which has been searched 41 million times, and Drake is the most Shazamed artist ever with 350 million hits.

An incredible achievement for a tech-powered mobile app!

How Shazam works: Understanding music recognition algorithms & fingerprinting

The operational model of the Shazam app is simple: The app listens to a maximum of 20 seconds of a song or of video content from TV, a movie, an ad, and so on. The sample can be a chorus, a verse, or a mere intro. The app then recognizes that content almost instantly and shows the results.

An important thing to note: No matter how long that song or content is, the Shazam app will only read a maximum of 20 seconds of it.

Once that sample is fed into the Shazam app, it will:

- Create a fingerprint record of that sample

- Deploy music recognition algorithms to tell you exactly which song or content it is.

Now, this process of recognizing music is not that simple!

There are tons of processes and algorithms that work in tandem to reveal the exact source of the music and content.

In 2003, Avery Li-Chun Wang, one of the inventors of the Shazam app, shared the magic behind it in a research paper and, for the first time, revealed how this app works.

Understanding the elements of sound

First, let’s understand what sound is.

Sound is a vibration that propagates as a mechanical wave of pressure and displacement through a medium, which is usually air and, in some cases, water.

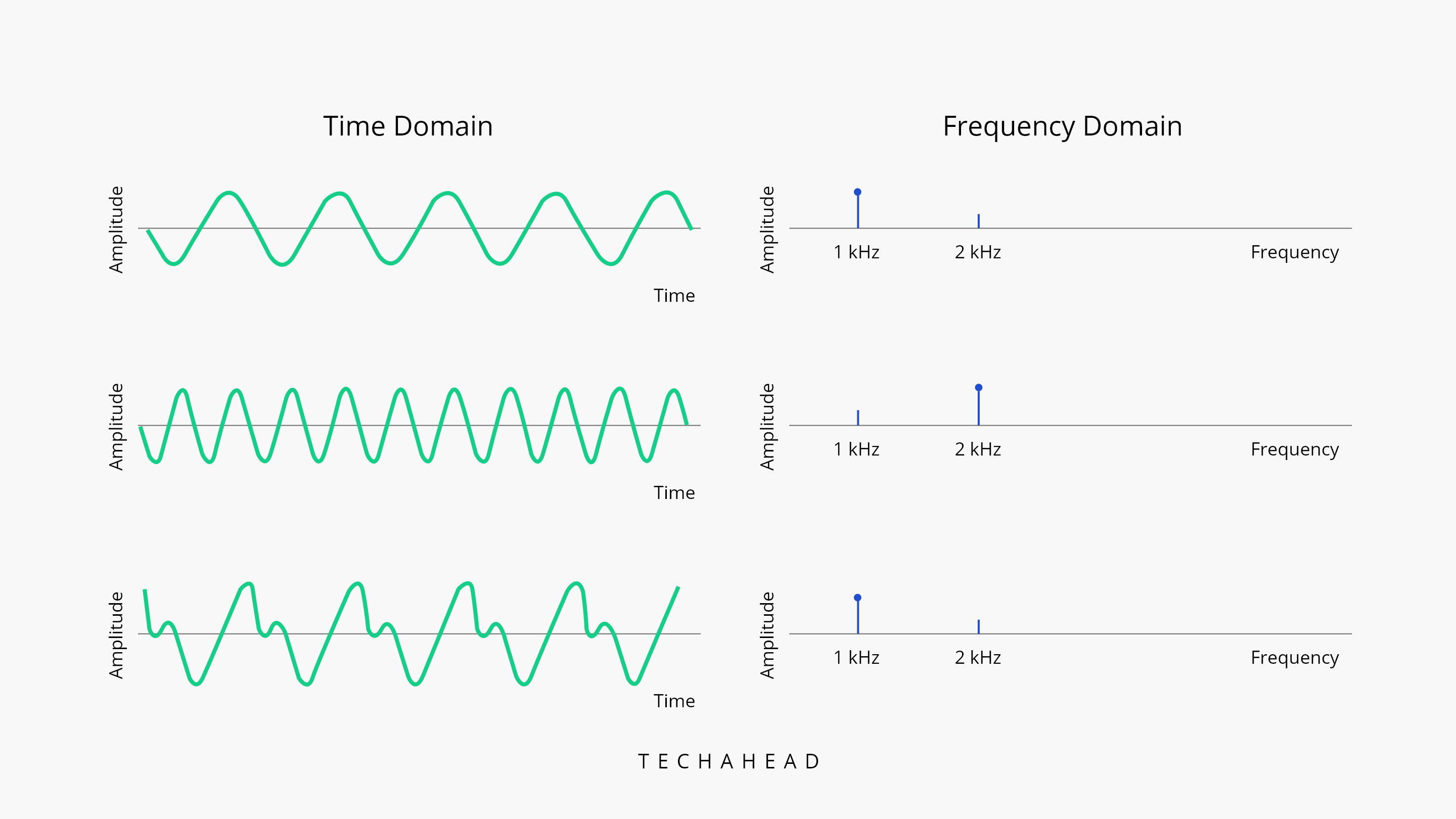

The three main components of sound are frequency, time, and amplitude.

Amplitude is the size of the vibration, which we perceive as the loudness of the sound.

Source: https://www.toptal.com/algorithms/shazam-it-music-processing-fingerprinting-and-recognition

Frequency, measured in Hertz (Hz), is the rate at which the vibration occurs. A human being can only hear sounds whose frequency lies between 20 Hz and 20,000 Hz.

Time is crucial because it shows when a sound occurs in relation to other sounds.

This is important to know because when a song is produced, it has sounds from different instruments that vary in frequency and amplitude as they move through time in relation to one another.

This is why two different versions of the same song will each generate their own unique fingerprint: the combination of frequency, amplitude, and time differs between them.
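To make the three components concrete, here is a minimal Python sketch that synthesizes two pure tones and mixes them. The function names `tone` and `mix`, the 8,000 Hz sample rate, and the two example tones are assumptions made purely for illustration; they are not taken from Shazam.

```python
import math

def tone(freq_hz, amplitude, duration_s, sample_rate=8000):
    """Samples of a pure tone: amplitude * sin(2 * pi * frequency * time)."""
    n = round(duration_s * sample_rate)
    return [amplitude * math.sin(2 * math.pi * freq_hz * i / sample_rate)
            for i in range(n)]

def mix(*signals):
    """Add signals sample by sample, the way instrument sounds add up."""
    return [sum(values) for values in zip(*signals)]

# Two hypothetical "instruments": a loud 440 Hz tone and a quieter 880 Hz tone.
low = tone(440, amplitude=1.0, duration_s=0.01)
high = tone(880, amplitude=0.5, duration_s=0.01)
mixed = mix(low, high)

print(len(mixed))                          # samples in 10 ms at 8,000 Hz
print(max(abs(x) for x in mixed) <= 1.5)   # bounded by the summed amplitudes
```

Changing any one of the three inputs (frequency, amplitude, or where in time a tone starts) changes the resulting list of samples, which is why every recording ends up with its own fingerprint.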

Creating unique audio fingerprint

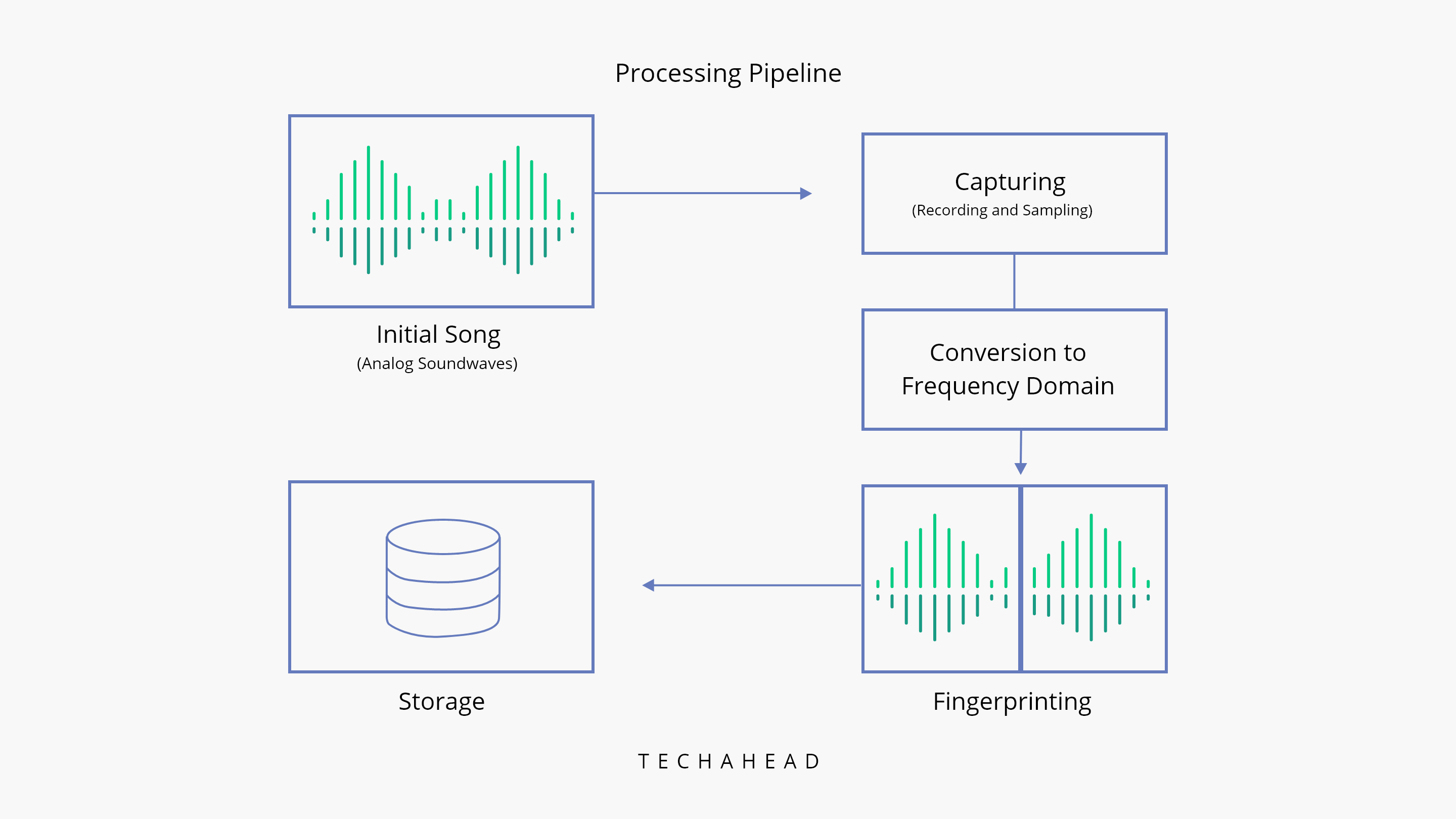

Once the Shazam app records a few seconds of a song or any audio content (up to a maximum of 20 seconds), it will create a unique audio fingerprint of that sample.

To do that, the recorded sound is converted into a spectrogram, in which the X-axis represents time, the Y-axis represents frequency, and the density of the shading represents amplitude.
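As a rough illustration of how a spectrogram can be computed, the sketch below slices a signal into overlapping frames and runs a naive discrete Fourier transform on each one. The frame size, hop size, Hann window, and function names are assumptions chosen for readability, not Shazam's actual settings; a real system would use a fast Fourier transform.

```python
import cmath
import math

def dft_magnitudes(frame):
    """Magnitude of each frequency bin of one frame (naive O(n^2) DFT)."""
    n = len(frame)
    mags = []
    for k in range(n // 2):  # keep only the bins below the Nyquist frequency
        acc = sum(frame[t] * cmath.exp(-2j * cmath.pi * k * t / n)
                  for t in range(n))
        mags.append(abs(acc))
    return mags

def spectrogram(samples, frame_size=64, hop=32):
    """Rows are time frames; each row lists amplitudes per frequency bin."""
    # A Hann window softens the frame edges before the transform.
    window = [0.5 * (1 - math.cos(2 * math.pi * i / (frame_size - 1)))
              for i in range(frame_size)]
    rows = []
    for start in range(0, len(samples) - frame_size + 1, hop):
        frame = samples[start:start + frame_size]
        rows.append(dft_magnitudes([x * w for x, w in zip(frame, window)]))
    return rows

SAMPLE_RATE = 8000  # assumed sample rate in Hz
samples = [math.sin(2 * math.pi * 1000 * i / SAMPLE_RATE) for i in range(512)]
spec = spectrogram(samples)

loudest_bin = max(range(len(spec[0])), key=lambda k: spec[0][k])
print(loudest_bin * SAMPLE_RATE / 64)  # 1000.0, the frequency of the test tone
```

Each row of `spec` corresponds to one step along the X-axis (time), each column to one step along the Y-axis (frequency), and each stored value to the shading (amplitude).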

For each section of the audio, the algorithm keeps only the strongest peaks, and gradually, the spectrogram is reduced to a scatter plot of points. At that stage, the amplitude values are no longer needed.
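The peak-picking idea can be sketched in a few lines of Python. This is a simplified stand-in: it keeps the strongest bins of each time frame, whereas a production system would look for local maxima across both time and frequency. The function name `pick_peaks`, its parameters, and the toy spectrogram are all hypothetical.

```python
def pick_peaks(spec, per_frame=2, min_magnitude=1.0):
    """Return (time_index, freq_bin) pairs for the strongest bins per frame.

    Amplitude is used only to choose the peaks and is then discarded.
    """
    peaks = []
    for t, frame in enumerate(spec):
        strongest = sorted(range(len(frame)), key=lambda k: frame[k],
                           reverse=True)[:per_frame]
        for k in sorted(strongest):
            if frame[k] >= min_magnitude:
                peaks.append((t, k))
    return peaks

# Toy spectrogram: 3 time frames (rows) by 4 frequency bins (columns).
toy_spec = [
    [0.1, 5.0, 0.2, 3.0],
    [0.0, 0.3, 4.0, 0.2],
    [2.0, 0.1, 0.1, 6.0],
]

print(pick_peaks(toy_spec))
# [(0, 1), (0, 3), (1, 2), (2, 0), (2, 3)]
```

The output contains only time and frequency coordinates, which is the scatter plot described above.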

And this is the crux of Shazam’s operations.

Two fingerprints are involved: one created from the user’s recording and one stored for each song in the database. They are then matched to find the exact song that is being fed into the system.

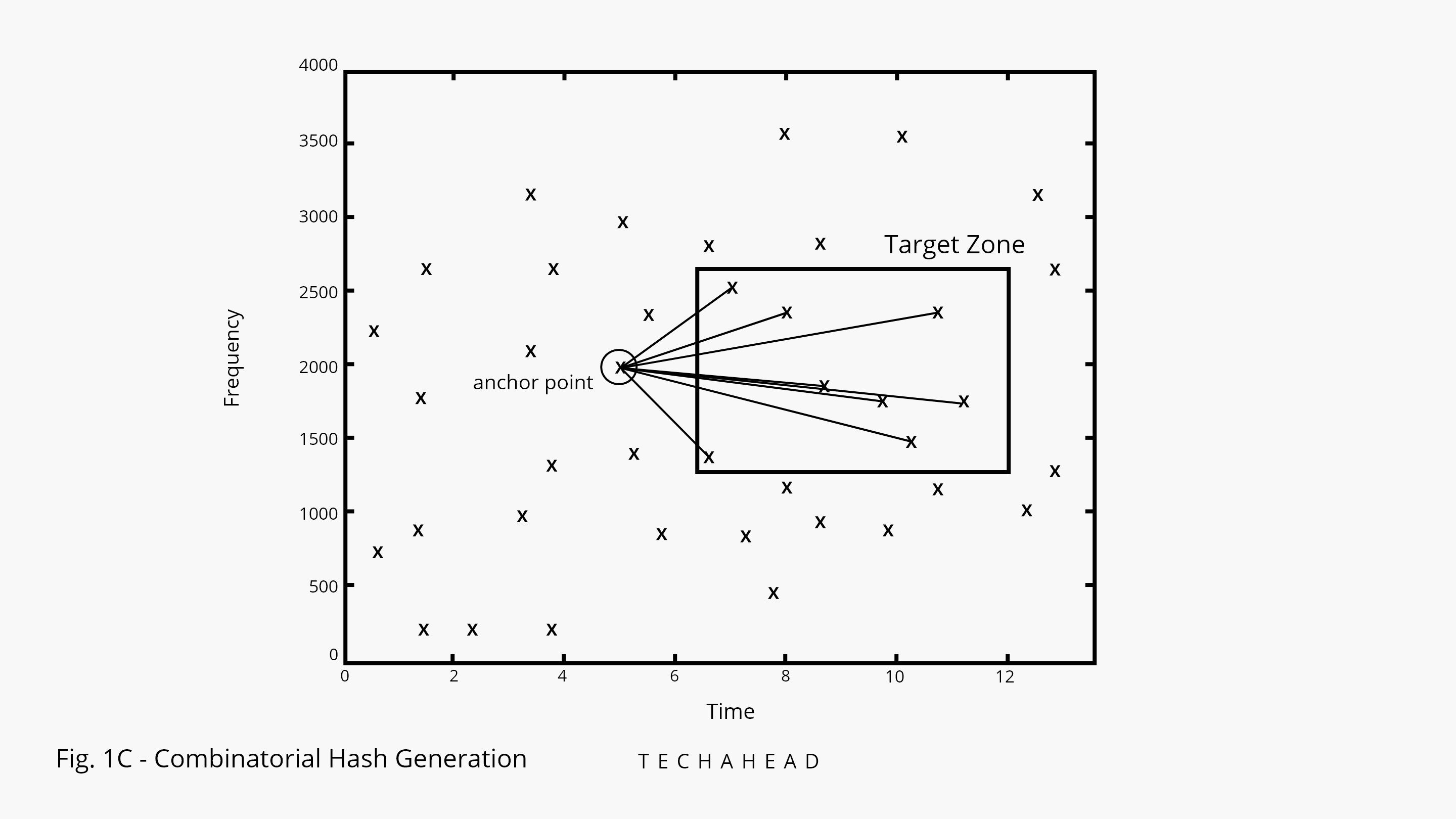

An advanced process called combinatorial hashing is used to create precise, unique fingerprints of the audio files and to make the matching reliable.

This is how it works:

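- Each point in the scatter plot is treated in turn as an anchor and paired with several nearby points that follow it in time.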

- Each anchor-point pair is first stored in a table containing the anchor’s frequency, the point’s frequency, and the time elapsed between the anchor and the point. Together, these three values are called the hash. (Table #1)

- There is another table that contains the time between the anchor and the beginning of the audio file. (Table #2)

- The hash is then linked with Table #2

- The files in the database also have unique IDs, which are used to extract more information about the song, such as the singer’s name, the song’s title, and more.
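The steps above can be sketched as follows. This is a minimal illustration, not Shazam's implementation: the names `make_hashes` and `index_song`, the `fan_out` and `max_dt` limits, the in-memory dictionary standing in for the database, and the metadata entry are all assumptions.

```python
from collections import defaultdict

def make_hashes(peaks, fan_out=3, max_dt=10):
    """Pair each anchor with up to `fan_out` later points.

    Yields ((anchor_freq, point_freq, time_delta), anchor_time), where the
    first element plays the role of the hash (Table #1) and the second is
    the time between the anchor and the start of the audio (Table #2).
    """
    peaks = sorted(peaks)
    for i, (t1, f1) in enumerate(peaks):
        paired = 0
        for t2, f2 in peaks[i + 1:]:
            dt = t2 - t1
            if dt <= 0:
                continue  # skip points in the same time frame
            if dt > max_dt:
                break     # later points are too far from the anchor
            yield (f1, f2, dt), t1
            paired += 1
            if paired == fan_out:
                break

def index_song(db, song_id, peaks):
    """Store every hash of a song together with its anchor time and ID."""
    for h, anchor_time in make_hashes(peaks):
        db[h].append((anchor_time, song_id))

# Hypothetical metadata looked up by ID once a match is found.
metadata = {"song-A": {"title": "Example Title", "artist": "Example Artist"}}

db = defaultdict(list)
index_song(db, "song-A", [(0, 10), (1, 20), (2, 15), (4, 30)])

print(db[(10, 20, 1)])  # [(0, 'song-A')]
```

Because a hash combines two frequencies and a time gap, it is far more specific than a single peak, which keeps the number of false candidates low during the search.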

How does Shazam match the songs & provide the results?

At this point, we have the unique fingerprints of both audio files. Now, the actual process of matching the songs starts.

This is how it works:

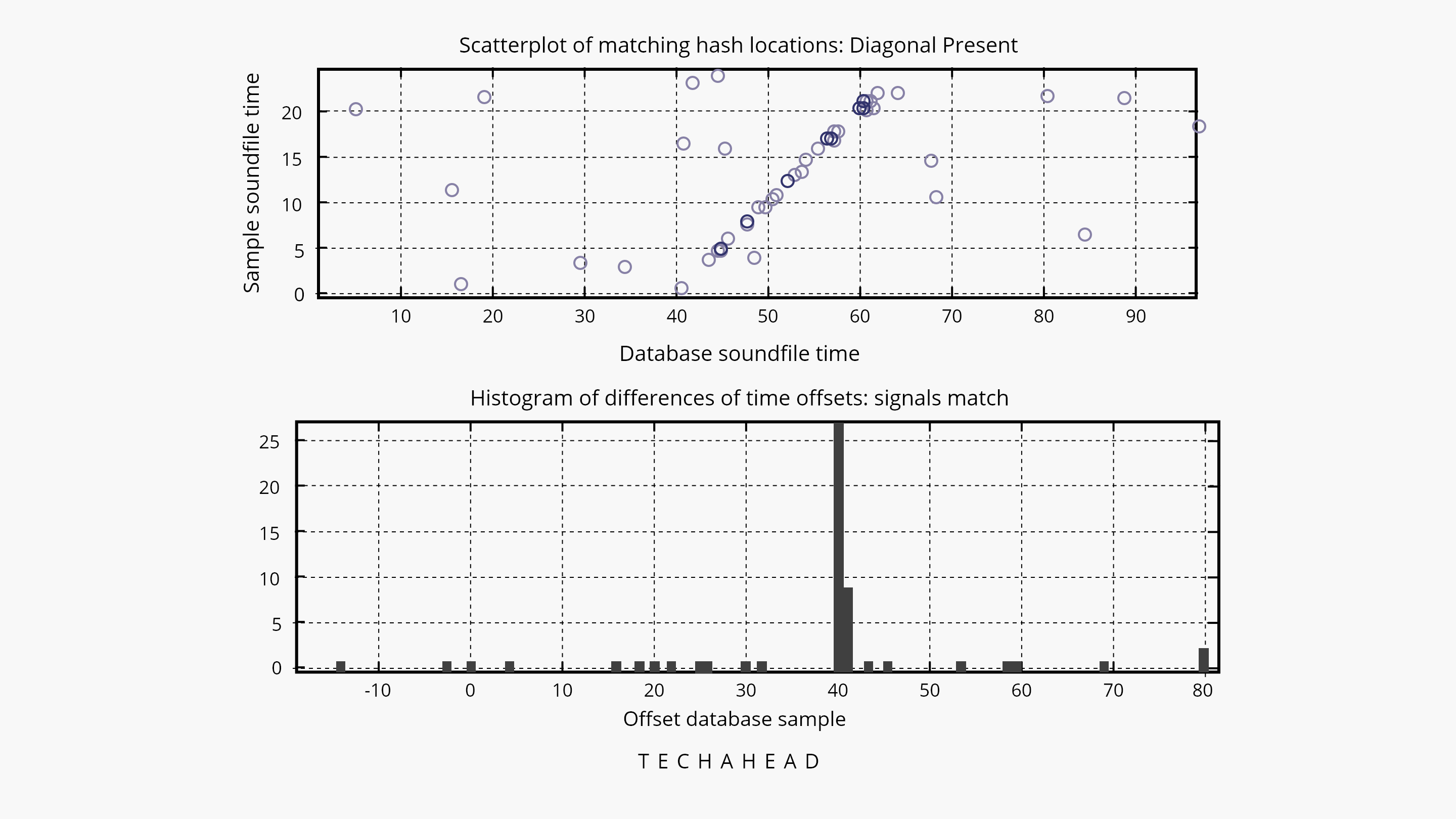

- The anchor-point pairs from the user’s recording are first sent to Shazam’s database to search for exact matches among the anchor-point pairs stored there.

- This search will return the audio fingerprints of all those songs that contain the same hash (formulated from combinatorial hashing)

- Once all the possible matches are located for the user’s recording, the time offset between the beginning of the user’s recording and the beginning of each potential match is calculated.

- If many matching hashes share the same time offset, then bingo! It’s the same song.

Suppose we plot the matching process on a scatter plot in which the Y-axis represents the time at which a hash occurs in the user’s recording and the X-axis represents the time at which the same hash occurs in the database’s recording. In that case, the matching hashes will form a diagonal line.

Source: https://medium.com/@treycoopermusic/how-shazam-works-d97135fb4582

Similarly, if the time offsets are plotted on a histogram, there will be a spike at the correct offset time.
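The offset histogram can be sketched with a simple vote counter. This is an illustrative sketch under assumed data structures: the function name `best_match`, the dictionary layout, and the toy hashes are hypothetical, and a real service would also require a minimum number of votes before declaring a match.

```python
from collections import Counter

def best_match(db, query_hashes):
    """Vote for (song_id, offset) pairs and return the most common one.

    db maps hash -> list of (db_time, song_id).
    query_hashes is a list of (hash, query_time) from the user's recording.
    """
    votes = Counter()
    for h, query_time in query_hashes:
        for db_time, song_id in db.get(h, []):
            # Hashes from the same song line up on one shared offset.
            votes[(song_id, db_time - query_time)] += 1
    if not votes:
        return None
    (song_id, offset), count = votes.most_common(1)[0]
    return song_id, offset, count

# Toy database: song-A contains all three hashes in order, song-B only two.
db = {
    (10, 20, 1): [(50, "song-A"), (7, "song-B")],
    (20, 15, 1): [(51, "song-A")],
    (15, 30, 2): [(52, "song-A"), (90, "song-B")],
}
query = [((10, 20, 1), 0), ((20, 15, 1), 1), ((15, 30, 2), 2)]

print(best_match(db, query))  # ('song-A', 50, 3)
```

Here the three votes for `song-A` at offset 50 are the spike in the histogram, while the two `song-B` hits land on different offsets (7 and 88) and never accumulate.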

Here’s a summary of this entire process, in brief:

- The song is recorded by the user via the Shazam app

- The song is in analog form, which is converted into digital form

- The digital signal is converted into the frequency domain via the Fourier Transform

- A unique audio fingerprint is created using the spectrogram

- This fingerprint is compared with all the possible matches in the Shazam database

- Via combinatorial hashing, the exact match is found

- The user gets the details of the audio content

And this entire process of finding the exact match takes only a few seconds.

Key Features of the Shazam App

- Music Recognition and Lyrics Display: Shazam is a music recognition app that helps users identify songs. It analyzes audio and provides information about the track, artist, and album. Additionally, the app displays lyrics for many of the identified songs, allowing users to either sing along with or learn the lyrics to their favorite songs.

- Song Recommendations: Shazam also offers song recommendations based on users’ identified songs to help them discover new music that aligns with their preferences.

- Music Charts: The app compiles and displays charts showcasing the most Shazamed songs globally or in specific regions, allowing users to explore popular music trends. Furthermore, users can save identified songs and access them later, even offline.

- Integration with Streaming Services: Shazam also enables users to connect their accounts with various music streaming services, making it easy to listen to full tracks, create playlists, and explore more content.

- AR Experience: The app has incorporated augmented reality (AR) features, allowing users to engage with content related to their favorite artists through visual experiences.

- Artist Information: In addition to song details, Shazam provides information about the artist, including their discography, biography, and related content.

- Social Sharing: Users can also share their identified songs and music discoveries on social media platforms, allowing friends to see and listen to the same tracks.

Explore the power of Shazam’s algorithm with TechAhead. Our team of expert Mobile App Developers can help you create a personalized app using this cutting-edge technology. Let us guide you towards success.

Schedule a no-obligation, free consulting session with our team right here!