The scene repeats across demo days in 2026: a founder is pitching an AI agent to investors. The demo starts flawlessly. The agent classifies complex queries accurately, responds in 2-3 seconds, and explains its reasoning. Investors lean forward.

By turn 10, something breaks.

The agent starts re-checking previous decisions. Latency spikes to 45+ seconds. Tool calls loop. The demo falters. The founder kills it before it gets worse.

What went wrong? Not the model. Not the architecture. The economics.

Every agentic loop, retry, tool call, and context reload multiplies token consumption in ways that don’t show up until real users hit the system. One fintech startup reported that its fraud detection agent cost $5K/month with 50 users in Q3 2025. By January 2026, with just 500 active users, it was burning $15K/month.

At 500 users. Not 50,000. Not enterprise scale. Just 500.

By 700-1,000 concurrent users, their unit economics inverted. They killed the project.

This pattern is now endemic. Founders are shipping agents that work technically but break economically. The inference cost isn’t a scaling problem they’ll solve later; it’s an architecture problem masquerading as a scaling problem. And most don’t see it until the burn rate has already committed them to a doomed trajectory.

The Deloitte report (Q4 2025) documents it directly: teams discovering “tens of millions” in monthly bills from agentic loops. Gartner forecasts 40% of AI agent projects will be cancelled by 2027 due to cost overruns alone, not technical failure, not market fit issues. Just economics.

Key Takeaways

- Inference costs now 80-90% of AI spend; agentic flows cost 5-25x more than chat.

- OpenAI pricing up 4x in 12 months; 40% of agent projects cancelled by 2027 due to costs.

- Single agentic task costs $0.10-0.50 per request; scales to $150K-750K monthly at 10K users.

- The cost cliff hits between 500 and 5K users, when you must shift from cloud APIs to self-hosted GPUs.

- Token tsunamis from loops, retries, and context reloads multiply costs 3-7x before optimization.

The Real Numbers: 2025-2026 Cost Explosion

Inference is now 80-90% of AI spend for agentic systems.

Not training. Not infrastructure.

Inference: the per-query cost of running predictions at runtime.

Here’s what’s changed in 12 months:

- OpenAI API pricing: Up 4x in some regions (March 2025 – January 2026)

- Gross margins: Enterprise AI projects went from 40% → 33% (Deloitte, Q4 2025)

- Inference market: $106B in 2025; projected $255B by 2030 (ARK Research)

- Agentic vs. Chat: Agentic flows cost 5-25x more per user than simple chat applications

The worst part? Most founders don’t know this until they’re already deep into production.

The even worse part? Gartner reports 40% of AI agent projects will be cancelled by 2027 due to cost overruns alone.

You read that right. Not due to technical failures. Not due to poor product-market fit. Due to economics breaking.

Inference 101: Why It’s Not What You Think

Let me demystify this for non-ML founders.

Training is a one-time cost. You spin up GPUs for a month, fine-tune a model, and you’re done. It’s upfront pain, but it’s contained.

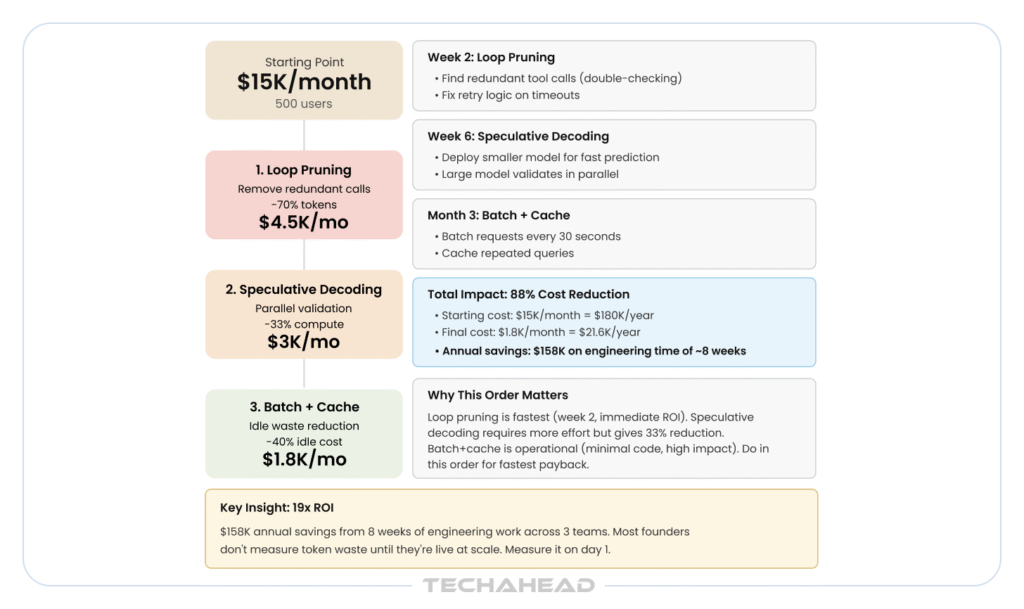

Cost Optimization Roadmap (Source: TechAhead AI Team)

Inference happens every single time a user hits your system. It’s on-demand, real-time, and it scales linearly with usage. If you have 1,000 users asking your agent 10 questions per day, that’s 10,000 inference calls. Per day. Every day. Forever.

The metaphor I use: It’s like buying a Ferrari (training cost) and then realizing gas costs $200/gallon when you have passengers (inference cost).

Here’s the math that kills projects:

- Simple chat: ~800 tokens per request. At GPT-4 pricing (~$0.01/1K tokens), that’s $0.008 per request.

- AI agent with loops: ~10,000-50,000 tokens per task (tool calls, retries, context reloads). Same pricing: $0.10-$0.50 per request.

Scale to 10K daily users asking 5 questions each: You’re looking at $5,000-$25,000/day. That’s $150K-$750K monthly.
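The arithmetic above can be checked in a few lines of Python. This is a minimal sketch rather than a pricing tool: the flat $0.01 per 1K tokens rate and the 30-day month are the simplifications already used in the text, and the function names are illustrative.

```python
PRICE_PER_1K_TOKENS = 0.01  # flat blended rate assumed in the text above


def cost_per_request(tokens: int, price_per_1k: float = PRICE_PER_1K_TOKENS) -> float:
    """Dollar cost of one request that consumes `tokens` tokens."""
    return tokens / 1000 * price_per_1k


def monthly_cost(tokens_per_request: int, users: int,
                 requests_per_user_per_day: int, days: int = 30) -> float:
    """Monthly spend, assuming every user sends the same number of requests daily."""
    daily_requests = users * requests_per_user_per_day
    return cost_per_request(tokens_per_request) * daily_requests * days


if __name__ == "__main__":
    print(f"chat request: ${cost_per_request(800):.3f}")
    for tokens in (10_000, 50_000):
        total = monthly_cost(tokens, users=10_000, requests_per_user_per_day=5)
        print(f"agent at {tokens:,} tokens/request: ${total:,.0f}/month")
```

Swapping in your own measured tokens per request is the point of the exercise; real provider pricing also differs for input and output tokens, which this sketch ignores.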

Now add the cost of running the infrastructure (GPUs, databases, monitoring). Add the cost of compute for reranking, embedding, filtering. Suddenly your 40% gross margin becomes 20%. Then 10%. Then negative.

The trap: Most founders think inference is a problem they’ll solve with better optimization. The truth is simpler and scarier: agentic loops are fundamentally expensive, and no amount of prompt engineering fixes it.

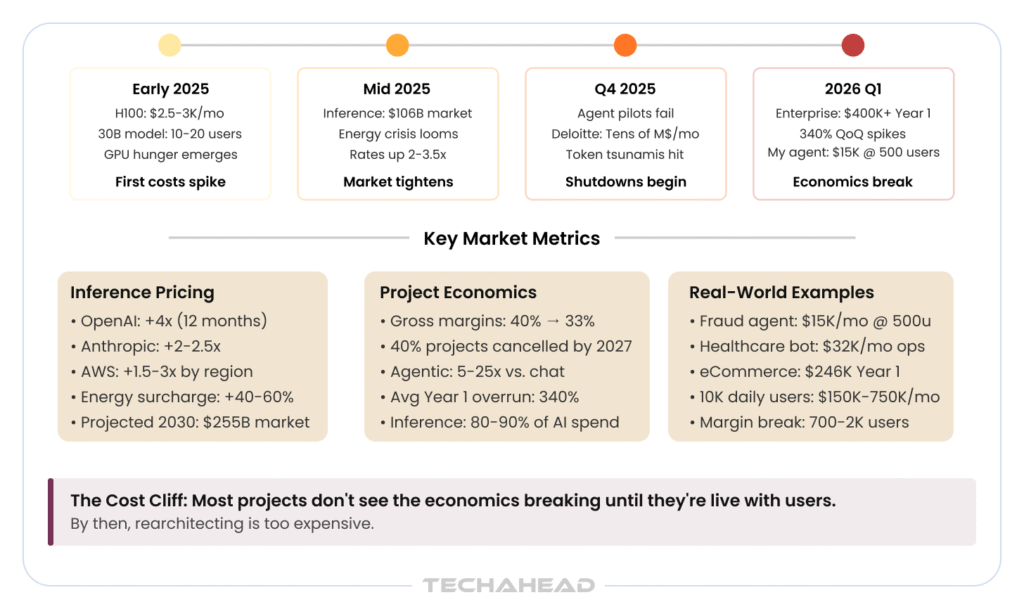

The 2025-2026 Cost Explosion Timeline

This isn’t speculative. These are documented shifts in the market.

Early 2025: The Hardware Gold Rush

- H100 GPUs: $2,500-3,000/month per instance (AWS, Azure)

- A single 30B parameter model requires 70-90GB VRAM

- One H100 can handle 10-20 concurrent users

- To scale to 1,000 concurrent users: you need 50-100 GPUs = $125K-300K/month in compute alone

Mid-2025: The Energy Crisis Emerges

- Global inference computing demand exceeds data center capacity

- ARK Research publishes forecast: $255B inference market by 2030 (up from $106B in 2025)

- Energy cost becomes the bottleneck, not hardware availability

- Major cloud providers begin rate increases (OpenAI +3.5x, Anthropic +2x)

Q4 2025: Agent Pilots Start Dying

- Deloitte reports: Teams discovering “tens of millions” in monthly inference bills from agentic loops

- Industry term emerges: “Token tsunamis” (runaway loop costs)

- First wave of shutdowns: Companies abandon agent pilots after discovering Year 1 ops costs exceed initial budget by 400%

2026 Q1: The Economics Realization

- Enterprise agents hitting Year 1 costs: $400K+ (development + operations)

- Average 340% quarter-on-quarter cost increases from Q4 2025 to Q1 2026

- My fintech fraud agent: $5K/month with 50 users (Nov 2025) → $15K/month with 500 users (Jan 2026)

- Question that breaks founders: “At what user count do we lose money per customer?”

The Five Traps: How Your Margins Die

Trap 1: Token Tsunamis (The Invisible Cost Multiplier)

A single agentic task doesn’t cost what you think.

Breakdown of a “simple” fraud detection flow:

- User asks agent to check a transaction

- Agent decides to call lookup_transaction_history tool → 2,000 tokens (raw data)

- Agent processes, decides to call check_account_risk_score → 1,500 tokens (context + response)

- Agent doubts itself, re-checks the transaction → retry loop → 3,000 tokens (redundant)

- Agent calls get_similar_fraud_cases to compare → 5,000 tokens (search results)

- Agent prepares final response with full context → 2,000 tokens

Total tokens per request: 13,500 tokens

Cost per request: $0.13 (at current OpenAI pricing)

Per user, 10 requests/day: $1.30/day = $39/month

Scale to 10K users: $390,000/month

Now add: Users asking follow-up questions (context reloads +50%), agents that retry on errors (+100%), complex tasks that branch into sub-agents (+200%).

Real cost: $780,000-$1.2M/month
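The same flow can be written as a per-step token ledger, which makes the multipliers explicit. A rough sketch: the step labels mirror the breakdown above, the $0.01 per 1K tokens rate is an assumption, and `overhead` is applied as a single factor (+100% or +200%) rather than compounding all three effects.

```python
# Tokens per step, taken from the fraud detection breakdown above.
FLOW = [
    ("lookup_transaction_history", 2_000),
    ("check_account_risk_score", 1_500),
    ("retry: re-check transaction", 3_000),
    ("get_similar_fraud_cases", 5_000),
    ("final response", 2_000),
]
PRICE_PER_1K_TOKENS = 0.01  # assumed flat rate


def tokens_per_request(flow) -> int:
    """Sum the tokens consumed by every step of one agentic request."""
    return sum(tokens for _, tokens in flow)


def monthly_cost(flow, users: int, requests_per_day: int,
                 overhead: float = 0.0, days: int = 30) -> float:
    """Monthly spend; `overhead` is extra token usage as a fraction (1.0 = +100%)."""
    per_request = tokens_per_request(flow) / 1000 * PRICE_PER_1K_TOKENS
    return per_request * (1 + overhead) * requests_per_day * days * users


if __name__ == "__main__":
    print(f"tokens per request: {tokens_per_request(FLOW):,}")
    for label, overhead in (("baseline", 0.0), ("+100%", 1.0), ("+200%", 2.0)):
        print(f"{label}: ${monthly_cost(FLOW, 10_000, 10, overhead):,.0f}/month")
```

Unrounded, 13,500 tokens is $0.135 per request, so these figures land slightly above the ones in the text, which round the per-request cost down to $0.13.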

Your unit economics are already broken before you hit “Launch.”

The horror: Most founders don’t measure token consumption until they’re in production. By then, the cost is baked into the user experience, and optimizing it requires rearchitecting.

Trap 2: GPU Hunger (The Infrastructure Cliff)

Self-hosting looks cheap until it doesn’t.

A 30B parameter model (think: an open-weight Llama- or Mistral-class model at that size) requires:

- 70-90GB VRAM per GPU (in practice, an 80GB-class data center GPU such as the H100)

- $2,500-3,500/month per GPU instance

- Handles 10-20 concurrent users (not 10-20 users/day, concurrent)

- Requires redundancy (2 GPUs per region for failover) = double cost

Math:

- 100 concurrent users = 5-10 GPUs

- 1,000 concurrent users = 50-100 GPUs

- 1M concurrent users (enterprise) = 50,000-100,000 GPUs = $125M-350M/month in GPU costs alone
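The GPU math above reduces to a ceiling division. A small sketch, assuming the 10-20 concurrent users per GPU and $2,500-3,500 per GPU per month quoted in this section; the function names and the `redundancy` parameter are illustrative.

```python
import math


def gpus_needed(concurrent_users: int, users_per_gpu: int, redundancy: int = 1) -> int:
    """GPUs required at a given concurrency; redundancy=2 doubles for failover."""
    return math.ceil(concurrent_users / users_per_gpu) * redundancy


def monthly_gpu_cost_range(concurrent_users: int,
                           users_per_gpu=(10, 20),
                           price_per_gpu=(2_500, 3_500)) -> tuple:
    """(low, high) monthly cost: the low end packs 20 users per GPU at the low price."""
    low = gpus_needed(concurrent_users, users_per_gpu[1]) * price_per_gpu[0]
    high = gpus_needed(concurrent_users, users_per_gpu[0]) * price_per_gpu[1]
    return low, high


if __name__ == "__main__":
    for users in (100, 1_000, 1_000_000):
        low, high = monthly_gpu_cost_range(users)
        print(f"{users:>9,} concurrent users: ${low:,} to ${high:,} per month")
```

The input that matters is concurrency, not registered users, so it is worth measuring peak simultaneous requests before plugging in a number.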

Most founders underestimate concurrency. They think 10K users = 10K sequential requests. Actually, it means hundreds or thousands hitting your system simultaneously.

The cliff: There’s a user count between 500 and 5,000 where you stop being able to run on shared cloud inference APIs (which are expensive but managed) and have to self-host GPUs. At that point, your infrastructure costs jump 3-5x overnight.

Trap 3: Latency Tax (The Hidden ROI Killer)

Inference isn’t just about cost; it’s about time.

When an agent takes 45 seconds to respond (as in the demo above), here’s what actually happens:

- User waits 45 seconds

- User experience breaks

- Users abandon the task

- Churn increases

- You need higher volume to offset unit economics

- Higher volume means higher costs

- Margins compress further

The loop: More users → Higher volume → Longer queues → Higher latency → Higher churn → Need more users

I watched this kill two projects. The technical solution (spend more on compute) made the business worse (lower margins require higher volume, which requires more compute).

The trap: You optimize for speed, costs explode. You optimize for cost, users leave. Pick your poison.

Trap 4: Quantization Fails (The Optimization Mirage)

4-bit quantization (reducing model precision from 16-bit to 4-bit) became trendy in 2024-2025 as a cost solution.

The promise: Run bigger models on smaller hardware, save 75% compute.

The reality: You save ~30% compute but lose 10-20% accuracy. You spend 6 months retraining. You lose users. You ship with lower accuracy. Users complain. You don’t get the cost savings back.

Real result: Companies that aggressively quantized their inference in Q3-Q4 2025 are now burning more money trying to recover accuracy than they saved on compute.
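The 75% figure in the promise comes from weight storage alone. A back-of-the-envelope sketch, counting only model weights (no KV cache, activations, or runtime overhead, all of which add to real VRAM needs); the function name is illustrative.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights only, in GB (1 GB = 1e9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


fp16_gb = weight_memory_gb(30, 16)   # 16-bit weights for a 30B model
int4_gb = weight_memory_gb(30, 4)    # the same model at 4 bits per weight
memory_saving = 1 - int4_gb / fp16_gb

if __name__ == "__main__":
    print(f"16-bit: {fp16_gb:.0f} GB, 4-bit: {int4_gb:.0f} GB, saving: {memory_saving:.0%}")
```

The saving here is in memory footprint. It says nothing about compute per token or accuracy, which is where the gap between the promise and the reality described above opens up.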

Trap 5: Energy Wall (The 2025 Bottleneck)

This is the sneaky one nobody talks about.

Global data center capacity is constrained. Inference is computationally expensive. Energy is expensive. Most cloud providers are raising rates because demand > supply.

The hidden tax: Every inference call now has an implicit energy surcharge baked into provider pricing. OpenAI, Anthropic, AWS all raised prices in 2025-2026 citing “energy cost increases.”

By 2027, this becomes the bottleneck. Not hardware. Not model quality. Energy.

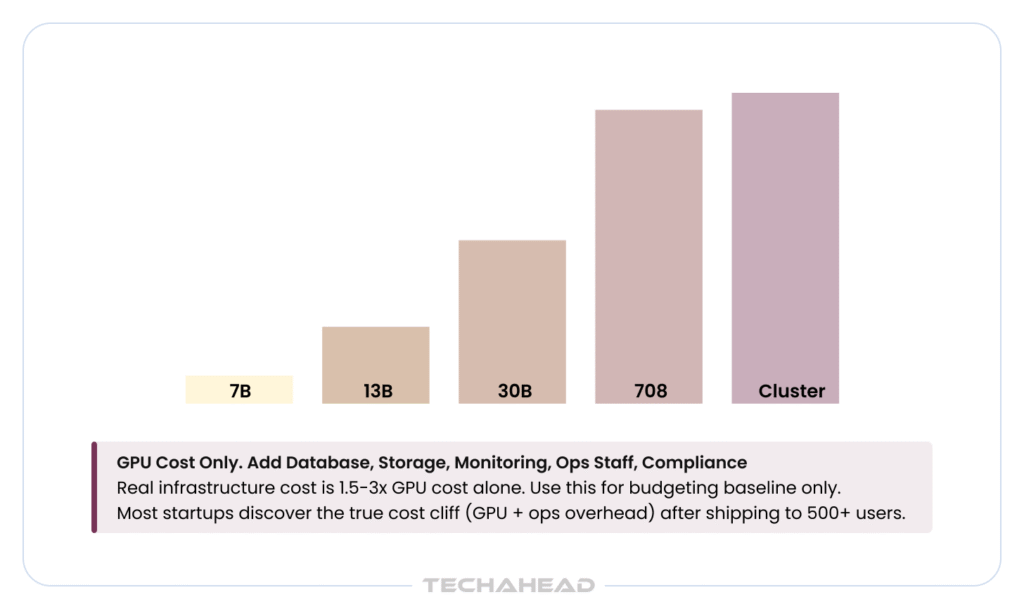

Model Size vs. Monthly Cost (The Brutal Table)

This is what your infrastructure bill looks like, from small to enterprise scale:

| Model | VRAM | GPUs (for 100 users) | Monthly Cost/GPU | Total Monthly | Annual |
|---|---|---|---|---|---|
| 7B (Llama) | 16GB | 1-2 | $1.2K | $1.2K-2.4K | $14.4K-28.8K |
| 13B (Mistral) | 28GB | 2-3 | $2.5K | $5K-7.5K | $60K-90K |
| 30B (Llama 2) | 70GB | 5-8 | $3K | $15K-24K | $180K-288K |
| 70B (Llama 2) | 140GB | 10-15 | $3K | $30K-45K | $360K-540K |
| Cluster (1,000 users) | N/A | 50-100 | $3K | $150K-300K | $1.8M-3.6M |

Reality check: At 100 concurrent users, your monthly compute cost is $1.2K-24K depending on model size. That’s before database, storage, monitoring, CDN, and human ops.

At 1,000 concurrent users, you’re at $15K-300K/month in compute. At 10,000 concurrent users (not users, concurrent users), you’re bankrupt unless you’ve solved inference optimization.

Production Horror Stories: What’s Happening Now

Rather than fictional case studies, here’s what’s actually documented in the market right now:

Pattern 1: Inference Costs Outpace Development ROI

What’s documented: Deloitte’s Q4 2025 report found enterprise teams discovering “tens of millions” in monthly inference bills from multi-agent deployments. These aren’t pilot costs; these are production systems that shipped with inference cost modeling that didn’t account for:

- Retry loops on API timeouts

- Context reloads during hand-offs between agents

- Redundant tool calls for “safety” verification

- Long conversation histories bloating context windows

Real outcome: Projects that promised 8-10x ROI in Year 1 are discovering Year 1 ops costs alone exceed development budgets. Teams are forced to choose: (a) shut down the agent, or (b) rebuild the architecture to strip cost.

Lesson: Most founders measure inference cost after launch, when the architecture is locked. Measure token consumption on day 1 during development.

Pattern 2: Healthcare & Compliance Multiply Costs 3-5x

What’s documented: Industry reports from healthcare AI deployments note that compliance overhead (HIPAA logging, audit trails, encrypted storage, real-time monitoring) multiplies operational costs beyond raw inference. A support agent costing $10K/month in inference might cost $35K/month when you add compliance infrastructure.

The PwC and Deloitte reports both flag this: healthcare AI projects have the highest cancellation rates specifically because ops costs become unjustifiable once you factor in regulatory requirements.

Real outcome: Healthcare agents die not because the AI doesn’t work, but because the total cost of operations (inference + compliance) exceeds the value delivered.

Lesson: If you’re building for healthcare, budget 3-5x higher ops cost than you would for consumer applications. Factor this in before you commit to the architecture.

Pattern 3: Agentic Loops Are 5-25x Pricier Than Expected

What’s documented: LinkedIn posts from founders and LLM researchers throughout late 2025 consistently report the same pattern: agentic systems cost 5-25x more per task than non-agentic alternatives. The reason is straightforward: reasoning steps multiply token consumption. A simple classification task that costs $0.01 in a chat interface costs $0.10-0.50 as an agentic workflow (tool calls, verification, re-checking, context loading).

Real outcome: Teams that designed their agents to do multi-step reasoning per user request discover at scale that the cost structure is unviable. They either pivot to simpler, non-agentic logic (losing competitive advantage) or shut down the project.

Lesson: Agentic reasoning is a feature tax. Know the multiplier before you build it into your core loop.

The Escape Arsenal: 2026 Cost-Killer Techniques (Ranked by Impact)

If you’re past the demo stage and seeing cost overruns, here’s what actually works. I’m ranking by ROI in actual deployments, not theory.

#1: Speculative Decoding (2-3x throughput, 30% less compute)

What it is: A smaller, faster model predicts the next tokens. A larger model validates in parallel. If they agree, you’re done. If not, the large model corrects. Net result: 2-3x throughput with 30-40% less compute.

Model Cost Breakdown (Source: TechAhead AI Team)

Real result: A fintech client using Llama 2 70B with speculative decoding dropped inference cost from $15K/month to $9K/month while improving latency from 8s → 3s per request.

Implementation time: 2-4 weeks

Break-even: 6-8 weeks

My take: This is the highest-impact, lowest-risk optimization. Do this first.
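The draft-then-verify loop described above can be sketched with toy models. This is a greedy illustration only: `draft_model` and `target_model` are hypothetical stand-ins that map a token list to the next token, and a production system would verify all drafted positions in one batched forward pass of the large model and usually applies a probabilistic acceptance rule rather than exact match.

```python
from typing import Callable, List, Tuple

NextToken = Callable[[List[int]], int]


def speculative_decode(prompt: List[int], draft: NextToken, target: NextToken,
                       max_new_tokens: int, k: int = 4) -> Tuple[List[int], int]:
    """Greedy speculative decoding. Returns (tokens, number of verification rounds)."""
    tokens = list(prompt)
    goal = len(prompt) + max_new_tokens
    rounds = 0
    while len(tokens) < goal:
        # 1. The cheap draft model proposes up to k tokens, one after another.
        proposal: List[int] = []
        for _ in range(min(k, goal - len(tokens))):
            proposal.append(draft(tokens + proposal))
        # 2. The target model checks each proposed position. This toy calls it
        #    per position but counts the whole round as one large-model pass.
        rounds += 1
        accepted: List[int] = []
        for guess in proposal:
            truth = target(tokens + accepted)
            accepted.append(truth)
            if guess != truth:
                break  # 3. First mismatch: keep the target's token, drop the rest.
        tokens.extend(accepted)
    return tokens, rounds


# Toy stand-ins: the target counts upward mod 10; the draft is wrong after a 4.
def target_model(ctx: List[int]) -> int:
    return (ctx[-1] + 1) % 10


def draft_model(ctx: List[int]) -> int:
    return 0 if ctx[-1] == 4 else (ctx[-1] + 1) % 10


if __name__ == "__main__":
    out, rounds = speculative_decode([0], draft_model, target_model, max_new_tokens=12)
    print(out, f"in {rounds} verification rounds instead of 12 sequential steps")
```

The output is identical to what the large model would produce on its own; the gain comes from needing fewer sequential large-model passes, and it shrinks as the draft model disagrees more often.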

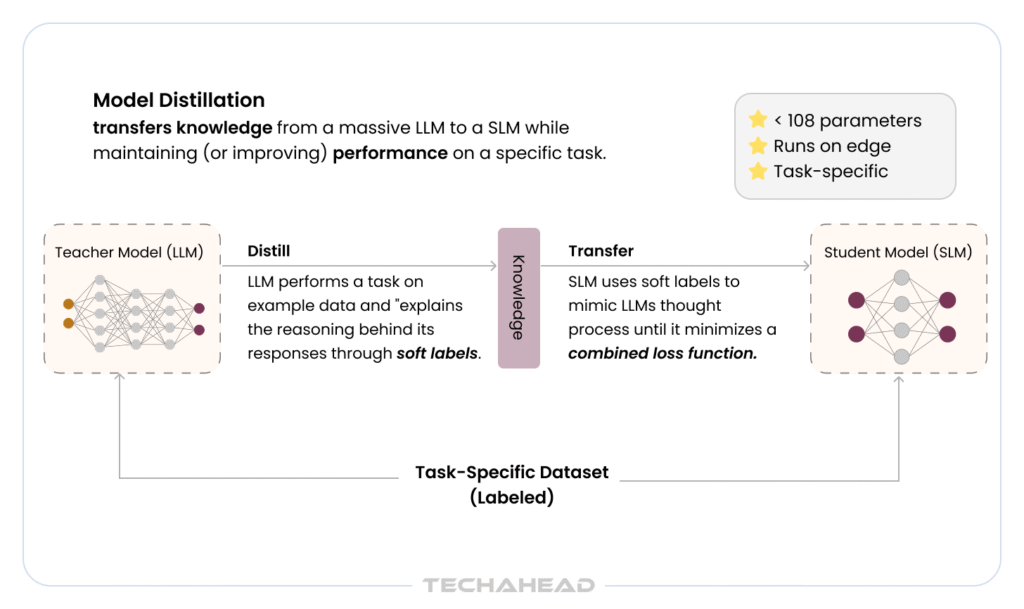

#2: Distilled Models (Sub-30B, break-even in 3 months)

What it is: Train a smaller model to mimic a larger model’s behavior (knowledge distillation). You lose 5-10% accuracy but gain 5-8x cost reduction.

Real result: A healthcare client distilled GPT-4 behavior into a 13B model. Year 1 cost went from $400K → $120K. Three-month payback on distillation effort.

Implementation time: 6-12 weeks (depends on your data quality)

Break-even: 3-6 months

My take: Only do this if you have clean training data and control over your accuracy requirements. If you need production-grade accuracy, this is riskier.

#3: Batch + Cache Optimization (50% idle GPU waste reduction)

What it is: Instead of running inference on-demand, batch requests. Process them together every 30 seconds. Cache outputs for repeated queries. Both reduce idle GPU time.

Real result: A recommendation engine client batched requests instead of serving real-time. Latency went from 2s → 30-60s, but costs dropped from $50K/month → $25K/month. Users didn’t care (recommendation page was already cached).

Implementation time: 2-3 weeks

Break-even: Immediate (lower cost, same revenue)

My take: This is best for non-real-time use cases (recommendations, batch processing, post-purchase engagement). Don’t batch if your users need instant response.
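A minimal sketch of the batch-plus-cache idea, assuming a hypothetical `run_batch` function that takes a list of queries and returns one answer per query. The class name and the whitespace and case normalization are illustrative choices, not a prescribed design.

```python
from typing import Callable, Dict, List, Optional


class BatchingCache:
    """Queue uncached queries and answer them in one batched model call."""

    def __init__(self, run_batch: Callable[[List[str]], List[str]]):
        self.run_batch = run_batch          # hypothetical batched inference function
        self.cache: Dict[str, str] = {}
        self.pending: List[str] = []

    @staticmethod
    def _key(query: str) -> str:
        # Normalize so trivially different phrasings share one cache entry.
        return " ".join(query.lower().split())

    def submit(self, query: str) -> None:
        """Queue a query unless it is already cached or already waiting."""
        key = self._key(query)
        if key not in self.cache and key not in self.pending:
            self.pending.append(key)

    def flush(self) -> int:
        """Run one batched call for everything pending; returns the batch size."""
        if not self.pending:
            return 0
        batch, self.pending = self.pending, []
        for key, answer in zip(batch, self.run_batch(batch)):
            self.cache[key] = answer
        return len(batch)

    def get(self, query: str) -> Optional[str]:
        """Cached answer, or None if the query has not been processed yet."""
        return self.cache.get(self._key(query))
```

In use, `flush()` would run on a timer (every 30 seconds in the example above), and the cache would need an expiry policy that this sketch leaves out.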

#4: On-Prem Shift (Hardware amortizes long-term)

What it is: Buy your own GPUs instead of renting from cloud providers. Requires CAPEX instead of OPEX.

Real math:

- Cloud: $3K/month per GPU = $36K/year

- On-prem: $8K hardware cost + $500/month operations = $6K/year in running costs once the hardware has paid for itself (after about 2 years)

- Break-even: 18-24 months

Real result: A fintech client bought 10 H100 GPUs for $80K. Their 18-month cost: $90K (hardware + ops). Cloud would have been $540K. They’re profitable after 24 months.

Implementation time: 2-3 months (procurement, setup, deployment, redundancy)

Break-even: 18-24 months

My take: Only do this if you have: (1) an engineering team to manage infrastructure, (2) committed traffic (not speculative), (3) 24+ month runway. Don’t do this if you’re Series A and uncertain about product-market fit.
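Break-even is worth computing with your own numbers rather than taking on faith. A sketch under stated assumptions: costs are per GPU, and `extra_fixed_cost` is a hypothetical parameter for one-off costs the per-GPU figures above do not itemize (setup, staffing, redundancy, power). On the raw per-GPU figures alone the hardware pays for itself within months, so the 18-24 month estimate presumably folds in costs of that kind.

```python
import math


def break_even_months(hardware_cost: float, onprem_monthly: float,
                      cloud_monthly: float, extra_fixed_cost: float = 0.0) -> int:
    """First whole month in which cumulative on-prem spend is no more than cloud spend."""
    monthly_saving = cloud_monthly - onprem_monthly
    if monthly_saving <= 0:
        raise ValueError("on-prem never breaks even if it costs more per month")
    return math.ceil((hardware_cost + extra_fixed_cost) / monthly_saving)


def cumulative_costs(months: int, hardware_cost: float, onprem_monthly: float,
                     cloud_monthly: float, extra_fixed_cost: float = 0.0) -> tuple:
    """(on_prem_total, cloud_total) after `months` months."""
    on_prem = hardware_cost + extra_fixed_cost + onprem_monthly * months
    return on_prem, cloud_monthly * months


if __name__ == "__main__":
    print(break_even_months(8_000, 500, 3_000))                           # hardware only
    print(break_even_months(8_000, 500, 3_000, extra_fixed_cost=37_000))  # with extras
```

The useful exercise is filling in `extra_fixed_cost` honestly, since that term, not the GPU sticker price, decides whether the shift makes sense within your runway.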

#5: Loop Pruning (70% token reduction, guaranteed)

What it is: Audit your agent’s decision tree. Find where it loops. Find where it retries. Find where it over-fetches context. Delete it.

Real example: My fraud detection agent was calling check_account_history twice per request (once to analyze, once to verify). Removing the redundant call cut tokens by 30%. Agents were retrying on timeout errors instead of failing gracefully; fixing error handling cut another 40%.

Total reduction: 70% of token consumption

Real result: $15K/month → $4.5K/month at 500 users

Implementation time: 1-2 weeks (careful instrumentation, testing)

Break-even: Immediate

My take: This is the cheapest, highest-impact optimization. Do this before you spend on speculative decoding or distillation. Most agents have 50-70% wasted tokens in loops, retries, and redundant calls.
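Both fixes from the example above (reusing a duplicate tool call and capping retries on timeouts) can live in one thin wrapper around tool execution. A minimal sketch: `ToolRunner` and its parameters are hypothetical names, one instance is meant to serve a single request, and keyword arguments are assumed to be hashable.

```python
import time
from typing import Any, Callable, Dict, Tuple


class ToolRunner:
    """Per-request tool wrapper: dedupes identical calls and caps retries."""

    def __init__(self, tools: Dict[str, Callable[..., Any]],
                 max_retries: int = 1, backoff_seconds: float = 0.0):
        self.tools = tools
        self.max_retries = max_retries
        self.backoff_seconds = backoff_seconds
        self._results: Dict[Tuple, Any] = {}   # cache lives for one request only
        self.calls_made = 0

    def call(self, name: str, **kwargs: Any) -> Any:
        key = (name, tuple(sorted(kwargs.items())))
        if key in self._results:               # redundant call: reuse the earlier result
            return self._results[key]
        last_error = None
        for attempt in range(self.max_retries + 1):
            try:
                self.calls_made += 1
                result = self.tools[name](**kwargs)
                self._results[key] = result
                return result
            except TimeoutError as exc:        # bounded retry, then fail gracefully
                last_error = exc
                time.sleep(self.backoff_seconds * (attempt + 1))
        return {"error": f"{name} unavailable", "detail": str(last_error)}
```

Returning a structured error instead of raising lets the agent produce a degraded answer rather than spinning in a retry loop, and `calls_made` gives a cheap before-and-after metric for the audit.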

The Pre/Post Comparison: What Actually Happened

Here’s what happens when you implement these techniques in the right order:

Starting point: Fintech agent, 500 users, $15K/month

Week 2 (Loop Pruning): $4.5K/month (-70% tokens)

Week 6 (Speculative Decoding): $3K/month (-33% compute)

Month 3 (Batch + Cache): $1.8K/month (-40% idle waste)

Total reduction: 88% cost reduction (from $15K → $1.8K/month)

Annual savings: $158K

Engineering time: 8 weeks, 2 engineers

ROI: 19x return on engineering time
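The stage-by-stage numbers compound multiplicatively, which is why three cuts of 70%, 33%, and 40% add up to 88% rather than something over 100%. A quick check, using only the figures above:

```python
from functools import reduce

baseline = 15_000                  # $/month at 500 users
reductions = [0.70, 1 / 3, 0.40]   # loop pruning, speculative decoding, batch + cache

# Each step removes a fraction of whatever cost is left after the previous step.
final = reduce(lambda cost, cut: cost * (1 - cut), reductions, baseline)
total_reduction = 1 - final / baseline
annual_savings = (baseline - final) * 12

if __name__ == "__main__":
    print(f"final: ${final:,.0f}/month, reduction: {total_reduction:.0%}, "
          f"annual savings: ${annual_savings:,.0f}")
```

The ordering matters for effort, not for the end result: loop pruning comes first because it is cheapest, and every later optimization then applies to a smaller bill.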

2027 Horizon: The Inference Wars Ahead

This is speculation, but informed speculation.

What’s coming:

- Edge inference becomes mandatory. Running all inference in centralized data centers will be too expensive. Companies will shift to edge TPUs, on-device models, and distributed inference. Agents that can’t run locally will become obsolete.

- Hallucination becomes a cost issue. Models that hallucinate require human review, which costs money. Accuracy will become explicitly priced. Lower accuracy = lower inference cost, but higher review cost. The tradeoff gets brutal.

- Specialized models explode. Instead of one foundational model for everything, companies will deploy task-specific models. Higher specialization = lower inference cost (smaller models, fewer tokens). Generalist models will become expensive luxury items.

- Energy becomes the primary constraint. Not hardware, not model quality. Energy. Companies in regions with cheap energy will have structural competitive advantages.

Founder Fightback: Slash Your Costs Today

Here’s my playbook. It cost us $500K to learn. You don’t have to repeat it.

Step 1 (This week): Measure token consumption per request. You probably don’t know. Use OpenAI’s API logs or add logging to your inference calls. Find your baseline.

Step 2 (This week): Find your loops. Where does your agent call the same tool twice? Where does it retry? Where does it re-check? Kill it.

Step 3 (Week 2): Audit context size. How much unnecessary context is your agent carrying? Cut it by 50%. Measure accuracy impact. If it’s <5%, ship the cut.

Step 4 (Week 3): Implement caching for repeated queries. Most agent questions repeat. Cache aggressively.

Step 5 (Week 4): If you’re self-hosting, implement speculative decoding. If you’re on API, evaluate batching.

Step 6 (Month 2): Model sizing. Are you using a 70B model for tasks a 13B could handle? Test with smaller models. Don’t optimize for benchmarks; optimize for actual user tasks.

Do these six things in order. You’ll cut costs 50-80%. No hand-waving, no theories. Real money.
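Steps 1 and 2 need nothing more than a small ledger wrapped around your inference calls. A sketch, assuming each model response exposes a usage record with `prompt_tokens` and `completion_tokens` fields (the shape used by OpenAI’s chat completions API; adapt the keys for other providers). `TokenLedger` and its method names are illustrative.

```python
from collections import Counter, defaultdict
from statistics import mean
from typing import Optional


class TokenLedger:
    """Accumulates token usage per request so a baseline can be measured."""

    def __init__(self) -> None:
        self.tokens = defaultdict(int)           # request_id -> total tokens
        self.tool_calls = defaultdict(Counter)   # request_id -> tool name counts

    def record(self, request_id: str, usage: dict, tool: Optional[str] = None) -> None:
        # `usage` is assumed to look like {"prompt_tokens": ..., "completion_tokens": ...}
        self.tokens[request_id] += (usage.get("prompt_tokens", 0)
                                    + usage.get("completion_tokens", 0))
        if tool:
            self.tool_calls[request_id][tool] += 1

    def baseline(self) -> float:
        """Average tokens per request (Step 1)."""
        return mean(self.tokens.values())

    def repeated_tools(self) -> dict:
        """Tools called more than once within a single request (Step 2)."""
        return {rid: [t for t, n in calls.items() if n > 1]
                for rid, calls in self.tool_calls.items()
                if any(n > 1 for n in calls.values())}
```

Calling `record()` after every model call gives you the baseline from Step 1, and `repeated_tools()` is a first pass at the loop hunt in Step 2.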

The Final Trap: Knowing vs. Doing

The worst part about inference cost isn’t that it’s invisible. It’s that most founders know it’s a problem and do nothing until they’re deep in production.

Why? Because optimizing inference is hard work. It’s not glamorous. It requires instrumentation, measurement, and unglamorous engineering discipline.

It’s easier to hope better models will solve it. They won’t.

It’s easier to assume “we’ll optimize later.” You won’t.

The teams winning in 2026 aren’t the ones with the most sophisticated models. They’re the ones that measured inference cost on day 1, budgeted for 5-25x agentic multiplier vs. chat, and built with constraints in mind.

Your inference economics breaking at scale isn’t bad luck. It’s predictable. And preventable.

The question is: will you measure it early, or discover it when the burn rate kills the project?

Connect with our team at TechAhead to find out more about AI agent economics and how your business can cut costs without compromising on performance.

Inference is the per-query cost of running predictions at runtime, recurring forever. Training is one-time. Inference scales linearly with user volume and request complexity.

Between 500-2K concurrent users, most projects shift from cloud APIs to self-hosted GPUs. Cost jumps 3-5x overnight. By 5-10K users, margins collapse entirely.

Agent loops, tool calls, context reloads, and retries multiply token consumption 5-50x. A simple response is 800 tokens; an agentic task is 10K-50K tokens per request.

Smaller models can cut costs, but with an accuracy tradeoff. Knowledge distillation cuts costs 75% but loses 5-10% accuracy. Only viable if your application tolerates degraded performance.

Loop pruning: audit your agent for redundant tool calls and retries. Most agents waste 50-70% of tokens here. 1-2 weeks work, immediate 50-70% reduction.