
Worldwide IT spending was estimated at around $5.06 trillion in 2024 and is expected to surpass $8 trillion before the end of the decade. Yet despite the scale of investment, most organizations lack a coherent framework for making the software architecture, methodology, vendor, and cost decisions that determine whether that spending delivers competitive advantage or simply sustains the status quo.

This software development guide addresses that gap. It is not a primer on how to write code. It is a structured decision-support resource for organizations that need to evaluate their options, align their teams, and make the right calls on technology, process, and partners.

Key Takeaways

- Enterprise IT spending is projected to reach $6.15 trillion in 2026, according to Gartner — yet most organizations still lack a coherent framework for architecture, methodology, and vendor decisions.

- McKinsey estimates that tech debt amounts to 20 to 40% of the entire technology estate, making legacy modernization the highest-ROI software investment most enterprises can make.

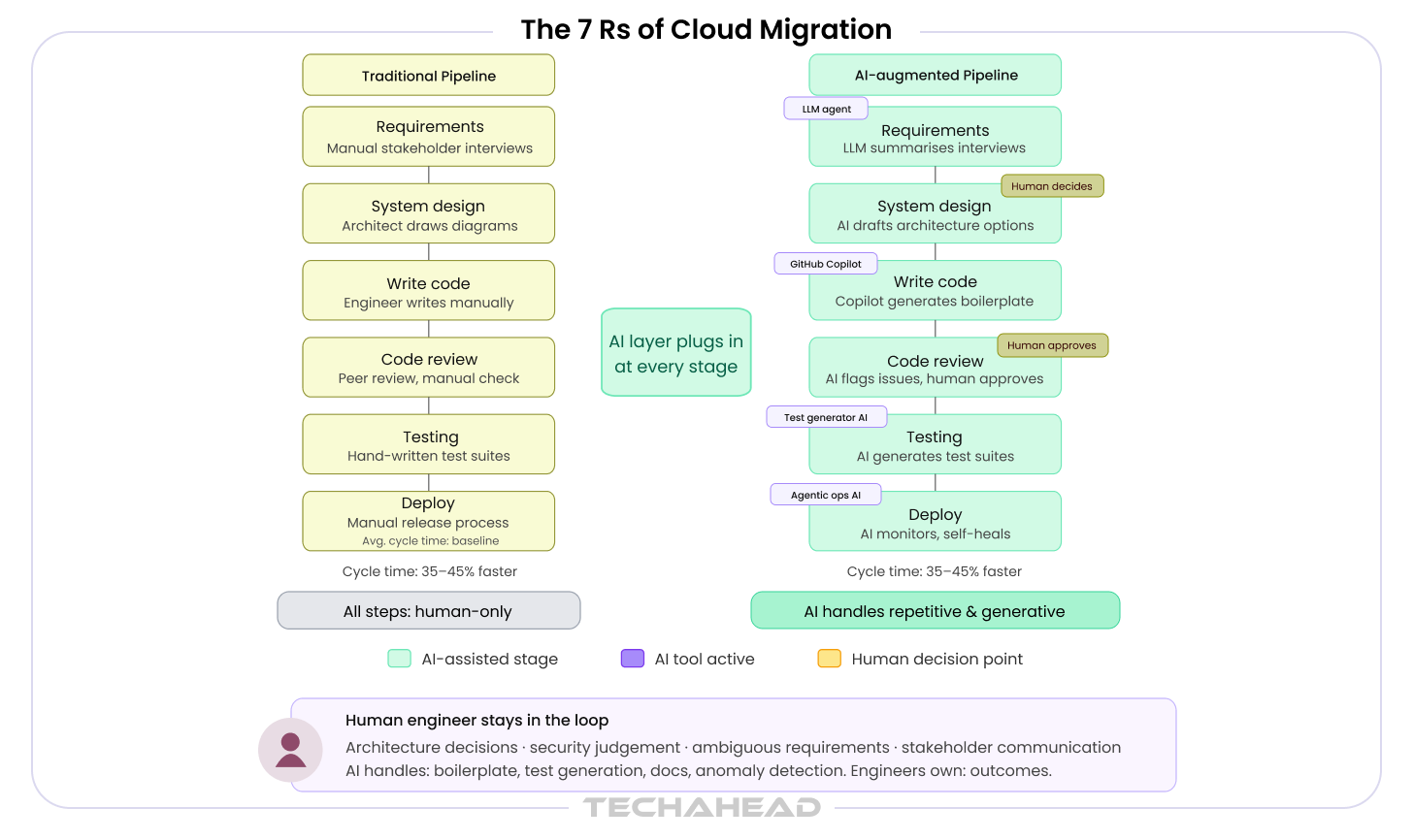

- Developers using AI coding assistants complete boilerplate, unit test, and documentation tasks 35–45% faster, a productivity shift that is already compressing enterprise software development timelines.

- The right SDLC model is determined by your project context, not industry preference. Agile with embedded DevOps fits most enterprise product delivery.

- Selecting a software development partner on hourly rate is the single most reliable predictor of budget overruns.

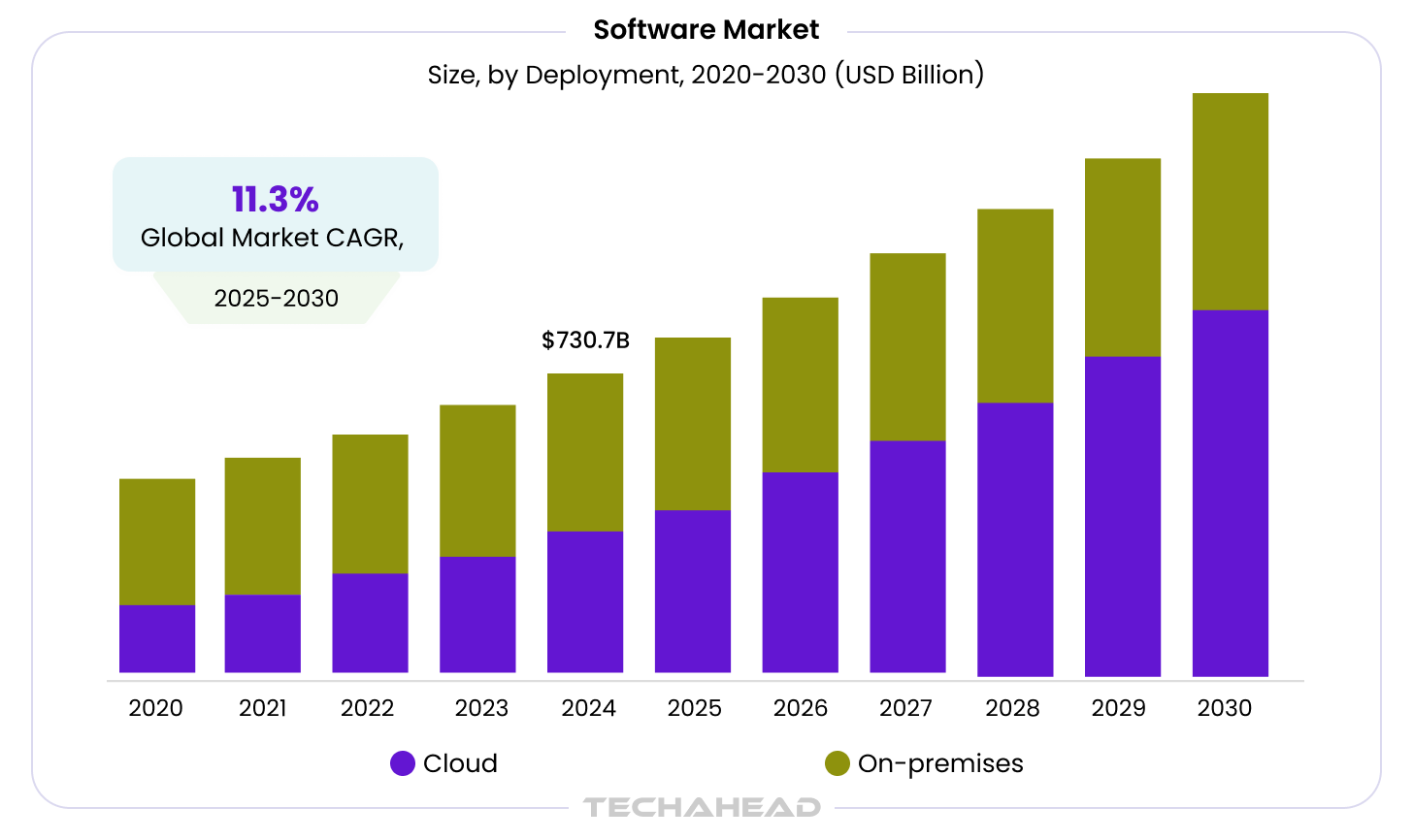

With the global software market expected to reach 397.31 billion by 2030, at a projected 11.3% CAGR from 2025 to 2030, organizations need to understand how to lay the foundation for software development. The global demand for custom software development services is driven by the need for businesses to innovate, automate operations, and differentiate their products in a competitive market.

What follows covers the full software development life cycle: from system design and architecture patterns to DevOps practices, legacy modernization, cost planning, and the practical impact of AI on how enterprise software solutions get built in 2026.

TechAhead is an AI-native application and enterprise software development company and an OpenAI Services Partner, with 16+ years of delivery experience across web, mobile, and AI-powered platforms for global enterprises. The perspective in this guide reflects that experience. For information on our software development services, visit our services page.

What Is Software Development? The Enterprise Definition

Ask ten experienced developers what developing software means and you will get ten overlapping but incomplete answers. For the leaders making investment decisions, it is worth establishing a working definition that reflects enterprise reality rather than classroom theory.

Beyond Writing Source Code: What Enterprise Software Development Actually Involves

Enterprise software development is the end-to-end process of designing, building, deploying, and evolving software systems that support critical business functions. This is fundamentally different from building a personal app or a startup MVP, not because the code is harder, but because the stakes, constraints, and complexity are categorically different.

Custom software development means building systems tailored to your specific business logic, workflows, and data models. Off-the-shelf products and SaaS platforms offer speed and standardization, but they impose process compromises — and in regulated, competitive, or high-complexity environments, those compromises compound into structural disadvantages over time.

Software has moved from an operational support function to a primary competitive differentiator. For most enterprises, the question is not whether to invest in software — it is how to make those investments produce outcomes rather than just output.

The Core Components of a Software Development Project

Every enterprise software project, regardless of methodology or technology stack, operates across six functional layers:

| Development Layer | What It Covers |
| --- | --- |
| Requirements & Scoping | Business case, stakeholder alignment, functional and non-functional specifications, PRD/BRD |
| Architecture & Design | System design, database schema, API contracts, technology stack selection, security model |
| Engineering & Build | Frontend, backend, API, database development; code standards; peer review |
| Testing & QA | Unit, integration, regression, performance, and security testing; QA gates; acceptance criteria |
| Deployment & DevOps | CI/CD pipelines, infrastructure provisioning, release management, rollback protocols |
| Maintenance & Modernization | Bug triage, performance monitoring, feature evolution, technical debt management |

Understanding these layers matters because cost, risk, and quality issues in enterprise projects are almost never evenly distributed. They concentrate in the gaps between layers, the handoff from requirements to architecture, from development to QA, from QA to production. A rigorous custom software development engagement manages these interfaces, not just the individual phases.

The Software Development Lifecycle (SDLC): A Complete Breakdown

The typical SDLC phases include planning, analysis, design, implementation, software testing, deployment, and maintenance, which can run sequentially or in parallel depending on the project.

The SDLC provides the structural framework for how software gets built. For enterprise teams, it serves a second purpose: it is the audit tool for identifying where your current software development process breaks down.

The 7 Phases of the SDLC (What Happens in Each)

- Phase 1 — Planning & Feasibility: Define the business goals, estimate resource & project requirements, identify risks, and confirm organizational readiness. Projects that skip this phase rarely fail at the code level — they fail here.

- Phase 2 — Requirements Analysis: Translate business objectives into functional and non-functional specifications. Distinguish between what stakeholders ask for and what the system needs to do. Product Requirements Documents (PRDs) and Business Requirements Documents (BRDs) formalize this software requirements specification layer.

- Phase 3 — System Design: Architecture decisions happen here — high-level system design, database schema, API contracts, technology stack selection, security model. This is the most consequential phase for long-term cost.

- Phase 4 — Implementation: Development of frontend, backend, API, and database layers against agreed specifications. Code standards, peer review, and documentation standards determine the quality of what gets built. Adhering to industry standards ensures that software remains maintainable, scalable, and secure.

- Phase 5 — Testing & QA: Quality assurance covers unit, integration, regression, performance, and security testing. QA gates prevent defects from escaping to production. Testing should also confirm that the application runs on every operating system it is meant to support. Shift-left testing integrates QA earlier in the cycle, catching defects sooner and reducing the cost of remediation.

- Phase 6 — Deployment: CI/CD pipelines, staging environments, release management, and rollback protocols bring tailored solutions to production. Zero-downtime deployment strategies (blue-green, canary) protect business continuity as well as accelerate delivery.

- Phase 7 — Maintenance & Evolution: Post-launch bug triage, performance monitoring, security patching, feature backlog management, and modernization cycles are necessary for maintaining software. Budget 15 to 20 percent of the initial build cost annually for ongoing maintenance.
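The Phase 7 budgeting guideline is simple arithmetic. As a minimal sketch, the annual range can be computed as below. The function name and the $500,000 build cost are hypothetical and chosen only for illustration; the 15 to 20 percent rates come from the guideline above.

```python
def annual_maintenance_range(initial_build_cost: float,
                             low_rate: float = 0.15,
                             high_rate: float = 0.20) -> tuple[float, float]:
    """Return the (low, high) annual maintenance budget.

    The default rates mirror the 15-20% guideline in the text; the helper
    itself is illustrative, not a standard formula.
    """
    if initial_build_cost < 0:
        raise ValueError("initial_build_cost must be non-negative")
    return (initial_build_cost * low_rate, initial_build_cost * high_rate)


# Hypothetical example: a system that cost $500,000 to build.
low, high = annual_maintenance_range(500_000)
print(f"Plan for ${low:,.0f} to ${high:,.0f} per year")
```

Treat the result as a planning range rather than a quote: the right rate for a given system depends on its age, complexity, and compliance burden.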

SDLC Models: Which One Fits Your Enterprise?

Different software development models, such as Waterfall, Agile, and Spiral, dictate how teams sequence and govern the lifecycle, impacting project workflows and outcomes.

The software engineering model you choose determines how the seven phases are sequenced, how decisions are made, and how teams are organized.

| Model | Best For | Typical Timeline | Key Risk |
| --- | --- | --- | --- |
| Waterfall | Compliance-heavy, fixed-scope projects (government, regulated industries) | 12–24+ months | Inflexibility when requirements change mid-project |
| Agile (Scrum/Kanban) | Evolving product requirements, high stakeholder involvement | 2–4 week sprints, ongoing | Scope creep without strong product ownership |
| DevOps | Continuous delivery environments, product-led engineering teams | Continuous | Organizational resistance to shared ownership model |
| Scaled Agile (SAFe) | Multi-team enterprise coordination across large programs | Program increments (8–12 weeks) | Process overhead if applied to small teams unnecessarily |
| Spiral Model | High-risk, complex systems (defense, aerospace, large enterprise platforms) | Iterative model cycles (each loop can span weeks–months) | Complexity in management and cost overruns due to continuous risk analysis |
| V-Model (Verification & Validation) | Systems requiring strict testing alignment (healthcare, embedded software) | Similar to Waterfall (12–24+ months) | Rigid structure, expensive to change late-stage |
| Incremental Model | Large systems that can be delivered in parts with early value | Multiple releases over months | Integration challenges between increments |
| Rapid Application Development (RAD) | UI-heavy applications, prototypes, fast validation cycles | 2–6 months | Weak scalability and technical debt if rushed |
| Lean Software Development | Efficiency-driven teams focused on waste reduction | Continuous | Misinterpretation of “lean” leading to under-documentation |

Agile methodologies emphasize iterative development, allowing teams to adapt to changing requirements through short cycles called sprints, which ensure continuous feedback and improvement.

On the other hand, the Lean methodology focuses on maximizing customer value while eliminating waste such as unnecessary features or excessive documentation.

The Waterfall model is a linear software development methodology that progresses through a series of defined phases. These phases include requirements, design, implementation, testing, and maintenance, making it suitable for projects with well-defined requirements.

The Spiral model combines elements of both iterative and waterfall approaches. It focuses on risk assessment and allowing for repeated refinement of the software through multiple iterations, making it ideal for large and complex projects.

Clean Code & SOLID Principles include various standards, such as:

- DRY (Don’t Repeat Yourself)

- KISS (Keep It Simple, Stupid)

- SOLID design principles to ensure code is readable and modular
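To make these principles concrete, here is a small sketch. The invoice scenario and every name in it (`TaxPolicy`, `FlatRateTax`, `invoice_total`) are hypothetical. It illustrates only two of the ideas: DRY (the tax rule lives in one place) and dependency inversion, the "D" in SOLID (the billing function depends on an abstraction rather than a concrete class).

```python
from typing import Protocol


class TaxPolicy(Protocol):
    """Abstraction the billing code depends on (dependency inversion)."""

    def tax_for(self, amount: float) -> float: ...


class FlatRateTax:
    """One concrete policy; the rate is defined in exactly one place (DRY)."""

    def __init__(self, rate: float) -> None:
        self.rate = rate

    def tax_for(self, amount: float) -> float:
        return amount * self.rate


def invoice_total(line_items: list[float], policy: TaxPolicy) -> float:
    """Sum the line items and apply whichever tax policy is supplied."""
    subtotal = sum(line_items)
    return subtotal + policy.tax_for(subtotal)


# Hypothetical usage: two line items taxed at a flat 10%.
total = invoice_total([100.0, 50.0], FlatRateTax(rate=0.10))
print(round(total, 2))
```

Swapping in a different policy (for example, a tiered rate) would require a new class but no change to `invoice_total`, which is the practical payoff of keeping the dependency abstract.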

TechAhead Approach

We default to Agile with integrated DevOps practices for the majority of enterprise engagements. Where compliance requirements dictate sequential delivery, like healthcare, fintech, and government, we choose the methodology that serves the business context.

A good example of this approach is our project reimagining a digital streaming platform. From engineering a cross-platform streaming ecosystem that spans LG, Samsung, Android TV, Roku, and web interfaces, to implementing real-time verification and subscription management features, we transformed the experience for both our clients and their users.

Our engineering work on this project helped Agora TV achieve a technical breakthrough and measurable results, such as:

- 96% retention across multi-platform sessions

- 152% increase in content creator applications

- 87% reduction in authentication abandonment rate

- 134% growth in subscription conversions

Read our detailed guide on applying Agile development methodology to enterprise product delivery, including sprint structuring and backlog management for complex organizations.

Types of Software Development (Choosing the Right Approach)

One of the most consequential decisions in enterprise software is made before the first line of code is written: build, buy, or subscribe. Getting this wrong sets a cost and flexibility ceiling that can take years to break through.

Custom vs. Off-the-Shelf vs. SaaS: The Build-vs-Buy Decision

Custom software is built for your specific business logic. You own the IP, you control the roadmap, and the system is designed around your workflows rather than forcing your workflows to fit someone else’s product. The upfront investment is higher, but for complex or differentiating use cases, the total cost of ownership over five to ten years typically favors custom solutions.

Off-the-shelf software offers fast deployment and lower initial cost but imposes vendor dependency, limited customization, and a roadmap you do not control. For non-differentiating functions, such as accounting, HR administration, and basic CRM, it remains the sensible default.

SaaS platforms sit between the two: subscription-based, rapidly deployable, and continuously updated by the vendor. The trade-off is customization depth and data sovereignty. For enterprises in regulated industries or those handling sensitive proprietary data, SaaS architectures require careful evaluation of data residency, audit controls, and exit options.

A detailed comparison of SaaS vs traditional software is available in our dedicated analysis.

Choose custom software when: your workflows are genuinely unique, software is a competitive differentiator, compliance requires fine-grained data control, or existing SaaS platforms force you to compromise on core business processes.
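The total-cost-of-ownership argument above can be checked with a simple cumulative cost comparison. The sketch below is illustrative only: all figures are hypothetical, the function names are invented for this example, and it ignores discounting, migration effort, and price changes that a real business case would include.

```python
from typing import Optional


def cumulative_cost(upfront: float, annual: float, years: int) -> float:
    """Total spend after `years`: one-time cost plus recurring cost."""
    return upfront + annual * years


def break_even_year(custom_upfront: float, custom_annual: float,
                    saas_upfront: float, saas_annual: float,
                    horizon: int = 10) -> Optional[int]:
    """First year in which custom is no more expensive than SaaS, else None."""
    for year in range(1, horizon + 1):
        custom = cumulative_cost(custom_upfront, custom_annual, year)
        saas = cumulative_cost(saas_upfront, saas_annual, year)
        if custom <= saas:
            return year
    return None


# Hypothetical figures, for illustration only.
year = break_even_year(custom_upfront=600_000, custom_annual=100_000,
                       saas_upfront=50_000, saas_annual=250_000)
print(year)
```

If the function returns `None`, custom never catches up within the horizon, which under these simplified assumptions points toward buying or subscribing.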

Web Application Development

Enterprise web applications range from internal portals and workflow tools to customer-facing platforms handling millions of transactions. Key architectural decisions: frontend framework selection (React, Angular, Vue, each with different enterprise trade-offs), API architecture (REST, GraphQL, event-driven), scalability model, and authentication/authorization standards (SAML, OAuth 2.0, OpenID Connect).

Mobile Application Development

The enterprise mobile development decision has narrowed to native versus cross-platform. Native (Swift for iOS, Kotlin for Android) delivers peak performance and direct access to device capabilities but doubles the development and maintenance investment. Cross-platform frameworks, like Flutter, React Native, and .NET MAUI for Microsoft-ecosystem enterprises, have closed the performance gap significantly and now represent the pragmatic default for most enterprise mobile projects. Key enterprise requirements: MDM integration, offline capability, security measures, and app store governance.

API and Integration Development

Enterprise technology stacks are heterogeneous by nature: a mix of existing systems, including legacy and commercial platforms, and custom applications that must exchange data and orchestrate workflows reliably. API and integration development is frequently the hidden cost driver in enterprise software projects: it is underestimated in scope, underinvested in architecture, and the source of the majority of post-launch operational problems. RESTful APIs, GraphQL, event-driven architectures via message brokers (Kafka, RabbitMQ), and iPaaS platforms (MuleSoft, Boomi) each address different integration patterns and scale requirements.

AI-Powered Software Development

45% of software developers report over 10% productivity gains from AI. Moreover, it is projected that software developer roles will grow by 57% from 2025 to 2030, driven by AI. Hence, by 2030, AI-assisted productivity will be a baseline, not a differentiator.

Artificial intelligence (AI) tools are increasingly used in software development to generate new code, review and test existing code, and assist teams in continuously deploying new features. Generative AI can create code snippets and full functions from natural language prompts or code context, significantly speeding up the development process. Moreover, AI-powered tools can automate testing processes, allowing for more comprehensive coverage and quicker identification of potential issues in software code.

AI tools, such as GitHub Copilot, are increasingly used for code generation and testing, indicating a shift towards AI-native engineering in software development. AI solutions enhance the continuous integration/continuous delivery (CI/CD) pipeline by optimizing code changes and ensuring that new features are deployed without disrupting service.

As an OpenAI Services Partner, TechAhead builds enterprise applications that integrate OpenAI’s models and APIs directly into business workflows, from agentic automation pipelines to RAG-based knowledge retrieval systems.

The investment decision for AI-powered software varies significantly by complexity. For a full breakdown of what enterprise software projects actually cost by type, see our software development cost guide.

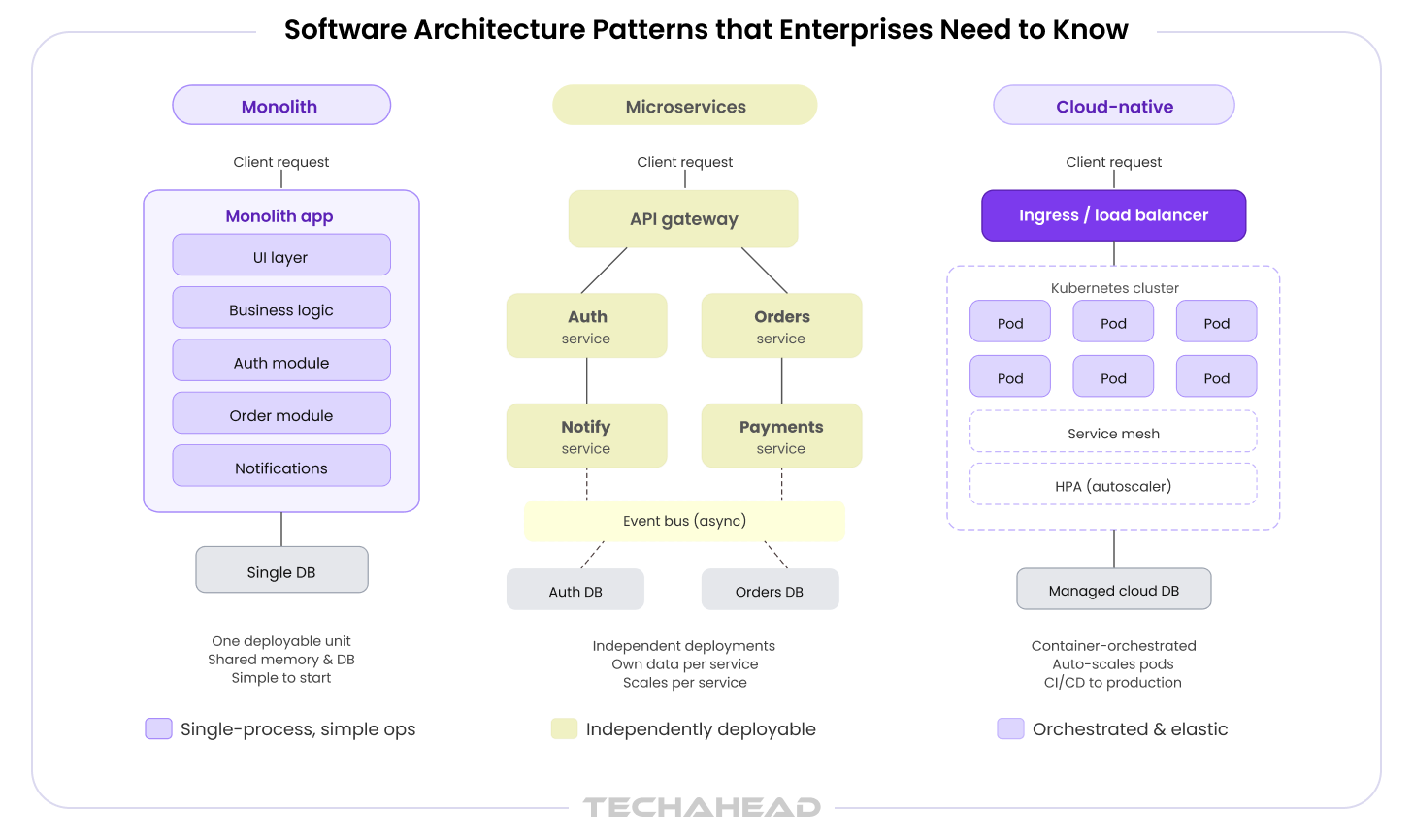

Software Architecture Patterns that Enterprises Need to Know

Architecture is the most financially consequential technical decision in software development. It determines long-term scalability, security posture, operational overhead, and the ease or difficulty of future modernization. Choosing the wrong architecture does not produce an immediate, visible failure; it produces compounding costs that become apparent two to three years into production.

Why Architecture Decisions Define Long-Term Software Cost

Three enterprise failure modes appear repeatedly in large-scale software engagements. First: monolithic architectures that cannot scale, requiring expensive re-architecture at the point of maximum business pressure. Second: microservices implementations that introduce operational complexity before teams are ready to manage distributed systems. Third: applications designed for on-premises infrastructure that were simply ‘lifted and shifted’ to the cloud without architectural rethinking.

The software architecture and design decisions made in Phase 3 of the SDLC will constrain or enable every subsequent investment. They deserve the same board-level attention as the business case that justified the project.

Monolithic Architecture: When It Still Makes Sense

A monolith — a single, tightly coupled codebase — is not inherently wrong. For early-stage products, small engineering teams (fewer than 10 developers), or applications with tightly interdependent business logic, a well-structured monolith is faster to build, easier to deploy, and simpler to reason about than a distributed system.

The problem is scale. As team size grows and product complexity increases, a monolith’s shared codebase becomes a coordination bottleneck. Deployments require the entire system. A change in one module can break another. Testing cycles lengthen. The point at which migration becomes necessary is typically around 30 to 50 engineers working on the same codebase, or when release frequency drops below what the business requires.

Microservices Architecture: Enterprise Implementation

Microservices decompose a system into independently deployable services, each owning a bounded domain of functionality. The architectural benefits are real: independent deployability, technology diversity per service, fault isolation, and horizontal scalability at the service level.

The operational requirements are equally real. Managing a microservices system requires a service mesh (Istio, Linkerd), API gateway, distributed tracing (Jaeger, OpenTelemetry), and a mature DevOps culture. Conway’s Law applies directly here: your architecture will mirror your organizational structure. Enterprises that adopt microservices without restructuring their teams typically reproduce the coordination problems of a monolith at the service boundary level.

Netflix decomposed its monolithic architecture into 700+ microservices to support 260 million subscribers, which is a perfect instance of what microservices enable at extreme scale. The full architectural breakdown is covered in our microservices architecture at Netflix analysis.

Cloud-Native Architecture: The Default for Modern Enterprise Software

Cloud-native applications leverage cloud computing benefits such as automated provisioning through infrastructure as code (IaC) and efficient resource utilization, enabling organizations to deliver applications faster and more reliably. The integration of cloud-native development with practices like DevOps and continuous integration emphasizes agility and scalability, allowing teams to respond quickly to changing business needs and market demands.

Cloud-native is a design philosophy, not a deployment location. It means building software that is containerized, dynamically orchestrated, designed for resilience, and delivered via automated pipelines. The core stack is Kubernetes for orchestration, Docker for containerization, Helm for package management, and GitOps practices for infrastructure management.

Platform engineering is the organizational complement to cloud-native architecture: building Internal Developer Platforms (IDPs) that standardize how teams provision, deploy, and operate cloud-native applications. Organizations with mature platform engineering capabilities deploy software 4 to 6 times more frequently than those without them.

For a comprehensive technical guide to cloud-native applications, including container strategy, Kubernetes architecture, and platform engineering patterns, see our dedicated resource.

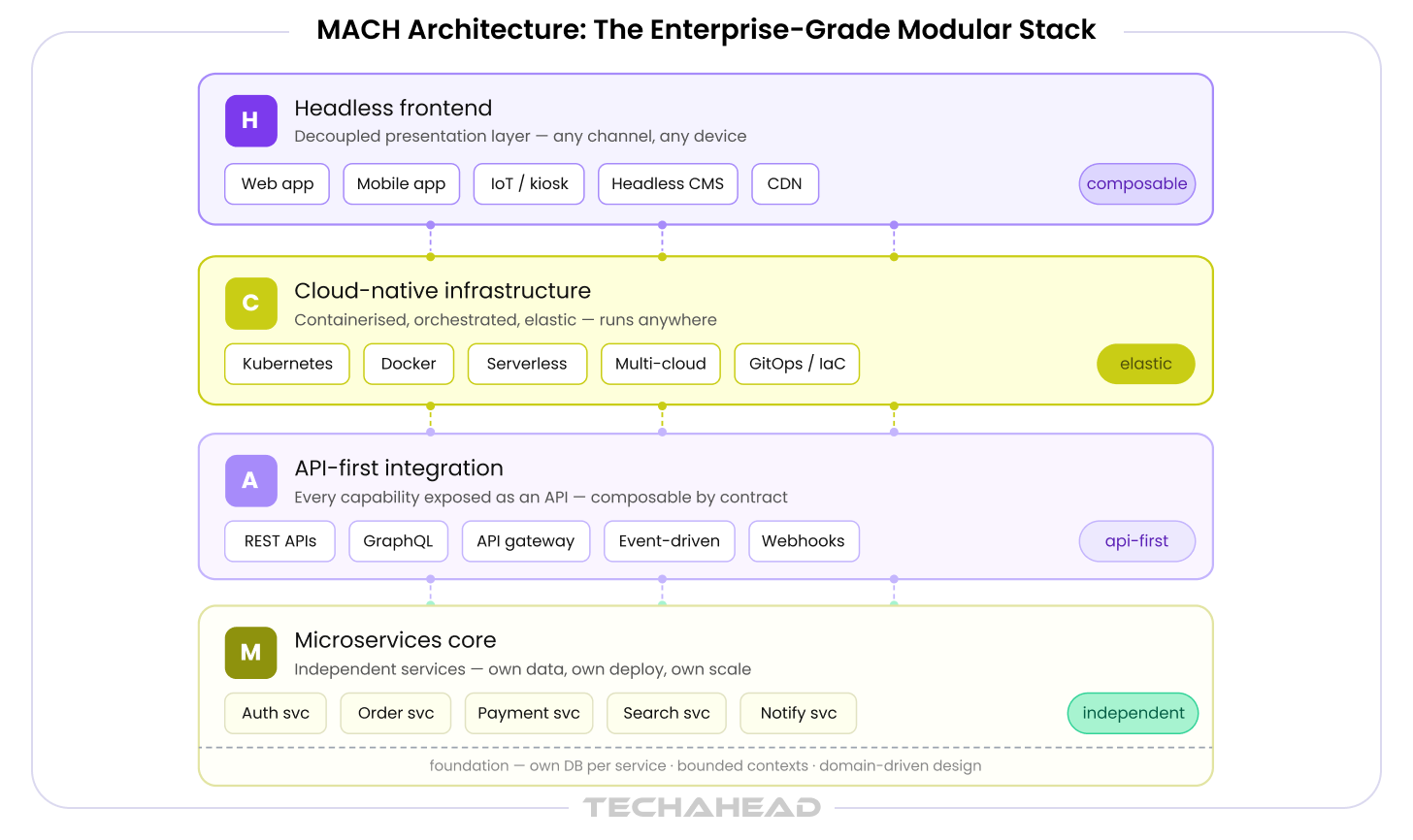

MACH Architecture: The Enterprise-Grade Modular Stack

MACH (Microservices, API-first, Cloud-native, Headless) is gaining significant enterprise adoption as organizations replace monolithic commerce, CMS, and ERP platforms with composable architectures. The core principle is best-of-breed assembly: each component of the stack is independently selected, deployed, and evolved. No vendor lock-in. No all-or-nothing migration decisions.

The operational requirement for MACH is strong API governance and integration architecture competency. Composable stacks that are not well-governed quickly become integration labyrinths. Our analysis of MACH architecture for enterprise applications covers implementation patterns, vendor selection, and governance frameworks in depth.

DevOps & Engineering Practices: How High-Performance Teams Build Software

Modern development emphasizes agility, security (DevSecOps), and automation, with best practices focusing on simplicity, code reusability (DRY), and frequent testing. DevOps is the most consistently misunderstood concept in enterprise software. Organizations regularly treat it as a tooling decision — ‘we need to implement Jenkins and Kubernetes’ — when it is fundamentally a cultural and organizational shift that restructures how development and operations share responsibility for software delivery and reliability.

What DevOps Actually Means at Enterprise Scale

At enterprise scale, DevOps requires three organizational changes, not just one: shared ownership between development and operations (no more ‘throw it over the wall’), platform teams that build and maintain the delivery infrastructure, and developer experience investment that reduces friction in the deployment path.

The vocabulary around DevOps has proliferated. DevSecOps embeds security engineering into the development pipeline. GitOps manages infrastructure state via Git repositories. Version Control involves using tools like Git to track changes, manage branches, and collaborate on codebases. Platform Engineering builds the Internal Developer Platform that abstracts cloud complexity from application teams. These are not competing paradigms — they are complementary layers of a mature engineering operating model.

CI/CD Pipeline Architecture

Continuous Integration means every code commit triggers an automated build, runs unit tests, and performs static code analysis. This catches integration problems immediately rather than discovering them in a multi-week integration sprint. Continuous Delivery means the software is always in a releasable state. Continuous Deployment means every passing build is automatically deployed to production, which is appropriate for some organizations and too risky for others (particularly in regulated environments where deployment requires approval gates).
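The difference between these three practices comes down to which gates sit between a commit and production. The sketch below models that decision in plain Python. It is not the API of any CI tool; `Build`, `is_releasable`, and `should_deploy` are hypothetical names used only to illustrate the logic.

```python
from dataclasses import dataclass


@dataclass
class Build:
    tests_passed: bool
    static_analysis_passed: bool
    approved_by_release_manager: bool = False


def is_releasable(build: Build) -> bool:
    """Continuous Integration outcome: every automated check passed."""
    return build.tests_passed and build.static_analysis_passed


def should_deploy(build: Build, require_approval: bool) -> bool:
    """Decide whether a build goes to production.

    require_approval=False models Continuous Deployment (every passing
    build ships). require_approval=True models Continuous Delivery with a
    manual approval gate, as used in regulated environments.
    """
    if not is_releasable(build):
        return False
    return build.approved_by_release_manager or not require_approval


passing = Build(tests_passed=True, static_analysis_passed=True)
print(should_deploy(passing, require_approval=False))  # continuous deployment
print(should_deploy(passing, require_approval=True))   # held until approved
```

In both modes the build must first be releasable; the approval flag only controls whether a human decision is required on top of the automated checks.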

Pipeline tooling choices: GitHub Actions and GitLab CI for source-integrated pipelines; Jenkins for complex legacy pipeline requirements; ArgoCD and Tekton for Kubernetes-native continuous deployment. The tool matters less than the practice discipline around it.

Infrastructure as Code: Automating Environment Management

Infrastructure as Code eliminates environment drift — the divergence between development, staging, and production environments that produces the classic ‘it works on my machine’ failure mode. IaC makes infrastructure reproducible, version-controlled, and auditable.
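Environment drift is easiest to understand as a difference between what is declared in version control and what is actually running. The toy sketch below compares two dictionaries to show the idea. It is not how Terraform or any other IaC tool is implemented, and the setting names and values are hypothetical.

```python
def find_drift(declared: dict[str, str], actual: dict[str, str]) -> dict[str, tuple]:
    """Compare declared (version-controlled) settings with what is running.

    Returns {key: (declared_value, actual_value)} for every mismatch.
    A key missing on one side is reported as None on that side.
    """
    drift = {}
    for key in declared.keys() | actual.keys():
        want, have = declared.get(key), actual.get(key)
        if want != have:
            drift[key] = (want, have)
    return drift


# Hypothetical environment definitions.
declared = {"instance_type": "m5.large", "db_version": "15", "replicas": "3"}
production = {"instance_type": "m5.large", "db_version": "14", "replicas": "3",
              "debug": "true"}

print(find_drift(declared, production))
```

Here the database version differs and a `debug` flag exists in production that was never declared, which are the two typical forms drift takes: changed values and unmanaged additions.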

Terraform remains the dominant cloud-agnostic IaC tool; Pulumi adds programmatic flexibility using familiar languages; AWS CDK and Azure Bicep serve cloud-specific environments. For enterprises operating multi-cloud or hybrid environments, IaC is not optional — it is the only practical way to maintain environment consistency at scale. A detailed implementation guide is available in our Infrastructure as Code in DevOps resource.

Testing Strategy for Enterprise Software

The testing pyramid — unit tests at the base, integration tests in the middle, end-to-end tests at the top — is the correct investment model for enterprise software. Most organizations have it inverted: heavy investment in slow, expensive E2E tests and under-investment in fast, cheap unit and integration tests. Shift-left testing corrects this by integrating quality checks at the design and coding stages rather than treating QA as a gate before release.
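A quick way to see whether a suite matches the pyramid is to compare the number of tests at each layer. The sketch below is a rough heuristic under that assumption; the function name and the counts are hypothetical, and test counts alone say nothing about test quality or runtime.

```python
def pyramid_shape(unit: int, integration: int, e2e: int) -> str:
    """Classify a test suite by the relative size of its layers."""
    if unit >= integration >= e2e:
        return "pyramid"
    if e2e >= integration >= unit:
        return "inverted"
    return "mixed"


# Hypothetical test counts for two suites.
print(pyramid_shape(unit=1200, integration=300, e2e=40))
print(pyramid_shape(unit=50, integration=120, e2e=400))
```

A check like this could run as a reporting step to flag suites that are drifting toward heavy end-to-end coverage.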

Performance, load, and chaos engineering are non-optional for enterprise-scale systems. They are not ‘nice to have’ quality investments — they are the difference between a system that fails gracefully under pressure and one that produces a production incident during peak traffic.

Observability: Monitoring Beyond Uptime

Observability is the practice of understanding a system’s internal state from its external outputs. The three pillars are logs (what happened), metrics (how the system performed), and traces (how a request flowed through the system). Tools like Datadog, Grafana with Prometheus, and OpenTelemetry provide the instrumentation layer; the operational discipline of defining SLOs (Service Level Objectives), SLIs (Service Level Indicators), and error budgets translates observability data into engineering decisions.
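The relationship between an SLO, its SLI, and the error budget is a short calculation. The sketch below assumes a request-based availability SLI (successful requests divided by total requests); the function name and the traffic figures are hypothetical.

```python
def error_budget(slo_target: float, total_requests: int,
                 failed_requests: int) -> dict:
    """Compute the SLI and remaining error budget for one time window.

    slo_target is a fraction, e.g. 0.999 for a 99.9% availability SLO.
    """
    if not 0 < slo_target < 1:
        raise ValueError("slo_target must be between 0 and 1")
    if total_requests <= 0:
        raise ValueError("total_requests must be positive")

    sli = (total_requests - failed_requests) / total_requests
    allowed_failures = (1 - slo_target) * total_requests
    return {
        "sli": sli,
        "allowed_failures": allowed_failures,
        "budget_remaining": allowed_failures - failed_requests,
        "slo_met": sli >= slo_target,
    }


# Hypothetical window: one million requests, 400 failures, 99.9% SLO.
report = error_budget(slo_target=0.999, total_requests=1_000_000,
                      failed_requests=400)
print(report)
```

With these numbers the budget allows roughly 1,000 failed requests, so about 600 remain. A team might slow feature releases as the remaining budget approaches zero and prioritize reliability work instead.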

Platform Engineering: The Next Evolution of DevOps

Platform engineering consolidates the DevOps function into a dedicated team responsible for the Internal Developer Platform — the tooling, services, and standards that application teams consume rather than build. Backstage, the open-source developer portal from Spotify, provides the reference architecture for enterprise IDPs. Platform engineering reduces cognitive load on application developers, standardizes security and compliance controls, and accelerates deployment velocity across large engineering organizations.

See our blog posts on Azure DevOps for enterprise delivery and on emerging practices like self-healing code for autonomous incident remediation.

Software Modernization & Cloud Migration: Upgrading Without Disruption

Legacy system modernization represents the single largest category of deferred enterprise software investment. According to McKinsey, 10 to 20% of the technology budget dedicated to new products is diverted to resolving issues related to tech debt. Moreover, it is estimated that tech debt amounts to 20 to 40% of the value of the entire technology estate before depreciation, amounting to hundreds of millions of dollars of unpaid debt for larger organizations. The challenge is not identifying the problem; it is sequencing and executing the solution without disrupting the business systems that depend on the legacy infrastructure.

Defining Legacy: When Does Software Become a Liability?

Legacy is not primarily an age designation. A ten-year-old system that is well-maintained, adequately documented, and supported by available talent is an asset. A five-year-old system built on abandoned frameworks, with no test coverage, maintained by two engineers approaching retirement, is a liability regardless of its chronological age.

Signs that software has crossed from ‘old but stable’ to ‘old and brittle’ include:

- Inability to scale — the system cannot handle increasing load without architectural change

- Security vulnerabilities — the framework or runtime is no longer receiving security patches

- Talent scarcity — the skills required to maintain the system are increasingly rare and expensive

- Integration friction — connecting the system to modern APIs or data pipelines requires disproportionate effort

- Deployment bottlenecks — releases require manual steps, long freeze windows, or risk-averse approval cycles

The true cost of legacy is never just the maintenance budget. It includes opportunity cost (features that cannot be built), security risk (breaches and compliance failures), and talent retention impact (engineers who leave organizations running legacy stacks for organizations running modern ones).

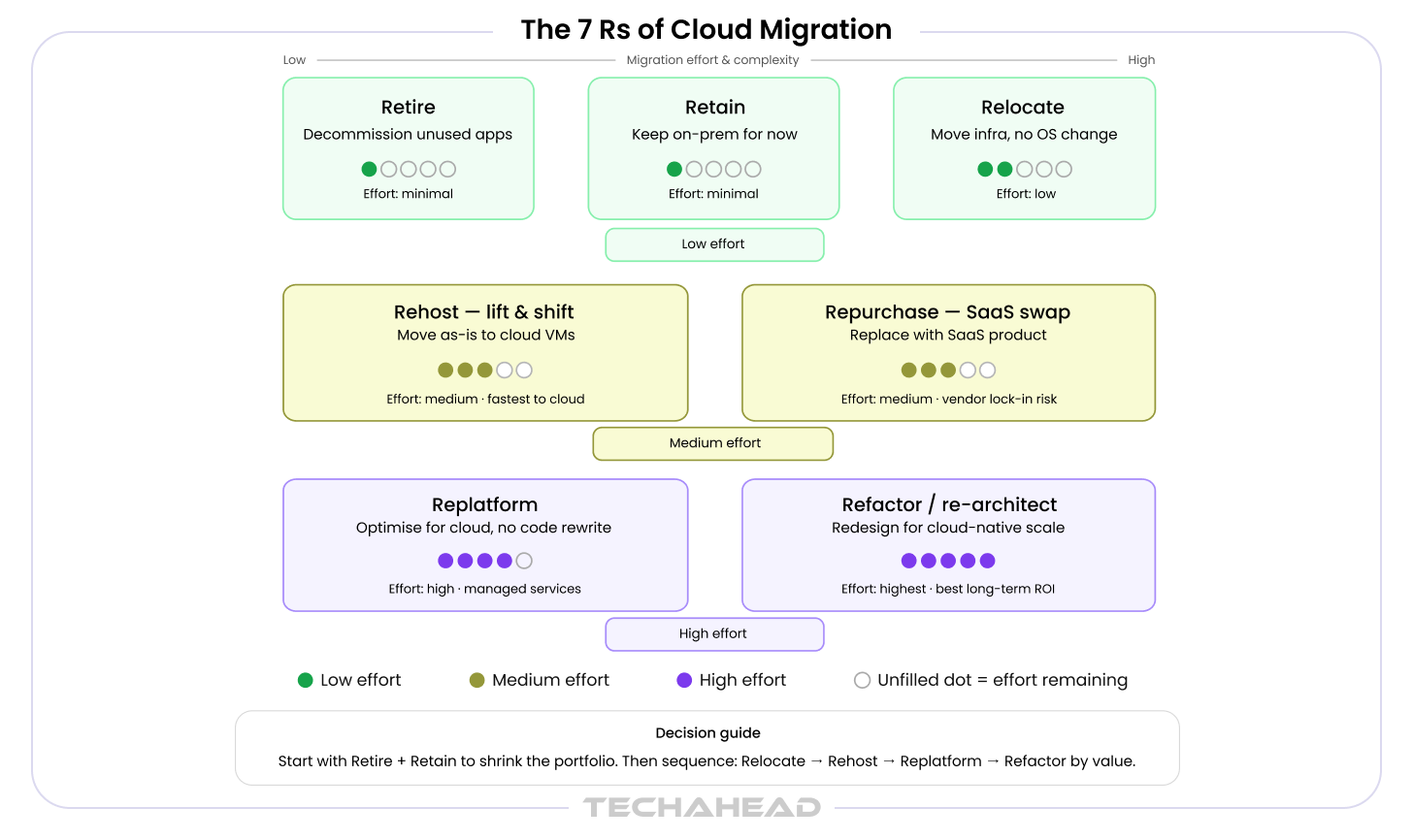

The 7 Rs of Cloud Migration

The 7 Rs framework provides the decision vocabulary for enterprise cloud migration planning. Each application in your portfolio maps to one R based on business criticality, modernization ROI, and risk tolerance:

| Migration Strategy | When to Use | Complexity | Cost Range |
| --- | --- | --- | --- |
| Rehost (Lift & Shift) | Applications with low technical debt; fast migration priority | Low | $30K–$150K |
| Replatform | Modernize infrastructure without code changes (e.g., managed DB) | Low–Medium | $50K–$300K |
| Refactor / Re-architect | High-value applications requiring scalability or cloud-native benefits | High | $200K–$1M+ |
| Repurchase | Replace with SaaS alternative where differentiation is low | Low | SaaS license + migration |
| Retire | Applications with no active usage or redundant functionality | None | Cost savings |
| Retain | Systems not yet ready for migration (compliance, dependency) | None | Status quo |
| Relocate | Migrate infrastructure to cloud without changing OS/app | Low | $20K–$100K |
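A portfolio triage based on the table above can be expressed as a small set of ordered rules. The sketch below is one possible reading of the "When to Use" column, not a standard algorithm: the `App` fields, the rule order, and the sample application are all assumptions. Relocate is omitted because the table's criteria do not separate it from Rehost in a simple rule.

```python
from dataclasses import dataclass


@dataclass
class App:
    name: str
    in_active_use: bool
    blocked_by_compliance_or_dependency: bool
    is_differentiating: bool
    needs_cloud_native_scale: bool
    wants_managed_services: bool


def suggest_strategy(app: App) -> str:
    """Map an application to a migration strategy using simplified rules."""
    if not app.in_active_use:
        return "Retire"
    if app.blocked_by_compliance_or_dependency:
        return "Retain"
    if not app.is_differentiating:
        return "Repurchase"
    if app.needs_cloud_native_scale:
        return "Refactor / Re-architect"
    if app.wants_managed_services:
        return "Replatform"
    return "Rehost (Lift & Shift)"


# Hypothetical application: in use, differentiating, and needs to scale.
billing = App("billing", in_active_use=True,
              blocked_by_compliance_or_dependency=False,
              is_differentiating=True, needs_cloud_native_scale=True,
              wants_managed_services=False)
print(suggest_strategy(billing))
```

The rule order matters: cheap exits (Retire, Retain, Repurchase) are checked before the strategies that require engineering investment. A real assessment would weigh cost and risk rather than rely on boolean flags.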

AI-Accelerated Modernization

Generative AI is changing the economics of legacy modernization. Tools built on LLM pipelines can scan and document legacy codebases — COBOL, Oracle Forms, .NET Framework 2.x — producing structured documentation that previously took months of manual effort. Automated test generation creates coverage for systems that have none, enabling safe refactoring. Automated refactoring pipelines generate modernized equivalents of legacy code for engineer review and approval.

These capabilities reduce modernization timelines by 30 to 40 percent for large legacy estates, according to early enterprise case studies. TechAhead’s AI-native approach applies OpenAI APIs directly to brownfield modernization workflows, compressing both cost and calendar time. For a detailed analysis, see our resource on how AI is transforming legacy modernization.

Overcoming Legacy System Complexities in Cloud Migration

The technical challenges of cloud migration are well-documented. The organizational challenges are not. Change management — the human and organizational dimension of migration — is the most common non-technical failure mode. Business stakeholders who depend on legacy systems resist migration out of risk aversion. Engineering teams face dual maintenance burdens during migration. Leadership faces pressure to demonstrate ROI before the migration is complete.

Technical complexity concentrates in three areas: data migration (schema mapping, ETL pipelines, zero-downtime strategies using blue-green or canary deployment patterns), integration debt (legacy ESBs, SOAP services, and tightly coupled databases that resist containerization), and dependency mapping (understanding what talks to what before anything changes). The software modernization guide and our resource on overcoming legacy complexities in cloud migration address these patterns in detail.
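
Dependency mapping can be made concrete with a small graph sketch. The example below assumes a hypothetical inventory in which each system lists the systems it calls, and it assumes one possible ordering policy: a system moves only after everything it depends on has moved. It uses the standard-library `graphlib` module (Python 3.9 or later).

```python
from graphlib import TopologicalSorter

# Hypothetical dependency map: each system maps to the systems it calls.
depends_on = {
    "web-portal": {"order-service", "auth"},
    "order-service": {"billing-db", "auth"},
    "reporting": {"billing-db"},
    "auth": set(),
    "billing-db": set(),
}


def migration_waves(graph):
    """Group systems into waves; each wave depends only on earlier waves."""
    sorter = TopologicalSorter(graph)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


for number, wave in enumerate(migration_waves(depends_on), start=1):
    print(number, wave)
# 1 ['auth', 'billing-db']
# 2 ['order-service', 'reporting']
# 3 ['web-portal']
```

A circular dependency raises `CycleError`, which is itself a useful finding: those systems usually have to be decoupled or migrated together.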

Tech Stack Selection: How to Choose the Right Technologies for Your Project

Technology selection conversations often degenerate into framework debates driven by developer preference rather than business requirements. This section takes the opposite approach: technology decisions are the output of four business-facing criteria, not an input to them.

The Four Dimensions of Tech Stack Decisions

- Scalability requirements: Expected load, geographic distribution, growth trajectory, and multi-region needs determine which architectural and infrastructure choices are viable.

- Team expertise and talent availability: The best technology stack is one your team can build, maintain, and evolve. Factor in hiring market depth: React and Node.js talent is abundant; Elixir and Rust talent is scarce and expensive.

- Ecosystem maturity: Library richness, security patch cadence, community activity, and long-term vendor support. Enterprise software has a ten-year horizon — choose technologies with equally long roadmaps.

- Total cost of ownership: Commercial licensing, cloud infrastructure spend, developer salaries, and migration risk all factor into TCO. Open-source does not mean free — it means the cost is operational rather than transactional.
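
One way to keep the four dimensions above from collapsing back into a preference debate is a weighted decision matrix. The sketch below is illustrative only: the weights, the 1 to 5 ratings, and the candidate names `stack_a` and `stack_b` are hypothetical and should come from your own stakeholders.

```python
# Hypothetical weights for the four dimensions; they should sum to 1.0.
WEIGHTS = {"scalability": 0.3, "team_expertise": 0.3, "ecosystem": 0.2, "tco": 0.2}


def weighted_score(ratings):
    """Combine 1-5 ratings per dimension into a single weighted score."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    return sum(WEIGHTS[dim] * ratings[dim] for dim in WEIGHTS)


# Hypothetical candidates rated by the evaluation team (5 = best).
candidates = {
    "stack_a": {"scalability": 4, "team_expertise": 5, "ecosystem": 5, "tco": 3},
    "stack_b": {"scalability": 5, "team_expertise": 2, "ecosystem": 3, "tco": 4},
}

ranked = sorted(candidates, key=lambda name: weighted_score(candidates[name]), reverse=True)
print(ranked)  # ['stack_a', 'stack_b']
```

Agreeing on the weights before rating any candidate is the useful part of the exercise; the arithmetic only records the outcome.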

Frontend: React, Angular, Vue, and Cross-Platform Mobile

React holds the largest developer ecosystem and the highest component library density. Its flexibility is both a strength (adaptable to any product pattern) and a weakness (requires opinionated architecture decisions that Angular provides by default). Angular’s full-framework structure and TypeScript-first design make it the preferred choice for enterprises that prioritize consistency and long-term maintainability over ecosystem breadth. For cross-platform mobile, Flutter delivers native performance parity; .NET MAUI and Blazor serve enterprises deeply invested in the Microsoft ecosystem.

Backend: Node.js, Python, Java, .NET, Go

Backend selection should follow team expertise and performance requirements, in that order. Python dominates AI and data pipeline workloads. Java and .NET remain strong in regulated enterprise environments with existing platform investment. Go is increasingly the preferred choice for high-throughput, latency-sensitive microservices. At microservices scale, polyglot architectures (different languages per service) are architecturally sound and organizationally viable if API contracts are enforced rigorously.

Cloud Providers: AWS, Azure, GCP, Multi-Cloud

The cloud provider decision is increasingly made in enterprise procurement, not engineering. Existing enterprise agreements, compliance certifications (FedRAMP, ISO 27001, HIPAA BAAs), regional data residency requirements, and managed service coverage drive shortlisting. Multi-cloud strategies avoid vendor lock-in but introduce real operational complexity — evaluate total engineering overhead honestly before committing to a multi-cloud architecture.

Low-Code and No-Code: Where It Fits in an Enterprise Stack

Low-code platforms are not a replacement for custom development. They are a delivery layer for non-differentiating workflows: internal approval processes, reporting dashboards, simple automation, and department-level tooling. The governance risk is shadow IT — ungoverned low-code deployments that create data sovereignty issues, integration debt, and security exposure. A structured low-code development platform strategy defines where low-code is permitted, what integration standards apply, and who owns the governance.

Software Development Cost: What Enterprises Actually Spend and Why

Cost is the most searched, most avoided, and most poorly understood topic in enterprise software development. Vendors avoid publishing ranges because every project is different. Buyers ignore the question until they need a budget. The result is budget surprises that derail projects at the worst possible moment. This section establishes honest baselines.

The Five Cost Drivers in Enterprise Software Development

- Complexity and scope: Number of integrations, volume of custom business logic, compliance requirements, and data migration scope. Complexity is the primary cost variable — not geography, not technology.

- Architecture decisions: Microservices and cloud-native infrastructure add DevOps overhead. A simpler architecture is cheaper to build and more expensive to scale. The cost trade-off is temporal, not absolute.

- Team composition: In-house, nearshore, offshore, and partner-led teams each carry different cost and quality trade-offs. Offshore reduces hourly rates by 40 to 60 percent; it does not reduce complexity, communication overhead, or coordination risk. Cost also varies with the roles on the product team, such as project managers, business analysts, back-end developers, and front-end designers.

- Technology choices: Commercial licensing, programming language choice, cloud infrastructure spend, low-code development tools, third-party API costs, and specialized tooling all contribute to the total software development cost. OSS-first strategies reduce licensing cost but require engineering investment in configuration and maintenance.

- Quality investment: Testing, security audits, performance engineering, and documentation are the costs most commonly reduced in budget negotiations. They are also the costs that, when cut, produce the largest post-launch expenditures.

Typical Cost Ranges by Project Type

| Project Type | Typical Investment Range |
| --- | --- |
| Simple internal tool or workflow automation | $30,000 – $80,000 |
| Mid-complexity web or mobile apps | $80,000 – $250,000 |
| Enterprise platform (multiple integrations, compliance) | $250,000 – $750,000+ |
| Full-stack digital transformation or modernization | $500,000 – $2M+ |
| AI-powered product with custom model integration | $150,000 – $600,000+ |

Total Software Development Cost Range

Ranges assume mid-market development partner rates. Offshore teams typically reduce cost by 30 to 50 percent; enterprise consultancies (Big 4, Accenture, IBM) add a 60 to 120 percent premium. TechAhead operates in the senior mid-market tier: senior-level engineering with efficient delivery structures.

The Hidden Costs Most Budgets Miss

- Infrastructure and DevOps: CI/CD tooling, monitoring stack, cloud compute, and security tooling are consistently under-budgeted by 20 to 30 percent in initial estimates.

- Security and compliance: Penetration testing, SOC 2 or ISO 27001 alignment, and GDPR engineering are not optional line items — regulators and enterprise customers increasingly require them.

- Post-launch maintenance: Budget 15 to 20 percent of build cost annually for ongoing maintenance, security patching, dependency updates, and performance optimization.

- Technical debt: Underfunding QA and architecture in Phase 1 creates technical debt that compounds in cost through every subsequent phase. The cheapest time to invest in quality is before the codebase exists.
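
The percentages above can be folded into a rough multi-year budget. The sketch below is a back-of-the-envelope calculation under stated assumptions: the build cost and infrastructure estimate are hypothetical, and "under-budgeted by 20 to 30 percent" is read as an uplift on the initial infrastructure estimate, which is only one possible interpretation.

```python
def budget_range(build_cost, infra_estimate, years):
    """Return (low, high) totals in whole dollars from the percentage bands."""
    # Infrastructure and DevOps: uplift the initial estimate by 20-30 percent
    # (an assumed reading of "under-budgeted by 20 to 30 percent").
    infra_low = infra_estimate * 120 // 100
    infra_high = infra_estimate * 130 // 100
    # Post-launch maintenance: 15-20 percent of build cost per year.
    maint_low = build_cost * 15 // 100 * years
    maint_high = build_cost * 20 // 100 * years
    return (build_cost + infra_low + maint_low,
            build_cost + infra_high + maint_high)


# Hypothetical project: $400K build, $60K initial infrastructure estimate,
# three years of operation after launch.
print(budget_range(400_000, 60_000, 3))  # (652000, 718000)
```

In this example the three-year total is roughly 1.6 to 1.8 times the build cost, before any security, compliance, or technical debt line items are added.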

How to Evaluate a Software Development Partner on Cost

Compare on total value, not hourly rate. A senior engineer at $150 per hour who moves fast and makes correct architectural decisions delivers better ROI than a $40 per hour team with high coordination overhead, frequent rework, and architectural decisions that require expensive correction.
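
The comparison can be worked through with simple arithmetic. Every input below is hypothetical: the base hours, rework percentages, and coordination percentages are invented to illustrate the mechanism, and different inputs can reverse the result.

```python
def effective_cost(rate, base_hours, rework_pct, coordination_pct):
    """Total cost once rework and coordination hours are added.

    Percentages are whole numbers applied on top of base_hours.
    """
    total_hours = base_hours * (100 + rework_pct + coordination_pct) // 100
    return rate * total_hours


# Hypothetical senior team: fewer base hours, little rework or coordination.
senior = effective_cost(rate=150, base_hours=1000, rework_pct=5, coordination_pct=5)

# Hypothetical low-rate team: more base hours, heavy rework and coordination.
low_rate = effective_cost(rate=40, base_hours=3000, rework_pct=40, coordination_pct=25)

print(senior, low_rate)  # 165000 198000
```

The useful exercise is asking each vendor for the inputs (estimated hours, historical rework rates) rather than comparing the rate alone.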

Fixed-price contracts transfer scope risk to the vendor. Time and materials contracts transfer it to you. Neither is inherently better — the right model depends on how well requirements can be specified upfront. When in doubt, start with a fixed-price discovery phase and move to T&M for execution. Always ask for a cost breakdown by SDLC phase rather than a single project total — it reveals how a vendor thinks about risk and where they plan to protect their margins.

For the complete cost analysis including benchmarks by technology type, team structure, and project complexity, see our software development cost guide. For comparative vendor assessment, see our ranking of the top custom software development companies.

AI in Software Development: How Artificial Intelligence Is Changing How Software Is Built

The impact of AI on the software development process is real, uneven, and frequently overstated in both directions. Market research projects that the global AI in software development market will reach roughly $15.7 billion by 2033, a CAGR of 42.3% from 2025 to 2033.

AI is not going to replace software engineers. It is going to change what software engineers spend their time on — and enterprises that understand this distinction will build better software faster. Those that do not will pay for AI tooling without capturing its productivity value.

AI-Assisted Development: From Copilot to Autonomous Code Generation

GitHub Copilot and similar code-generation tools produce measurable productivity gains on specific task types: boilerplate code, unit test generation, documentation, and API client scaffolding. McKinsey research indicates developers using AI coding assistants complete these tasks 35 to 45 percent faster. What AI tools do not replace is senior architectural judgment, system design reasoning, or the ability to navigate ambiguous requirements toward a coherent technical solution.

The more significant near-term shift is the emergence of AI agents capable of owning discrete development tasks within a supervised pipeline: writing a REST endpoint to a specification, generating a database migration script, running a test suite and triaging failures. TechAhead’s approach positions AI as handling the repetitive and generative; engineers own the complex, the ambiguous, and the consequential.

Building AI-Powered Applications: What Enterprises Need to Know

Building AI features into enterprise software requires distinguishing between two patterns. Embedding AI capabilities — recommendations, NLP classification, computer vision — uses established model APIs and is primarily an integration engineering challenge. Building AI-native architectures — LLM pipelines, agentic systems, retrieval-augmented generation — requires new engineering disciplines: prompt engineering, vector database management, evaluation pipelines, and output validation frameworks.

Model selection criteria for enterprise AI: capability on your specific use case, cost per million tokens, inference latency, data privacy and residency controls, and vendor roadmap stability. As an OpenAI Services Partner, TechAhead has direct access to OpenAI’s enterprise APIs, fine-tuning infrastructure, and early access to new model capabilities — which compresses time-to-deployment for OpenAI-based enterprise applications.

RAG (Retrieval-Augmented Generation) architectures connect LLMs to enterprise knowledge bases — document repositories, support tickets, internal wikis, structured databases — enabling AI responses grounded in accurate, organization-specific information. For enterprises deploying AI in customer-facing or decision-support applications, RAG is the architecture that makes LLM output trustworthy enough to act on.
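
The retrieval step of a RAG pipeline can be sketched without any external service. The example below is a toy: it swaps in bag-of-words cosine similarity where a production system would typically use embeddings and a vector database, the three documents are invented, and the final LLM call is left out entirely.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base: document id -> text.
DOCS = {
    "wiki/vpn": "Employees connect to the VPN using the corporate SSO login.",
    "ticket/4812": "Refunds over 500 USD require approval from a finance manager.",
    "wiki/oncall": "The on-call engineer rotates every Monday at 09:00 UTC.",
}


def tokens(text):
    """Lowercased word counts; a stand-in for a real embedding."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a, b):
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question, k=1):
    """Return the ids of the k documents most similar to the question."""
    q = tokens(question)
    ranked = sorted(DOCS, key=lambda doc_id: cosine(q, tokens(DOCS[doc_id])), reverse=True)
    return ranked[:k]


def build_prompt(question, k=1):
    """Assemble a grounded prompt; sending it to a model is out of scope here."""
    context = "\n".join(f"[{doc_id}] {DOCS[doc_id]}" for doc_id in retrieve(question, k))
    return f"Answer using only the sources below.\n{context}\n\nQuestion: {question}"


print(retrieve("Who approves refunds over 500 USD?"))  # ['ticket/4812']
```

Keeping the document id in the prompt is a deliberate choice in this sketch: it lets the answer cite its source, which is what makes the output auditable.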

AI for Legacy System Modernization

AI-powered code analysis tools are reshaping the economics of legacy modernization. LLM-based systems can map COBOL or Oracle Forms codebases, generate structured documentation, identify dead code and redundant logic, and produce candidate modernized equivalents for engineer review. This changes the modernization calculus from ‘too expensive and too risky’ to ‘accelerated and de-risked.’ The impact of AI on core system modernization is explored in detail in our dedicated resource.

Agentic AI in Enterprise Software

Multi-agent AI systems, networks of specialized AI agents that collaborate to complete complex tasks, are moving from research to production in enterprise DevOps pipelines. Agents monitor production metrics and historical data, trigger automated remediation, and surface insights for engineering teams.

Agents also review code quality against architectural standards and validate deployment configurations before release, which helps control cost.

Governance requirements scale with agent autonomy. Enterprises deploying agentic AI systems need prompt injection defenses, output validation frameworks, human-in-the-loop controls for high-consequence actions, and audit trails that satisfy both operational and regulatory requirements.
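
Two of the controls named above, human-in-the-loop approval and audit trails, can be illustrated with a small policy gate. This is a sketch under assumptions: the action names, the `HIGH_CONSEQUENCE` set, and the in-memory `audit_log` are hypothetical, and a real deployment would persist the log and authenticate the approver.

```python
from datetime import datetime, timezone

# Hypothetical set of actions that always require a human approver.
HIGH_CONSEQUENCE = {"restart_database", "rollback_release", "delete_data"}

audit_log = []  # in-memory for illustration; use durable storage in practice


def execute(action, requested_by, approved_by=None):
    """Allow an agent-proposed action only if policy permits; always log it."""
    needs_approval = action in HIGH_CONSEQUENCE
    allowed = (not needs_approval) or approved_by is not None
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "action": action,
        "requested_by": requested_by,
        "approved_by": approved_by,
        "outcome": "executed" if allowed else "blocked_pending_approval",
    })
    return allowed
```

For example, `execute("rollback_release", "agent-7")` returns `False` and records a blocked entry, while the same call with `approved_by="alice"` returns `True`. Blocked requests are logged as well as executed ones, so the trail shows what agents attempted, not only what they did.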

How to Choose a Software Development Partner: A Decision Framework for Enterprises

The vendor selection decision is where most enterprise software engagements either get set up for success or start accumulating problems. The criteria that matter most are not the ones that appear in RFP scoring matrices.

The 7 Criteria That Separate Good Partners from the Rest

- Technical depth: Can they architect, not just code? Ask how they have handled system design trade-offs on comparable projects — not what frameworks they use, but what decisions they made and why.

- Domain and compliance experience: HIPAA, GDPR, SOC 2, FedRAMP — certifications and audit history matter for regulated industries. Ask for evidence, not claims.

- Communication and transparency: Time zone overlap, dedicated project management, reporting cadence, and how they communicate bad news. A partner’s behavior during a project problem is more predictive of success than their performance on a greenfield sprint.

- AI and modern stack fluency: Partners who cannot speak to LLM integration, cloud-native architecture, DevOps maturity, and security-by-design will deliver software that is already a generation behind on delivery.

- IP and ownership: Confirm full IP assignment from day one. Review their standard agreement. Some development companies retain IP on reusable components without disclosing it during the sales process.

- Post-launch support model: Does the engagement end at go-live, or is there a structured support and evolution path? Software that is handed off and abandoned degrades — build the ongoing relationship into the commercial structure from the start.

- References from comparable enterprises: Ask specifically for clients in your industry and at your organizational scale. Scaling from 5 to 50 engineers and scaling from 50 to 500 require fundamentally different partner capabilities.

Questions to Ask in a Software Development RFP

- How do you handle scope change mid-project? Show me a real example.

- What does your QA process look like, and what is your defect escape rate to production?

- How do you manage technical debt across a multi-year engagement?

- What is your approach to security — embedded in development, or applied at the end?

- Can you walk me through a project that went wrong and how you handled it?

In-House vs. Outsourced vs. Partner-Led Development

| Model | Trade-offs |
| --- | --- |
| In-House Team | Full control and deep domain knowledge; high overhead, slow to scale, and talent acquisition is the critical constraint |
| Freelance / Staff Augmentation | Cost-efficient for defined tasks; coordination overhead, IP risk, and no accountability for system-level outcomes |
| Offshore Development Shop | Low cost; timezone challenges, variable quality, and high management load on the enterprise side |
| Technology Partner (TechAhead) | Full-lifecycle ownership, architecture expertise, AI-native capability; accountable for outcomes, not just outputs |

For a comparative analysis of leading providers, see our ranking of the top 10 custom software development companies.

Future Trends in Enterprise Software Development (2026 and Beyond)

Enterprise software decisions made today will shape architectural and operational reality for the next five to seven years. The following trends represent forces that are already shaping enterprise software strategy — not speculative predictions about what might happen, but inflection points that are underway.

Agentic AI and Autonomous Software Engineering

The shift from AI-assisted to AI-directed development pipelines is underway. Agents that own discrete tasks in a supervised development workflow are moving into production. The enterprise implication is significant: engineers shift from writing code to defining constraints, reviewing AI-generated output, and owning architectural decisions. The investment priority now is establishing AI governance frameworks, model evaluation pipelines, and secure API integration practices before the agents arrive — not after.

Platform Engineering as a Core Competency

The DevOps function is consolidating. What began as shared responsibility between development and operations is evolving into a dedicated platform engineering discipline responsible for the Internal Developer Platform — the standardized tooling, services, and golden paths that application teams consume. Enterprises without a platform engineering strategy will face escalating developer productivity debt as the complexity of cloud-native delivery outpaces what individual teams can manage independently.

Cloud-Native Becomes the Non-Negotiable Baseline

The global cloud-native applications market is expected to reach $30.24 billion by 2030, a CAGR of 23.8% from 2024 to 2030. At that level of adoption, cloud-native architecture is the expected starting point for enterprise software, not a differentiator. The competitive advantage has already shifted to what you build on top of cloud-native: AI capabilities, developer experience, and operational efficiency. Enterprises still debating whether to adopt cloud-native are already behind the organizations they compete with.

Security-First Engineering at Scale

Regulatory pressure is making security-by-design non-optional. The EU AI Act, SEC cybersecurity disclosure requirements, and evolving data protection regulations are creating legal accountability for security failures that previously had only operational consequences. DevSecOps embeds security controls in CI/CD pipelines: SAST (static analysis), DAST (dynamic analysis), SBOM (Software Bill of Materials) generation, and software supply chain security validation. Security is no longer a phase at the end of the SDLC — it is a continuous engineering practice.
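
Embedding security controls in a pipeline usually comes down to a gate that fails the build above a severity threshold. The sketch below assumes scanner results have already been normalized into a hypothetical list of dictionaries with `id` and `severity` keys; real SAST and DAST tools each have their own report formats.

```python
SEVERITY_ORDER = ["low", "medium", "high", "critical"]


def blocking_findings(findings, fail_at="high"):
    """Return the findings at or above the fail_at severity."""
    threshold = SEVERITY_ORDER.index(fail_at)
    return [f for f in findings if SEVERITY_ORDER.index(f["severity"]) >= threshold]


# Hypothetical normalized findings from earlier pipeline stages.
findings = [
    {"id": "SAST-1", "severity": "medium"},
    {"id": "DAST-7", "severity": "critical"},
]

blockers = blocking_findings(findings)
print([f["id"] for f in blockers])  # ['DAST-7']
```

A CI step would exit with a non-zero status when `blockers` is non-empty, which is what turns a report into an enforced control.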

Composable Architecture and the Decline of the Monolithic Suite

Enterprises are systematically deconstructing monolithic ERP, CMS, and commerce platforms into composable stacks assembled from best-of-breed components. MACH architecture provides the design vocabulary; strong API governance and integration architecture competency provide the operational foundation. The enterprises making this shift are not primarily motivated by technology preference — they are motivated by the organizational agility that composable architectures enable: the ability to replace one component without rebuilding the whole system.

Building Better Software Starts with a Better Strategy

Enterprise software success is the product of architecture decisions, methodology discipline, engineering culture, and the right partner working in combination. Technical execution matters, but it is rarely where enterprise software projects fail. They fail in requirements that were never properly specified, architectures that were chosen for the wrong reasons, DevOps practices that were never established, modernization decisions that were deferred too long, and partner relationships that optimized for rate rather than outcomes.

This software development guide has covered the strategic landscape: the SDLC, types of software development, architecture patterns, DevOps and engineering practices, modernization frameworks, technology selection, cost drivers, and the AI transformation underway in how software is built.

TechAhead is an AI-native enterprise software development company and OpenAI Services Partner, with 16+ years of delivery experience across web, mobile, cloud, and AI-powered platforms for global enterprises. We bring full-lifecycle ownership to every engagement: from architecture and engineering through DevOps, security, and ongoing modernization.

If you are evaluating your software development options — whether building a new product, modernizing a legacy system, or scaling an existing platform — talk to TechAhead’s software development team about your requirements.

The software development lifecycle is the structured process through which software is planned, designed, built, tested, deployed, and maintained. In enterprise contexts, it encompasses seven phases — from planning and requirements analysis through system design, development, QA, deployment, and ongoing maintenance. The SDLC provides the framework for managing complexity, risk, and quality across a software project from inception to production.

Enterprise software development typically ranges from $80,000 for a mid-complexity application to $2 million or more for a full-scale digital transformation program. The primary cost variables are project complexity, architectural approach, team composition (onshore versus offshore), and quality investment in testing and security.

Custom software is built specifically for your business processes, workflows, and data architecture. You own the IP, control the roadmap, and the system is designed around your requirements. SaaS is a subscription-based platform built for a broad market: fast to deploy but limited in customization, with the vendor controlling the roadmap and the data architecture. The decision depends on whether the software addresses a differentiating or commoditized function.

Microservices architecture decomposes a software system into independently deployable services, each owning a bounded domain of functionality. It enables independent scaling, technology diversity per service, and fault isolation. The practical threshold for adopting microservices is approximately 30 to 50 engineers working on the same system, or when release frequency is constrained by a monolith’s shared deployment cycle. Below that threshold, a well-structured monolith is typically more cost-efficient.

An AI-native development company integrates AI capabilities into both how it builds software and what it builds. TechAhead uses AI-assisted development workflows — code generation, automated testing, AI-accelerated modernization — to improve delivery speed and quality. As an OpenAI Services Partner, we also build enterprise applications that integrate OpenAI’s models and APIs directly: LLM pipelines, RAG architectures, agentic systems, and AI-powered automation. This means enterprises working with TechAhead benefit from both faster delivery and access to AI capabilities that most development partners are still learning to apply.

Timeline varies significantly by scope and complexity. An MVP for a mid-complexity application typically takes three to six months. An enterprise platform with multiple integrations and compliance requirements ranges from nine to eighteen months. A full-scale digital transformation program typically spans twelve to twenty-four months across multiple workstreams. The factors that compress timelines are strong requirements definition upfront, experienced teams, and mature DevOps practices. The factors that extend them are scope changes, integration complexity, and compliance requirements that were not factored into the initial plan.

Legacy system modernization is essential for organizations to remain competitive, as it allows them to leverage new technologies and improve operational efficiency. The process often involves migrating to cloud-based solutions, which can enhance scalability and flexibility. The strategic framework offers seven options: Rehost, Replatform, Refactor, Repurchase, Retire, Retain, or Relocate. Each application in a legacy portfolio maps to one R based on business criticality, modernization ROI, and risk tolerance. Modernizing legacy systems can lead to significant cost savings, improved performance, and enhanced security, which are critical for businesses operating in fast-paced environments.

Evaluate on seven criteria: technical depth (architecture capability, not just execution), domain and compliance experience, communication transparency, AI and modern stack fluency, IP ownership terms, post-launch support model, and references from comparable enterprises. Rate comparisons and portfolio presentations provide a starting point, but the most predictive question is: how has this partner handled a project that went wrong?

Research indicates that investing in UX/UI design can yield a return on investment (ROI) of 100% or more. A well-designed user interface makes software more intuitive and easier to navigate, which raises user satisfaction and engagement and leads to higher retention rates and customer loyalty.