Key Takeaways:

- 90% of enterprises are actively pursuing AI, but only 15% have achieved enterprise-scale deployment. The ambition is near-universal. The execution is not. That 75-point gap is the single most important number in enterprise AI today.

- 42% of organizations cannot properly customize AI models due to poor-quality data. Data quality is the single most common blocker in enterprise AI. An AI readiness assessment identifies data gaps before they kill a deployment.

- The constraint holding most enterprises back from AI at scale is no longer model performance or tooling availability, but organizational readiness and implementation. Readiness is a people, process, and culture question as much as it is a data and infrastructure one.

- Organizations that deployed reasoning-driven AI systems outperformed those that retrofitted old automation logic with an AI label.

- The readiness gaps that never surface in a pilot, such as integration depth, change management, and governance infrastructure, are exactly what cause deployments to collapse at scale.

Here’s a number worth sitting with: 97% of enterprises say they want to deploy AI. Only 14% are actually ready to do it well. The rest are somewhere in between—enthusiastic, funded, and underprepared.

That gap is not a technology problem. It’s a readiness problem.

Most organizations treat AI as a procurement decision: they pick a vendor, sign a contract, and announce the initiative. In doing so, they set themselves up for what the industry has started calling ‘pilot purgatory’: the graveyard of AI proofs of concept that looked promising in a boardroom presentation and went nowhere in production.

The organizations that actually scale AI do not move faster. They move smarter. And the first smart move is almost always the same: conduct a rigorous AI readiness assessment before committing budget, headcount, or executive credibility to an initiative that the business is not yet built to support.

This blog is about what that assessment looks like, what it surfaces, and how to use it to build an AI strategy that holds for the years to come.

What Is an AI Readiness Assessment?

An AI readiness assessment is a structured diagnostic that evaluates whether your organization has the foundational conditions necessary to adopt, implement, and scale artificial intelligence sustainably. It looks at your data infrastructure, your technology stack, your talent landscape, your culture, your governance posture, and how closely any proposed AI initiatives are tied to actual business outcomes.

It is not a technology audit. It’s not a vendor discovery exercise. And it’s certainly not a box to tick before the real work begins. It is the strategic foundation that determines whether your AI investments will generate returns or quietly drain resources over the next 18 months.

Think of it like a structural survey before a major renovation. You wouldn’t knock down walls without knowing which ones are load-bearing. The same logic applies to enterprise AI.

What sets a serious AI readiness assessment apart from a generic technology review is its scope. It asks questions that make business leaders uncomfortable. It surfaces tensions between what the company says it wants to do with AI and what the company is actually capable of doing today.

That’s precisely what makes it valuable.

Why Enterprise AI Might Fail Without an AI Readiness Assessment

It would be convenient if AI failure were always a technology problem. Easier to diagnose, easier to fix. But the pattern that emerges from failed enterprise AI projects is far more organizational than technical.

The data is consistent: between 70 and 80 percent of AI projects never make it to production. Not because the models did not work. Because the business was not ready to absorb them.

Here’s what ‘not ready’ actually looks like in practice:

- Fragmented Data: The AI initiative gets scoped, the vendor gets selected, and then someone asks: where’s the training data? Turns out it’s spread across seven systems, three formats, and two business units that don’t talk to each other.

- No Executive Alignment: Without a unified leadership position, AI initiatives stall in committee.

- Skills Mismatch: The organization has developers but not data scientists. Or data scientists, but no ML engineers. Or ML engineers, but no one who can translate model outputs into business decisions.

- Absent Governance: No model risk framework. No defined accountability for AI-driven decisions. No plan for what happens when the model is wrong, and it will be wrong sometimes.

- Misaligned Use Cases: Someone chose an AI use case because it was interesting, not because it was tied to a specific P&L line or a measurable business outcome. Interesting does not survive a budget review.

The cost of skipping an AI readiness assessment is almost always higher than the cost of doing one. The difference is that one cost is visible upfront, and the other shows up later when it’s much harder to recover.

Importance of AI Readiness Assessment for Enterprises

AI solutions can offer substantial benefits to your organization. However, jumping into AI without a readiness assessment is like building on an unexamined foundation. The structure may hold for a while, but the cracks show up at exactly the wrong moment. For enterprise leaders, an AI readiness assessment is a strategic instrument that shapes everything from budget decisions to board-level confidence in your AI roadmap.

Prevents Costly Blind Spots

Most AI initiatives fail because a foundational gap in data quality, governance, or talent alignment was never surfaced. An assessment makes the invisible visible before it becomes expensive.

Turns Ambition Into a Sequenced Plan

AI ambition without sequencing is just a wish list. The assessment maps your current state against your goals and produces a phased roadmap, so leadership knows not just what to pursue, but in what order and why.

Reduces Regulatory and Compliance Risk

The reputational cost matters too. In regulated industries, deploying AI without proper governance and compliance readiness is a liability. GDPR, the EU AI Act, and sector-specific regulations are raising the stakes on every AI deployment decision your organization makes.

Protects and Maximizes ROI

The financial toll is real. Failed AI projects typically consume six to eighteen months of organizational runway—not just in direct cost but in leadership attention, team morale, and the political capital spent defending an initiative that did not deliver.

Builds Organization-wide Alignment

AI initiatives stall when IT, finance, legal, and business units are not on the same page. A readiness assessment creates a shared, evidence-based view of where the organization stands.

Accelerates the Path from Pilot to Scale

The difference between a proof-of-concept that impresses in a demo and one that survives contact with production is almost always a readiness gap. Knowing what those gaps are and closing them early is what takes AI from pilot purgatory to enterprise-wide value.

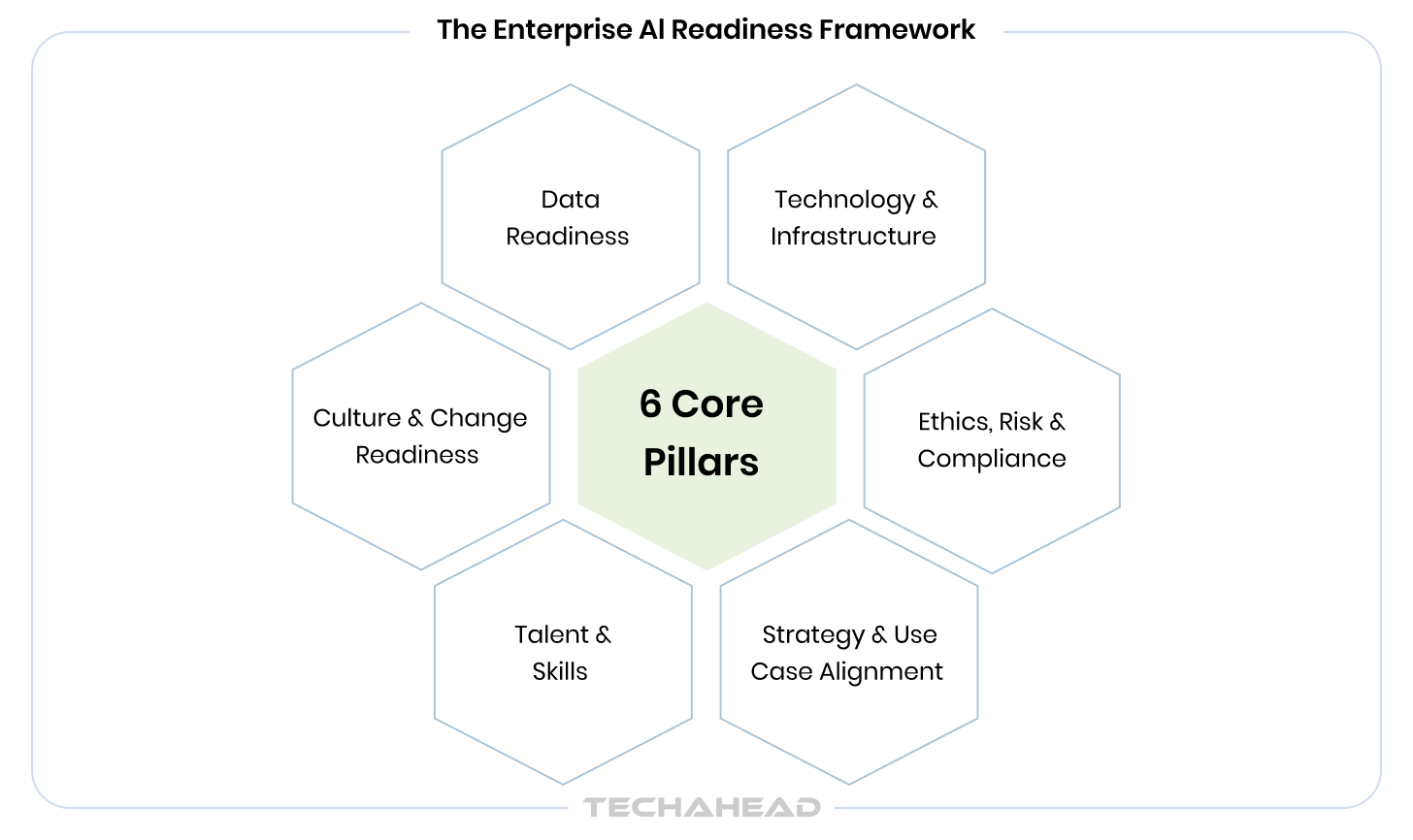

The Enterprise AI Readiness Framework: 6 Core Pillars

Over the years of working with enterprise clients across industries, we have developed a structured approach to this diagnostic—the Enterprise AI Readiness Model (EARM). It does not evaluate AI readiness as a single score. It maps it across six interconnected dimensions, each of which can accelerate or block your AI strategy depending on where you stand.

| Pillar | What It Covers | What to Ask |
| --- | --- | --- |
| 1. Data Readiness | Data quality, availability, governance, pipeline maturity, and accessibility across business units. | Is our data AI-ready, or just AI-adjacent? |
| 2. Technology & Infrastructure | Cloud maturity, system integration capability, compute scalability, and MLOps tooling. | Can our infrastructure actually run and manage AI workloads at scale? |
| 3. Talent & Skills | AI literacy across teams, data science maturity, and the gap between current skills and required capability. | Do we have the people to build it, run it, and act on what it tells us? |
| 4. Strategy & Use Case Alignment | Clarity of AI use cases, linkage to specific business KPIs, and prioritization logic. | Are we building AI for a business outcome, or for a press release? |
| 5. Culture & Change Readiness | Leadership commitment, cross-functional collaboration, and organizational resistance patterns. | Will the business actually use what we build? |
| 6. Ethics, Risk & Compliance | Regulatory readiness, model governance, bias controls, and accountability frameworks. | Are we prepared for what happens when AI makes a consequential mistake? |

No organization scores perfectly across all six pillars before its first major AI initiative, and that’s not the expectation. What the EARM reveals is where your critical blockers are, how to sequence your remediation, and which use cases you can pursue now versus which need foundational work first.

That sequencing is everything. The difference between an AI strategy that builds momentum and one that burns out in year one is almost always a readiness-informed roadmap versus an ambition-driven one.

AI Readiness Checklist: Where Does Your Organization Stand Today?

Use this diagnostic as a starting point. For each item, mark it complete only if it’s consistently true across your organization, not just in one department or one pilot project. Score one point per checked item.

Data Readiness

- Centralized or federated data repositories are in place, accessible, and current.

- Documented data quality standards exist and are actively enforced across business units.

- A formal data governance policy is operational, not just drafted.

- Clear data ownership is assigned per domain or business unit.

Technology & Infrastructure

- Cloud infrastructure has been assessed and confirmed capable of handling AI workloads.

- Core business systems are API-accessible and can integrate with AI pipelines.

- MLOps or model management tooling is in place or actively being evaluated.

Talent & Skills

- A dedicated data science or AI team exists, or a credible plan to build one is funded.

- Business-unit stakeholders have baseline AI literacy. They understand what AI can and cannot do.

- An upskilling roadmap for AI-adjacent roles has been defined and resourced.

Strategy & Use Case Alignment

- At least two AI use cases have been identified and tied to specific, measurable business KPIs.

- An executive sponsor is formally assigned to each AI initiative.

- AI appears as a distinct line item or initiative in the annual budget plan.

Culture & Change Readiness

- C-suite leadership actively and visibly champions AI adoption, not just in speeches, but in decisions as well.

- A cross-functional AI working group or steering committee has been established.

- A change management plan is in place to address workforce concerns about AI.

- At least one AI pilot has been run, evaluated, and its learnings formally documented.

Ethics, Risk & Compliance

- An AI governance policy covering accountability, model review, and escalation paths has been drafted.

- Relevant regulatory requirements (GDPR, EU AI Act, sector-specific rules) have been mapped to your AI plans.

- A process for bias testing and ongoing model performance monitoring has been defined.

How to Read Your Score

Add up your checked items. Use the table below to understand where your organization sits — and what to prioritize next.

| Score | Stage | What It Means |
| --- | --- | --- |
| 0 – 7 | Early Stage | Significant foundational work required before any AI initiative. |
| 8 – 13 | Developing | Pockets of readiness exist. Structured remediation will unlock progress. |
| 14 – 18 | Advanced | Strong base. Address remaining gaps before scaling enterprise-wide. |
| 19 – 20 | Ready to Scale | Well-positioned for enterprise AI deployment. Prioritize governance and speed. |
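If you would rather tally the checklist in a script than by hand, the scoring above can be sketched roughly as follows. This is a minimal illustration, not an official EARM tool: the function names and the example answers are hypothetical, and only the section sizes (20 items in total) and the score bands come from the checklist and table above.

```python
from typing import Dict, List

# Score bands copied from the table above: (lowest score, highest score, stage).
STAGE_BANDS = [
    (0, 7, "Early Stage"),
    (8, 13, "Developing"),
    (14, 18, "Advanced"),
    (19, 20, "Ready to Scale"),
]


def total_score(checklist: Dict[str, List[bool]]) -> int:
    """One point per checked item, summed across all checklist sections."""
    return sum(
        sum(1 for checked in items if checked) for items in checklist.values()
    )


def stage_for(score: int) -> str:
    """Map a total score to its stage using the bands above."""
    for low, high, stage in STAGE_BANDS:
        if low <= score <= high:
            return stage
    raise ValueError(f"score {score} is outside the 0-20 range")


# Hypothetical answers for illustration only. Each list has one entry per
# checklist item in that section, in the order the items appear above.
example_answers = {
    "Data Readiness": [True, True, False, True],
    "Technology & Infrastructure": [True, False, False],
    "Talent & Skills": [True, True, False],
    "Strategy & Use Case Alignment": [True, True, True],
    "Culture & Change Readiness": [False, True, False, True],
    "Ethics, Risk & Compliance": [False, False, False],
}

score = total_score(example_answers)
print(score, stage_for(score))
```

Keeping the answers grouped by pillar also makes it easy to see which sections are dragging the total down, which matters more than the headline number.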

How Consultants Assess AI Readiness in Businesses

When a specialist firm conducts an AI readiness assessment for an enterprise, it typically runs in four phases. Understanding this process helps you evaluate what you are buying and quickly spot engagements that are likely to underdeliver.

Phase 1: Discovery

This is where the real picture emerges. Structured interviews with stakeholders across the C-suite, IT, data, legal, and key business units. Current-state documentation review. System and data inventory. The goal is not to confirm what leadership believes about the organization — it’s to find where the belief and the reality diverge. That gap is almost always where the most important insights live.

Phase 2: Diagnostic

Each pillar of the EARM framework is scored independently, then benchmarked against industry peers. This is where organizations often get their first honest view of where they stand. The scoring is directional. A low score on data readiness does not mean you can’t pursue AI. It means you should sequence your AI strategy around what your data can actually support right now.

Phase 3: Gap Analysis

Blockers are identified, categorized, and prioritized along two dimensions: the impact on AI outcomes if left unaddressed, and the effort required to resolve them. This produces a clear priority stack. Some gaps are quick fixes that can be resolved in weeks. Others are structural and require months of parallel workstreams. Knowing which is which is half the strategy.
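To make the impact-versus-effort idea concrete, here is a small sketch of how a priority stack could be built. Everything in it is an assumption for illustration: the 1-to-5 impact scale, the eight-week cutoff between a quick fix and a structural gap, and the example gaps are all hypothetical, and a real engagement would calibrate them to the organization.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Gap:
    name: str
    impact: int        # assumed scale: 1 (low) to 5 (high) impact on AI outcomes
    effort_weeks: int  # rough estimate of the effort needed to close the gap


# Assumed cutoff: anything resolvable within this many weeks is a quick fix.
QUICK_FIX_MAX_WEEKS = 8


def classify(gap: Gap) -> str:
    """Label a gap as a quick fix or a structural workstream."""
    return "quick fix" if gap.effort_weeks <= QUICK_FIX_MAX_WEEKS else "structural"


def prioritize(gaps: List[Gap]) -> List[Gap]:
    """Highest impact first; among equal impact, lowest effort first."""
    return sorted(gaps, key=lambda g: (-g.impact, g.effort_weeks))


# Hypothetical gaps, loosely based on the checklist items earlier in this post.
example_gaps = [
    Gap("No upskilling roadmap", impact=3, effort_weeks=6),
    Gap("Core systems not API-accessible", impact=5, effort_weeks=30),
    Gap("No data ownership per domain", impact=5, effort_weeks=4),
    Gap("No bias testing process", impact=4, effort_weeks=10),
]

for gap in prioritize(example_gaps):
    print(f"{gap.name}: impact {gap.impact}, {gap.effort_weeks} weeks, {classify(gap)}")
```

Sorting on impact before effort is a design choice: it keeps a high-impact structural gap near the top of the stack so it can start as a parallel workstream instead of being buried under easy wins.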

Phase 4: Roadmap Delivery

The assessment closes with a sequenced, actionable plan — not a slide deck full of recommendations that no one owns. A strong roadmap includes quick wins in the first six months to build organizational confidence, mid-term infrastructure and capability investments across six to eighteen months, and transformational use-case deployment in the eighteen to thirty-six month window. Every item has an owner, a success metric, and a dependency chain.
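One way to hold a roadmap to that standard is to treat it as structured data and check it. The sketch below is illustrative only: the field names, the phase labels, and the exact month boundaries (a start before month 6, before month 18, or up to month 36) are assumptions layered on the three windows described above.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class RoadmapItem:
    name: str
    owner: str
    success_metric: str
    start_month: int  # months after the assessment closes
    depends_on: List[str] = field(default_factory=list)


def phase_for(start_month: int) -> str:
    """Assign an item to one of the three roadmap windows (assumed boundaries)."""
    if start_month < 0 or start_month > 36:
        raise ValueError("start_month must be between 0 and 36")
    if start_month < 6:
        return "quick win"
    if start_month < 18:
        return "mid-term investment"
    return "transformational"


def validate(items: List[RoadmapItem]) -> List[str]:
    """Return a list of problems: missing owners, metrics, or unknown dependencies."""
    known = {item.name for item in items}
    problems = []
    for item in items:
        if not item.owner.strip():
            problems.append(f"{item.name}: missing owner")
        if not item.success_metric.strip():
            problems.append(f"{item.name}: missing success metric")
        for dep in item.depends_on:
            if dep not in known:
                problems.append(f"{item.name}: unknown dependency '{dep}'")
    return problems


# Hypothetical roadmap entries for illustration only.
example_roadmap = [
    RoadmapItem("Pilot use case", "Head of Data", "Pilot KPI met", 2),
    RoadmapItem("Data quality remediation", "", "Quality standard enforced", 8),
    RoadmapItem("Enterprise automation", "COO", "Process cycle time", 20,
                depends_on=["Cloud upgrade"]),
]

for problem in validate(example_roadmap):
    print(problem)
```

A roadmap that passes a check like this is not automatically a good one, but one that fails it is exactly the unowned slide deck described above.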

A credible assessment takes four to eight weeks for a complex enterprise. Anything faster is either narrowly scoped or cutting corners. Anything that does not produce an actionable roadmap as the primary deliverable isn’t a strategic assessment.

AI Readiness Assessments for Enterprises: What to Expect from Providers

Not all AI readiness assessments are built the same. The market ranges from deep, custom enterprise engagements to templated online scorecards that produce a PDF and a sales pitch. Knowing the difference before you engage a provider is a meaningful time and money saver.

A robust enterprise assessment will cover all six pillars of the EARM, not just the technology stack. It will produce executive-level reporting that a board can act on, not just a technical inventory. It will include change management and governance as first-class concerns, not afterthoughts. And it will deliver a phased roadmap that the organization can immediately begin executing.

Watch for these patterns in weaker offerings:

- Technology-only scope: If the provider’s framework does not explicitly address talent, culture, and governance, they are evaluating your AI infrastructure, not your AI readiness.

- Template-first methodology: Templated assessments can work for small businesses. For enterprises, they consistently miss the organizational nuances that determine whether AI initiatives succeed or stall.

- The report trap: A thick document full of findings with no implementation path attached is a common output of providers who assess but don’t implement. The assessment has value only if someone is accountable for acting on it.

- No post-assessment continuity: The most common failure point after an assessment is not a lack of insight but a lack of execution. Providers who offer assessment as a standalone service create a handoff gap that organizations rarely bridge on their own.

The strongest providers are those who embed AI strategy consulting into the assessment itself, treating it not as a diagnostic product but as the opening chapter of an ongoing partnership.

How to Choose an AI Readiness Assessment Provider

If you are an enterprise decision-maker actively evaluating providers, here are the five criteria that consistently differentiate the engagements worth investing in from those that produce shelfware.

- Industry depth: Generic AI knowledge is table stakes. Has this provider assessed organizations in your vertical, whether that is financial services, healthcare, manufacturing, or retail? An industry-specific assessment surfaces regulatory and operational risks that a horizontal methodology will almost certainly miss.

- Methodology breadth: Ask them directly: Does your framework cover data governance, talent, culture, and compliance? If they talk mostly about technology in the discovery conversation, that’s what you will get in the deliverable.

- Post-assessment support: An assessment without implementation continuity is a map without a driver. The provider who conducts your readiness assessment should either be equipped to help you execute the roadmap or have a credible, named handoff partner who will.

- Team composition: Look for a team that includes business strategists, data engineers, and change management specialists. AI readiness is not only a technical problem, and the team structure signals what the output will prioritize.

- Proven enterprise track record: Case studies, reference clients, measurable outcomes. Ask for examples where a readiness assessment led to a successful AI deployment at a comparable organizational scale. The absence of this evidence is itself an answer.

Questions to Ask Any Provider Before You Sign

- Can you show us a case study from our industry where this assessment led to a deployed AI initiative?

- What does your framework cover beyond technology infrastructure?

- How do you handle post-assessment execution? Is that within scope or a separate engagement?

- Who specifically will be on our team, and what are their backgrounds?

- How do you measure the success of an assessment engagement?

From Assessment to Action: Build Your AI Strategy Roadmap with TechAhead

As an AI consulting company with 16+ years of enterprise delivery experience, we have seen what separates AI strategies that scale from those that stall. Our AI readiness assessments produce a clear, funded path to execution, and they are backed by our SOC 2 Type II certification and AWS Advanced Partner status.

Here’s what the transition looks like when it’s done well.

Immediately after the assessment, we prioritize converting findings into a sequenced, funded plan. Not all gaps need to be closed before AI work can begin. The EARM scoring tells you which pillars are strong enough to build on now and which need parallel remediation tracks.

In the first six months, the focus is on quick wins: use cases that are within reach given your current data and infrastructure posture, and that will build organizational confidence in AI as a value-delivery mechanism. These early wins give the C-suite evidence to maintain investment appetite and give business units a reason to engage rather than resist.

Between six and eighteen months, the work shifts to foundational investments: data quality remediation, cloud infrastructure upgrades, talent development, and governance framework implementation.

Beyond eighteen months, the transformational use cases open up. Enterprise-wide intelligent automation. Predictive systems that change how decisions are made. Customer and operational experiences that were not possible before. This is where the AI narrative reaches its potential, but only for organizations that have built the foundation in the preceding eighteen months.

One more thing worth stating clearly: readiness is not a one-time milestone. The AI landscape shifts fast. Regulatory requirements evolve. New model capabilities emerge. The organizations that sustain AI advantage are those that treat readiness assessment as a continuous practice.

Organizations with a formal AI readiness baseline achieve measurable AI ROI significantly faster than those that skip it. The assessment is not overhead. It is the strategy.

Conclusion: The Organizations That Assess First, Scale Fastest

The AI opportunity is real. So is the AI failure rate. And the gap between the two almost always comes down to preparation, specifically, whether the organization understood what it was walking into before it committed.

An AI readiness assessment does not slow you down. It makes sure you’re moving in the right direction when you do. It protects the investment you are about to make. It gives the C-suite a shared, evidence-based view of where the organization stands and what the path forward actually requires. That is why organizations work with an experienced AI development company: to turn an AI strategy from a narrative into a plan.

The question is no longer whether your organization needs AI. That conversation is over. The question now is whether you are ready to make it work in a way that generates returns.

An AI readiness assessment is a structured diagnostic that evaluates whether your organization has the data quality, technology infrastructure, talent, governance, culture, and strategic alignment required to adopt AI at scale. For enterprises, it matters because it determines whether your AI investments will deliver measurable outcomes or consume resources without producing results. Most enterprise AI failures can be traced back to a readiness gap that was never formally identified.

A thorough enterprise assessment typically runs four to eight weeks, depending on organizational complexity, the number of business units in scope, and the depth of the data and technology review. Assessments completed in less than three weeks are almost always scoped narrowly, which is useful for a single function or use case, but insufficient as the basis for an enterprise AI strategy.

A data readiness assessment is one component of a full AI readiness assessment. It focuses specifically on whether your data quality, availability, governance, and infrastructure are fit to power AI and machine learning models. An AI readiness assessment covers data readiness and five additional dimensions: technology, talent, strategy, culture, and ethics and compliance. Skipping the broader assessment and doing only a data review is a common mistake that surfaces one class of blockers while leaving the others hidden.

Costs vary significantly by scope, provider tier, and organizational complexity. Templated assessments from technology vendors can cost very little but deliver commensurately limited insight. Comprehensive enterprise assessments from specialist firms typically represent a meaningful professional services investment — but one that is routinely recovered in the avoidance of a single failed AI project. Ask providers for a clear scope and deliverable list before comparing costs.

The assessment should produce three things: a scored view of your current readiness across the EARM pillars, a prioritized gap analysis identifying your most impactful blockers, and a phased AI strategy roadmap with defined owners, timelines, and success metrics. What comes next is execution — ideally with continuity from the same team that conducted the assessment, or through a carefully managed handoff to an implementation partner.

Internal assessments are possible and better than no assessment at all. The limitation is objectivity. Internal teams almost always have organizational blind spots, such as areas where group assumptions about capability go unchallenged, or where political dynamics prevent honest scoring. An external AI development partner brings benchmark data from comparable organizations, structured methodology, and the independence to surface uncomfortable findings. For an initiative as strategically significant as enterprise AI, that objectivity is usually worth the investment.

Use the EARM scoring as your baseline and re-assess on a defined cadence, typically annually, or when there’s a significant change in your AI ambition, infrastructure, or regulatory environment. Track progress across each of the six pillars independently, since improvement in one area (such as data governance) often creates leverage in others (such as model performance). Organizations that establish a baseline and measure against it consistently move faster on AI than those that treat readiness as a one-time question.