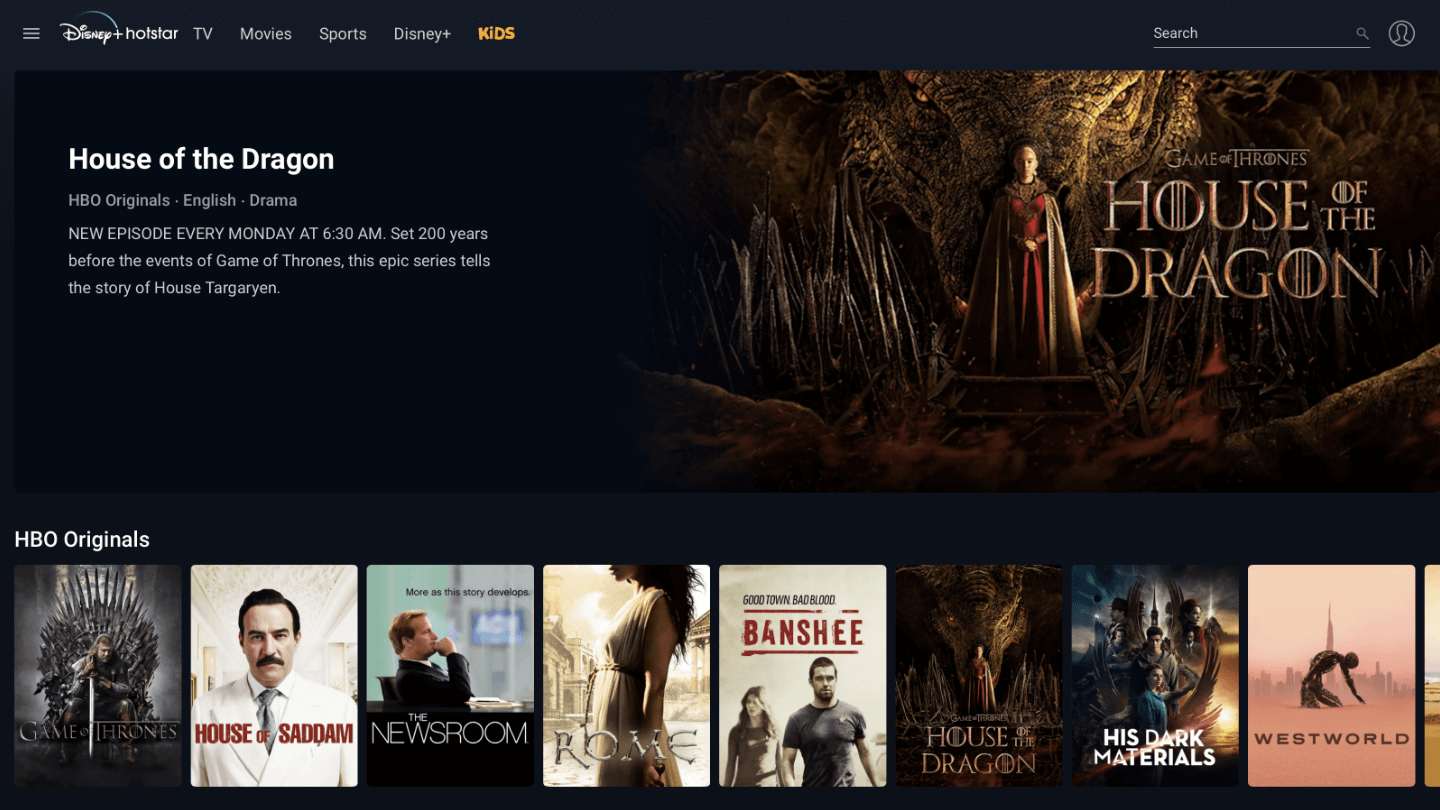

JioHotstar has again set new records for entertainment applications. On March 9 this year, more than 61 million viewers logged in to the JioHotstar (previously Disney+Hotstar) application to watch the ICC Champions Trophy Final between India and New Zealand. This year’s viewing stats are undeniable proof of JioHotstar’s success.

JioHotstar didn’t just break the previous record of 59 million views that it set during the 2023 World Cup; it set new standards and made it clear who the global leader in live sports streaming is. This many active viewers on a single mobile app is rare. The concurrent viewership exceeded the entire population of Italy, all streaming live, with zero downtime.

But how did JioHotstar manage this without overloading their servers?

In this blog, we will discuss how JioHotstar ensures this incredible scalability of the app by understanding and decoding its system architecture, concurrency, scalability models, and more.

But first, a brief introduction to the world’s second-biggest, and India’s #1 OTT platform: JioHotstar.

JioHotstar: An introduction

The journey started with the launch of the Hotstar app in 2015, which was developed by Star India. Around the same time, the 2015 Cricket World Cup was about to start, along with the 2015 IPL tournament, and Star network wanted to fully capitalize on the insane viewership.

JioHotstar generated a massive 345 million views for the World Cup and 200 million views for the IPL tournament.

This was before the launch of Jio in 2016, when watching TV series and matches on mobile was still at a nascent stage. The foundation was set.

Then came Reliance Jio’s telecom network with an unlimited 4G offer. For India, the launch of Jio changed Internet usage. More and more people could now live stream, which was perfect for JioHotstar’s growth in India.

By 2017, JioHotstar had 300 million downloads, making it the world’s second-biggest OTT app, only below Netflix.

In 2019, Hotstar was acquired by Disney as part of its 21st Century Fox acquisition, and the app was later rebranded to Disney+Hotstar.

As of now, JioHotstar has over 1 billion downloads, with a whopping 500 million active users and more than 300 million paid subscribers.

The 2025 IPL tournament set an all-time historic record, clocking a staggering 840 billion minutes of total watch time, shattering all previous benchmarks. The 2025 IPL Final alone was watched for 31.7 billion minutes, making it the most-watched T20 match in history.

In India, JioHotstar has a very intense focus on regional content, as more than 60% of the content is viewed in local languages. This is the reason they support streaming in over 19 Indian languages, with plans to expand this number. The same strategy is visible in other countries as well, with a deep focus on regional content, along with regular English content.

They have 300,000+ hours of content for viewers, and India accounts for approximately 40% of their overall user base.

As of now, the unified JioHotstar platform is available in India, while the “Hotstar” specific app serves the UK, Canada, and Singapore.

TL;DR: The 60-Million User Tech Stack

| Component/Strategy | Description & Function |

| Orchestration | Shifted from raw EC2 instances to Amazon EKS (Kubernetes) to manage over 4,000+ nodes dynamically. |

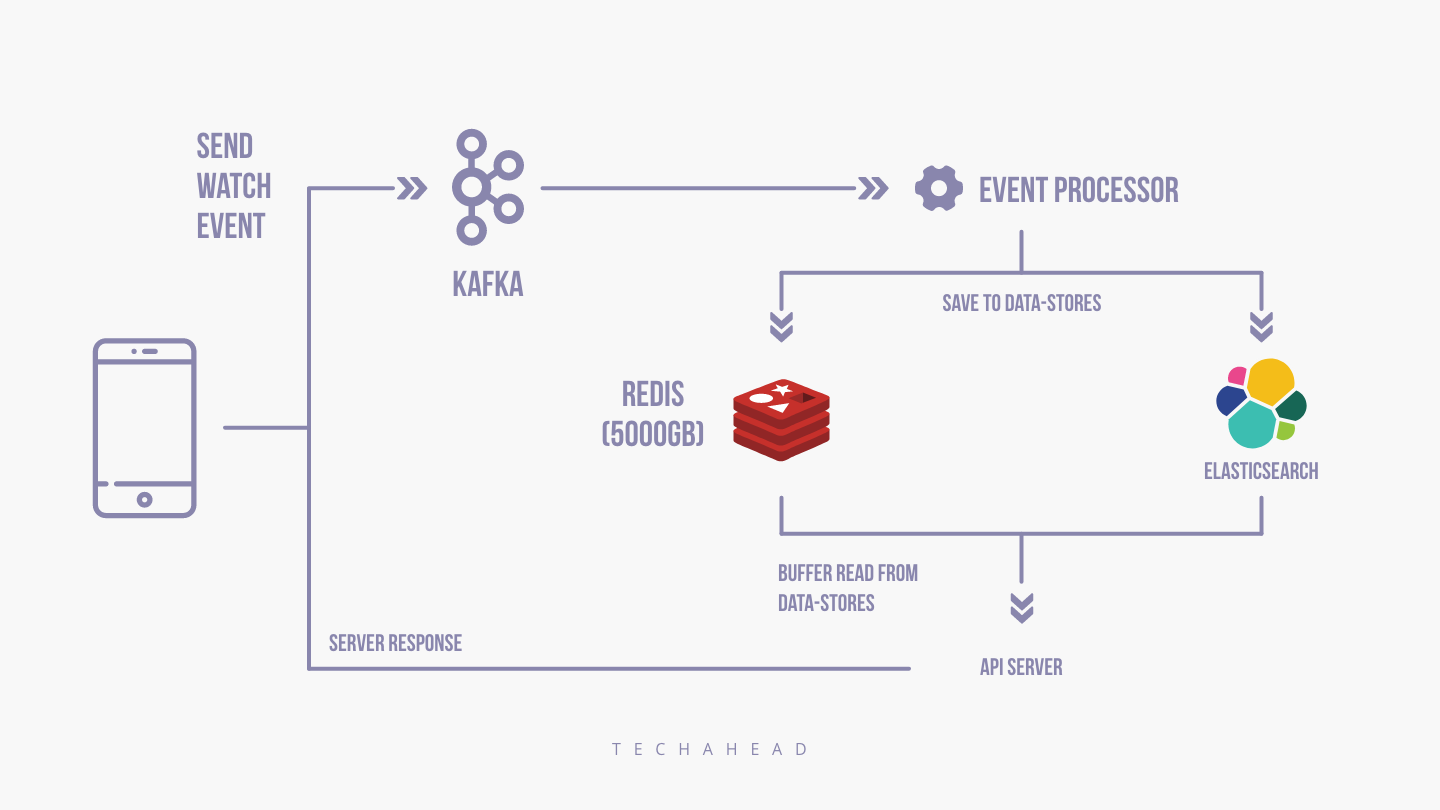

| Real-Time Data | JioHotstar makes use of Apache Flink & Kafka to process data in milliseconds for instant detection of buffering issues. |

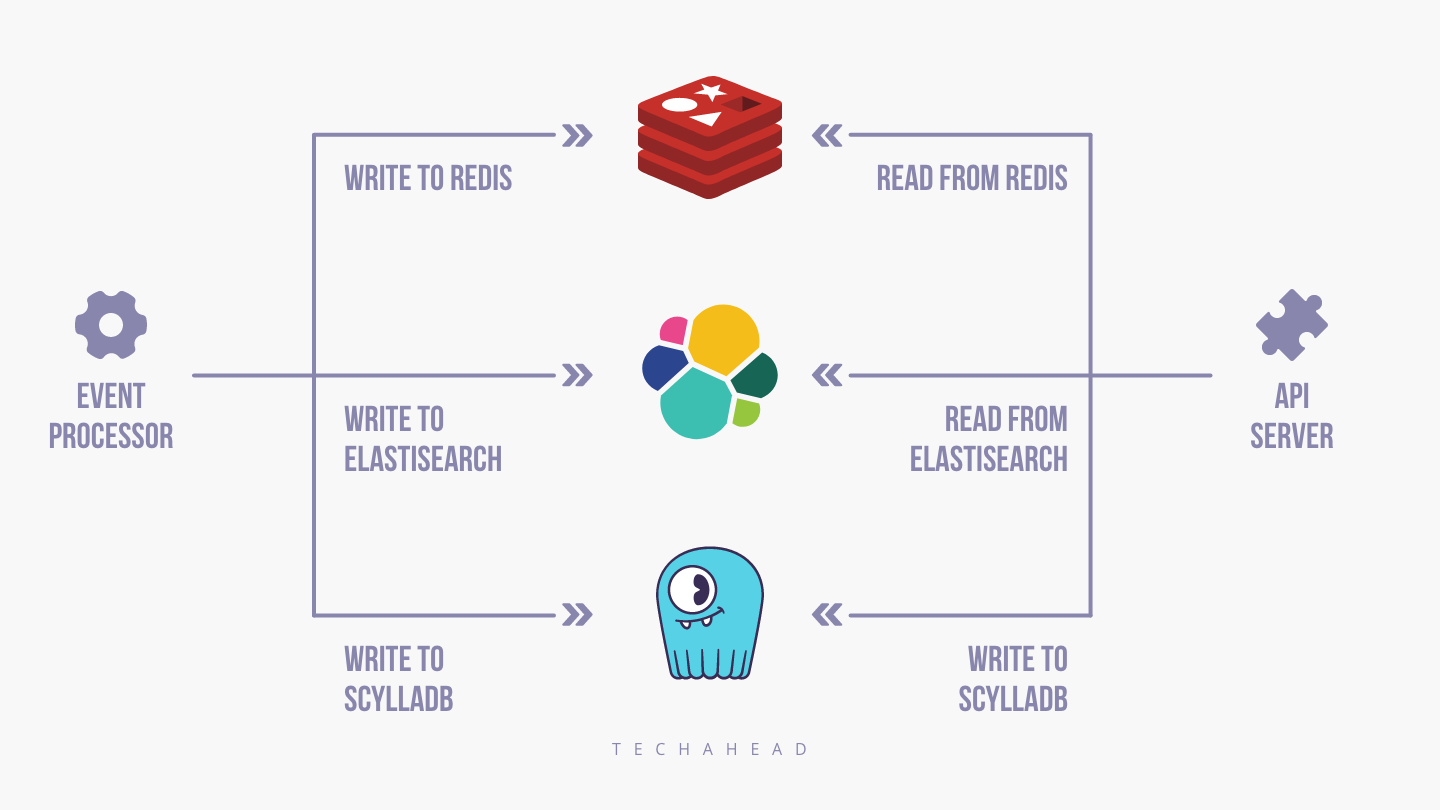

| Database | Migrated to ScyllaDB (high-speed NoSQL) and Trino for ultra-low latency data access during peak loads. |

| Scaling Strategy | Uses Predictive AI Scaling (Ladder-based) to handle sudden user traffic spikes. |

| Client Intelligence | The app features an “Intelligent Client” that automatically reduces server calls (exponential backoff) and switches CDNs if latency increases. |

How does JioHotstar’s architecture handle 60+ million viewers?

We will observe the architecture of the JioHotstar app and decode how they are able to ensure such powerful scalability on a consistent basis.

Backend of JioHotstar

The team behind Disney+Hotstar has ensured a powerful backend by choosing AWS Cloud Services, while their CDN partner is Akamai.

While the original architecture relied on raw EC2 instances, JioHotstar now also leverages the power of Amazon EKS (Elastic Kubernetes Service) to manage thousands of containers dynamically. The developers run the backend as microservices on EKS, which keeps the code easier to manage.

At the same time, they use a mixture of on-demand & spot instances to ensure that the costs are controlled. For spot instances, they use machine learning & data analytics algorithms, which drastically reduce their overall expenses of managing the backend.

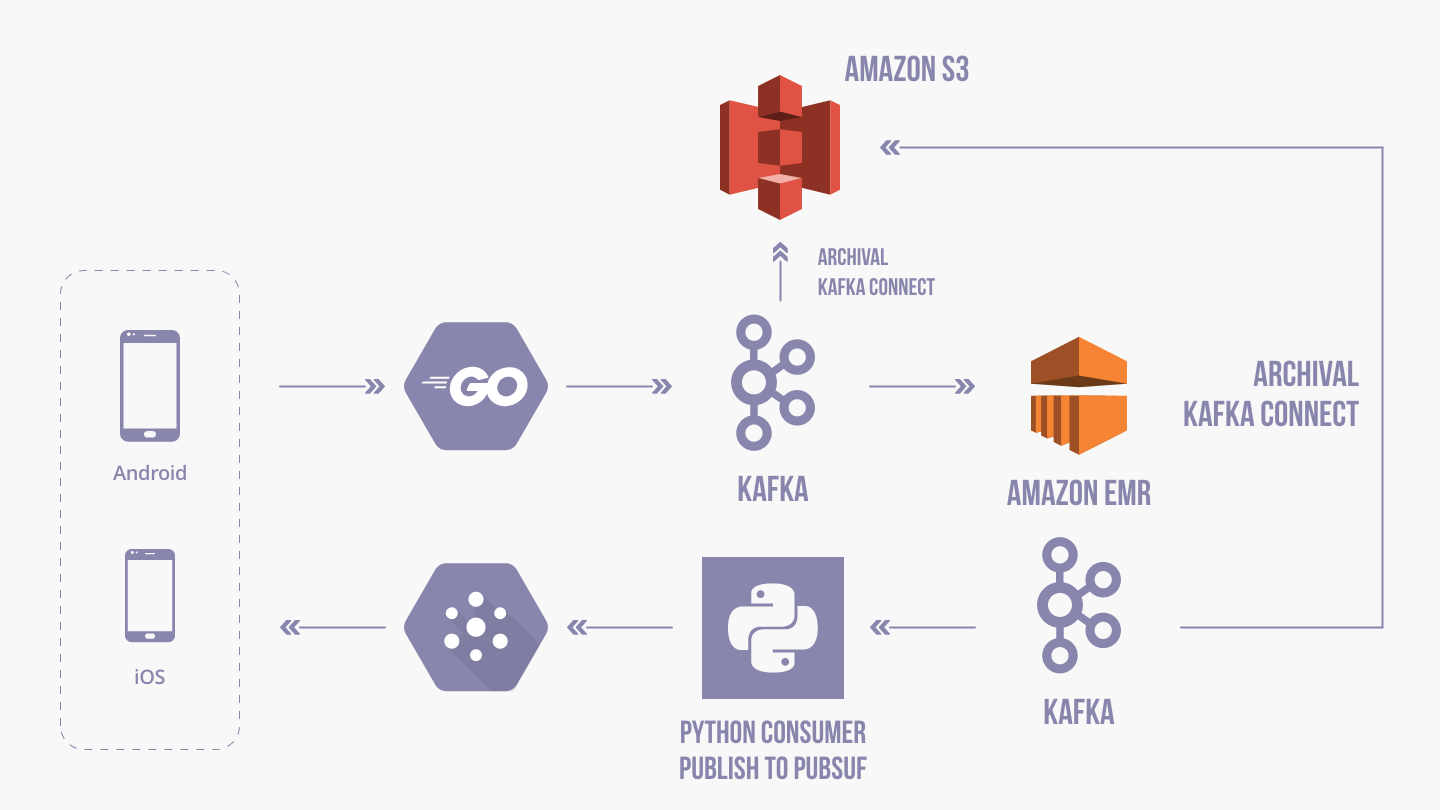

They use AWS EMR clusters to process double-digit terabytes of data on a daily basis. Apache Flink and Kafka are used to process terabytes of telemetry data in real time. This tech stack can handle sudden traffic spikes and helps in detecting buffering issues, too. Note here that AWS EMR is a managed Hadoop framework for processing massive amounts of data across EC2 instances.

In some cases, they also use Apache Spark, Trino, and ScyllaDB in sync with AWS EMR.
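To make the buffering detection concrete, here is a minimal sketch of the kind of sliding-window check a stream processor could run over playback telemetry. It is plain Python standing in for a real Flink job, and the `Heartbeat` schema, the 60-second window, and the 5% threshold are all our own assumptions, not JioHotstar's published values.

```python
from collections import deque
from dataclasses import dataclass


@dataclass
class Heartbeat:
    """One playback telemetry event (hypothetical schema)."""
    ts: float          # event time in seconds
    buffering: bool    # True if the player was stalled when it reported


class RebufferMonitor:
    """Tracks the share of stalled heartbeats over a sliding time window."""

    def __init__(self, window_s: float = 60.0, threshold: float = 0.05):
        self.window_s = window_s      # assumed window length
        self.threshold = threshold    # assumed alert threshold
        self.events = deque()
        self.stalled = 0

    def observe(self, hb: Heartbeat) -> bool:
        """Add an event; return True if the stall ratio exceeds the threshold."""
        self.events.append(hb)
        self.stalled += hb.buffering
        # Evict events that have fallen out of the window.
        while self.events[0].ts <= hb.ts - self.window_s:
            old = self.events.popleft()
            self.stalled -= old.buffering
        return self.stalled / len(self.events) > self.threshold
```

In a production pipeline, the same logic would typically be keyed by region, CDN, or ISP so that an alert points at the part of the delivery path that is struggling.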

In some cases, they also use the Presto and HBase frameworks in sync with AWS EMR.

The core of scalability: infrastructure setup

Here are some interesting details about their infrastructure setup for load testing, just before an important event such as IPL matches or cricket World Cup finals.

They have 500+ AWS EC2 instances of type c4.4xlarge or c4.8xlarge, running at 75% utilization.

Note: The system can scale to 4,000+ Kubernetes worker nodes during peak matches.

A c4.4xlarge instance has 30 GiB of RAM, and a c4.8xlarge has 60 GiB of RAM!

The entire setup of JioHotstar on the cloud infrastructure has 16 TB of RAM, 8000 CPU cores, with a peak speed of 32Gbps for data transfer. This is the scale of their operations, which ensures that millions of users are able to concurrently access live streaming on their app.

Note here that C4 instances are compute-optimized and built for CPU-intensive workloads, ensuring a low price-per-compute ratio. With C4 instances, the app has high networking performance and optimal storage performance at no additional cost.
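As a quick sanity check, the headline totals line up with the instance specs. The sketch below assumes a uniform fleet of exactly 500 c4.4xlarge instances, which is our simplification (the real fleet mixes instance sizes and the count is "500+"). The per-instance figures are the published C4 specs.

```python
# Published C4 instance specs: (vCPUs, memory in GiB).
C4_SPECS = {
    "c4.4xlarge": (16, 30),
    "c4.8xlarge": (36, 60),
}


def fleet_totals(instance_type: str, count: int) -> tuple[int, float]:
    """Return (total vCPUs, total memory in decimal TB) for a uniform fleet."""
    vcpus, mem_gib = C4_SPECS[instance_type]
    total_mem_tb = count * mem_gib * 2**30 / 1e12   # GiB -> bytes -> TB
    return count * vcpus, total_mem_tb


# Assumption: exactly 500 instances, all c4.4xlarge.
cores, mem_tb = fleet_totals("c4.4xlarge", 500)
print(cores, round(mem_tb, 1))   # 8000 16.1
```

Under that assumption, 500 instances give 8,000 cores and roughly 16 TB of RAM, matching the figures quoted above.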

On the client side, JioHotstar uses these Android components to keep the app robust and the design loosely coupled for more flexibility:

- ViewModel: For communicating with the network layer and filling the final result in LiveData.

- Room

- LifeCycleObserver

- RxJava 2

- Dagger 2 and Dagger Android

- AutoValue

- Glide 4

- Gson

- Retrofit 2 + okhttp 3

- Chuck Interceptor: An in-app HTTP inspector for OkHttp, for swift and easy debugging of all network requests directly on the device.

How does JioHotstar ensure seamless scalability?

There are two basic models for ensuring seamless scalability: Reactive (Traffic-based) and Predictive (AI-based Ladder Scaling).

In Reactive scaling, the tech team simply adds new servers and infrastructure to the pool, as the number of requests being processed by the system keeps increasing. However, this method is not reliable for handling events like millions of users joining in a very short time.
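Reactive scaling usually boils down to a proportional rule. The sketch below uses the formula documented for the Kubernetes Horizontal Pod Autoscaler as an illustration; whether JioHotstar uses this exact rule is not stated, and the utilization numbers are hypothetical.

```python
import math


def desired_replicas(current_replicas: int, current_util_pct: int,
                     target_util_pct: int) -> int:
    """Proportional rule: scale replica count by observed / target utilization."""
    return math.ceil(current_replicas * current_util_pct / target_util_pct)


# Hypothetical example: 100 replicas running at 90% against a 75% target.
print(desired_replicas(100, 90, 75))   # 120
```

The weakness is visible in the inputs: the rule only asks for more capacity after utilization has already risen, and the new servers still need time to boot. When millions of viewers join within a minute or two, that lag is too long.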

Predictive ladder-based scaling is used when the details and the nature of the incoming load are not clear in advance. For such cases, the tech team of JioHotstar has pre-defined ladders per million concurrent users. The developers also use AI models to predict the traffic and scale the system as needed.
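A ladder can be thought of as a lookup table from predicted concurrency to pre-agreed capacity. Here is a minimal sketch; every rung and node count in `LADDER` is hypothetical and chosen only for illustration, and the prediction itself (the AI model) is out of scope.

```python
import bisect

# Hypothetical ladder: (concurrent users in millions, worker nodes to provision).
LADDER = [(1, 200), (5, 800), (10, 1500), (25, 2500), (50, 3500), (65, 4000)]


def nodes_for(predicted_millions: float) -> int:
    """Pick the first rung whose concurrency covers the prediction."""
    rungs = [users for users, _ in LADDER]
    i = bisect.bisect_left(rungs, predicted_millions)
    i = min(i, len(LADDER) - 1)   # beyond the top rung: stay at the top
    return LADDER[i][1]


print(nodes_for(7))    # 1500 (the 10M rung covers a 7M prediction)
print(nodes_for(80))   # 4000 (capped at the top rung)
```

Because each rung is decided and load-tested before the event, stepping up is a known operation rather than a guess made under pressure.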

As of now, the JioHotstar app has a concurrency buffer of 10 million concurrent users, which is put to full use during peak events such as World Cup matches or IPL tournaments.

If the number of users goes beyond this concurrency level, new containers are added to the pool; each container and its application take only a few seconds to start.

To cover this time lag, the team keeps a pre-provisioned buffer, which is the opposite of auto-scaling and has proven to be a better option.
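One way to read the pre-provisioned buffer is "always provision for the current audience plus 10 million". The sketch below follows that reading; rounding up to one-million steps mirrors the per-million ladders, and the function name is our own.

```python
BUFFER_USERS = 10_000_000   # concurrency buffer quoted in the text


def capacity_target(current_users: int, step: int = 1_000_000) -> int:
    """Users to provision for: current load plus buffer, rounded up to a step."""
    needed = current_users + BUFFER_USERS
    return -(-needed // step) * step   # ceiling division without floats


print(capacity_target(23_400_000))   # 34000000
```

With this headroom in place, a sudden jump of a few million viewers is served by capacity that is already running, and the few seconds needed to start new containers never sit on the critical path.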

The team also has an in-built dashboard called Infradashboard 2.0, which helps the team to make smart decisions based on the concurrency levels and prediction models of new users during an important event.

By using Fragments, the team behind JioHotstar has taken the modularity of the Android app to the next level.

Here are some of the features that a typical page holds:

- Player

- Vertically and horizontally scrolling lists, which display other content. The type of data displayed and the UI of these lists vary based on the type of content.

- Watch and Play, Emojis.

- Heatmap and Key Moments.

- Different types of player controllers: Live, Ads, VoD (Episodes, Movies, etc.)

- Different types of ad formats

- Nudge asking the user to log in

- Nudge asking the user to pay for All Live Sports

- Chromecast

- Content Description

- Error View and more

Deploying intelligent client for seamless performance

When response latency increases for the application client and the backend is overwhelmed with new requests, established protocols absorb the sudden surge.

For instance, in such cases, the intelligent client deliberately increases the time interval between subsequent requests, giving the backend some respite.

For end users, caching and intelligent protocols ensure that this intentional time lag is not noticeable, so the user experience is not hampered.
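The interval-stretching behaviour described above can be sketched as a small pure function. The base interval, the cap, and the latency threshold below are hypothetical values chosen for illustration; the real client's tuning is not public.

```python
def next_interval(current_s: float, latency_ms: float, *,
                  base_s: float = 5.0, max_s: float = 60.0,
                  slow_ms: float = 800.0) -> float:
    """Double the polling interval while the backend is slow; reset on recovery."""
    if latency_ms > slow_ms:
        return min(current_s * 2, max_s)   # back off, but never beyond the cap
    return base_s                          # backend is healthy again


print(next_interval(5.0, 1000.0))    # 10.0
print(next_interval(40.0, 1000.0))   # 60.0 (capped)
print(next_interval(40.0, 100.0))    # 5.0
```

While the interval is stretched, the client keeps rendering from its cache, which is why the viewer does not notice the slower refresh.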

Besides, the Infradashboard continuously observes and reports every severe error and fatal exception happening on millions of devices; these are either rectified in real time or handled by a retry mechanism to ensure seamless performance.
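A retry mechanism for a client at this scale typically combines exponential backoff with random jitter, so that millions of devices do not retry in lockstep. The following is a generic sketch of that pattern, not JioHotstar's actual implementation; the attempt count and delays are assumptions.

```python
import random
import time


def call_with_retry(fn, *, attempts: int = 4, base_s: float = 0.5,
                    cap_s: float = 8.0, sleep=time.sleep, rng=random.random):
    """Call fn(); on failure wait a jittered, exponentially growing delay and retry."""
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise                      # out of attempts: surface the error
            delay = min(cap_s, base_s * 2 ** attempt)
            sleep(delay * rng())           # full jitter: uniform in [0, delay)
```

The `sleep` and `rng` parameters exist only so the function can be exercised in tests without real waiting; in an app they would keep their defaults.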

This was just the tip of the iceberg!

If you wish to know more about how JioHotstar operates, including its system architecture, database architecture, and network protocols, or if you need streaming app development, then connect with us. Our mobile app developers can help build a highly scalable app for your business.

With more than 13 years of experience in accelerating business agility & stimulating digital transformation for startups, enterprises, and SMEs, TechAhead is a pioneer in this space.

Book an appointment with our team, and find out why some of the biggest and most well-known global brands have chosen us for their Digital and Mobile Transformation.