
Content Moderation: Amyra runs a boutique specializing in clothes for working women. After repeated requests from her frequent clientele for an online asset – website or Facebook store – where they could order their clothes, Amyra decided to invest in a website with an integrated E-Commerce platform. When the website was launched, Amyra expected her orders to increase. Everything was as expected for a couple of months. Then she started observing that a couple of regulars were again requesting the catalog through WhatsApp rather than visiting the latest collection on the website. She decided to investigate. She was shocked when told that some of the reviews being posted on her website were outright offensive, accompanied by some objectionable photos as well. Hence, they were avoiding visiting the website and requesting the latest catalog through WhatsApp, as they did earlier.

What Amyra needed to do was use content moderation on her website. Monitoring the user-generated content (UGC) on an online platform and applying a pre-determined set of guidelines to screen out the offensive content is called content moderation. With the easy availability of high-speed internet and increasing use of smartphones, the amount of user-generated content on any platform, and the Internet, on the whole, is increasing by the day. As of May 2019, every minute 500 hours of video were being uploaded to YouTube. And Facebook users are uploading 350 million photos every day. This has led to an exponential increase in insensitive content as well.

How content moderation works

The easiest way of moderating content is to examine and analyze content before it gets published for public consumption. Content creators submit their content for review and it gets published only after it is approved by the moderators. In some cases, such as product reviews on an e-commerce platform, this could be frustrating for the user and could potentially decrease customer engagement. As found by AdWeek, 85% of users are more influenced by user-generated content as compared to content published directly by the brands themselves.

This can be dealt with by allowing the content to go live immediately but also putting it up for moderation in the moderators’ queue. If the content is found offensive, it can be taken down after being flagged by the content moderator. The downside here is that some users might have seen the offensive content before it is taken down. It’s up to the business to decide how much sensitive content they can tolerate on their platform before it can be moderated and taken down.
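The difference between the two approaches above comes down to when a submission becomes visible. Here is a minimal sketch in Python, assuming a hypothetical `Submission` record and `ModerationQueue` class (neither belongs to any real moderation product):

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Submission:
    author: str
    text: str
    visible: bool = False  # whether other users can currently see it


@dataclass
class ModerationQueue:
    # mode is "pre" (hold until approved) or "post" (publish, then review)
    mode: str
    pending: deque = field(default_factory=deque)

    def submit(self, item: Submission) -> None:
        # Post-moderation shows the content at once; pre-moderation hides it.
        item.visible = self.mode == "post"
        self.pending.append(item)

    def review_next(self, approve: bool) -> Submission:
        # The moderator's decision settles visibility in both modes.
        item = self.pending.popleft()
        item.visible = approve
        return item


pre = ModerationQueue(mode="pre")
post = ModerationQueue(mode="post")
review_a = Submission("user_a", "Great fit, fast delivery.")
review_b = Submission("user_b", "Some offensive remark.")
pre.submit(review_a)
post.submit(review_b)
print(review_a.visible, review_b.visible)  # False True
post.review_next(approve=False)
print(review_b.visible)  # False
```

In the post-moderation case, the window between `submit` and `review_next` is exactly the period during which users might see offensive content.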

According to the Data Bridge Market Research report, the global content moderation solutions market is set to grow at a CAGR of 10.7% till 2027. Technology leaders like Microsoft, IBM, and Alphabet are developing on-cloud as well as on-premise solutions to help businesses of all sizes moderate UGC on their platforms. Let us delve into what they actually do for your business.

How to identify sensitive content for moderation

We have repeatedly mentioned offensive content, sensitive content, and pre-determined rules for moderating content. This demands a discussion of what sensitive content is. Any content, be it text, image, video, audio, or any other form, which depicts violence, nudity, or hate speech can be termed sensitive content. The rules that determine which content is sensitive depend upon a platform’s specific requirements. If a business wants to promote freedom of speech, much content that would otherwise be screened out may be allowed.

There are many factors that must be taken into account when deciding the rules for moderation:

- Visitor demographics: If a lot of young people use an app or platform, content moderation must ensure they are not exposed to any type of sensitive content.

- User expectations: If users expect their content to be published immediately, the business will need to find a way of doing this while screening out sensitive content.

- Content quality: As technology advances, users expect a higher quality of content even after it has been moderated. So, pixelating all offensive images may not be a feasible idea anymore.

- Duplicate content: It is a real problem across the Internet, especially on discussion forums and social media sites. To maintain their integrity and reliability, businesses must remove duplicate content as quickly as possible.
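Of the factors above, duplicate content is the easiest to illustrate in code. The sketch below is one simple approach, not a method the article prescribes: it normalizes each post and compares hashes. The helper names `fingerprint` and `find_duplicates` are made up for this example, and the normalization assumes plain English text.

```python
import hashlib
import re


def fingerprint(text: str) -> str:
    # Lowercase, drop punctuation, and collapse whitespace so that
    # trivially edited copies produce the same hash.
    normalized = re.sub(r"[^a-z0-9 ]", "", text.lower())
    normalized = " ".join(normalized.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def find_duplicates(posts: list[str]) -> list[int]:
    """Return indexes of posts whose normalized text was already seen."""
    seen: set[str] = set()
    duplicates = []
    for index, post in enumerate(posts):
        key = fingerprint(post)
        if key in seen:
            duplicates.append(index)
        seen.add(key)
    return duplicates


posts = ["Buy now!!  Best deal", "buy now best deal", "Lovely fabric"]
print(find_duplicates(posts))  # [1]
```

This only catches near-exact copies. Reworded or paraphrased duplicates would need a fuzzier similarity measure.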

Content moderation is a highly specialized activity, and businesses serious about it should seek the help of experts in coming up with moderation rules and establishing systems that implement those rules successfully. Any error in content moderation can result in loss of business and credibility, as we saw in Amyra’s case.

AI helps moderate content

The huge amount of data being generated by users makes it nearly impossible for humans to moderate content on their own. Artificial Intelligence has solved this problem to a great extent. The latest approach to content moderation is a mix of Artificial Intelligence and human moderation, where artificial intelligence systems take care of most of the routine workload.

With COVID-19 forcing people to stay home, moderation efforts have suffered. Due to legal, privacy and security issues, not all types of content can be accessed from home by the content moderators. AI systems for content moderation are finding rapid adoption due to the forced non-availability of human moderators.

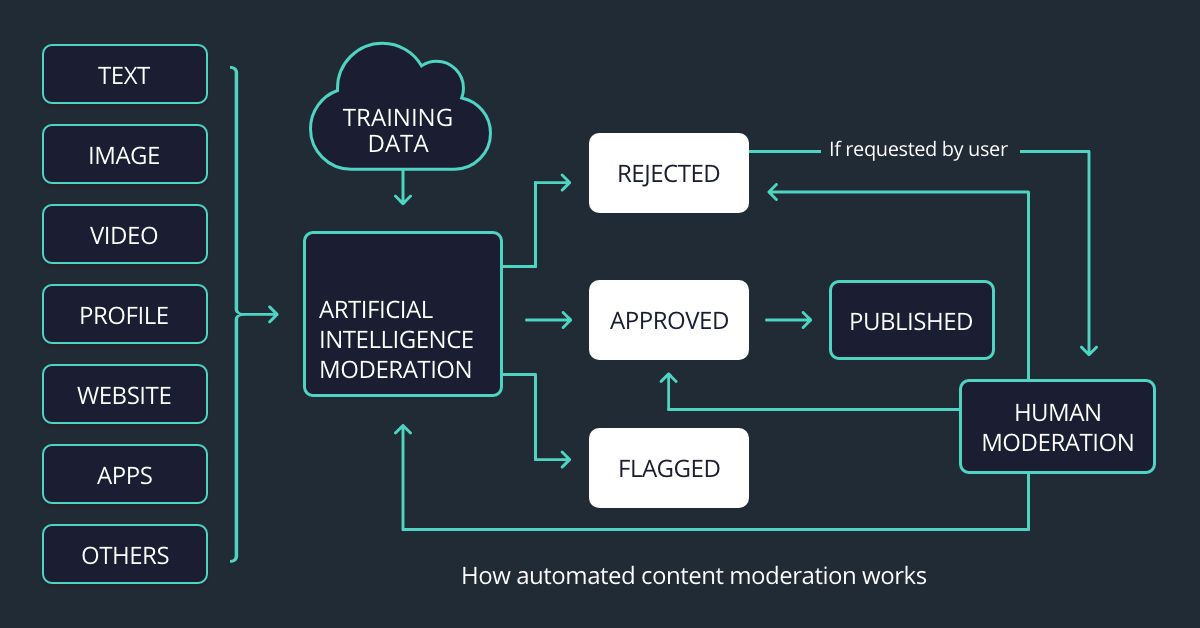

Let us understand how the AI content moderation system works in tandem with human moderators.

- All content, whether it has been published or not, is sent for moderation to the AI system.

- Based on a pre-determined set of rules and regulations fed into the system, content is screened. Offensive and racy content is then dealt with as specified in the guidelines; it may be removed completely or filtered.

- Some content is flagged for human moderation, again as per the guidelines.

- After the human moderators view the flagged content, feedback goes back into the moderation algorithm so that the AI algorithm is able to appropriately moderate the content. Thus, the system is continually trained through human expertise.

- Appropriate action is taken on the moderated content.
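The steps above can be sketched as a small routing loop. This is an illustration only: the two thresholds, the `score_fn` model stub, and the `human_decision` callback are assumptions made for this example, not part of any specific product.

```python
from typing import Callable, Iterable

REMOVE_ABOVE = 0.9  # assumed threshold: confident enough to act automatically
REVIEW_ABOVE = 0.4  # assumed threshold: the uncertain band goes to humans


def route(score: float) -> str:
    """Map a model's offensiveness score in [0, 1] to an action."""
    if score >= REMOVE_ABOVE:
        return "remove"
    if score >= REVIEW_ABOVE:
        return "human_review"
    return "publish"


def moderate(
    items: Iterable[str],
    score_fn: Callable[[str], float],
    human_decision: Callable[[str], bool],
):
    """Screen items, escalate uncertain ones, and keep human labels as feedback."""
    outcomes, feedback = {}, []
    for item in items:
        action = route(score_fn(item))
        if action == "human_review":
            offensive = human_decision(item)
            feedback.append((item, offensive))  # later used to retrain the model
            action = "remove" if offensive else "publish"
        outcomes[item] = action
    return outcomes, feedback


# Stub scores stand in for a real classifier.
stub_scores = {"nice dress": 0.05, "borderline joke": 0.6, "explicit abuse": 0.97}
outcomes, feedback = moderate(
    stub_scores,
    score_fn=stub_scores.get,
    human_decision=lambda item: item == "borderline joke",
)
print(outcomes)
print(feedback)
```

Widening the band between the two thresholds sends more content to human moderators. Narrowing it trusts the model more.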

Human moderators bring cultural context into the moderation process. They understand the psychology behind the content because they understand the type of users they are dealing with. There is always a grey area of moderation where it is not possible to state clearly whether the content is completely offensive or completely inoffensive; it depends on the context. This is where the expertise of human moderators comes into play.

Types of content that need to be moderated

More than 1 million people have been getting online every day since January 2018. The majority of them generate content on platforms that allow user submissions. If nothing else, social media engagement generates humongous amounts of UGC. This leads to an increase in the variety of content that must pass through the moderation process. Let us look at some of these types here.

Text moderation

Any website or application that accepts user-generated content can have a wide variety of text posted. If you don’t believe this, think of the comments, forum discussions, articles, etc. that you encourage your users to post. In the case of websites that have job boards or bulletin boards, the range of text to be moderated increases further. Moderating text is especially complicated because each piece of content can vary in length, format, and style.

Furthermore, language is both complex and fluid. Words and phrases that might seem innocent on their own can be put together to convey a meaning that is offensive, even if only to a specific culture or community. For the detection of cybercrimes like bullying or trolling, it is necessary to examine whole sentences or paragraphs. A blanket list of keywords or phrases that should be removed to screen out hate speech or bullying is not sufficient.
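A tiny experiment shows why a keyword list alone falls short. The blocklist and sample sentences below are invented for illustration, and `keyword_flag` is a hypothetical helper:

```python
import re

BLOCKLIST = {"idiot", "loser"}  # illustrative list, not a recommended one


def keyword_flag(text: str) -> bool:
    # Compare whole words against the list, ignoring case.
    words = re.findall(r"[a-z']+", text.lower())
    return any(word in BLOCKLIST for word in words)


samples = [
    "You are such a loser",                       # caught by the list
    "Nobody here likes you, just leave already",  # bullying, but no listed word
    "My team was the loser in a great match",     # harmless, but flagged
]
for sample in samples:
    print(keyword_flag(sample), "-", sample)
```

The second sample slips through and the third is flagged wrongly. This is the gap that sentence-level analysis and human review are meant to close.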

Image moderation

Although it is easier to moderate images as compared to text, image moderation has its own complexities. The same image that is acceptable for one country or culture may be offensive to another. If you have a website or app that accepts images from users, you will need to moderate the content depending upon the cultural expectations of the audience.

Video moderation

Moderating videos is extremely time-consuming because moderators need to watch them from start to finish. Even a single frame of offensive or sensitive content is sufficient to antagonize the viewers. If your platform allows video submissions, you will need to put in major moderation efforts to ensure community guidelines are not breached. Videos often have subtitles, transcriptions, and other forms of content attached to them. End-to-end moderation requires explicit vetting of these components as well. Essentially, video moderation can end up encapsulating text moderation as well.
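In code terms, the "single frame" rule means a video's verdict is an any-of check across its frames and its attached text. Here is a minimal sketch, assuming per-frame and per-subtitle flags have already been produced by hypothetical image and text classifiers:

```python
from dataclasses import dataclass


@dataclass
class VideoSubmission:
    frame_flags: list[bool]     # one flag per sampled frame (hypothetical image classifier)
    subtitle_flags: list[bool]  # one flag per subtitle line (hypothetical text classifier)


def video_needs_action(video: VideoSubmission) -> bool:
    # One offending frame or subtitle line is enough to hold back the whole video.
    return any(video.frame_flags) or any(video.subtitle_flags)


clean_frames_bad_subtitle = VideoSubmission(
    frame_flags=[False, False, False],
    subtitle_flags=[False, True],
)
print(video_needs_action(clean_frames_bad_subtitle))  # True
```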

Profile moderation

More and more businesses encourage their users to register on their websites or apps to understand customer behavior and expectations. However, this has added another type of content that must be moderated: user profiles. Profile moderation can appear simplistic, but it is essential to get it 100% correct. Rogue users registered on your platform can destroy your brand image and credibility. On the flip side, if you are able to vet the profiles successfully, you can rest assured that the content posted by them will need minimum moderation.

Types of content moderation

The content moderation method that you adopt should depend upon your business goals, or at least upon the goal for your application or platform. Do you want users to be able to post content quickly? Is it more important for you that no sensitive content is displayed even momentarily? Can you rely on your users themselves to moderate the content generated by their peers? Let’s discuss the different types of content moderation methods being used and then you can decide what is best for you.

Pre-moderation

As the name suggests, all user submissions are put in a queue for moderation before they are displayed on the platform. Pre-moderation ensures that no sensitive content, whether a comment, image, or video, is ever posted on a site. But this can prove to be a hurdle for online communities that want immediate and unrestricted engagement. Pre-moderation is best suited to platforms that require the highest levels of protection, like apps for children.

Post-moderation

Post-moderation allows users to publish their submissions immediately but the submissions are also added to a queue for moderation. If any sensitive content is found, it is taken down immediately. This increases the liability of the moderators because ideally there should be no inappropriate content on the platform if all content passes through the approval queue.

Reactive moderation

Platforms having a large number of community members ask the users to flag any content that they find offensive or not in line with community guidelines. This allows the moderators to focus on content that has been flagged by the largest number of users. But this can also allow sensitive content to circulate on a platform for a long duration. How long you can tolerate sensitive content being on display depends upon your business goals.
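Focusing on the most-flagged content is essentially a counting and sorting problem. A minimal sketch, assuming each entry in the hypothetical `flag_events` list is one flag from a distinct user:

```python
from collections import Counter


def review_order(flag_events: list[str], min_flags: int = 1) -> list[tuple[str, int]]:
    """Return (content_id, flag_count) pairs, most-flagged first."""
    counts = Counter(flag_events)
    return [(cid, n) for cid, n in counts.most_common() if n >= min_flags]


events = ["post-7", "post-2", "post-7", "post-9", "post-7", "post-2"]
print(review_order(events))               # [('post-7', 3), ('post-2', 2), ('post-9', 1)]
print(review_order(events, min_flags=2))  # [('post-7', 3), ('post-2', 2)]
```

Raising `min_flags` reduces moderator workload but lets lightly flagged content stay up longer, which is the trade-off described above.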

Supervisor moderation

Supervisor moderation is a more focused form of reactive moderation. Here a group of moderators is selected from the online community itself, who have the power to modify or delete submissions if they are offensive or do not follow community guidelines. However, the personal preferences of the moderators affect the moderation level. Also, because only a limited number of people are engaged in moderation, and they are not always trained in this discipline, some offensive content might escape their notice.

Automated moderation

In automated moderation, a variety of tools and techniques are used to filter, flag, or outright reject offensive content. Typically, the content passes first through an algorithm that looks for the occurrence of restricted words and verifies that content has not come from blocked IP addresses. As discussed earlier, elaborate artificial intelligence systems can be used to analyze text, image, and video content. Finally, human moderators may be involved if the automated systems flag something for their consideration.

Depending upon the sensitivity of the platform, a business can choose to publish content at any stage of moderation. For instance, text content could be published after filtering for restricted words, and moderation on its meaning and context can continue in parallel. This ensures faster publishing of content, which can be an important consideration in user-generated content.
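The first, inexpensive stage of such a pipeline might look like the sketch below. The blocked address, the restricted word, and the function name `first_pass` are placeholders chosen for this example.

```python
import re

BLOCKED_IPS = {"203.0.113.7"}    # documentation-range address, for illustration only
RESTRICTED_WORDS = {"spamword"}  # placeholder entry


def first_pass(text: str, source_ip: str) -> str:
    """Cheap synchronous checks: return 'reject' or 'publish_pending_review'."""
    if source_ip in BLOCKED_IPS:
        return "reject"
    words = set(re.findall(r"[a-z0-9']+", text.lower()))
    if words & RESTRICTED_WORDS:
        return "reject"
    # Passed the fast filters: publish now, while slower analysis of
    # meaning and context continues in parallel.
    return "publish_pending_review"


print(first_pass("Lovely dress, fits well", "198.51.100.1"))
print(first_pass("Lovely dress, fits well", "203.0.113.7"))
```

Content that returns `publish_pending_review` is live but not yet fully vetted, so a platform with stricter requirements would hold it until the later stages finish.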

Challenges in content moderation

It is clear from our discussion till now that any business having an online presence needs to moderate the user-generated content on its platforms. Here are some of the most common challenges faced by them, depending upon their size, online presence, level of user engagement, and type of content being posted by users.

- Emotional well-being of content moderators: Human content moderators are exposed to extreme and graphic violence, explicit content, and horrific experiences. Continued exposure to such content can have mental impacts like post-traumatic stress disorder, mental burnout, and other mental health disorders.

- Quick response time: Each business has multiple online platforms to manage at any given point of time, and different types of content are being uploaded every minute. On top of this, users expect the content to be moderated quickly. Managing this load is challenging even when processes are automated to a great extent.

- Catering to diverse cultures and expectations: As mentioned earlier, what is acceptable for one community may be offensive to another and vice versa. Businesses need to localize their moderation efforts so that they are able to meet the expectations of their varied audiences.

TechAhead can help design content moderation solutions

If your business has an online presence, be it a website, an app, social media handles, or discussion forums, you need to moderate user-generated content on all your platforms to build brand image and loyalty.

Our team of Azure Content Moderator solutions experts can help you analyze and understand your requirements. As discussed, establishing the guidelines to identify sensitive content is the most important first step of content moderation strategy. Our experts help in designing these guidelines and then integrate Artificial Intelligence (AI) development and Machine Learning with other technologies like Blockchain development and crowd-sourcing to develop an efficient automated content moderation solution.

Summary

Reviewing user-generated content on any online platform and screening out offensive content based on a pre-determined set of guidelines is called content moderation. Text, photos, videos, audios, or any other type of content that depicts violence, hate speech or nudity can be called sensitive content. However, the ultimate onus of categorizing any content as offensive or sensitive lies completely on the business that is moderating the content.

Establishing clear guidelines for moderating content is the most important first step towards building an effective content moderation strategy. More than 1 million people have been getting online every day since January 2018. This large number of internet users ensures that millions of photos, videos, or text posts are being uploaded on every platform continuously. It is not possible for human moderators to take on the full load of moderation.

Over the past couple of years, content moderation efforts have been distributed between artificial intelligence systems and human moderators. All content that has to be moderated is sent to the system, which screens it on the basis of the moderation guidelines given to it. Approved content is published, whereas content that the system could not classify as sensitive or not is flagged for human moderation. Human reviewers view the flagged content and decide on its appropriateness.

Human review is also sent back to the AI system as training feedback. There are different approaches to moderating content. Some businesses want to moderate content before publishing it whereas some of them publish it and simultaneously put it in a queue for moderation. Some platforms also choose moderators from their user base or allow all the users to flag content they find inappropriate.

Content moderation is emotionally very challenging for human moderators. Continued exposure to violence, hate speech, terrorism, or bullying wreaks havoc on their mental health, and some of them display symptoms of post-traumatic stress disorder. Besides responding quickly to users’ moderation expectations, businesses also need to tailor their content moderation guidelines according to the community or country their audience resides in.