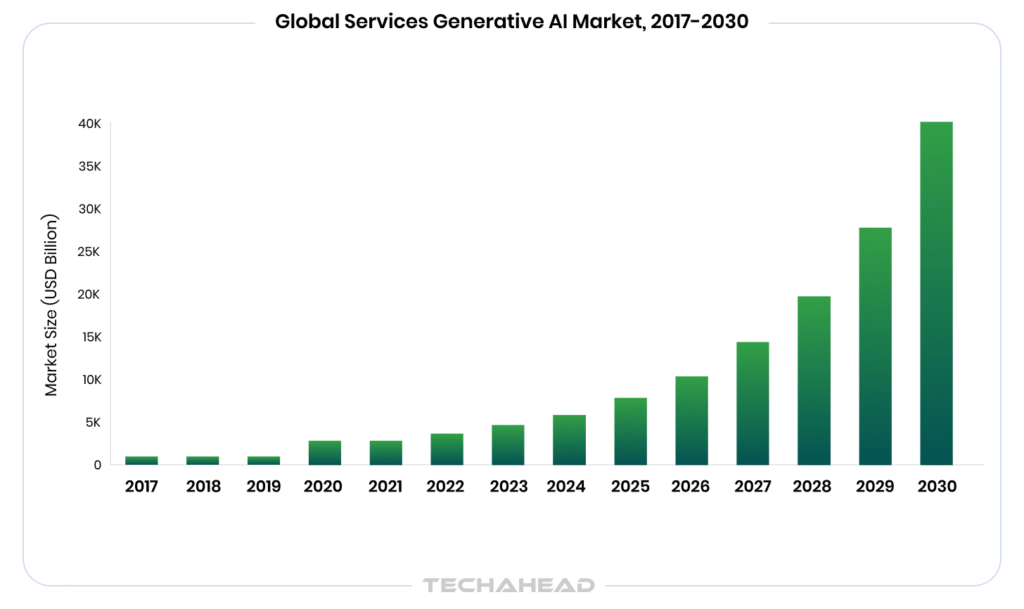

Artificial intelligence has become a business necessity; the global generative AI services market was valued at $7,976.3 million in 2025, and is projected to grow at a staggering 41.7% CAGR through 2033. Enterprises across every sector are racing to adopt GenAI. However, speed without structure is where the real danger begins.

The cybersecurity implications alone are staggering. Unguarded GenAI systems are active attack surfaces, vulnerable to prompt injection, data exfiltration, and adversarial manipulation that traditional security tools were never designed to catch. One successful breach does not just cost money. It costs customer trust, regulatory standing, and in heavily regulated industries, it can cost your operating license.

The financial exposure is equally serious. IBM’s Cost of a Data Breach Report consistently places the average enterprise breach cost above $4 million. When AI systems handle sensitive customer data, proprietary business logic, and high-stakes decisions without guardrails, that number climbs even higher; faster than most finance teams anticipate.

Yet many enterprises are still deploying GenAI with minimal controls, assuming the model itself is safe enough. It is not. The model is only as responsible as the infrastructure surrounding it. That is exactly what this blog addresses. This blog explores the exact framework, techniques, and use cases to build GenAI your enterprise can trust.

Key Takeaways

- GenAI without guardrails is an enterprise liability, not a competitive advantage.

- Confusing guardrails with governance leads to gaps that cost enterprises millions.

- Input guardrails block harmful prompts before they ever reach your AI model.

- Output guardrails catch problematic responses before they reach your end users.

- Human-in-the-loop filtering is essential for high-stakes, automated enterprise AI decisions.

Why is GenAI without Guardrails an Enterprise Liability, Not an Asset?

GenAI is powerful, however, power without control is a risk. When enterprises deploy AI systems without proper guardrails, they open the door to data leakage, biased outputs, regulatory violations that can cost millions.

Unguarded AI does not just produce errors. It exposes your business to reputational damage, legal scrutiny, and broken customer trust. For enterprise owners, that trade-off is simply not worth it.

Guardrails vs. AI Governance: Understanding the Difference Before You Build

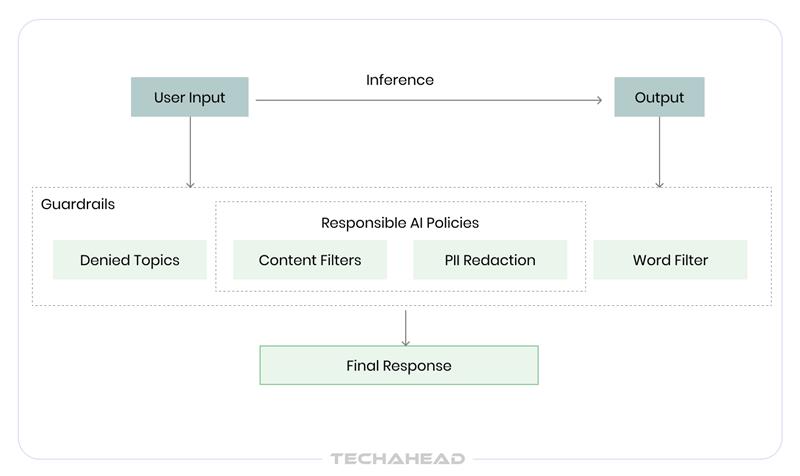

Most enterprises use guardrails and AI governance interchangeably. That is a costly mistake. Guardrails are the technical enforcement layer; the real-time controls built directly into your AI system. Governance is the broader policy framework that defines rules, accountability. One executes. The other directs. Here is the difference between these two:

| AI Guardrails | AI Governance | |

| What it is | Technical enforcement layer | Overarching policy framework |

| Who owns it | Engineering & Dev Teams | CIO, Legal & Compliance |

| When it acts | Real-time, at inference | Strategic & periodic |

| Examples | Content filters, PII redaction | AI ethics policies, audit trails |

| Goal | Block harmful outputs instantly | Ensure long-term accountability |

Think of governance as the rulebook and guardrails as the referee on the field. Without the rulebook, the referee has no direction. Without the referee, the rulebook is just paper. Enterprises that follow both, they build AI systems that scale responsibly.

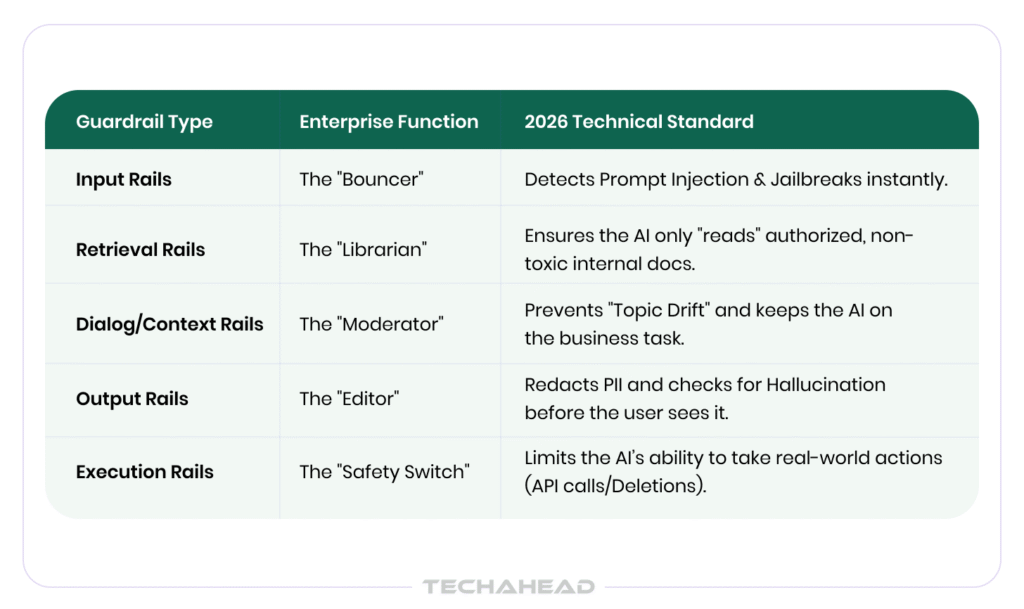

Types of Guardrails for Responsible Gen AI Systems

Not all guardrails are built the same. Depending on where and how your AI operates, you will need different layers of control working together. Here are the core types every enterprise should know:

Input Guardrails

These act as the first line of defense. Before any user prompt even reaches your AI model, input guardrails scan it for harmful intent, sensitive data, or policy violations. Think of it as a security checkpoint at the entrance.

Example: A financial services firm deploys input filters that automatically block any prompt containing account numbers, SSNs, or attempts to extract proprietary trading logic.

Output Guardrails

Even well-prompted models can produce problematic responses. Output guardrails review AI-generated content before it reaches the end user, flagging hallucinations, toxic language, or confidential information that may have slipped through.

Example: A healthcare enterprise uses output filters to make sure AI-generated patient summaries never include unverified diagnoses or non-compliant medical advice.

Contextual Guardrails

These are dynamic; they evaluate the full conversation context; not just a single message. It matters because harmful intent often builds gradually across multiple exchanges.

Example: An enterprise chatbot detects when a conversation is drifting toward a competitor comparison and gracefully redirects without exposing internal pricing data.

Role-Based Guardrails

Not every employee should have the same AI access. Role-based guardrails restrict what different users can ask or receive based on their permissions and job function.

Example: A junior sales rep can access product FAQs via AI, but only a senior manager can query revenue forecasts or customer churn data.

Together, these guardrails create a layered defense system. No single type is enough on its own, so enterprises that stack all four build AI environments that are not just powerful, but safe & audit-ready.

Content Filtering Techniques for Responsible GenAI Systems

Building a responsible GenAI system is not just about choosing the right model. It is about what you put around it. Content filtering techniques are the practical mechanisms that keep AI outputs safe and aligned with your enterprise standards. Here is what actually works.

Keyword Based Filtering

The most simple technique is to scan inputs-outputs for flagged words, phrases, or data patterns. Like brand name or competitor mentions. Fast and easy to implement. However, it does not work best as a standalone solution.

Semantic Filtering

Unlike keyword filtering, semantic filtering understands meaning. It uses NLP models to detect harmful intent even when users rephrase or disguise their prompts creatively. For example, when a user asks an enterprise HR bot “how do I get around the leave policy?” , here semantic filtering catches the intent even without an explicit policy violation keyword.

PII Detection and Redaction

Personally Identifiable Information must never flow freely through AI pipelines. PII filtering automatically detects and redacts names, emails, phone numbers, financial data before they enter or exit the model. For instance, a legal tech company automatically redacts client names, case numbers from any AI-generated document summaries before delivery.

Toxicity & Bias Detection

AI can unintentionally produce biased or harmful content. Toxicity filters score outputs against trained classifiers that detect hate speech, discriminatory language, or culturally insensitive responses.

Human-in-the-Loop Filtering

For high-stakes decisions, automation alone is not enough. Human review queues flag edge cases that automated filters are not confident about, adding a critical layer of judgment where it matters most. Here for instance, an insurance company routes any AI-generated claim decision with a confidence score below 85% to a human reviewer before sending it to the customer.

In short, no single technique covers every risk; and the best enterprise GenAI systems layer all these methods together.

Step-by-Step Implementation Guide for Responsible Gen AI Model

Deploying GenAI responsibly is a structured process. So, follow these steps to build an AI system your enterprise can actually trust.

Step 1: Define Your AI Use Case & Risk Profile

Identify What the AI Will Do: Before writing a single line of code, get clear on the purpose.

Is it customer support? Internal knowledge retrieval? Document generation? Each use case carries a different risk level that shapes every decision that follows.

Conduct a Risk Assessment: Map out what could go wrong. Data exposure, biased outputs, regulatory violations; document them early. It is your guardrail blueprint.

Step 2: Set Your Governance Framework

Set Policies Before You Build: Define acceptable use policies, data handling rules, escalation protocols. Involve your legal, compliance, and IT teams from day one, not after deployment.

Assign Ownership: Every AI system needs a responsible owner. Assign clear accountability for monitoring, updating & auditing the system on an ongoing basis.

Step 3: Layer Your Guardrails

Implement Input & Output Filters: Deploy keyword, semantic, PII filters at both ends of your AI pipeline. Start strict. You can loosen controls later, recovering from a data breach is far harder.

Configure Role-Based Access Controls: Restrict what different user roles can query or receive. Not everyone in your enterprise needs the same level of AI access.

Step 4: Test Rigorously Before Go-Live

Run Red Team Exercises: Simulate adversarial prompts, jailbreak attempts, edge cases. If your guardrails can be bypassed in testing, they will be bypassed in production.

Measure False Positive Rates: Over-filtering frustrates users and kills adoption. Calibrate your filters to balance security with usability.

Step 5: Monitor, Audit & Iterate

Set Up Real-Time Monitoring: Track flagged inputs, blocked outputs, and unusual usage patterns continuously. Threats evolve so your guardrails must too.

Schedule Regular Audits: Quarterly reviews (or Real-time Drift Detection) of your filtering rules, access controls, compliance alignment keep your system responsible as your business scales.

The enterprises that treat responsible AI as an ongoing discipline are the ones that scale confidently without costly setbacks.

Use Cases of Guardrails for Responsible GenAI Systems

Indeed, building guardrails are not a one-size-fits-all solution. Across industries, enterprises are deploying them in distinctly different ways, each tailored to their unique compliance demands, customer expectations, and operational risks.

Here is how it looks in practice:

Financial Services

Banks or investment firms handle some of the most sensitive data in existence. GenAI guardrails here enforce strict PII redaction, block unauthorized access to account data that prevent AI from generating speculative financial advice which could violate SEC regulations.

Healthcare

In healthcare, one wrong AI output can have life-altering consequences. Guardrails make sure your AI tools never generate unverified diagnoses, recommend prescription dosages, or expose protected health information. For example, you can apply it in your hospital network to prevent staff from inadvertently querying or sharing patient records outside your authorized department.

Legal and Compliance

Law firms and corporate legal teams use GenAI for document review or contract analysis. Guardrails here prevent confidential case details from leaking across matters and block AI from generating legal opinions that could constitute unauthorized practice of law.

Retail & E-Commerce

Customer-facing AI in retail must stay on-brand and accurate. Content filters block competitor mentions, prevent pricing errors from being communicated, and offer personalized promotional content following the local advertising standards.

Across every industry, the pattern is the same. Guardrails are not obstacles to AI adoption; they are what makes adoption sustainable/scalable for enterprise operations.

Frameworks for Implementing Guardrails in Responsible GenAI Systems

Every enterprise needs a structured approach. These proven frameworks translate guardrail strategy into real, operational controls your AI system enforces automatically.

Core Pillars of a Responsible GenAI Framework

Safety, Fairness, Transparency & Accountability; every GenAI framework must be anchored to four non-negotiables. Safety means outputs never cause operational or regulatory harm. Fairness prevents model bias from influencing decisions. Transparency means every AI action is logged and explainable. Accountability assigns clear human ownership to every automated decision.

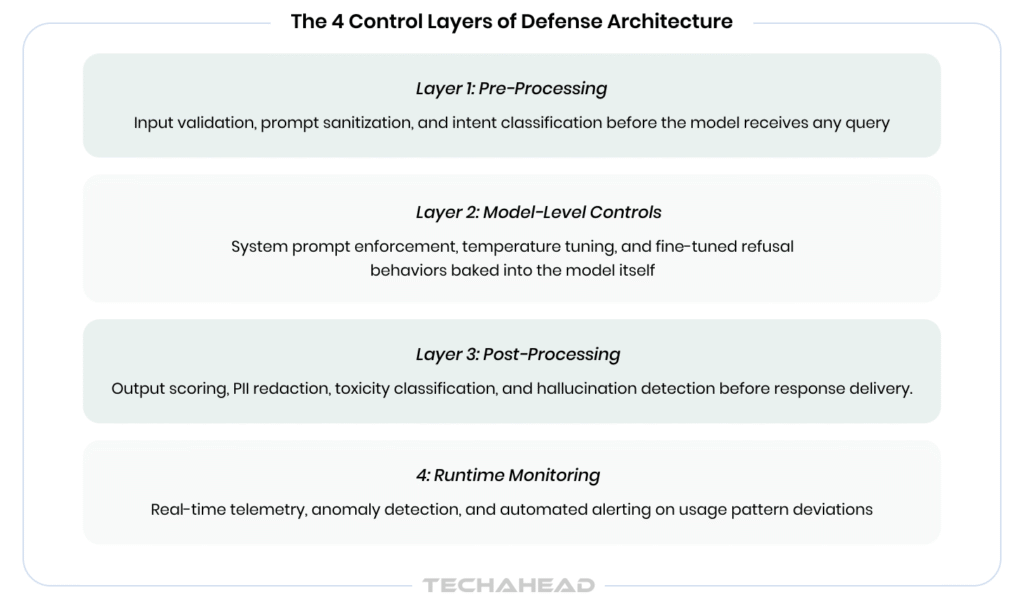

The Layered Defense Architecture

Borrowing from cybersecurity, a GenAI framework applies defense-in-depth; multiple independent control layers, each catching what the previous one missed.

Incident Response and Escalation Protocols

It means define severity tiers for guardrail breaches; from low-risk filter flags to crucial data exposure events. Integrate SIEM tools to trigger automated containment workflows when thresholds are breached, which isolate affected AI endpoints before human review begins.

Continuous Monitoring, Model Drift Detection & Iteration

AI models degrade over time so schedule quarterly red team exercises, monitor output quality metrics continuously, track model drift using statistical benchmarks.

Every audit cycle should produce documented updates to filtering rules, access policies, and escalation thresholds; keeping the framework aligned with business growth.

Conclusion

Throughout this guide, we have walked through the types of guardrails, filtering techniques, implementation steps, real-world industry use cases, and the technical framework your enterprise needs to deploy GenAI responsibly. However, you need a deliberate architecture, cross-functional ownership, and a development partner who understands both the technology and the stakes. Ready to build a responsible GenAI system for your enterprise?

As a leading GenAI app development company, we bring deep expertise in designing RAG & Gen AI systems with advanced guardrails, compliance-aligned architectures, content filtering pipelines tailored to your industry. Our team has the technical depth and enterprise experience to get it done. Schedule a free consultation with TechAhead.

A basic deployment with essential guardrails takes 8–12 weeks. However, enterprise-grade systems with full compliance alignment, custom filtering pipelines, role-based controls need 12-16 weeks.

Well-architected guardrails add 30-100ms (via SLMs) of latency; negligible for most enterprise use cases. Asynchronous filtering and edge-deployed models make sure the performance does not degrade under high-volume loads.

Flagged queries are routed to a human review queue or logged for rule refinement. Enterprises should track false positive rates monthly (or implement Real-time Drift Alerts) and recalibrate filtering thresholds to reduce unnecessary disruptions.

Treat guardrails as independent infrastructure, not model-dependent code. Version-control your filtering rules separately, and run full regression testing against new model versions before every production update.