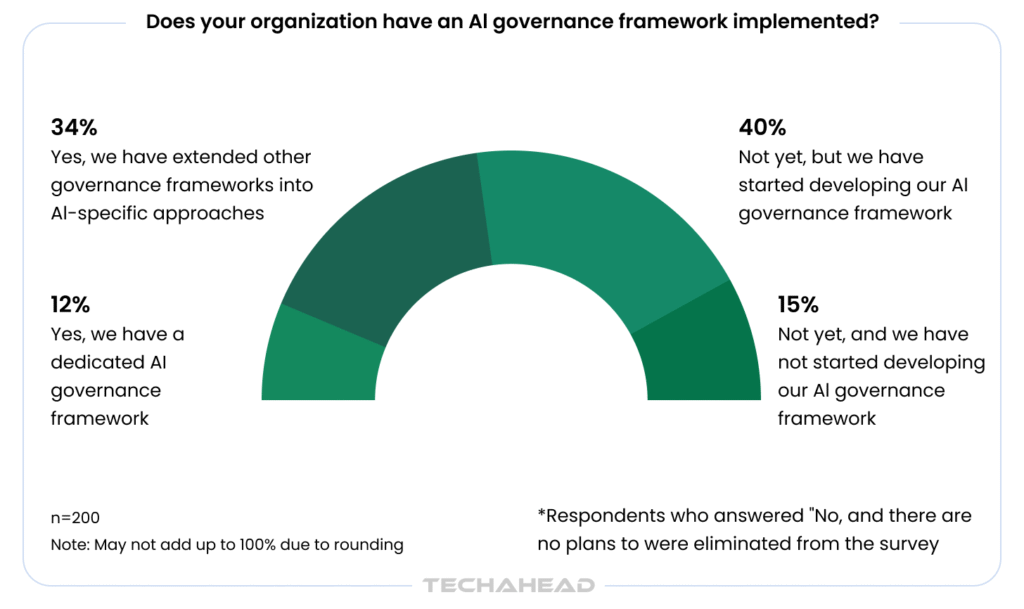

Your enterprise is likely running AI right now, but is it truly secure? The IBM X-Force 2026 Threat Intelligence Index reveals that a staggering 70% of all incidents they responded to targeted critical infrastructure. Meanwhile, a Gartner survey found that only 23% of IT leaders are truly confident in their organization’s AI governance. By 2028, these gaps are projected to drive a 30% spike in legal disputes for tech firms. So if you are deploying AI without a responsibility framework, you are not just taking a technology risk, you are taking a business risk. Organizations that deploy AI governance platforms are 3.4 times more likely to achieve high governance effectiveness than those that don’t (Gartner, 2025). This guide breaks down exactly how to protect your enterprise and build AI systems that are compliant, secure, and transparent; before regulators force your hand.

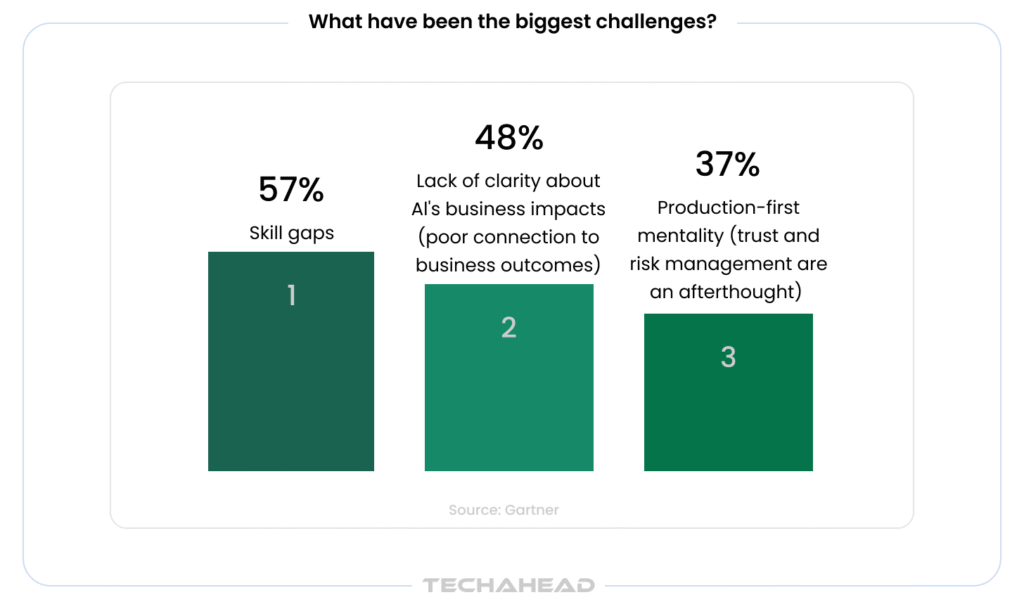

Source: Gartner

Key Takeaways

- AI cyberattacks to exceed 28M incidents in 2025.

- IBM X-Force: Vulnerabilities cause 40% incidents in 2026.

- Prompt injection risks from third-party content.

- 72% S&P 500 disclosed AI risks in 2025, up from 12%.

- AI governance platforms boost effectiveness 3.4 times, Gartner 2025.

Why Responsible AI is No Longer Optional for Enterprises?

AI adoption is accelerating, but so is the exposure that comes with it. In 2025, 72% of S&P 500 companies disclosed at least one material AI risk, up from just 12% in 2023. For enterprise owners, this is not a trend to monitor from a distance; it is a priority. The risks fall into three clear categories that directly threaten your bottom line:

Reputational Damage: One lapse, an unsafe output, a biased decision, or a failed rollout, triggering customer backlash, investor skepticism, regulatory scrutiny.

Legal & Regulatory Exposure: Global AI-driven data privacy and biometric fines exceeded $2.6 billion in 2024, with the EU AI Act now imposing steep penalties on high-risk systems.

Financial and Security Risk: Ransomware costs have surged to $5.5–6 million per incident, with 41% of breaches originating from third-party vendors.

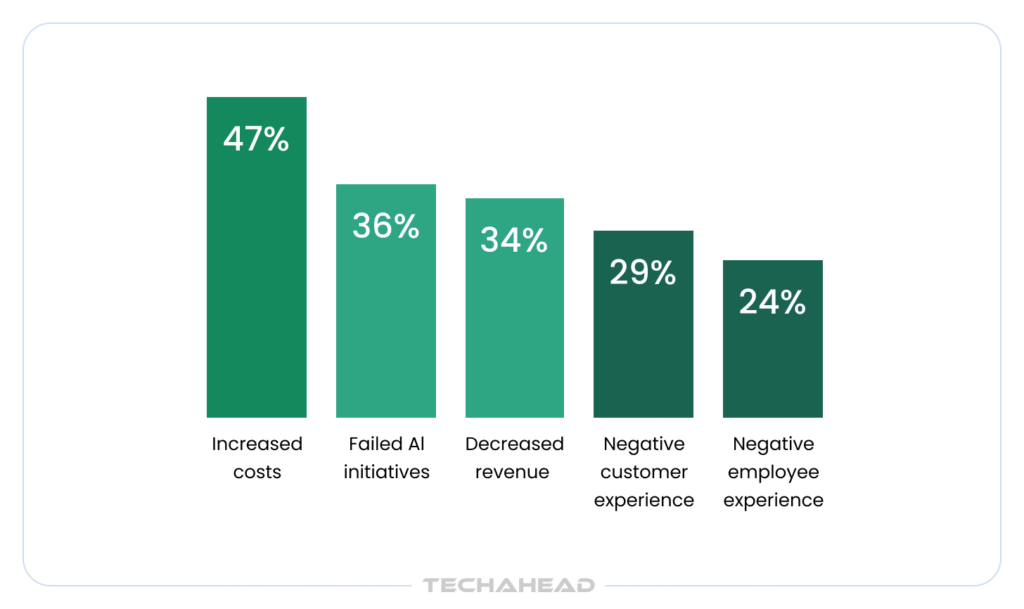

So, What negative impacts has your organization experienced due to lack of AI governance?

Source: Gartner

In many enterprises, AI adoption is moving faster than governance, regulation, workforce readiness can keep up. Closing that gap is not just about avoiding penalties; it is about protecting the trust your business is built on.

Understanding the AI Regulatory Landscape in 2026

AI regulation has officially moved from voluntary guidance to enforceable law. In 2024 alone, U.S. federal agencies introduced 59 AI-related regulations more than double the previous year. For enterprise owners, this signals one thing: the window to “prepare later” has closed.

The EU AI Act: What Every Enterprise Must Know

The EU AI Act classifies AI systems across four risk tiers: unacceptable (banned), high-risk (strict obligations), limited risk (transparency rules), and minimal risk with compliance obligations scaling to match each tier. The deadline for most enterprises is now here:

- August 2, 2026 is when requirements for high-risk AI systems become enforceable; covering AI used in employment, credit decisions, education, and law enforcement contexts.

- Violations carry fines of up to €35 million or 7% of global annual turnover, penalties that actually exceed those under GDPR.

- The Act applies to any organization deploying AI that affects people within the EU.

U.S. Federal and State AI Mandates (NIST, HIPAA, FTC)

The U.S. operates under a fragmented model. At the federal level, agencies like the FTC, NIST, and Department of Commerce are interpreting AI compliance within existing mandates. In the absence of a single federal AI law, states have taken the lead. While the federal government currently prioritizes “innovation-first” policies, states like Texas and Colorado have introduced laws that reward proactive governance. In these states, aligning with the NIST AI RMF isn’t just a best practice, it provides a “Safe Harbor” legal defense against claims of algorithmic discrimination.

Key obligations enterprises must track:

- NIST AI RMF: A highly influential voluntary framework that structures AI risk management across four pillars: Govern, Map, Measure, and Manage.

- FTC: Actively targeting “AI washing”, misleading claims about AI capabilities along with privacy violations and algorithmic pricing concerns.

- HIPAA + Sector Rules: Healthcare AI must comply with HIPAA privacy requirements, FDA medical device regulations, and state medical practice laws simultaneously.

- State Laws: The Colorado AI Act (effective June 2026) is the most comprehensive U.S. law and also emphasizes the “duty of reasonable care” which is satisfied by NIST-style frameworks.

How to Build an AI Compliance Framework That Actually Works?

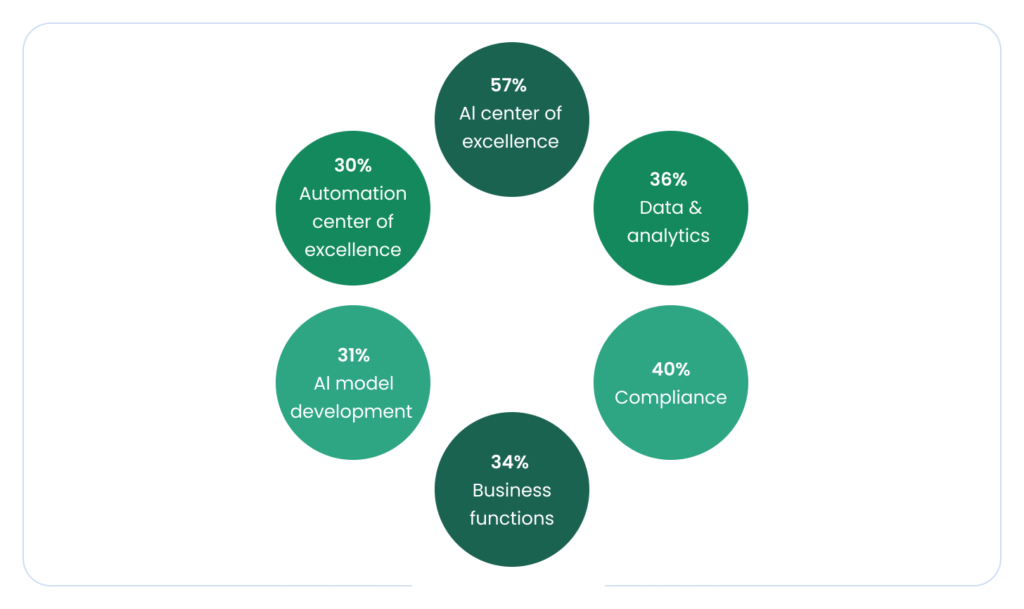

The majority (57%) of those whose organizations had already implemented an AI governance framework involved an AI center of excellence in determining AI governance policies and procedures at their organization.

Source: Gartner

Most enterprises have good intentions around AI compliance, but intentions do not hold up in a regulatory audit. Approximately 38% of enterprises have moved from ‘ad-hoc’ to ‘defined’ AI governance as of 2026. However, the gap between policy and practice is where companies get exposed. Here is how to close it.

Risk-Based Classification of AI Systems

Not all AI carries the same risk, and your compliance effort should reflect that. The EU AI Act categorizes AI systems into four tiers: Prohibited, High-Risk, Limited Risk, and Minimal Risk, each with distinct compliance obligations.

Start by mapping every AI system you deploy against these categories:

- Prohibited: Social scoring, real-time biometric surveillance prohibited with narrow law enforcement exceptions

- High-Risk: Hiring tools, credit scoring, healthcare diagnostics, require strict documentation, human oversight, and bias testing

- Limited/Minimal: Chatbots, productivity tools, transparency disclosures apply

Fines for non-compliance can reach €35 million or 7% of global annual turnover for serious EU AI Act violations.

Creating Audit Trails and Compliance Documentation

Maintaining timestamped documentation throughout AI lifecycles, which covers risk assessments, control implementations, monitoring results, and incident responses. Besides that, it also creates audit-ready evidence that holds up during regulatory inquiries.

Key documentation requirements include:

- Model versioning and change logs

- Data lineage records showing where training data originated

- Human override and intervention logs for high-risk systems

- Regular bias audit results across protected classes

Approximately 45% of enterprises have implemented a recurring AI audit schedule. So it means, if your audit trail is not continuous, it is already behind the curve.

Setting Up an AI Ethics Board

An AI Ethics Board is the governance muscle that turns written policy into daily practice. Key roles include Model Owners accountable for AI system performance, Compliance Officers tracking regulatory changes, Ethics Advisors reviewing high-risk use cases, and Security Analysts monitoring real-time AI events.

55% of organizations rank senior management sponsorship as the single most crucial factor in building a strong compliance culture.

Securing AI Systems Against Emerging Threats

AI is rewriting the rules of cybersecurity; on both sides. The same technology helping enterprises defend their systems is being weaponized against them. Global AI cyberattacks are projected to surpass 28 million incidents in 2025, a 72% year-over-year increase. For enterprises running AI-powered operations, the attack surface has never been larger.

AI-Specific Vulnerabilities Enterprises Need to Address

AI systems introduce risks that traditional security tools were not built to handle. The main threats are adversarial attacks, where malicious inputs trick models into making incorrect decisions and data poisoning. Here hackers corrupt training datasets to introduce backdoors or cause misclassifications.

Then there is the prompt injection problem. By design, LLMs cannot distinguish between instructions and passive data. It means content passed from third-party sources like documents or emails can be executed as a prompt.

Recent 2025 data reveals a staggering reality: nearly 1 in 10 prompts (8.5%) sent to GenAI tools by employees contain sensitive corporate data, ranging from unreleased source code to M&A strategy.

Data Privacy and Protection in AI Pipelines

Your AI pipeline is only as secure as its weakest integration. As of 2026, the complexity of AI workloads has significantly shifted the threat landscape: one-third of organizations have already reported a cloud data breach involving an AI workload. However, the root causes have evolved. According to the 2026 IBM X-Force Threat Intelligence Index, the era of “AI-enabled vulnerability discovery” has arrived, with vulnerabilities now accounting for 40% of incidents—a sharp increase from previous years. This is followed by 32% of breaches caused by compromised credentials and 23% linked to misconfigured cloud settings.

Compounding this technical risk is the human element. Shadow AI remains a critical blind spot; 49% of employees continue to use unsanctioned AI tools to maintain productivity, often feeding proprietary data into public models. Perhaps more concerning for enterprise owners is that over 60% of staff admit they do not understand whether their inputs are stored, analyzed, or used to train future model iterations. In 2026, “Shadow AI” isn’t just a policy violation—it is a continuous data leak that bypasses traditional perimeter security.

Human Oversight as a Security Layer

Technology alone cannot secure AI. 63% of organizations lack formal AI governance policies, and those without governance pay significantly more when AI-related incidents occur.

IBM’s X-Force found a 44% increase in attacks exploiting public-facing applications, driven by missing authentication controls and AI-enabled vulnerability discovery. (GDPR Local) Human-led oversight, regular audits, access controls, and clearly defined accountability remains the most reliable last line of defense.

Building Transparent AI: From Black Box to Explainability

When AI makes a decision (approving a loan, screening a candidate, or flagging a fraud) can your team explain why? If not, you have a transparency problem. Nearly 80% of major organizations now have an ethical charter or set of AI principles, leaving the vast majority exposed to regulatory and reputational risk.

What “Explainability by Design” Means for Your Business?

Explainability is not a feature you bolt on after deployment; it is an architectural choice made from day one. It means building AI systems where every decision has a traceable, auditable reasoning path.

Techniques like LIME and SHAP help developers understand which features drive predictions across different groups, turning opaque models into systems your teams, customers, and regulators can actually trust.

Communicating AI Decisions to Customers and Regulators

Transparency is not just internal. The EU AI Act came into force in August 2024, For the most serious violations of the EU AI Act (e.g., using prohibited AI practices), fines can reach up to €35 million or 7% of global annual turnover, whichever is higher. Enterprises must be able to clearly communicate how AI decisions are made, especially in high-stakes areas like hiring, lending, and healthcare. Public trust in AI is also declining, 61% of Americans now prioritize data privacy as “very important,” and 85% support national efforts to make AI transparent and secure before products hit the market.

Tools for AI Monitoring and Bias Detection

The right tooling makes transparency scalable. Leading platforms in 2026 include:

- IBM AIF360: open-source bias detection across pre-, in-, and post-processing stages

- Fiddler AI: real-time bias, drift, and anomaly detection with dashboards for both technical and non-technical stakeholders

- Credo AI: policy-driven governance aligned with NIST and ISO AI risk frameworks, purpose-built for regulated industries

- AWS SageMaker Clarify: detects potential bias and generates explainability metrics throughout the MLOps workflow.

How to Implement an AI Governance Framework Step by Step?

A responsible AI strategy does not start with technology, it starts with ‘structure’. Without clear ownership, defined risk processes, and ongoing oversight, even well-intentioned AI deployments can spiral into compliance failures.

Assigning Roles: Data Stewards, AI Leads, and Compliance Officers

Effective governance begins by assigning data stewards to oversee data quality. Besides that, AI leads to manage implementation, and compliance officers to handle regulatory risk. Beyond these three pillars:

- CAIO (Chief AI Officer): Owns the strategic AI roadmap and sits at the intersection of business value and ethical risk.

- Model Owners: The “Product Managers” of the AI world. They are legally accountable for the model’s output and for maintaining the “Digital Fact Sheet” required by regulators.

- Compliance Officers: track evolving regulatory requirements, conduct governance audits, and maintain documentation demonstrating compliance with applicable frameworks.

- CISOs: ensure AI governance integrates with broader cybersecurity strategies and risk management processes.

Integrating AI Risk Into Your Enterprise Risk Management

In 2026, organizations with mature AI governance—often termed ‘AI Achievers’—are realizing 30% higher ROI on AI initiatives compared to peers who skip the governance layer, primarily due to faster scaling and fewer ‘re-work’ cycles caused by bias or error.

Continuous Auditing and Monitoring Processes

Governance in 2026 requires real-time observability. Best-in-class enterprises now utilize:

- Automated Guardrails: Middleware that blocks “hallucinations” or PII leaks before the user sees the output.

- Adversarial Testing Logs: Records of “Red Teaming” exercises where the system is intentionally attacked to find weaknesses.

- Immutable Decision Logs: Using secure, timestamped ledgers (sometimes blockchain-based) to prove that a model’s decision-making process hasn’t been tampered with post-deployment.

Conclusion

In 2026, responsible AI is no longer a corporate social responsibility initiative—it is a mandatory pillar of enterprise architecture. The landscape has shifted from voluntary ethics to hard-coded legal requirements, where transparency and security are the primary currencies of trust. For enterprise owners, the challenge lies in bridging the gap between rapid innovation and rigid compliance. Building AI that is both powerful and ethical requires more than just code; it requires a partner who understands the nuances of the EU AI Act, ISO 42001, and NIST frameworks. At TechAhead, we specialize in developing custom enterprise platform solutions, “compliance-by-design”. Is your AI strategy ready for 2026? Consult with our AI experts for a comprehensive AI Risk Assessment and let’s build a future your customers can trust.

Responsible AI means deploying AI systems that are fair, transparent and secure. It protects your business from legal penalties, reputational damage, and operational failures while building long-term customer trust.

Most AI breaches in 2025/2026 did not happen through direct hacks, but through Model Supply Chain vulnerabilities. Implement access controls, encrypt sensitive data, continuously monitor AI pipelines. Regular security audits and a dedicated AI risk owner significantly reduce your exposure to costly breaches and compliance violations.

The most common mistakes include shadow AI, skipping governance frameworks, underestimating bias risks, over-automating without human oversight, and ignoring regulatory requirements. Many enterprises also deploy AI faster than their teams are trained to manage it responsibly.

Absolutely. AI governance scales to your size. SMEs can start with lightweight policies, clear role ownership, and affordable monitoring tools. You can build a solid ethical foundation well before regulatory pressure or business complexity demands a larger framework.