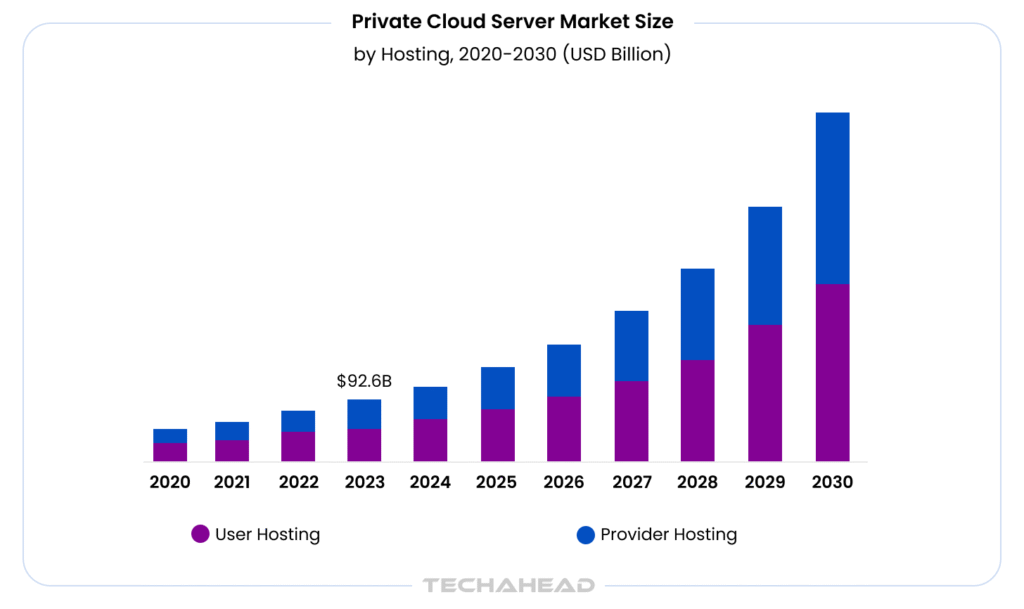

AI is rewriting the rules of enterprise infrastructure. The global private cloud server market was estimated at USD 92.56 billion in 2023 and is projected to reach USD 508.50 billion by 2030, growing at a CAGR of 28.3%. This explosive growth signals one thing clearly: enterprises are no longer settling for shared infrastructure.

Source: Grand View Research

As AI workloads grow more complex and data regulations tighten, private cloud has become a necessity. Private cloud delivers the dedicated performance, regulatory alignment, and customization that public environments simply cannot match. This blog breaks down why private cloud is the smartest infrastructure move enterprise leaders can make for AI-driven growth today.

Key Takeaways

- Private cloud gives enterprises dedicated infrastructure that runs faster, more securely, and more reliably than shared public cloud environments.

- AI workloads demand high compute power and low latency; private cloud delivers both without sharing resources with other tenants.

- Enterprises using private cloud gain full control over their data.

- Scalability in private cloud means enterprises can expand infrastructure on their own terms.

- With private cloud, enterprises own their AI infrastructure roadmap.

What is a Private Cloud for AI Workloads?

A private cloud for AI workloads is a dedicated computing environment, hosted on-premises or in a single-tenant data center, built exclusively to run AI-specific tasks such as model training, inference, and data processing. Unlike general-purpose infrastructure, it is engineered to handle the extreme compute and storage demands of AI workloads.

A recent IDC report predicts that in 2027, companies will spend more than US$30 billion on AI-related infrastructure, platforms, software, and services to support their ability to compete on highly personalized customer experiences.

Public Cloud vs. Private Cloud for AI: What is the Difference?

Public cloud platforms like AWS, Azure, and GCP offer on-demand AI compute, but at a cost. Enterprises running continuous AI workloads report 40–60% higher long-term costs on public cloud compared to equivalent private infrastructure. Public cloud also means shared, multi-tenant environments, which can conflict directly with data governance policies in regulated industries.

The best part? Private cloud gives enterprises dedicated resources, predictable pricing, and full data ownership.

Key Components of a Private Cloud Infrastructure for AI

A production-ready private cloud for AI includes:

- High-performance GPU clusters (NVIDIA A100/H100) for model training

- Low-latency storage systems like NVMe-based arrays for fast data access

- Orchestration layers such as Kubernetes with Kubeflow for managing AI pipelines

- Network fabric built on InfiniBand or high-speed Ethernet to minimize bottlenecks

According to Deloitte, 70% of infrastructure leaders cite power and grid capacity and 63% cite cybersecurity as their two biggest concerns as AI compute demand grows.

Why are Enterprise Businesses Shifting to Private Cloud for AI?

Enterprises are no longer treating AI as an experiment. It has become a core business operation. A 2025 Cloudera survey of 1,500+ IT leaders found that 63% of enterprises already store their data in private cloud environments, quietly outpacing public cloud adoption. The shift is intentional.

The Hidden Costs of Running AI on Public Cloud

Public cloud looks affordable on paper, but it rarely is in practice. Major cloud providers charge between $0.09 and $0.12 per gigabyte for data egress, and 95% of IT leaders have reported unexpected cloud storage costs as a result. For AI workloads, this is particularly painful: models do not read a dataset once but repeatedly, across epochs, checkpoints, and distributed workers, so every time training data or checkpoints cross regions or leave the provider's network, the egress charges add up again.
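To make the egress math concrete, here is a back-of-envelope estimator in Python. It uses the $0.09 to $0.12 per-GB range quoted above; the 50 TB dataset and 12 transfers per year are hypothetical inputs, and real bills depend on provider, region, and volume tiers.

```python
"""Rough egress-cost estimate for a training dataset that repeatedly
leaves a public cloud provider's network (hypothetical scenario)."""

# Per-GB egress range quoted in the article; actual rates vary by
# provider, region, and volume tier.
EGRESS_RATE_LOW_USD_PER_GB = 0.09
EGRESS_RATE_HIGH_USD_PER_GB = 0.12


def egress_cost_usd(dataset_gb: float, transfers: int, rate_per_gb: float) -> float:
    """Cost of moving `dataset_gb` out of the provider `transfers` times."""
    if dataset_gb < 0 or transfers < 0 or rate_per_gb < 0:
        raise ValueError("inputs must be non-negative")
    return dataset_gb * transfers * rate_per_gb


if __name__ == "__main__":
    # Hypothetical example: a 50 TB dataset synced out 12 times a year
    # (e.g., to on-premises GPUs or another cloud for training runs).
    dataset_gb = 50 * 1000
    transfers_per_year = 12
    low = egress_cost_usd(dataset_gb, transfers_per_year, EGRESS_RATE_LOW_USD_PER_GB)
    high = egress_cost_usd(dataset_gb, transfers_per_year, EGRESS_RATE_HIGH_USD_PER_GB)
    print(f"Annual egress: ${low:,.0f} to ${high:,.0f}")
```

Under these assumptions the dataset alone costs $54,000 to $72,000 a year in transfer fees, before any compute.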

Why Data Sovereignty Is No Longer Optional for Enterprises

Compliance is not a checkbox anymore; it is a competitive liability if ignored. Companies that failed compliance audits experience a 31% breach rate, compared to only 3% among compliant businesses.

Regulations and frameworks such as GDPR, HIPAA, and SOC 2 require strict control over where sensitive data is stored and who can access it.

Public cloud providers operate across multiple jurisdictions. Private cloud puts governance back in your hands: full audit trails, defined access controls, and zero ambiguity about where your data lives.

Private Cloud and AI Scalability: Can It Keep Up With Growing Demands?

Scalability has long been the biggest concern when enterprises consider private cloud for AI.

How Private Cloud Handles Large-Scale AI Model Training

Training large AI models demands serious compute power. Private cloud environments meet that demand with dedicated hardware clusters, such as NVIDIA DGX systems or custom bare-metal nodes. According to IDC, global spending on AI infrastructure is projected to exceed $200 billion by 2028, and a growing share of that is flowing toward on-premises and private deployments.

Auto-Scaling AI Workloads Without Compromising Performance

Yes, private clouds can auto-scale. Tools like the Kubernetes Cluster Autoscaler, combined with OpenStack’s resource scheduling, allow dynamic workload distribution. Scaling is not as instant as on AWS, because it is bounded by the hardware you have already provisioned, but it is predictable, auditable, and far more cost-efficient.
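The Cluster Autoscaler adds or removes nodes, while pod-level scaling is typically handled by the Kubernetes Horizontal Pod Autoscaler (HPA). The sketch below reimplements the HPA's documented core rule, desiredReplicas = ceil(currentReplicas × currentMetric / targetMetric), with its default 10% tolerance. Treat it as an illustration, not the controller itself: the real HPA also applies stabilization windows and scaling policies, and scaling on GPU utilization assumes a custom metrics pipeline that is not shown. The `max_replicas` cap is an assumption standing in for the fixed GPU pool of a private cluster, which is why private scaling is predictable rather than unbounded.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Replica count per the Kubernetes HPA core formula:
    ceil(current_replicas * current_metric / target_metric),
    skipped when the ratio is within `tolerance` of 1.0, then clamped."""
    if current_replicas < 1 or target_metric <= 0:
        raise ValueError("need at least one replica and a positive target")
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas  # close enough to target: avoid flapping
    else:
        desired = math.ceil(current_replicas * ratio)
    # max_replicas models the hard ceiling of a fixed private GPU pool (assumption)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # Hypothetical: 4 inference replicas at 90% GPU utilization, 60% target.
    print(desired_replicas(4, 90, 60))                  # scale out
    print(desired_replicas(4, 90, 60, max_replicas=5))  # capped by the pool
    print(desired_replicas(4, 20, 60))                  # scale in
```

Four replicas at 90% utilization against a 60% target scale to six; with `max_replicas=5`, the pool caps them at five, and at 20% utilization they scale in to two.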

GPU and Compute Resource Management in Private Cloud Environments

GPUs are expensive. Wasting them is unacceptable. Private cloud lets teams implement fine-grained GPU partitioning using technologies like NVIDIA MIG (Multi-Instance GPU), slicing a single A100 into as many as seven isolated GPU instances, each with its own compute and memory. Gartner reports that enterprises using optimized GPU resource pooling reduce infrastructure costs by up to 30% compared to unmanaged deployments.
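Deciding which jobs share which GPU is a packing problem. The Python sketch below treats each A100 as seven interchangeable compute slices and places jobs first-fit, largest request first. It is a capacity-planning aid under a simplifying assumption, not the MIG API: real MIG profiles (named like `1g.5gb`) also carry memory slices and fixed placement rules that this model ignores, and actual partitioning is done with tools such as `nvidia-smi` or the NVIDIA GPU Operator. Job names and profile labels are hypothetical.

```python
from dataclasses import dataclass, field

# Simplified model (assumption): each A100 exposes 7 compute slices for MIG,
# and a job asks for a profile such as "1g", "2g", "3g", "4g", or "7g".
# Real MIG also enforces memory slices and placement rules, ignored here.
SLICES_PER_GPU = 7
PROFILE_SLICES = {"1g": 1, "2g": 2, "3g": 3, "4g": 4, "7g": 7}


@dataclass
class Gpu:
    name: str
    free: int = SLICES_PER_GPU
    jobs: list = field(default_factory=list)


def pack_jobs(jobs: dict[str, str], gpu_count: int) -> tuple[list[Gpu], list[str]]:
    """First-fit-decreasing packing of jobs (job name -> profile) onto GPUs.
    Returns the GPUs with their assigned jobs and any jobs that did not fit."""
    gpus = [Gpu(f"gpu{i}") for i in range(gpu_count)]
    unplaced = []
    # Place the largest requests first to reduce fragmentation.
    for job, profile in sorted(jobs.items(), key=lambda kv: -PROFILE_SLICES[kv[1]]):
        need = PROFILE_SLICES[profile]
        target = next((g for g in gpus if g.free >= need), None)
        if target is None:
            unplaced.append(job)
        else:
            target.free -= need
            target.jobs.append(job)
    return gpus, unplaced


if __name__ == "__main__":
    demo = {"train-a": "4g", "infer-b": "3g", "infer-c": "2g",
            "notebook-d": "1g", "infer-e": "2g"}
    gpus, leftover = pack_jobs(demo, gpu_count=2)
    for g in gpus:
        print(g.name, g.jobs, "free slices:", g.free)
    print("unplaced:", leftover)
```

In this example, five workloads fit on two GPUs with two slices to spare, where one job per GPU would have needed five.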

How to Architect a Private Cloud for AI Workloads

Getting the architecture right from the start is everything. A poorly designed private cloud will not just underperform; it will become a liability as your AI workloads grow.

Today, 60% of enterprises use private clouds for AI data storage, and that number is climbing fast. The infrastructure decisions you make now will define how well your AI scales two or three years from now.

Choosing the Right Infrastructure Stack

Not all infrastructure is built for AI. Bare metal servers give you raw, dedicated compute power with no virtualization overhead. VMware works well when you need fast deployment and strong workload isolation.

OpenStack, on the other hand, offers a flexible open-source foundation that many enterprises prefer for long-term cost control. Servers with embedded accelerators now account for 91.8% of all AI infrastructure spending, which tells you exactly where the industry is placing its bets.

So choose your stack based on your AI pipeline’s latency requirements, not just upfront cost.

Containerization and Orchestration: Kubernetes for AI at Scale

Kubernetes is essential for scaling AI infrastructure. It lets you package AI models into containers, manage resource allocation dynamically, and deploy across nodes without manual intervention.

This matters because 82% of IT leaders have experienced performance issues with AI workloads in the past 12 months, most of which stem from poor resource orchestration. Kubernetes helps fix that. Pair it with Helm charts and GPU-aware scheduling for production-grade AI environments.
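GPU-aware scheduling in Kubernetes usually comes down to requesting the `nvidia.com/gpu` extended resource, which the NVIDIA device plugin advertises on GPU nodes. The Python sketch below builds such a Pod manifest as a dict and prints it as JSON, which `kubectl apply -f -` accepts on stdin. Assumptions to flag: the device plugin is installed, GPU nodes carry a hypothetical `accelerator=a100` label, and they are tainted with `nvidia.com/gpu` so only GPU workloads land there; the image name is a placeholder.

```python
import json


def gpu_training_pod(name: str, image: str, gpus: int = 1) -> dict:
    """Build a Pod manifest that asks the scheduler for `gpus` NVIDIA GPUs.
    Assumes the NVIDIA device plugin exposes the `nvidia.com/gpu` resource
    and that GPU nodes carry the hypothetical label accelerator=a100."""
    if gpus < 1:
        raise ValueError("request at least one GPU")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name, "labels": {"app": name}},
        "spec": {
            "restartPolicy": "Never",  # batch-style training run
            "nodeSelector": {"accelerator": "a100"},  # hypothetical node label
            "tolerations": [{
                # Assumes GPU nodes are tainted to keep non-GPU pods off them.
                "key": "nvidia.com/gpu",
                "operator": "Exists",
                "effect": "NoSchedule",
            }],
            "containers": [{
                "name": "trainer",
                "image": image,
                "resources": {
                    # GPUs are requested via limits; Kubernetes uses the limit
                    # as the request and never overcommits GPUs.
                    "limits": {"nvidia.com/gpu": gpus},
                },
            }],
        },
    }


if __name__ == "__main__":
    manifest = gpu_training_pod("resnet-train", "registry.example.com/trainer:latest", gpus=2)
    # Pipe this output to `kubectl apply -f -` to submit it.
    print(json.dumps(manifest, indent=2))
```

In production the same spec would typically be templated through a Helm chart rather than generated ad hoc.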

Designing for High Availability and Disaster Recovery

Downtime in an AI environment is not just an inconvenience; it means lost revenue and broken pipelines. A solid high-availability (HA) design includes redundant compute nodes, distributed storage, and automated failover.

The share of enterprises reporting latency challenges with AI workloads has surged from 32% to 53% year over year, making resilient architecture non-negotiable. Implement geo-redundant backups, define your recovery time objective (RTO) and recovery point objective (RPO) upfront, and test your recovery procedures regularly.
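Two back-of-envelope checks help when sizing HA and setting an RPO. With N redundant nodes where any one can serve, combined availability is 1 − (1 − a)^N; with periodic backups, worst-case data loss is roughly the backup interval plus replication lag. The Python sketch below encodes both. It assumes independent failures and instant failover, which makes the availability figure optimistic, and all inputs are hypothetical.

```python
HOURS_PER_YEAR = 24 * 365


def redundant_availability(node_availability: float, nodes: int) -> float:
    """Availability of N redundant nodes where any one can serve traffic,
    assuming independent failures and instant failover (both optimistic)."""
    if not 0.0 <= node_availability <= 1.0 or nodes < 1:
        raise ValueError("availability must be in [0, 1] and nodes >= 1")
    return 1.0 - (1.0 - node_availability) ** nodes


def expected_downtime_hours(availability: float) -> float:
    """Expected downtime per year at a given availability."""
    return (1.0 - availability) * HOURS_PER_YEAR


def meets_rpo(backup_interval_min: float, replication_lag_min: float,
              rpo_target_min: float) -> bool:
    """Worst-case data loss with periodic backups is roughly the backup
    interval plus replication lag; compare it with the RPO target."""
    return backup_interval_min + replication_lag_min <= rpo_target_min


if __name__ == "__main__":
    single = 0.99
    paired = redundant_availability(single, nodes=2)
    print(f"1 node:  {expected_downtime_hours(single):.1f} h/yr downtime")
    print(f"2 nodes: {expected_downtime_hours(paired):.2f} h/yr downtime")
    print("15-min backups, 2-min lag, 30-min RPO:", meets_rpo(15, 2, 30))
```

Going from one 99% node to two cuts expected downtime from about 87.6 hours to under an hour per year, which is why redundancy comes before almost any other HA investment.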

Private Cloud vs. Hybrid Cloud for AI: Which is Right for Your Enterprise?

Choosing the wrong cloud model for AI workloads is not just a technical misstep; it is a costly business decision. And the stakes are high: a Cloudera survey cites 96% AI integration and a 62% preference for hybrid security. So which model actually fits your enterprise needs?

It depends. Heavily.

A private cloud gives you maximum control: dedicated infrastructure, isolated environments, and full data sovereignty. For industries like banking, healthcare, or defense, where compliance is non-negotiable, it is often the only viable path. Financial institutions remain strong believers in this model, with 63% of them hosting crucial applications in private clouds.

That control comes at a cost. Private cloud demands significant capital investment in hardware and maintenance, and not every enterprise is ready for that.

When a Hybrid Cloud Model Makes More Sense

Sometimes, you do not need to choose one over the other. Hybrid cloud lets you have it both ways, running sensitive AI workloads on private infrastructure while tapping into the elastic compute power of public cloud.

Sensitive or latency-critical workloads stay on dedicated nodes, while elastic bursts, AI training jobs, and global user traffic land on hyperscale regions. As of 2025, 92% of organizations operate in hybrid or multi-cloud environments. The reasons are practical: flexibility, cost efficiency, and the ability to meet data localization laws without abandoning cloud-native capabilities altogether.
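The hybrid split described here boils down to a placement rule. The Python sketch below encodes it under stated assumptions: the `Workload` fields, the 20 ms latency threshold, and the choice to keep steady, non-sensitive load on private hardware (consistent with this article's cost argument for continuous workloads) are illustrative, not a standard policy.

```python
from dataclasses import dataclass
from enum import Enum


class Target(Enum):
    PRIVATE = "private"
    PUBLIC = "public"


@dataclass
class Workload:
    name: str
    handles_regulated_data: bool  # e.g., PHI or cardholder data (assumed flag)
    latency_budget_ms: float      # end-to-end latency the workload tolerates
    bursty: bool                  # short-lived demand spikes vs. steady load


# Hypothetical threshold: below this budget, keep the workload on dedicated nodes.
LATENCY_CRITICAL_MS = 20.0


def place(w: Workload) -> Target:
    """Route a workload following the hybrid pattern (illustrative rules)."""
    if w.handles_regulated_data:
        return Target.PRIVATE  # compliance overrides every other factor
    if w.latency_budget_ms < LATENCY_CRITICAL_MS:
        return Target.PRIVATE  # latency-critical: dedicated nodes
    if w.bursty:
        return Target.PUBLIC   # elastic bursts and ad hoc training: hyperscale regions
    return Target.PRIVATE      # steady load: amortize owned hardware


if __name__ == "__main__":
    for w in [
        Workload("claims-model", True, 500, True),
        Workload("fraud-scoring", False, 10, False),
        Workload("hyperparam-sweep", False, 5000, True),
    ]:
        print(w.name, "->", place(w).value)
```

Ordering the checks this way means a regulated workload stays private even when it is bursty, which mirrors the "start with what is regulated" principle.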

Hybrid also makes sense when your AI roadmap is still evolving. You get agility now without over-committing infrastructure budget upfront.

Migration Path: Moving AI Workloads From Public to Private Cloud

Migration is not a flip of a switch; it is a phased strategy. Most enterprises start by identifying which AI workloads carry the strictest compliance requirements.

Organizations are increasingly moving AI workloads away from public cloud to dedicated environments, driven primarily by performance, cost, and the need for hybrid flexibility.

The pattern is clear.

Start with what is regulated. Keep burst and experimental workloads in the public cloud until the ROI justifies a full shift.

One more thing worth noting: enterprises with hybrid cloud deployments report 23% lower operational costs on average compared to traditional on-premises environments.

The bottom line?

Private cloud wins on control and compliance. Hybrid wins on flexibility. The right answer depends on your data sensitivity and growth trajectory.

Real-World Use Cases: Enterprises Winning With Private Cloud AI

Across industries, the world’s most demanding enterprises are not experimenting with private cloud AI; they are scaling it.

And the outcomes speak for themselves.

Financial Services: JPMorgan Chase

JPMorgan Chase runs an $18 billion annual technology budget with over 450 AI use cases currently in production. Their flagship tool, LLM Suite, reached 200,000 employees in just eight months. All built on secure, controlled infrastructure.

Why private cloud? Because when you are managing $4 trillion in assets, you simply cannot expose sensitive financial models. The bank’s infrastructure strategy centers on consolidating from 32 data centers down to roughly 20 highly automated facilities.

The result? Investment bankers producing pitch decks in 30 seconds, work that used to take junior analysts hours.

Healthcare: Mayo Clinic

Healthcare is arguably the highest-stakes industry for data privacy. Mayo Clinic understands this deeply. The institution partnered with NVIDIA to launch a dedicated AI computing platform to support foundation models for digital pathology. All within a controlled infrastructure environment.

Mayo also built 34 AI-powered “virtual workers” that now handle millions of revenue-related tasks. From claims submission to coding accuracy, patient data never leaves a secured environment. HIPAA compliance is not an afterthought; it is built into the architecture from day one.

Manufacturing: Siemens

Siemens has deployed private cloud infrastructure to power predictive maintenance and quality control. Running AI workloads on dedicated infrastructure enables millisecond-level response times on the factory floor, something public cloud latency simply cannot guarantee.

It also means full control over proprietary manufacturing process data. No vendor lock-in. No risk of competitors accessing your operational intelligence through shared infrastructure layers.

Common Challenges When Building a Private Cloud for AI

Most enterprises do not fail at the technology level; they fail at the decisions made before a single server is racked.

Here are the four mistakes that consistently derail private cloud AI projects:

1. Underestimating GPU Infrastructure Needs: Teams plan for today’s workloads, but AI scales faster than any roadmap predicts, and compute becomes a bottleneck within months.

2. Ignoring Network Architecture Early On: High-speed interconnects between storage, compute, and GPUs are non-negotiable for AI. Retrofitting this later is expensive and disruptive.

3. Treating Security as an Add-On: Compliance controls bolted on after deployment create gaps. Security must be designed in, not patched in.

4. No Clear Data Pipeline Strategy: AI is only as good as its data flow. Without structured ingestion, storage, and versioning, model performance suffers.
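The first mistake is largely an arithmetic problem. A minimal way to check your runway is to assume GPU demand grows at a compound monthly rate g and solve current × (1 + g)^t = capacity for t. The Python sketch below does that; the growth rate and GPU counts are hypothetical planning inputs, and real demand rarely grows this smoothly.

```python
import math


def months_until_gpu_shortfall(current_gpus_needed: float, capacity_gpus: float,
                               monthly_growth: float) -> float:
    """Months until demand, growing at a compound monthly rate, exceeds capacity.
    Solves current * (1 + g) ** t = capacity for t."""
    if current_gpus_needed <= 0 or capacity_gpus <= 0:
        raise ValueError("demand and capacity must be positive")
    if current_gpus_needed >= capacity_gpus:
        return 0.0       # already at or over capacity
    if monthly_growth <= 0:
        return math.inf  # flat or shrinking demand never runs out
    return math.log(capacity_gpus / current_gpus_needed) / math.log1p(monthly_growth)


if __name__ == "__main__":
    # Hypothetical cluster: 48 GPUs busy today, 64 installed, 10% monthly growth.
    runway = months_until_gpu_shortfall(48, 64, 0.10)
    print(f"Capacity runs out in about {runway:.1f} months")
```

With 48 GPUs of demand, 64 installed, and 10% monthly growth, the runway is roughly three months, which may well be shorter than your hardware procurement lead time.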

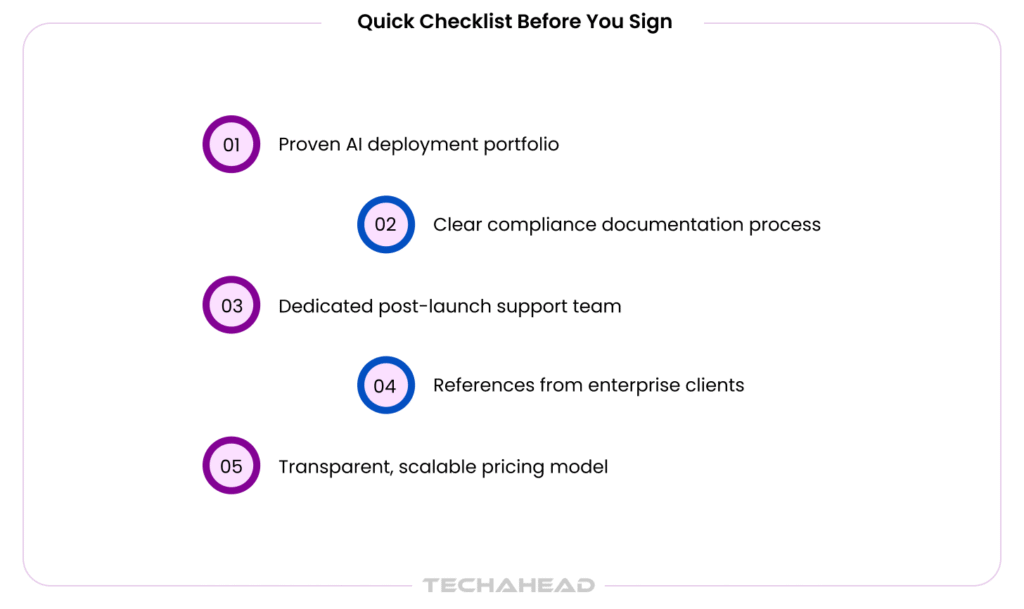

How to Choose the Right Private Cloud Development Partner for AI

Choosing the wrong partner doesn’t just slow you down; it costs you. A lot. Enterprise AI spending hit $97.2 billion in 2025, yet 70–85% of AI initiatives (McKinsey cites roughly 80–90%) still fail to meet expected outcomes. The difference between success and failure often comes down to who builds your infrastructure.

Key Questions to Ask Before Hiring a Cloud Solutions Provider

Not every cloud vendor understands AI workloads. Ask these before signing anything.

- Do you have experience deploying GPU-intensive AI environments? AI training and inference require specialized compute management. General-purpose cloud builders often lack this depth.

- How do you handle compliance and data residency? This is non-negotiable. Your partner must understand GDPR, HIPAA, and SOC 2.

- What does your post-deployment support look like? Deployment is step one. Ongoing optimization, monitoring, and patching matter just as much. Ask for SLAs in writing.

- Can you show proven enterprise case studies? Request references from companies of similar size and industry.

What to Look for in an Enterprise AI Infrastructure Partner

The right partner does more than provision servers. They think ahead.

- Deep AI-specific expertise: Look for teams experienced in MLOps, model training pipelines, and inference optimization. Not just “cloud architects.”

- Compliance-first mindset: Companies that failed compliance audits experienced a 31% breach rate, versus only 3% among compliant businesses.

- Scalability by design: 65% of organizations now use generative AI regularly, yet 74% still struggle to scale. Your infrastructure partner should solve this, not contribute to it.

- Transparent cost modeling: Hidden infrastructure costs kill ROI. Demand clarity on CapEx vs. OpEx from day one.

- Vendor-agnostic approach: Avoid partners who push you toward a single stack. The best teams build what you need, not what is easiest for them.

Conclusion

AI workloads demand more than legacy infrastructure can offer. The future of enterprise AI is not public; it is private, controlled, and built for scale. Private cloud gives enterprises the performance, privacy, and flexibility to scale AI without compromise. TechAhead is a leading cloud consulting company helping enterprises design and deploy private cloud environments. With deep technical expertise and a proven track record, we transform complex infrastructure challenges into seamless and high-performance cloud solutions tailored to your business goals.

Frequently Asked Questions

Is a private cloud the same as on-premises infrastructure?

Not exactly. A private cloud adds virtualization, automation, and self-service capabilities on top of on-premises hardware. On-premises is just infrastructure; private cloud is intelligent, scalable infrastructure, a meaningful distinction for enterprise AI environments.

Can a private cloud match public cloud speed for AI workloads?

Yes, when architected correctly. With pre-provisioned GPU clusters, Kubernetes orchestration, and auto-scaling policies, private clouds can match public cloud speed within the capacity you have provisioned. The difference is that you control the resources entirely, eliminating the noisy-neighbor issues common in shared public environments.

How does a private cloud keep AI data secure?

Private cloud keeps sensitive AI data within your controlled environment. No shared tenancy means a smaller attack surface. Combined with role-based access, encryption, and internal firewalls, your data never travels through third-party infrastructure, where interception risks are higher.
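Role-based access is simple at its core: users hold roles, roles grant permissions, and anything not explicitly granted is denied. The Python sketch below shows that deny-by-default check plus an audit record of every decision. The role names and permission strings are hypothetical; in a real private cloud this enforcement would live in Kubernetes RBAC, your identity provider, or the storage layer rather than in application code.

```python
from dataclasses import dataclass, field

# Hypothetical role-to-permission mapping for an AI platform.
ROLE_PERMISSIONS = {
    "data-scientist": {"dataset:read", "model:train", "model:read"},
    "ml-engineer": {"model:read", "model:deploy"},
    "auditor": {"audit-log:read"},
}


@dataclass
class User:
    name: str
    roles: set = field(default_factory=set)


def is_allowed(user: User, permission: str) -> bool:
    """Deny by default; allow only if one of the user's roles grants the permission."""
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in user.roles)


# In-memory audit trail of (user, permission, allowed) decisions.
audit_log = []


def access(user: User, permission: str) -> bool:
    """Check a permission and record the decision, whether allowed or denied."""
    allowed = is_allowed(user, permission)
    audit_log.append((user.name, permission, allowed))
    return allowed


if __name__ == "__main__":
    alice = User("alice", {"data-scientist"})
    bob = User("bob", {"ml-engineer"})
    print(access(alice, "dataset:read"))  # granted by data-scientist
    print(access(bob, "dataset:read"))    # not granted: denied by default
    print(audit_log)
```

Logging denials as well as grants is what turns access control into the audit trail that compliance reviews ask for.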

Who owns the data in a private cloud?

You do. Entirely. Unlike public cloud providers, whose terms can include broad data usage rights, a private cloud gives your enterprise full legal ownership and control over every dataset, model, and output, with zero third-party access by default.

How long does it take for a private cloud to break even?

Usually 18 to 36 months, depending on workload volume and infrastructure scale. Enterprises running continuous, high-compute AI operations often break even faster. After that, operational costs drop significantly compared to recurring public cloud subscription and egress fees.
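The break-even point follows from a simple model: private cloud costs an upfront CapEx plus monthly OpEx, public cloud costs a monthly bill, so payback is CapEx divided by the monthly difference. The Python sketch below computes it; every dollar figure is hypothetical, and the model ignores hardware refresh cycles, financing, utilization changes, and staffing, all of which move the result.

```python
import math


def breakeven_months(capex_usd: float, private_monthly_opex_usd: float,
                     public_monthly_cost_usd: float) -> float:
    """Months until cumulative public cloud spend exceeds private cloud spend:
    capex + opex * t = public * t  =>  t = capex / (public - opex)."""
    if capex_usd < 0:
        raise ValueError("capex must be non-negative")
    monthly_savings = public_monthly_cost_usd - private_monthly_opex_usd
    if monthly_savings <= 0:
        return math.inf  # private never pays back at these rates
    return capex_usd / monthly_savings


if __name__ == "__main__":
    # Hypothetical: $3M hardware, $60K/month to run it, vs. $180K/month public bill.
    print(f"Break-even after {breakeven_months(3_000_000, 60_000, 180_000):.0f} months")
```

With these inputs, payback lands at 25 months, inside the 18 to 36 month range above; a smaller gap between the public bill and private OpEx stretches it quickly.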