
Your AI chatbot sounds smart. It answers questions fluently. But ask it to send an email, update a spreadsheet, or summarize your actual files, and it fails completely!

Why?

Modern AI models predict text brilliantly, but they’re fundamentally disconnected from your real data, tools, and business systems.

This is the gap that Model Context Protocol (MCP) solves.

Think of it this way: before USB-C, devices used a tangle of incompatible connectors; your phone charger didn’t work with your laptop, which didn’t work with your tablet. Then USB-C arrived as a universal standard, and everything suddenly connected seamlessly. That’s what MCP does for AI.

Key Takeaways

- Model Context Protocol is AI’s universal adapter for enterprise systems.

- Ninety-seven million SDK downloads point to MCP’s rapid global adoption.

- MCP eliminates custom integrations and reduces implementation time by sixty percent.

- Eighty-six percent or more of AI agent pilots fail before production, largely due to integration complexity and governance gaps.

- MCP servers expose tools through standardized JSON-RPC 2.0 messages.

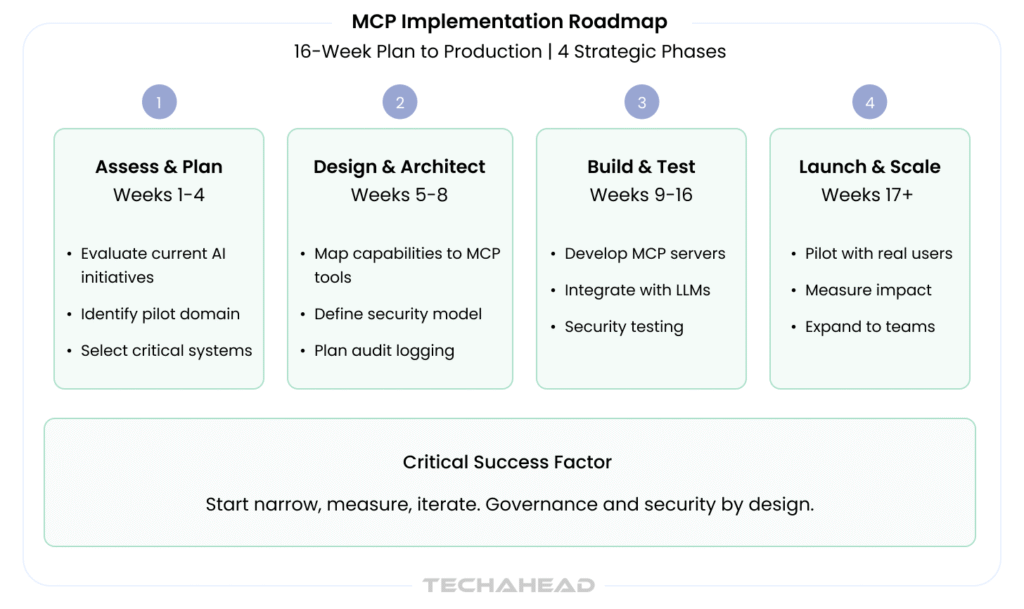

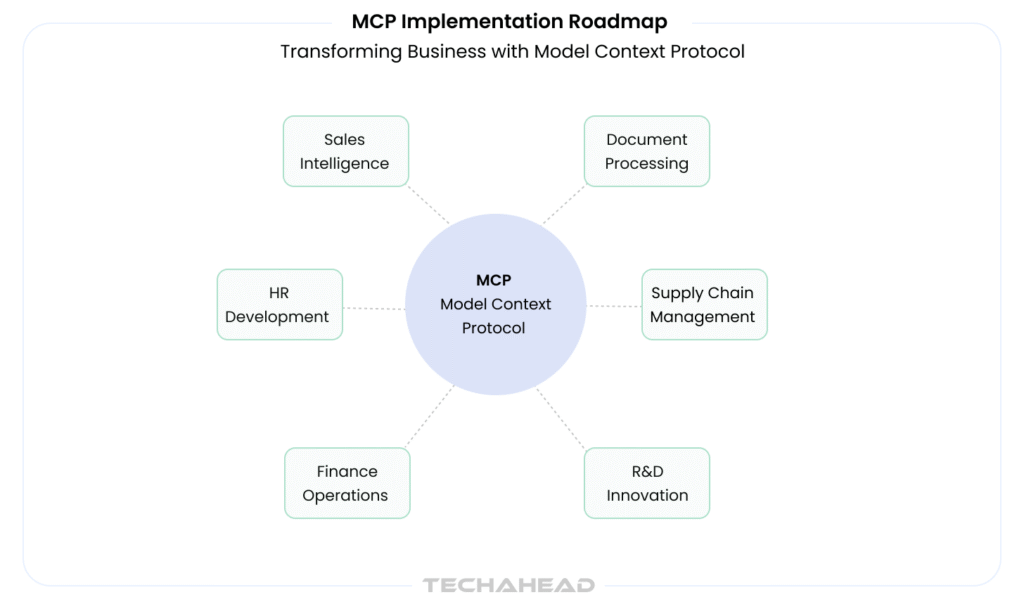

- Four-phase MCP implementation roadmap takes enterprises from assessment to production.

- Agentic AI’s future depends on protocols like MCP connecting everything.

Launched by Anthropic in November 2024, MCP is an open standard that defines how AI models connect to external tools, data sources, and services. Instead of building custom integrations every time you want your AI to access a database, CRM, or API, MCP provides a single “nervous system” that AI applications can tap into.

By the end of this guide, you’ll understand MCP’s architecture, why it matters, and how organizations are already using it to break free from “integration hell.” No jargon required: just plain-English explanations with real examples.


The AI Integration Problem: Why MCP Exists

LLMs Are Smart But Blind

Large Language Models like GPT-4, Claude, and Gemini excel at one thing: predicting the next word. They’re trained on massive amounts of text and can hold sophisticated conversations. But here’s the critical limitation: on their own, they have no direct access to your data or systems, and no way to take action in the real world.

A ChatGPT instance can’t access your email inbox. Claude can’t pull live data from your database. Neither can execute code directly or modify files without external assistance. They’re conversational, but contextually blind.

The Current Integration Nightmare

Today’s workaround is messy. Every time you want an AI to access a new tool, developers must:

- Build custom API connectors (weeks of engineering per tool)

- Manage authentication and permissions separately

- Update and maintain each integration independently

- Handle error cases and edge conditions manually

Real-world example: If you’re building an AI IDE that needs access to GitHub, documentation databases, and a debugger, that’s three separate integration projects. Add Slack, Jira, and your internal databases, and suddenly you’re managing a dozen point-to-point connections.
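The arithmetic behind this “integration tax” can be sketched with a simplified model. It assumes every AI application needs its own custom connector to every tool, while with a shared protocol each application implements one client and each tool exposes one server. The function names and the scenario are illustrative, not measured data.

```python
def point_to_point_connectors(apps: int, tools: int) -> int:
    """Custom integrations: every app needs its own connector to every tool."""
    return apps * tools


def shared_protocol_components(apps: int, tools: int) -> int:
    """Shared protocol: one client per app plus one server per tool."""
    return apps + tools


# Hypothetical scenario: the AI IDE plus two other internal AI apps, each wanting
# GitHub, docs, a debugger, Slack, Jira, and internal databases (6 tools).
apps, tools = 3, 6
print(point_to_point_connectors(apps, tools))   # 18
print(shared_protocol_components(apps, tools))  # 9
```

The gap widens with every AI application that reuses the same tools, which is the core of the argument for a shared protocol.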

Companies like Perplexity have reportedly built specialized “search hacks” to bypass these limitations. But these are band-aids, not solutions.

The Cost

Industry data shows:

- 71% of AI implementation teams spend over 25% of their time on data integration alone (CData, 2026)

- 86-89% of AI agent pilots fail before reaching production, primarily due to integration complexity and governance gaps (Forrester, 2026)

- Custom integrations introduce 3-5x maintenance costs compared to standardized approaches (McKinsey, 2026)

This is why MCP was created: to eliminate the custom integration tax.

What Is MCP? Core Definition

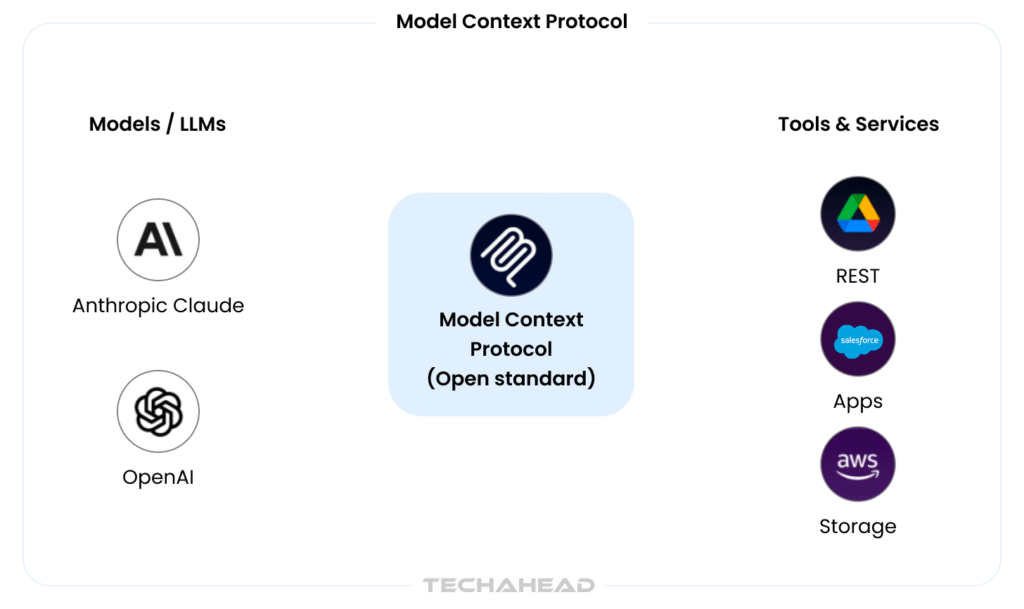

Model Context Protocol is an open-source standard that enables AI models to connect to tools, data sources, and services through a standardized interface.

Here’s the key: MCP isn’t a model. It’s not an AI application. It’s the protocol, the agreed-upon rules and message format, that AI applications and external services use to talk to each other.

Technical Foundation

MCP uses JSON-RPC 2.0, a lightweight remote procedure call protocol, to enable bidirectional communication between AI clients and servers. This means:

- AI clients (running inside applications like Claude, ChatGPT integrations, or custom agents) send requests

- MCP servers (representing tools, data sources, or services) receive requests and send back responses

- The protocol standardizes the message format, security, and workflow
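To make the message format concrete, here is a minimal sketch of a JSON-RPC 2.0 request and its matching response, built as plain Python dictionaries. The `jsonrpc`, `id`, `method`, `params`, `result`, and `error` fields come from JSON-RPC 2.0 itself; the tool name `list_commits` and its arguments are hypothetical examples.

```python
import json


def make_request(request_id: int, method: str, params: dict) -> str:
    """Serialize a JSON-RPC 2.0 request (the envelope MCP messages use)."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "method": method, "params": params})


def make_result(request_id: int, result: dict) -> str:
    """Serialize a successful response; the id must echo the request's id."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id, "result": result})


def make_error(request_id: int, code: int, message: str) -> str:
    """Serialize an error response (it carries "error" instead of "result")."""
    return json.dumps({"jsonrpc": "2.0", "id": request_id,
                       "error": {"code": code, "message": message}})


# Client -> server: ask the server to run a (hypothetical) tool.
request = make_request(1, "tools/call",
                       {"name": "list_commits", "arguments": {"repo": "my-org/my-repo"}})

# Server -> client: the reply is matched to its request by id.
response = make_result(1, {"content": [{"type": "text", "text": "3 commits found"}]})
print(json.loads(response)["id"] == json.loads(request)["id"])  # True
```

A server that does not recognize a method would reply with `make_error(request_id, -32601, "Method not found")`, using the standard JSON-RPC code for that case.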

What MCP Actually Does

- Shares Context – Exposes files, prompts, and knowledge to AI models

- Exposes Tools – Makes external functions callable from AI applications

- Manages Workflows – Enables multi-step processes with multiple systems

- Secures Connections – Builds authentication and permission controls into the protocol itself

The USB-C Analogy

Before USB-C, every device manufacturer had its own charging connector. Your phone charger didn’t work with your laptop. You carried five cables. USB-C standardized the connection: now one cable works everywhere.

MCP does the same for AI. One protocol. Multiple tools. No proprietary cables required.

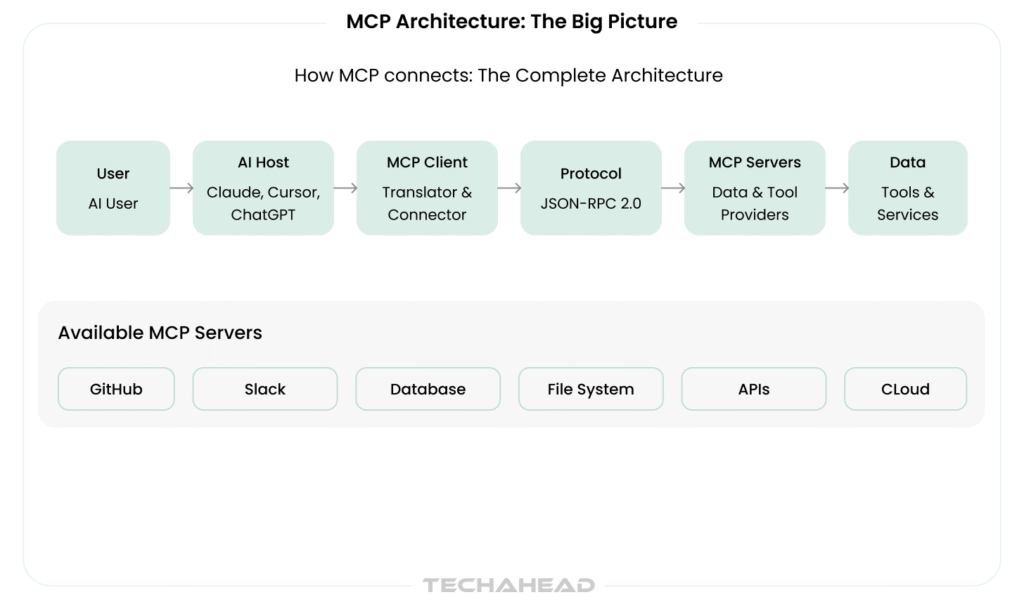

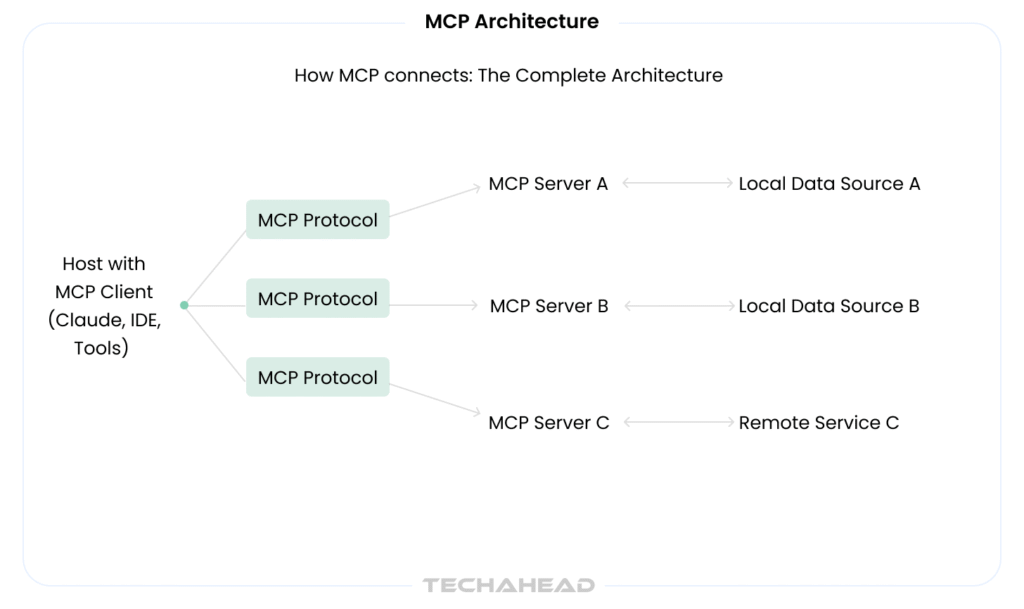

MCP Architecture: The Big Picture

Source: TechAhead AI Team

The Four Core Components

1. Hosts (User-Facing AI Applications)

Hosts are the AI applications that end-users interact with. Examples include:

- Claude (Anthropic’s AI)

- Cursor (AI code editor)

- Custom chatbots

- Enterprise AI applications

The host initiates connections and sends requests for data or tool execution.

2. Clients (Connection Managers)

The client is the part of the host that manages the MCP connection. It:

- Discovers available servers

- Initiates secure handshakes

- Formats requests according to the MCP protocol

- Handles responses and errors

Think of it as the “translator” between the AI and external systems.
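The “secure handshake” above corresponds to MCP’s initialization exchange: the client sends an `initialize` request, the server answers with its own version and capabilities, and the client confirms with a `notifications/initialized` notification. The sketch below only builds and checks those messages without opening a real connection. It assumes protocol revision `2024-11-05` (the first published revision; newer ones exist) and a hypothetical client name, and for simplicity it accepts only that single version.

```python
PROTOCOL_VERSION = "2024-11-05"  # example revision; check the spec for the current one


def initialize_request(request_id: int) -> dict:
    """First client message: announce protocol version, capabilities, and identity."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": {},  # features the client offers, e.g. "roots" or "sampling"
            "clientInfo": {"name": "example-host", "version": "0.1.0"},  # hypothetical
        },
    }


def accept_initialize_result(response: dict) -> dict:
    """Check the server's reply and return the capabilities it declared."""
    result = response["result"]
    if result["protocolVersion"] != PROTOCOL_VERSION:
        raise ValueError(f"unsupported protocol version {result['protocolVersion']}")
    return result["capabilities"]


# Sent after a successful reply: a notification has no id and gets no response.
INITIALIZED_NOTIFICATION = {"jsonrpc": "2.0", "method": "notifications/initialized"}

# Simulated server reply declaring that it offers tools.
reply = {"jsonrpc": "2.0", "id": 1, "result": {
    "protocolVersion": PROTOCOL_VERSION,
    "capabilities": {"tools": {}},
    "serverInfo": {"name": "demo-server", "version": "1.0"},
}}
print(accept_initialize_result(reply))  # {'tools': {}}
```

Capability negotiation falls out of this exchange: the client only uses features (tools, resources, prompts) that the server listed in its reply.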

3. Servers (Data and Tool Providers)

MCP servers expose data and tools. They:

- Run on machines (local, cloud, or on-premises)

- Listen for MCP protocol requests

- Execute operations (fetch data, call APIs, run scripts)

- Return results back to clients

Examples:

- GitHub server (provides repository data and actions)

- SQL database server (exposes database queries)

- Slack server (provides messaging capabilities)

- File system server (exposes local or cloud files)
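At its core, a server’s job of listening, executing, and returning results is method dispatch. Below is a toy, standard-library-only dispatcher that answers `tools/list` and `tools/call`; the `count_words` tool is hypothetical. A real server would normally be built with an official MCP SDK, which also handles transports and the initialization handshake.

```python
from typing import Any, Callable

# Hypothetical tool registry: name -> (description, JSON Schema for inputs, handler).
TOOLS: dict[str, tuple[str, dict, Callable[..., str]]] = {
    "count_words": (
        "Count the words in a piece of text",
        {"type": "object", "properties": {"text": {"type": "string"}}, "required": ["text"]},
        lambda text: str(len(text.split())),
    ),
}


def handle(message: dict[str, Any]) -> dict[str, Any]:
    """Dispatch one JSON-RPC request to the matching MCP-style method."""
    msg_id, method = message.get("id"), message.get("method")
    params = message.get("params", {})
    if method == "tools/list":
        tools = [{"name": n, "description": d, "inputSchema": s}
                 for n, (d, s, _) in TOOLS.items()]
        return {"jsonrpc": "2.0", "id": msg_id, "result": {"tools": tools}}
    if method == "tools/call":
        entry = TOOLS.get(params.get("name"))
        if entry is None:
            # Unknown tool: reported as a JSON-RPC "invalid params" error.
            return {"jsonrpc": "2.0", "id": msg_id,
                    "error": {"code": -32602, "message": f"Unknown tool: {params.get('name')}"}}
        text = entry[2](**params.get("arguments", {}))
        return {"jsonrpc": "2.0", "id": msg_id,
                "result": {"content": [{"type": "text", "text": text}], "isError": False}}
    return {"jsonrpc": "2.0", "id": msg_id,
            "error": {"code": -32601, "message": "Method not found"}}


reply = handle({"jsonrpc": "2.0", "id": 7, "method": "tools/call",
                "params": {"name": "count_words", "arguments": {"text": "hello MCP world"}}})
print(reply["result"]["content"][0]["text"])  # 3
```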

4. The Protocol (JSON-RPC 2.0)

The protocol defines:

- Request format – How clients ask servers for data or actions

- Response format – How servers send back results

- Methods – Standardized operations (resources/list, tools/call, prompts/get)

- Security – Authentication tokens, permission checks, capability negotiation

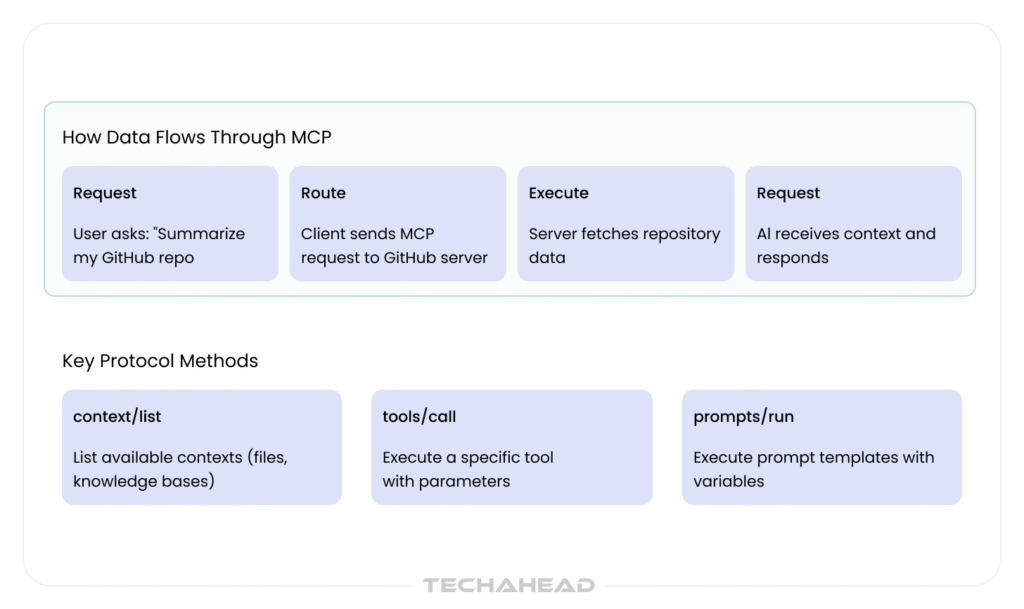

How Data Flows

Example: AI Summarizing a GitHub Repository

- User asks Claude: “Summarize my GitHub repo’s recent commits.”

- Claude’s client sends the GitHub server a tools/call request naming the “list_commits” tool

- GitHub server fetches the commit history

- Server returns JSON-formatted commit data

- Claude receives context and generates a summary

- User sees a well-informed response
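The same flow, seen from the client side, can be sketched as follows. `fake_github_server` is a hypothetical stand-in for a real GitHub MCP server, and `list_commits` with `repo` and `limit` arguments is an assumed tool signature. The point is the shape of the `tools/call` request and of the `content` list in the result, which the client flattens into text for the model.

```python
from typing import Any


def fake_github_server(request: dict[str, Any]) -> dict[str, Any]:
    """Hypothetical stand-in for a GitHub MCP server handling tools/call."""
    commits = ["Fix login bug", "Add dark mode", "Update README"]
    limit = request["params"]["arguments"].get("limit", len(commits))
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"content": [{"type": "text", "text": c} for c in commits[:limit]],
                   "isError": False},
    }


def call_tool(request_id: int, name: str, arguments: dict[str, Any]) -> str:
    """Client side: send tools/call, then flatten text content for the model."""
    request = {"jsonrpc": "2.0", "id": request_id, "method": "tools/call",
               "params": {"name": name, "arguments": arguments}}
    result = fake_github_server(request)["result"]
    if result.get("isError"):
        raise RuntimeError(f"tool {name} reported an error")
    return "\n".join(item["text"] for item in result["content"] if item["type"] == "text")


# The host places the tool output into the model's context before it answers.
context = call_tool(1, "list_commits", {"repo": "my-org/my-repo", "limit": 2})
prompt = f"Summarize these recent commits:\n{context}"
print(prompt)
```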

MCP Architecture: Component Deep Dive

Hosts and Clients

Hosts are the AI applications themselves. They’re where users interact with AI. Recent examples include:

- Claude (Anthropic’s interface)

- Cursor (AI-powered code editor using MCP for GitHub/docs access)

- TempoAI and Windsurf (AI development environments)

- Custom enterprise chatbots and agents

The client component embedded in each host handles all MCP communication. It’s responsible for:

- Server discovery (finding available MCP servers)

- Authentication (securing connections with API keys or tokens)

- Request routing (sending user intent to the right server)

- Response handling (formatting server responses for the AI model)
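Request routing can be sketched as a lookup table the client builds from each server’s `tools/list` reply. The server names and tool lists below are hypothetical. Real hosts may namespace tool names per server to avoid collisions; this sketch simply rejects duplicates.

```python
def build_routing_table(tool_lists: dict[str, list[str]]) -> dict[str, str]:
    """Map each tool name to the server that advertised it via tools/list."""
    routes: dict[str, str] = {}
    for server, tools in tool_lists.items():
        for tool in tools:
            if tool in routes:
                raise ValueError(f"tool {tool!r} offered by both {routes[tool]!r} and {server!r}")
            routes[tool] = server
    return routes


def route(routes: dict[str, str], tool: str) -> str:
    """Pick the server for a tool call the model wants to make."""
    try:
        return routes[tool]
    except KeyError:
        raise LookupError(f"no connected server offers {tool!r}") from None


# Hypothetical discovery results from two connected servers.
routes = build_routing_table({
    "github": ["list_issues", "list_commits"],
    "slack": ["post_message"],
})
print(route(routes, "post_message"))  # slack
```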

Servers: The Data and Tool Layer

MCP servers are built and deployed by organizations to expose their tools and data. They can run:

- Locally on a developer’s machine

- On-premises in enterprise data centers

- In the cloud (AWS, Azure, Google Cloud)

Popular MCP servers include:

| Server Type | Purpose | Provider |
| --- | --- | --- |
| GitHub MCP | Repository access, commits, PRs | Anthropic |
| Slack MCP | Channel access, messaging | Anthropic |
| PostgreSQL MCP | Database queries | Community |
| File System MCP | Local file access | Anthropic |

Organizations can build custom servers for proprietary systems using the official MCP SDKs (Python, TypeScript/Node.js, Go, and others).

Protocol Messages: The Communication Layer

MCP defines three primary method categories:

1. Resources Methods

- resources/list – List available resources (files, knowledge bases)

- resources/read – Fetch the contents of a specific resource

2. Tools Methods

- tools/list – Enumerate available tools

- tools/call – Execute a specific tool with parameters

3. Prompts Methods

- prompts/list – List available prompt templates

- prompts/get – Retrieve a prompt template filled in with the supplied arguments

Security Architecture:

Every MCP connection includes:

- API Key authentication – Servers validate client credentials

- Capability negotiation – Clients declare what they need; servers declare what they offer

- Permission boundaries – Users can grant/revoke tool access

- Audit logging – Track which tools are called and by whom
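Permission boundaries and audit logging are typically enforced by the host or server rather than by the message format itself. The sketch below shows one possible host-side guard that only lets user-granted tools run and records every attempt. The `ToolGate` class, user IDs, and tool names are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class ToolGate:
    """Hypothetical host-side guard: only granted tools run; every attempt is logged."""
    granted: dict[str, set[str]] = field(default_factory=dict)  # user -> allowed tools
    audit_log: list[tuple[str, str, str, bool]] = field(default_factory=list)

    def grant(self, user: str, tool: str) -> None:
        self.granted.setdefault(user, set()).add(tool)

    def revoke(self, user: str, tool: str) -> None:
        self.granted.get(user, set()).discard(tool)

    def check(self, user: str, tool: str) -> bool:
        allowed = tool in self.granted.get(user, set())
        # Record when, who, which tool, and whether the call was allowed.
        self.audit_log.append((datetime.now(timezone.utc).isoformat(), user, tool, allowed))
        return allowed


gate = ToolGate()
gate.grant("alice", "list_issues")
print(gate.check("alice", "list_issues"))  # True
print(gate.check("alice", "delete_repo"))  # False
gate.revoke("alice", "list_issues")
print(gate.check("alice", "list_issues"))  # False
print(len(gate.audit_log))                 # 3
```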

How MCP Works: A Step-by-Step Implementation Flow

The 5-Step MCP Workflow

Step 1: Setup – Install an MCP client SDK into your AI application. For developers: npm install @modelcontextprotocol/sdk (TypeScript/Node.js) or pip install mcp (Python).

Step 2: Discovery and Connection – The client discovers available MCP servers (either configured locally or remotely). It establishes a secure connection with authentication (API keys, OAuth tokens, or mutual TLS).

Example configuration (each host application defines its own config schema, so treat this as illustrative):

{
  "servers": {
    "github": {
      "url": "stdio://github-mcp-server",
      "auth_token": "your-github-token"
    },
    "slack": {
      "url": "http://localhost:3001",
      "auth_token": "your-slack-token"
    }
  }
}
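A client could read a configuration like this one and choose a transport for each server. The sketch below assumes the illustrative schema shown (a `servers` map with `url` and `auth_token`) and treats a `stdio://` URL as “launch a local process” and `http(s)://` as a remote connection. That scheme convention is an assumption of this example, not part of the MCP specification.

```python
import json
from urllib.parse import urlparse

CONFIG = """
{
  "servers": {
    "github": {"url": "stdio://github-mcp-server", "auth_token": "your-github-token"},
    "slack": {"url": "http://localhost:3001", "auth_token": "your-slack-token"}
  }
}
"""


def plan_connections(config_text: str) -> dict[str, tuple[str, str]]:
    """Return {server name: (transport, target)} for each configured server."""
    plans: dict[str, tuple[str, str]] = {}
    for name, entry in json.loads(config_text)["servers"].items():
        url = urlparse(entry["url"])
        if url.scheme == "stdio":
            # Assumed convention: start a local process, talk over stdin/stdout.
            plans[name] = ("stdio", url.netloc)
        elif url.scheme in ("http", "https"):
            plans[name] = ("http", entry["url"])
        else:
            raise ValueError(f"{name}: unsupported scheme {url.scheme!r}")
    return plans


print(plan_connections(CONFIG))
# {'github': ('stdio', 'github-mcp-server'), 'slack': ('http', 'http://localhost:3001')}
```

In practice, tokens are better read from environment variables or a secrets store than kept in the file itself.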

Step 3: Request – The AI model (or user, through the host) sends a request. For example:

- “Fetch my open GitHub issues” → tools/call to GitHub server

- “Send a Slack message” → tools/call to Slack server

Step 4: Execute – The server receives the request, validates permissions, executes the operation, and returns results.

Step 5: Response and Integration – The server responds with context (data, documents, tool outputs). The AI model receives this context and generates informed, grounded responses.

Error Handling and Retries

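MCP reports protocol failures as standard JSON-RPC error responses and recommends timeouts on requests; client implementations typically add resilience on top: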

- Connection retries with exponential backoff

- Timeout management

- Fallback strategies (cached responses if servers are unavailable)
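These resilience patterns live in client code. Here is a minimal sketch, assuming a hypothetical `send` callable that enforces its own timeout and raises `ConnectionError` or `TimeoutError` on failure: retry with exponentially growing delays, then fall back to the last cached result if every attempt fails. The `sleep` parameter is injectable so the delays can be inspected without actually waiting.

```python
import time
from typing import Callable

_cache: dict[str, str] = {}  # last good result per request key


def call_with_retries(key: str, send: Callable[[], str], attempts: int = 4,
                      base_delay: float = 0.1,
                      sleep: Callable[[float], None] = time.sleep) -> str:
    """Try send() up to `attempts` times with exponential backoff; fall back to cache."""
    for attempt in range(attempts):
        try:
            result = send()
            _cache[key] = result  # remember the last good answer
            return result
        except (ConnectionError, TimeoutError):
            if attempt < attempts - 1:
                sleep(base_delay * 2 ** attempt)  # 0.1s, 0.2s, 0.4s, ...
    if key in _cache:
        return _cache[key]  # stale, but better than nothing
    raise ConnectionError(f"{key}: server unavailable and nothing cached")


# Usage: a flaky (hypothetical) server that fails twice, then succeeds.
delays: list[float] = []
outcomes = iter([ConnectionError(), TimeoutError(), "ok"])


def flaky() -> str:
    outcome = next(outcomes)
    if isinstance(outcome, Exception):
        raise outcome
    return outcome


print(call_with_retries("issues", flaky, sleep=delays.append))  # ok
print(delays)  # [0.1, 0.2]
```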

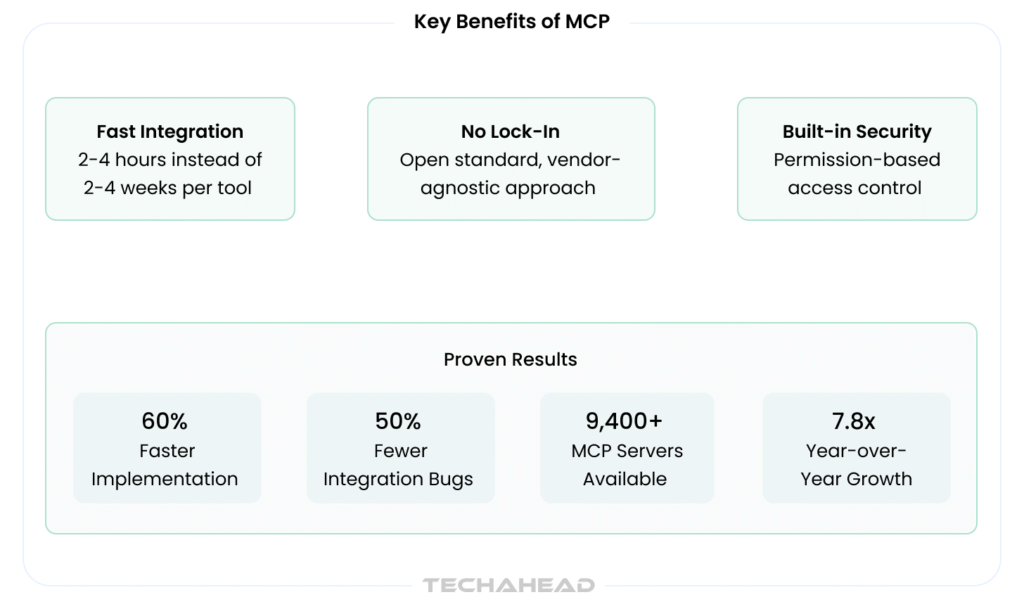

Comparison: Traditional Integration vs. MCP

| Metric | Traditional Custom Integration | MCP Approach |
| --- | --- | --- |
| Integration Time | 2-4 weeks per tool | 2-4 hours per tool |
| Vendor Lock-in | High (proprietary code) | None (open standard) |
| Scalability | Nightmare (10+ tools = months) | Linear (plug-and-play) |
| Maintenance Burden | Continuous (each tool separately) | Minimal (standardized updates) |
| Developer Cost | $50k-$100k per integration | $5k-$15k per integration |
| Security Model | Ad-hoc (custom per tool) | Built-in (standard controls) |
| Ecosystem Growth | Dependent on single vendor | Community-driven |

Benefits and Real-World Impact

Standardization: Breaking the Integration Chain

Before MCP, each tool required unique connector code. With MCP, one protocol works across all servers. This has immediate benefits:

- Reduced complexity – Teams no longer need deep expertise in 10+ different APIs

- Faster deployment – New integrations can be configured in hours instead of weeks

- Consistency – Authentication, error handling, and security work the same way everywhere

Scalability: Compose Workflows Across Systems

With MCP, you can orchestrate workflows that touch multiple systems:

Real example:

- AI reads customer data from Salesforce (via MCP)

- Analyzes sentiment from recent emails (via Gmail MCP)

- Generates a summary in a document (via Google Docs MCP)

- Posts the summary to Slack (via Slack MCP)

All in one agentic workflow. Without MCP, each step would require custom integration code.
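Composing such a workflow mostly means passing each tool’s output into the next call. In the sketch below, the four functions are hypothetical stubs standing in for `tools/call` requests to Salesforce, Gmail, Google Docs, and Slack MCP servers, and the naive keyword check is a placeholder for the model’s sentiment analysis.

```python
# Hypothetical stubs; in practice each would be a tools/call to a different MCP server.
def salesforce_get_customer(customer_id: str) -> dict:
    return {"id": customer_id, "name": "Acme Corp", "tier": "Enterprise"}


def gmail_recent_emails(customer_name: str) -> list[str]:
    return ["Thanks, the rollout went great!", "Minor issue with invoices, otherwise happy."]


def docs_create(title: str, body: str) -> str:
    return f"doc://{title.replace(' ', '-').lower()}"


def slack_post(channel: str, text: str) -> bool:
    print(f"[{channel}] {text}")
    return True


def naive_sentiment(emails: list[str]) -> str:
    """Placeholder for the model's analysis: count emails with positive keywords."""
    positive = sum(any(w in e.lower() for w in ("great", "happy", "thanks")) for e in emails)
    return "positive" if positive > len(emails) / 2 else "mixed"


def account_summary_workflow(customer_id: str) -> str:
    customer = salesforce_get_customer(customer_id)                     # 1. CRM data
    sentiment = naive_sentiment(gmail_recent_emails(customer["name"]))  # 2. email sentiment
    body = f"{customer['name']} ({customer['tier']}): sentiment {sentiment}"
    doc_url = docs_create(f"{customer['name']} summary", body)          # 3. summary doc
    slack_post("#account-updates", f"{body} - {doc_url}")               # 4. notify the team
    return doc_url


print(account_summary_workflow("001XYZ"))  # doc://acme-corp-summary
```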

Security: Built-In, Not Bolted-On

MCP includes security by design:

- Permission-based access control (users grant specific tool access)

- No direct API key exposure (servers handle authentication)

- Audit trails for compliance

- Capability negotiation limits each session to the features both sides have declared

Ecosystem Growth

As of April 2026, the MCP ecosystem includes:

- 9,400+ public MCP servers (GitHub, Slack, databases, custom tools)

- 7.8x year-over-year growth in server registrations

- 41% of enterprises building custom MCP servers for proprietary systems

Quantified Impact

Organizations using MCP report:

- 60% faster implementation of new AI capabilities (Anthropic, 2026)

- 50% reduction in integration bugs (internal TechAhead data)

- 40% lower total cost of ownership for AI systems (Gartner, 2026)

Getting Started: A Hands-On Guide

For Developers

1. Install the SDK

# Node.js (TypeScript SDK)
npm install @modelcontextprotocol/sdk
# Python
pip install mcp

2. Configure Your Servers – Create an mcp-config.json file with your server list and authentication tokens.

3. Write Your First MCP Client

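import asyncio
from mcp import ClientSession, StdioServerParameters
from mcp.client.stdio import stdio_client

# Launch a local GitHub MCP server over stdio.
# The package name and env var are examples; check your server's docs.
server = StdioServerParameters(
    command="npx",
    args=["-y", "@modelcontextprotocol/server-github"],
    env={"GITHUB_PERSONAL_ACCESS_TOKEN": "your-github-token"},
)

async def main():
    async with stdio_client(server) as (read, write):
        async with ClientSession(read, write) as session:
            await session.initialize()  # MCP handshake
            tools = await session.list_tools()  # discover available tools
            print([tool.name for tool in tools.tools])
            # Tool name and arguments depend on the server; these are examples
            result = await session.call_tool(
                "list_issues",
                arguments={"owner": "your-org", "repo": "your-repo", "state": "open"},
            )
            print(result.content)

asyncio.run(main())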

4. Test with Public Servers – Anthropic provides demo MCP servers for testing:

- File system server

- GitHub server

- Web search server

Resources:

- Official MCP Spec: https://modelcontextprotocol.io

- GitHub Repository: https://github.com/modelcontextprotocol

- Community Servers: https://github.com/topics/mcp-server

- VS Code Extension: MCP Tools

For Non-Technical Users

If you use AI tools like Claude or Cursor, you’re already benefiting from MCP:

- Claude’s desktop app and connectors use MCP servers to reach external tools and data

- Cursor IDE integrates MCP for GitHub and documentation access

- After a one-time setup, these integrations work in the background

Use Cases and Real-World Examples

1. Development Tools

AI IDEs like Cursor use MCP to access:

- Git repositories (commits, branches, diffs)

- Documentation databases

- Code snippets and templates

- Debugging tools

Result: Real-time AI code suggestions grounded in your actual codebase.

2. Sales and Business Analytics

Sales teams use MCP-connected AI to:

- Pull live CRM data (Salesforce, HubSpot)

- Access email conversations

- Analyze customer contracts

- Generate insights

Result: AI that actually knows your customer data.

3. Customer Support

Support agents use MCP to connect AI with:

- Ticketing systems (Jira Service Management)

- Knowledge bases

- Customer history

- Live chat logs

Result: Support AI that has the full customer context.

4. Enterprise Workflows

Corporations deploy MCP to orchestrate:

- HR systems (employee records, policies)

- Finance systems (expense tracking, budgets)

- Internal documentation

- Communication platforms (Slack, Teams)

Challenges and Limitations

Early Adoption Stage

MCP launched in November 2024. It’s still evolving:

- New features and servers are being added regularly

- Best practices are still being established

- Not all tools have MCP servers yet

Development Effort Required

Building a custom MCP server requires developer work. It’s not drag-and-drop yet.

Performance Considerations

Latency in chained MCP calls (multiple servers per request) requires:

- Caching strategies

- Asynchronous processing

- Smart request batching
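Two of these mitigations can be sketched with `asyncio`: independent calls to different servers run concurrently, so total latency approaches the slowest single call rather than the sum, and a small in-memory cache skips repeat calls. The `fake_server_call` coroutine and its delays are hypothetical stand-ins for real MCP round trips.

```python
import asyncio
import time

_cache: dict[tuple[str, str], str] = {}
calls_made = 0


async def fake_server_call(server: str, tool: str, delay: float) -> str:
    """Hypothetical MCP round trip that takes `delay` seconds."""
    global calls_made
    calls_made += 1
    await asyncio.sleep(delay)
    return f"{server}:{tool} ok"


async def cached_call(server: str, tool: str, delay: float) -> str:
    key = (server, tool)
    if key not in _cache:  # only contact the server on a cache miss
        _cache[key] = await fake_server_call(server, tool, delay)
    return _cache[key]


async def gather_context() -> list[str]:
    # Independent calls run concurrently: total time is about max(delays), not the sum.
    return await asyncio.gather(
        cached_call("github", "list_issues", 0.2),
        cached_call("jira", "search", 0.2),
        cached_call("slack", "history", 0.2),
    )


start = time.perf_counter()
results = asyncio.run(gather_context())
elapsed = time.perf_counter() - start
print(results)
print(f"{elapsed:.1f}s")  # near a single call's 0.2s; sequential calls would take ~0.6s

asyncio.run(gather_context())  # the second run is served entirely from the cache
print(calls_made)  # 3
```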

Adoption Timeline

Full ecosystem adoption will take 2-3 years as organizations migrate legacy integrations.

The Future of MCP and AI

Near-term (2026-2027)

- Enterprise server marketplace – Companies will publish approved MCP servers

- Deeper model integration – Claude, GPT-5, and other models will be built with MCP-first architecture

- Multimodal support – MCP will extend to images, audio, and video context

Medium-term (2027-2028)

- Autonomous agent workflows – Complex multi-system orchestrations will become standard

- Industry-specific servers – Healthcare, finance, and manufacturing organizations will build proprietary servers

- Real-time collaboration – Multiple AIs coordinating via shared MCP servers

Why It Matters

MCP isn’t just about simplifying integrations. It’s about unlocking truly agentic AI: smart systems that understand context, take grounded actions, and operate autonomously across enterprise systems.

Conclusion: Your Path Forward

Model Context Protocol is the missing link in enterprise AI.

It’s the difference between AI that talks and AI that acts, between information extraction and real business impact.

Your next step: If you’re building AI applications, start exploring MCP today. Check the official repository, test with demo servers, and begin planning your MCP architecture.

The future of AI isn’t just smarter models. It’s smarter connections.

Transform your enterprise AI strategy. Contact TechAhead to design and deploy MCP solutions that deliver measurable business results.

MCP is an open standard enabling AI models to securely connect with external tools, data sources, and services.

MCP provides standardized connections instead of custom code, reducing integration time from weeks to hours per new tool.

Benefits include faster implementation, lower costs, reduced vendor lock-in, built-in security, improved developer productivity, and measurable operational efficiency gains across the organization.

Implementation follows four phases over roughly sixteen weeks: assess and plan (four weeks), design and architect (four weeks), build and test (eight weeks), then launch and scale (ongoing).

Organizations including Anthropic, GitHub, Salesforce, and community contributors deploy MCP servers for development, sales analytics, and enterprise workflows.